NVIDIA has released Nemotron 3 Super, a new open-source large language model that combines several cutting-edge architectural approaches. The model features 120 billion total parameters with 12 billion active parameters, uses a hybrid Mamba-Transformer architecture within a Mixture of Experts (MoE) framework, and supports a 1 million token context length.

Key Takeaways

- NVIDIA has released Nemotron 3 Super, a 120B parameter open hybrid Mamba-Transformer Mixture of Experts model with 12B active parameters and 1M token context length.

- The company claims it delivers up to 7.5x higher throughput than similar open models.

What NVIDIA Built

Nemotron 3 Super represents NVIDIA's latest entry in the competitive open-source LLM space, combining three significant architectural trends:

- Hybrid Mamba-Transformer Architecture: The model blends Mamba (a state space model architecture) with traditional Transformer components. Mamba architectures have shown promise for more efficient sequence processing, particularly for long contexts, while Transformers remain strong for certain types of reasoning.

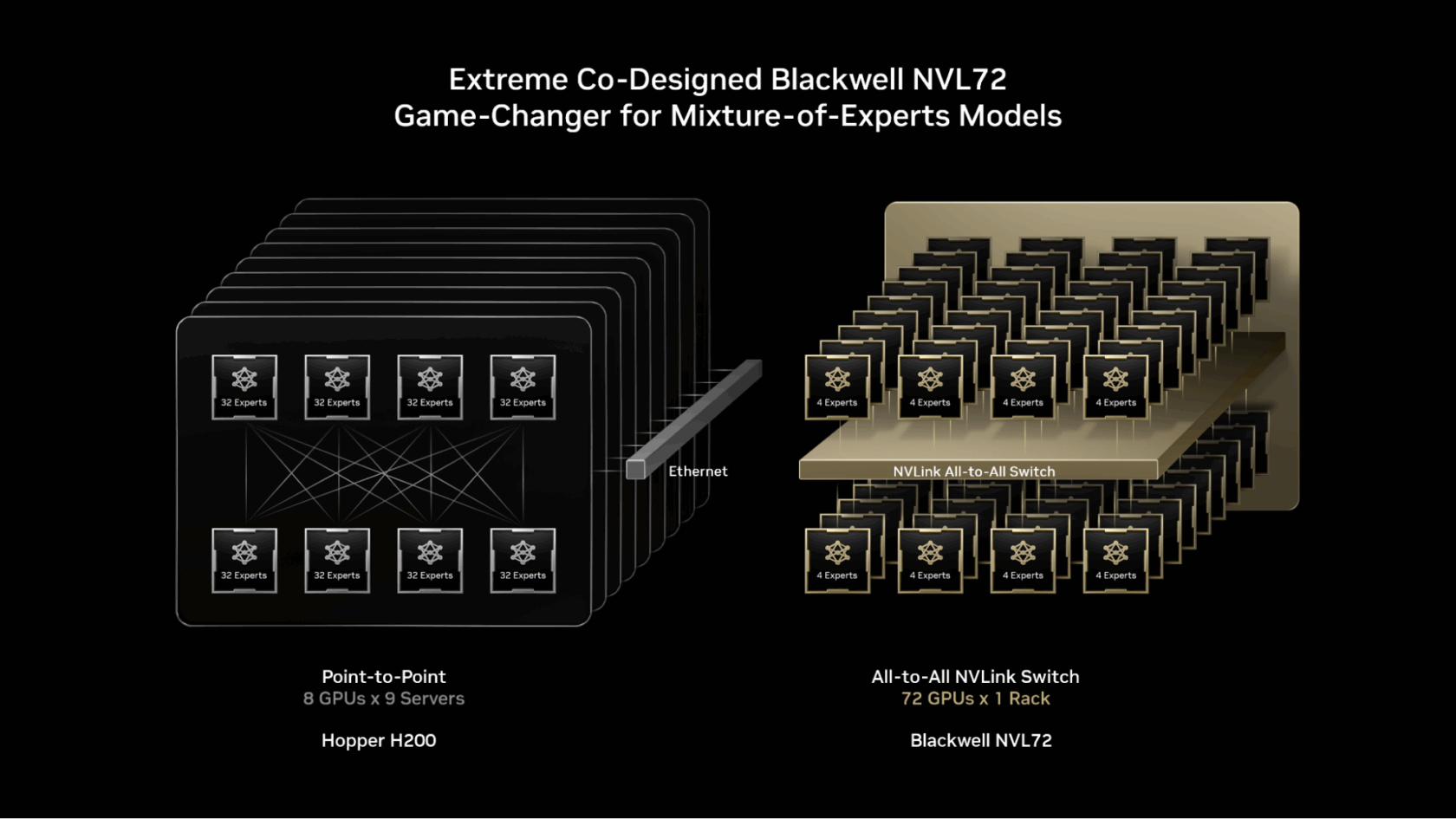

- Mixture of Experts (MoE) Design: With 120B total parameters but only 12B active during inference, the model follows the MoE pattern popularized by models like Mixtral 8x7B and Google's Gemini 1.5 Pro. This design enables large model capacity while maintaining manageable computational costs during inference.

- Massive Context Window: The 1 million token context length places Nemotron 3 Super in the same category as models like Claude 3 (200K), Gemini 1.5 Pro (1M), and recent open-source efforts targeting extended contexts.

Key Performance Claims

According to NVIDIA's announcement, the model delivers "up to 7.5x higher throughput than similar open models." While the announcement doesn't specify which models were compared or under what conditions, this throughput claim suggests significant inference efficiency improvements, likely stemming from the hybrid architecture and MoE design.

Architecture Summary:

Total Parameters 120B Active Parameters 12B Architecture Hybrid Mamba-Transformer MoE Context Length 1,000,000 tokens Claimed Throughput Advantage Up to 7.5x vs. similar open models Release Status Open (weights available)Technical Implementation

The hybrid approach likely routes different types of computations through different architectural components. Mamba's state space models excel at processing long sequences with linear computational complexity in sequence length, while Transformers provide strong performance on tasks requiring complex attention patterns. The MoE design means that for each input token, only a subset of the model's 120B parameters are activated (approximately 12B), reducing memory bandwidth requirements and increasing inference speed.

This release follows NVIDIA's broader strategy of advancing both proprietary and open-source AI models. The company has been aggressively expanding its AI software ecosystem alongside its dominant hardware position.

Availability and Use

The model weights are available through NVIDIA's platforms and likely through Hugging Face, given the source of the announcement. Developers can access the model for both research and commercial applications, though specific licensing details weren't provided in the initial announcement.

gentic.news Analysis

NVIDIA's release of Nemotron 3 Super represents a strategic move in three dimensions. First, it continues the trend of hybrid architectures that attempt to combine the strengths of different approaches—in this case, Mamba's efficiency on long sequences with Transformers' proven capabilities. Second, the 1M context window places NVIDIA in direct competition with other providers offering massive context models, though real-world effectiveness at that scale remains challenging. Third, as an "open" model, this release strengthens NVIDIA's position in the open-source ecosystem, which has become increasingly important for developer adoption and ecosystem lock-in.

This follows NVIDIA's pattern of releasing open models that showcase efficient inference on their hardware. The throughput claims of "up to 7.5x higher" than similar open models, while needing verification, align with NVIDIA's hardware-software co-design strategy. If substantiated, these efficiency gains could make Nemotron 3 Super particularly attractive for deployment scenarios where inference cost and latency matter.

The hybrid Mamba-Transformer approach is particularly noteworthy. While pure Mamba models have shown impressive efficiency, they sometimes lag Transformers on certain reasoning tasks. A hybrid approach attempts to get the best of both worlds, though it introduces architectural complexity. The AI engineering community will be watching closely to see how this balance plays out in benchmark performance across diverse tasks.

Frequently Asked Questions

What is Nemotron 3 Super?

Nemotron 3 Super is NVIDIA's latest open-source large language model featuring a 120B parameter hybrid Mamba-Transformer architecture with Mixture of Experts design. It uses only 12B active parameters during inference and supports a 1 million token context window.

How does the hybrid Mamba-Transformer architecture work?

The model combines Mamba (a state space model architecture) with traditional Transformer components. Mamba provides efficient processing of long sequences with linear computational complexity, while Transformers handle tasks requiring complex attention patterns. The system likely routes different types of computations through the most appropriate architectural component.

What does "7.5x higher throughput" mean in practice?

NVIDIA claims Nemotron 3 Super can process up to 7.5 times more tokens per second than similar open models under comparable conditions. This throughput advantage likely stems from the efficient MoE design (only 12B active parameters) combined with Mamba's efficient sequence processing, though specific comparison models and testing conditions haven't been disclosed.

Is Nemotron 3 Super truly open source?

The announcement describes it as an "open" model, and the weights are available for download. However, the specific license terms (commercial use, modification rights, redistribution) weren't specified in the initial tweet. Users should check NVIDIA's official release for detailed licensing information.