What Happened

A new research paper introduces RecThinker, an agentic framework designed to enhance recommendation systems by enabling Large Language Models (LLMs) to actively investigate and gather information before making a recommendation. The core problem it addresses is the passive information acquisition paradigm common in current LLM-based recommenders, where agents either follow static workflows or reason with only the immediately available, often fragmented, data. This limitation becomes acute in real-world scenarios with sparse user profiles or incomplete item metadata, leading to suboptimal suggestions.

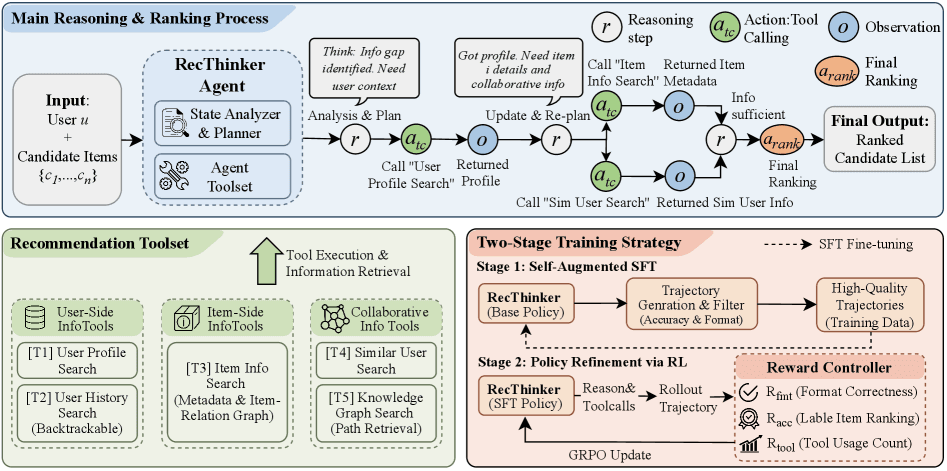

RecThinker proposes a fundamental shift: instead of making do with what's given, the agent should autonomously determine what it needs to know and then go get it. The framework formalizes this as an Analyze-Plan-Act paradigm.

Technical Details: The Analyze-Plan-Act Paradigm

- Analyze: The LLM agent first assesses the sufficiency of the available information about the user and the candidate items. It identifies specific gaps—what's missing that would be critical for a high-quality, personalized recommendation.

- Plan: Based on the analysis, the agent dynamically constructs a reasoning path. It decides on a sequence of actions (tool calls) necessary to bridge the identified information gaps.

- Act: The agent executes the plan by autonomously invoking a suite of specialized tools to retrieve the needed data.
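The loop below is a minimal sketch of the Analyze-Plan-Act cycle. It assumes simple rule-based stand-ins for steps that RecThinker delegates to the LLM: `analyze` checks a hypothetical list of required fields, `plan` maps each gap to a tool call, and `act` runs the calls and merges the results. The field names, tool names (`get_user_preferences`, `get_item_metadata`) and the `max_rounds` cap are illustrative assumptions, not the paper's API.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical schema: keys a context must contain to count as "sufficient".
REQUIRED_USER_KEYS = ("preferences",)
REQUIRED_ITEM_KEYS = ("category", "description")


@dataclass
class Context:
    user: dict = field(default_factory=dict)
    items: dict = field(default_factory=dict)  # item_id -> metadata dict
    trace: list = field(default_factory=list)  # names of tools invoked, in order


def analyze(ctx: Context) -> list[str]:
    """Analyze: return the missing fields (gaps) in the current context."""
    gaps = [f"user.{k}" for k in REQUIRED_USER_KEYS if k not in ctx.user]
    for item_id, meta in ctx.items.items():
        gaps += [f"item.{item_id}.{k}" for k in REQUIRED_ITEM_KEYS if k not in meta]
    return gaps


def plan(gaps: list[str]) -> list[tuple[str, str]]:
    """Plan: map each gap to a (tool_name, target) action, without duplicates."""
    actions = []
    for gap in gaps:
        if gap.startswith("user."):
            actions.append(("get_user_preferences", "user"))
        else:
            item_id = gap[len("item."):].rsplit(".", 1)[0]
            actions.append(("get_item_metadata", item_id))
    return list(dict.fromkeys(actions))  # dedupe while keeping order


def act(ctx: Context, actions: list[tuple[str, str]],
        tools: dict[str, Callable[[str], dict]]) -> None:
    """Act: invoke each planned tool and merge its result into the context."""
    for name, target in actions:
        result = tools[name](target)
        ctx.trace.append(name)
        if target == "user":
            ctx.user.update(result)
        else:
            ctx.items[target].update(result)


def investigate(ctx: Context, tools: dict[str, Callable[[str], dict]],
                max_rounds: int = 3) -> Context:
    """Repeat Analyze-Plan-Act until no gaps remain or the round cap is hit."""
    for _ in range(max_rounds):
        gaps = analyze(ctx)
        if not gaps:
            break
        act(ctx, plan(gaps), tools)
    return ctx


# Usage with hypothetical stub tools standing in for real data sources.
stub_tools = {
    "get_user_preferences": lambda _: {"preferences": ["natural fabrics"]},
    "get_item_metadata": lambda item_id: {"category": "knitwear",
                                          "description": f"Item {item_id}"},
}
ctx = investigate(Context(items={"i1": {"category": "knitwear"}}), stub_tools)
print(ctx.trace)  # ['get_user_preferences', 'get_item_metadata']
```

In the real framework the gap analysis and the plan are produced by the LLM's reasoning rather than by fixed rules; the sketch only shows how the three phases hand state to one another.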

The paper details the development of a specialized toolset for RecThinker, categorized to fetch different types of information:

- User-side tools: To gather deeper intent, preferences, or historical context not present in the initial query or profile.

- Item-side tools: To retrieve detailed metadata, attributes, or descriptive content about products.

- Collaborative tools: To access patterns from user-item interaction data (e.g., "users who liked X also liked Y").
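A toolset organized into these three categories can be sketched as a small registry. Everything here is hypothetical: the tool names, the toy interaction log and the catalog. The collaborative tool uses a plain co-occurrence count, the simplest form of "users who liked X also liked Y"; the paper's actual tools may work quite differently.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class ToolKind(Enum):
    USER = "user-side"
    ITEM = "item-side"
    COLLABORATIVE = "collaborative"


@dataclass(frozen=True)
class Tool:
    name: str
    kind: ToolKind
    fn: Callable


# Toy interaction log: user_id -> set of liked item_ids (hypothetical data).
INTERACTIONS = {
    "u1": {"sweater", "trousers"},
    "u2": {"sweater", "trousers", "scarf"},
    "u3": {"sweater", "scarf"},
    "u4": {"trousers", "loafers"},
    "u5": {"sweater", "trousers"},
}
# Toy item metadata (hypothetical data).
CATALOG = {
    "sweater": {"material": "cashmere", "style": "minimalist"},
    "trousers": {"material": "linen", "style": "tailored"},
}


def user_history(user_id: str) -> list[str]:
    """User-side: items the user has liked."""
    return sorted(INTERACTIONS.get(user_id, set()))


def item_attributes(item_id: str) -> dict:
    """Item-side: stored metadata for one item."""
    return CATALOG.get(item_id, {})


def also_liked(item_id: str, k: int = 2) -> list[tuple[str, int]]:
    """Collaborative: the k items most often co-liked with item_id."""
    counts = Counter()
    for liked in INTERACTIONS.values():
        if item_id in liked:
            counts.update(liked - {item_id})
    # Sort by count (descending), then by name, so ties are deterministic.
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))[:k]


REGISTRY = [
    Tool("get_user_history", ToolKind.USER, user_history),
    Tool("get_item_attributes", ToolKind.ITEM, item_attributes),
    Tool("users_also_liked", ToolKind.COLLABORATIVE, also_liked),
]


def tools_of(kind: ToolKind) -> list[str]:
    """Names of registered tools in one category."""
    return [t.name for t in REGISTRY if t.kind is kind]


print(also_liked("sweater"))  # [('trousers', 3), ('scarf', 2)]
```

Tagging each tool with its category lets an agent's planner (or a prompt describing the tools) reason about which kind of gap a given tool can fill.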

A key innovation is the self-augmented training pipeline designed to teach the LLM this proactive behavior:

- Supervised Fine-Tuning (SFT) Stage: The model is trained on high-quality reasoning trajectories, written by humans or generated synthetically, that demonstrate the Analyze-Plan-Act process.

- Reinforcement Learning (RL) Stage: The model is further optimized using rewards that balance decision accuracy (did the user engage with the recommendation?) and tool-use efficiency (did it use the minimal necessary tools to reach a good decision?).
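The accuracy-versus-efficiency trade-off in the RL stage can be illustrated with a reward of the form "accuracy minus a per-call penalty". This is an assumed formulation for illustration only: the accuracy term is the reciprocal rank of a held-out target item and `lam` is a hypothetical linear penalty per tool call. The paper's exact reward terms and weights are not reproduced here.

```python
def accuracy_term(ranked: list[str], target: str) -> float:
    """Reciprocal rank of the held-out target item; 0.0 if it was not recommended."""
    return 1.0 / (ranked.index(target) + 1) if target in ranked else 0.0


def trajectory_reward(ranked: list[str], target: str, n_tool_calls: int,
                      lam: float = 0.1) -> float:
    """Assumed reward: accuracy minus a linear penalty for each tool call made."""
    return accuracy_term(ranked, target) - lam * n_tool_calls


# Same correct top-1 answer: the trajectory with fewer tool calls scores higher.
print(trajectory_reward(["a", "b"], "a", n_tool_calls=1))  # ~0.9
print(trajectory_reward(["a", "b"], "a", n_tool_calls=4))  # ~0.6
```

With a small `lam`, a correct answer reached after a few calls still beats a cheap wrong answer (which scores 0.0), so the penalty pushes toward minimal-but-sufficient investigation rather than no investigation at all.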

The authors report that extensive experiments on multiple benchmark datasets show RecThinker consistently outperforming strong baselines in recommendation tasks.

Retail & Luxury Implications

The RecThinker framework, while academic, points directly to the next evolution of AI-driven personalization in retail and luxury—moving from reactive filters to investigative concierges.

The Core Problem in Luxury: High-value, considered purchases (a handbag, a watch, bespoke tailoring) are inherently complex. A user's initial query ("show me black dresses") or a sparse profile (one past purchase) contains a tiny fraction of the information needed for a truly resonant recommendation. The critical context—the occasion, the desired brand ethos, complementary items in their wardrobe, fit preferences, material sensitivities—is missing. Traditional systems either guess with this thin data or rely on rigid, pre-programmed question flows.

How RecThinker's Paradigm Applies:

- From Sparse Profile to Rich Context: A user whose only purchase is a minimalist Loro Piana sweater visits a site. A RecThinker-style agent wouldn't just recommend similar sweaters. It would first analyze the information gap: "Why did they buy this? What is their broader style?" It might plan to use one tool to fetch content they've engaged with (e.g., saved editorial articles on quiet luxury) and another to infer preferred materials from their browsing dwell time. It acts to gather this, then reasons: "This user values understated quality and natural fabrics. They are not a logo-driven shopper. Recommend the Brunello Cucinelli linen trousers and an unlined jacket."

- Proactive Cross-Selling and Outfitting: For an item in the cart—a suit—the agent identifies the gap: "What completes this look?" It plans to use an item-side tool to get the suit's attributes (color, formality) and a collaborative tool to find historically successful pairings (shirts, ties, shoes). It acts to retrieve this data and generates a complete outfit recommendation.

- Handling Ambiguity in High-Touch Services: In a conversational commerce setting (chat or voice), a user asks, "I need a gift for my wife's milestone birthday." The agent recognizes a large information gap and plans a careful investigative path: first a user-side tool to check past gift purchases, then perhaps an item-side tool that accesses the brand's gifting guide, and finally reasoning about an appropriate price point and sentiment.
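The suit cross-selling scenario can be sketched by combining the two tool types it names: an item-side lookup of the anchor item's formality and a collaborative count of items co-purchased with it. The attributes, order log and slot names are made-up illustrations; a production system would query a PIM and real order history instead.

```python
from collections import Counter

# Hypothetical item attributes (stand-in for an item-side PIM lookup).
ATTRIBUTES = {
    "navy_suit": {"category": "suit", "formality": "formal"},
    "white_shirt": {"category": "shirt", "formality": "formal"},
    "denim_shirt": {"category": "shirt", "formality": "casual"},
    "silk_tie": {"category": "tie", "formality": "formal"},
    "oxford_shoes": {"category": "shoes", "formality": "formal"},
    "sneakers": {"category": "shoes", "formality": "casual"},
}
# Hypothetical past orders (stand-in for collaborative co-purchase data).
ORDERS = [
    {"navy_suit", "white_shirt", "silk_tie"},
    {"navy_suit", "denim_shirt", "sneakers"},
    {"navy_suit", "white_shirt", "oxford_shoes"},
    {"navy_suit", "denim_shirt"},
]


def complete_the_look(anchor: str, slots=("shirt", "tie", "shoes")) -> dict[str, str]:
    """Fill each slot with the most co-purchased item matching the anchor's formality."""
    formality = ATTRIBUTES[anchor]["formality"]  # item-side: anchor attributes
    co_counts = Counter()  # collaborative: how often each item was bought with the anchor
    for order in ORDERS:
        if anchor in order:
            co_counts.update(order - {anchor})
    outfit = {}
    for slot in slots:
        candidates = [
            (count, item)
            for item, count in co_counts.items()
            if ATTRIBUTES[item]["category"] == slot
            and ATTRIBUTES[item]["formality"] == formality
        ]
        if candidates:
            # Highest count wins; ties go to the alphabetically last item name.
            outfit[slot] = max(candidates)[1]
    return outfit


print(complete_the_look("navy_suit"))
# {'shirt': 'white_shirt', 'tie': 'silk_tie', 'shoes': 'oxford_shoes'}
```

In this toy data the casual denim shirt is bought with the suit as often as the white shirt, but the formality check from the item-side lookup filters it out. Neither tool alone would produce that outfit.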

The framework's emphasis on tool-use efficiency is crucial for practical deployment. In a retail context, each tool call incurs latency and cost (a database lookup, an embedding search, an API call). Optimizing for minimal-but-sufficient investigation makes the system commercially viable.
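RecThinker learns efficiency through its RL reward; a deployment might additionally enforce a hard latency budget at inference time. The sketch below swaps in a different technique for that guardrail: a greedy gain-per-millisecond heuristic for choosing tool calls under a budget. The tool names, latencies and `expected_gain` values are hypothetical, latencies are assumed to be positive, and greedy selection is not guaranteed to be optimal for this knapsack-style problem.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolOption:
    name: str
    latency_ms: float     # assumed > 0
    expected_gain: float  # hypothetical estimate of how useful the call would be


def select_calls(options: list[ToolOption], budget_ms: float,
                 min_gain_per_ms: float = 0.0) -> tuple[list[str], float]:
    """Greedily pick tools by gain per millisecond until the latency budget is spent."""
    chosen, spent = [], 0.0
    ranked = sorted(options, key=lambda o: o.expected_gain / o.latency_ms, reverse=True)
    for opt in ranked:
        if opt.expected_gain / opt.latency_ms < min_gain_per_ms:
            break  # every remaining tool is even less cost-effective
        if spent + opt.latency_ms <= budget_ms:
            chosen.append(opt.name)
            spent += opt.latency_ms
    return chosen, spent


options = [
    ToolOption("get_user_history", 20, 0.4),
    ToolOption("embedding_search", 80, 0.5),
    ToolOption("crm_api", 300, 0.6),
]
print(select_calls(options, budget_ms=150))
# (['get_user_history', 'embedding_search'], 100.0)
```

The `min_gain_per_ms` threshold adds a stopping rule: the agent skips calls whose expected value does not justify their latency even when budget remains.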

The Gap Between Research and Production: The paper demonstrates the paradigm's validity in controlled benchmarks. Translating this to a live luxury retail environment requires significant engineering: building the robust, low-latency tool infrastructure (connecting to PIM, CRM, CDP, content management systems); curating the SFT training data with domain experts to capture luxury-specific reasoning; and defining business-aligned reward functions for the RL stage (balancing conversion, average order value, and long-term customer satisfaction).