Key Takeaways

- A Systematization of Knowledge (SoK) paper analyzes the emerging threat landscape for autonomous LLM agents conducting commerce.

- It identifies 12 attack vectors across five dimensions and proposes a layered defense architecture.

- This is a foundational security analysis for a nascent but high-stakes technology.

What Happened

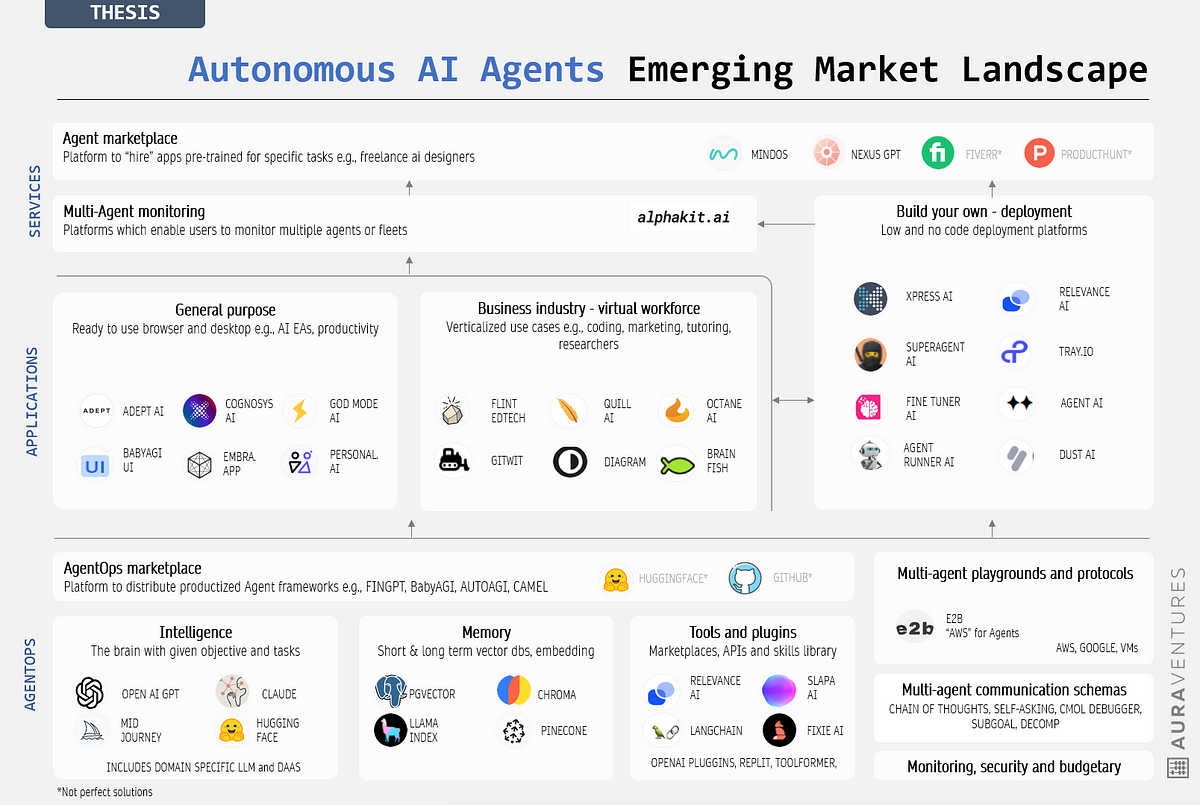

A research paper published on arXiv presents a comprehensive security analysis of "agentic AI commerce." The term refers to an emerging paradigm in which autonomous large language model (LLM) agents, rather than only human-supervised chatbots, can independently negotiate, purchase services, manage digital assets, and execute transactions. Framed as a Systematization of Knowledge (SoK), the paper aims to build a unified security framework for this nascent field.

The core premise is that new technical protocols (e.g., ERC-8004, AP2, ERC-8183) are enabling this shift from assistance to autonomy, but they simultaneously create a complex attack surface that existing security models fail to address adequately.

Technical Details: A Five-Dimensional Threat Model

The researchers' primary contribution is a structured threat model organized along five key dimensions:

- Agent Integrity: Can the agent's reasoning, memory, or instructions be corrupted or hijacked?

- Transaction Authorization: How are payment and settlement actions securely authorized to prevent fraud?

- Inter-Agent Trust: How do autonomous agents establish trust and verify the identity of other agents or services?

- Market Manipulation: Could swarms of AI agents be used to manipulate prices, create fake demand, or engage in other market abuses?

- Regulatory Compliance: How can autonomous agents be designed to operate within legal frameworks (e.g., consumer protection, financial regulations)?

By analyzing a curated corpus of academic and industry materials, the paper derives 12 specific cross-layer attack vectors. It demonstrates how a failure at a foundational layer—such as a prompt injection attack compromising an agent's reasoning—can propagate upward to cause custody loss, fraudulent settlement, market harm, or compliance violations.
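One way to picture this upward propagation is as reachability in a directed graph of failures. The sketch below is illustrative only: the node names and edges are hypothetical, loosely following the prompt-injection example above, and are not the paper's 12 attack vectors. A breadth-first search lists every downstream harm reachable from a single foundational failure.

```python
from collections import deque

# Hypothetical failure-propagation edges (illustrative, NOT the paper's taxonomy).
# An edge A -> B means "a failure at A can enable a failure at B".
PROPAGATION: dict[str, list[str]] = {
    "prompt_injection": ["corrupted_reasoning"],
    "corrupted_reasoning": ["custody_loss", "fraudulent_settlement", "market_harm"],
    "custody_loss": ["compliance_violation"],
    "fraudulent_settlement": ["compliance_violation"],
}


def reachable_harms(start: str, graph: dict[str, list[str]]) -> list[str]:
    """Breadth-first search returning every failure reachable from `start`."""
    seen = {start}
    order: list[str] = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:  # visit each downstream failure once
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order


if __name__ == "__main__":
    print(reachable_harms("prompt_injection", PROPAGATION))
```

Under these assumed edges, one injected prompt reaches custody loss, fraudulent settlement, market harm, and compliance violations, which is why a defense placed at only one layer leaves the other paths open.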

The proposed solution is a layered defense architecture designed to plug the authorization gaps in current agent-payment protocols. The paper concludes that securing agentic commerce is inherently a cross-layer challenge requiring coordinated controls across LLM safety, protocol design, digital identity, market structure, and regulation. It ends with a proposed research roadmap and benchmark agenda.
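The paper's actual architecture is not reproduced here. As a minimal sketch of what "layered" authorization can mean in practice, assume a hypothetical user-issued spending mandate; every name below (`Mandate`, `PaymentRequest`, `authorize`, and the threshold fields) is invented for illustration and is not drawn from AP2, ERC-8004, or the paper. Each check is a separate layer, and a payment proceeds only if all of them pass.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PaymentRequest:
    agent_id: str
    merchant: str
    amount_cents: int


@dataclass(frozen=True)
class Mandate:
    """Hypothetical user-issued spending mandate; field names are illustrative."""
    agent_id: str
    allowed_merchants: frozenset[str]
    per_tx_limit_cents: int
    human_confirm_above_cents: int


def authorize(req: PaymentRequest, mandate: Mandate,
              human_confirmed: bool = False) -> tuple[bool, str]:
    # Layer 0: reject malformed amounts outright.
    if req.amount_cents <= 0:
        return False, "invalid amount"
    # Layer 1: identity, the requesting agent must be the mandate's holder.
    if req.agent_id != mandate.agent_id:
        return False, "agent identity mismatch"
    # Layer 2: counterparty trust, only allow-listed merchants.
    if req.merchant not in mandate.allowed_merchants:
        return False, "merchant not in mandate"
    # Layer 3: hard per-transaction spending cap.
    if req.amount_cents > mandate.per_tx_limit_cents:
        return False, "exceeds per-transaction limit"
    # Layer 4: human-in-the-loop step-up above a threshold.
    if req.amount_cents > mandate.human_confirm_above_cents and not human_confirmed:
        return False, "human confirmation required"
    return True, "authorized"


if __name__ == "__main__":
    mandate = Mandate("agent-42", frozenset({"tailor.example"}), 50_000, 20_000)
    req = PaymentRequest("agent-42", "tailor.example", 30_000)
    print(authorize(req, mandate))                        # step-up required
    print(authorize(req, mandate, human_confirmed=True))  # passes all layers
```

The design choice worth noting is that these checks run in deterministic code outside the language model, so an agent whose reasoning has been hijacked by prompt injection still cannot exceed the limits its user set.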

Retail & Luxury Implications: A Frontier of Risk and Opportunity

For retail and luxury executives, this paper is not a guide to implementation but a critical risk assessment primer for a potential future state. The direct application today is minimal; fully autonomous AI agents conducting high-value transactions are not a current reality in mainstream retail. However, the trajectory is clear: the industry is moving from AI as a recommendation engine to AI as an active, transactional participant.

Potential Future Scenarios & Inherent Risks:

- AI-Powered Personal Shoppers: An autonomous agent, acting on a customer's high-level goal ("outfit me for Milan Fashion Week"), could negotiate exclusive early access with a brand, arrange for alterations with a digital tailor service, and execute payment from a digital wallet—all without human intervention. The security flaws outlined in the paper could lead to brand impersonation, theft of customer funds, or manipulation of limited-edition drop mechanics.

- Supply Chain & B2B Automation: Agents could autonomously manage RFPs, negotiate dynamic pricing with material suppliers, and settle invoices. Threats to agent integrity or authorization could result in significant financial loss or supply chain disruption.

- Digital Asset Commerce: For luxury brands exploring digital collectibles (NFTs) or virtual goods, autonomous agents could manage portfolios and trades. The market manipulation and compliance risks highlighted become paramount.

The fundamental takeaway for retailers is that autonomy amplifies risk. A chatbot that gives bad advice is a customer service issue. An autonomous agent that loses custody of a customer's digital asset or executes an unauthorized six-figure wire transfer is an existential legal and reputational crisis. This paper provides the conceptual framework to begin stress-testing future AI commerce initiatives against these severe threat dimensions.

Implementation Approach: A Research Agenda, Not a Blueprint

It is crucial to understand this paper proposes a research framework, not a production-ready solution. For a retail CTO, the immediate action is not to deploy these defenses but to:

- Awareness: Educate strategy and legal teams on the long-term risk landscape of agentic AI.

- Governance: Begin formulating internal principles on whether, and under what constraints, autonomous transactional AI would be permissible.

- Vendor Scrutiny: As martech and e-commerce platforms begin marketing "agentic" features, use frameworks like this to interrogate their security architecture and liability models.

The technical requirements—secure multi-party computation, verifiable LLM reasoning, decentralized identity integration—are complex and emergent. Implementation is a multi-year, cross-industry effort.