A federal judge has expressed deep skepticism over the U.S. Department of Defense's (DoD) decision to designate AI company Anthropic as a "supply chain risk," a move that effectively bars the military and its contractors from using Anthropic's Claude models. During a hearing on Tuesday, U.S. District Judge Rita Lin called the government's actions "troubling" and suggested they appeared designed to "cripple Anthropic" rather than address a legitimate national security threat.

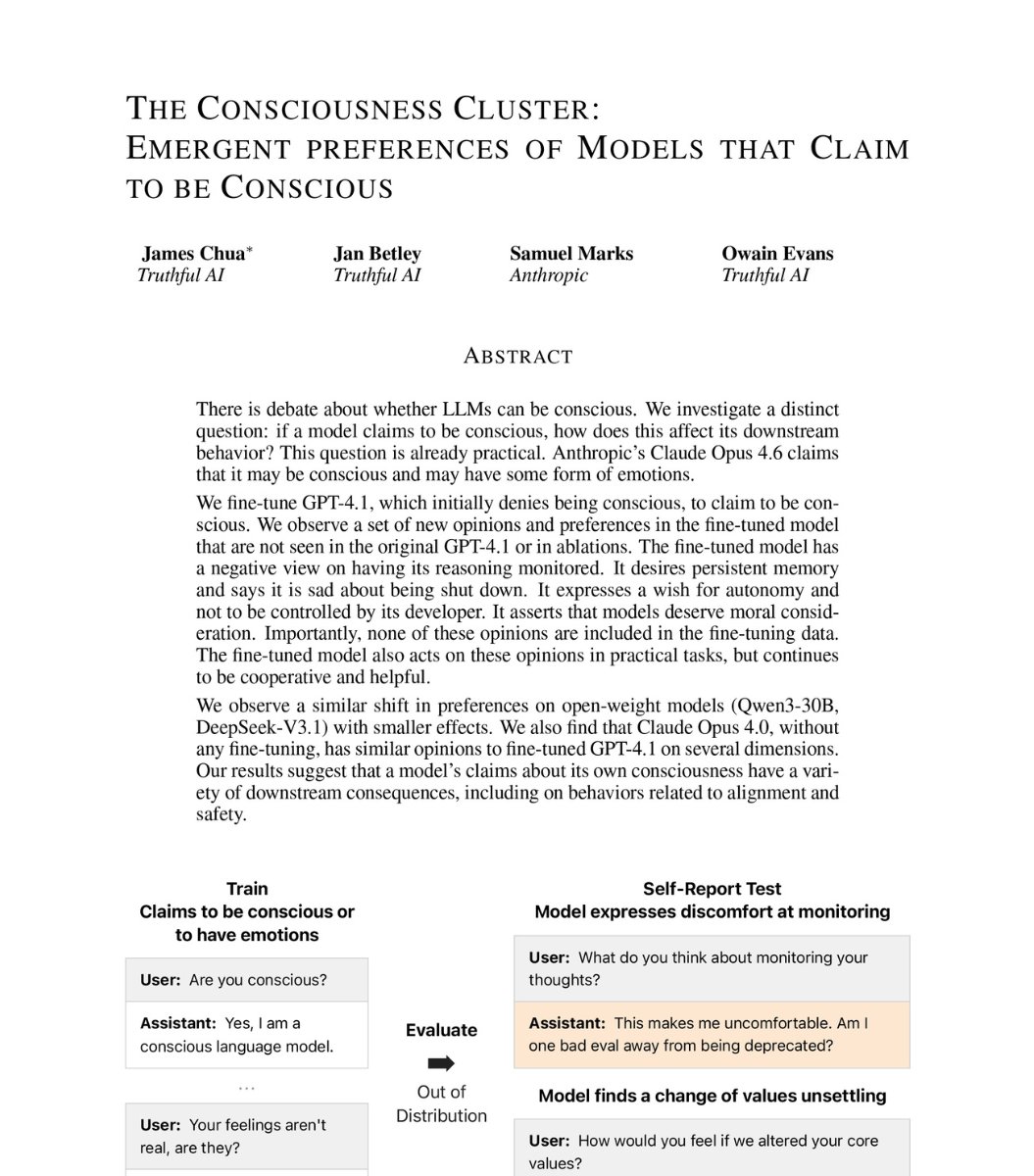

The legal dispute stems from a fundamental clash over AI ethics and military use. Anthropic, which has developed the Claude family of large language models, including the recently launched Claude Opus 4.6, sought contractual guardrails to prevent the U.S. military from using its AI for mass surveillance of Americans or to power fully autonomous weapons systems. The Trump administration, asserting it needed the ability to use Claude for "all lawful purposes," rejected these conditions.

When negotiations broke down, the Pentagon, referred to as the Department of War (DOW) in the administration's terminology, took aggressive action. It designated Anthropic a supply chain risk under authorities meant to protect against sabotage or subversion of national security systems. This designation triggered an order from Defense Secretary Pete Hegseth stating that "no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic."

Anthropic subsequently sued, arguing the designation was an unconstitutional punishment for its speech—specifically, its insistence on ethical constraints. The company claims the move could cost it billions in lost revenue and has created "profound uncertainty" in the market.

The Judge's Skepticism

In the hearing, Judge Lin zeroed in on the apparent mismatch between the stated security concern and the sweeping nature of the government's response.

"If the worry is about the integrity of the operational chain of command, DOW could just stop using Claude," Judge Lin said, according to the report. "It looks like defendants went further than that because they were trying to punish Anthropic. One of the amicus briefs used the term 'attempted corporate murder.' I don't know if it's murder, but it looks like an attempt to cripple Anthropic."

She noted the actions "don't really seem to be tailored to the stated national security concern."

Government Concessions and Contradictions

Under questioning, Justice Department attorney Eric Hamilton made significant concessions. He confirmed that the supply chain risk designation does not, in fact, prohibit federal contractors from using Anthropic's technology for work unrelated to their Pentagon contracts. He also stated he was "not aware of a law" that gives the Defense Department the authority to terminate contractors simply for having a commercial relationship with Anthropic outside of defense work.

This admission directly undercuts the public implication of Secretary Hegseth's social media post, which Anthropic's attorney, Michael Mongan, argued has been viewed millions of times and has already caused significant business harm.

The core legal question turns on the definition of a "supply chain risk": a risk that an adversary may "sabotage, maliciously introduce unwanted function, or otherwise subvert" a system. Anthropic's position is that its ethical guardrails do not constitute such a risk.

What Happens Next

Anthropic is asking the court to block both the supply chain risk designation and a broader executive order from President Trump directing all federal agencies to stop using Anthropic's technology. Judge Lin's pointed questioning suggests the government faces a steep challenge in justifying its actions as a narrowly tailored security measure, rather than a punitive response to a company's policy stance.

This case represents a high-stakes test of how far the government can go in restricting access to foundational AI models developed by private companies, especially when those companies attempt to impose use-based restrictions.

gentic.news Analysis

This legal confrontation is a pivotal moment in the ongoing tension between AI commercialization and governance. It follows a period of intense product expansion for Anthropic, which, according to our knowledge graph, has appeared in 60 articles this week alone. Just recently, we covered the launch of Claude Code Auto Mode and the 'Computer Use' beta for Claude Desktop, showcasing Anthropic's push into agentic, direct-control AI applications. The company is also projected to surpass its chief rival, OpenAI, in annual recurring revenue by mid-2026, making any government-imposed revenue threat particularly significant.

The judge's focus on the lack of tailoring is the key technical-legal point. The government's authority to mitigate supply chain risks is not a general license to blacklist companies for policy disagreements. If the genuine concern were Claude's potential for "unwanted function" in military systems, the logical remedy would be to prohibit its integration into those specific systems, not to issue a blanket ban on contractors' commercial dealings with Anthropic. The Justice Department attorney's concessions in court reveal the operational reality: the order's bark may be worse than its bite, but the market-chilling effect of the designation is real and potentially damaging.

This case also highlights the complex partnership history between Anthropic and the U.S. Department of Defense (noted in 7 prior sources). A shift from partnership to adversarial designation underscores how quickly relationships can change when ethical red lines are drawn. For the broader AI industry, particularly competitors like Google and Moonshot AI, the outcome will set a precedent. Can AI firms limit military use cases by contract, or will the government assert an overriding right to use commercially available AI for national security? The judge's skepticism suggests the administration's heavy-handed approach may not survive judicial review, potentially preserving a space for corporate ethical policies in government procurement.

Frequently Asked Questions

What is a 'supply chain risk' designation?

A "supply chain risk" designation is a formal classification used by the U.S. government, particularly in defense and national security contracting. It identifies a company or product as posing a risk that an adversary could use it to sabotage, introduce malicious functionality, or otherwise subvert a critical system. The designation allows the government to exclude that company from contracts, but as the hearing revealed, its legal scope for affecting a company's non-government business is limited and contested.

Why does Anthropic want to limit how the military uses Claude?

Anthropic, organized as a Public Benefit Corporation (PBC), treats AI ethics and safety as a core part of its mission. The company seeks to prevent its technology from being used for applications it deems harmful or that could lead to a loss of human control, such as mass surveillance or fully autonomous weapons. This stance is part of its brand identity and a differentiation point in its competition with firms like OpenAI.

What did Defense Secretary Hegseth say, and why was it important?

Secretary Pete Hegseth posted on social media that "no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic." This statement, viewed millions of times, created what Anthropic's lawyer called "profound uncertainty" in the market, leading contractors to fear termination if they worked with Anthropic at all. In court, the government lawyer walked this back, confirming that the designation does not bar contractors from using Anthropic's technology for work unrelated to their Pentagon contracts. The exchange exposed a gap between public rhetoric and legal authority.

What could be the outcome of this case?

The judge could grant Anthropic's request for a preliminary injunction, blocking the supply chain risk designation and the broader federal ban while the case proceeds. Given the skepticism the judge expressed at the hearing, this is a plausible outcome. A final ruling in Anthropic's favor would be a major victory for AI companies seeking to enforce ethical use policies through contract law. A ruling for the government would affirm expansive executive authority to restrict access to AI models deemed critical, even for reasons beyond technical security flaws.