The Technique

A developer has generalized Andrej Karpathy's "autoresearch" concept, an autonomous loop for optimizing machine learning training, into a general-purpose skill for Claude Code. The skill, called ResearcherSkill, turns Claude Code into an autonomous research agent that can systematically experiment with and optimize any codebase for which you can define a measurable goal.

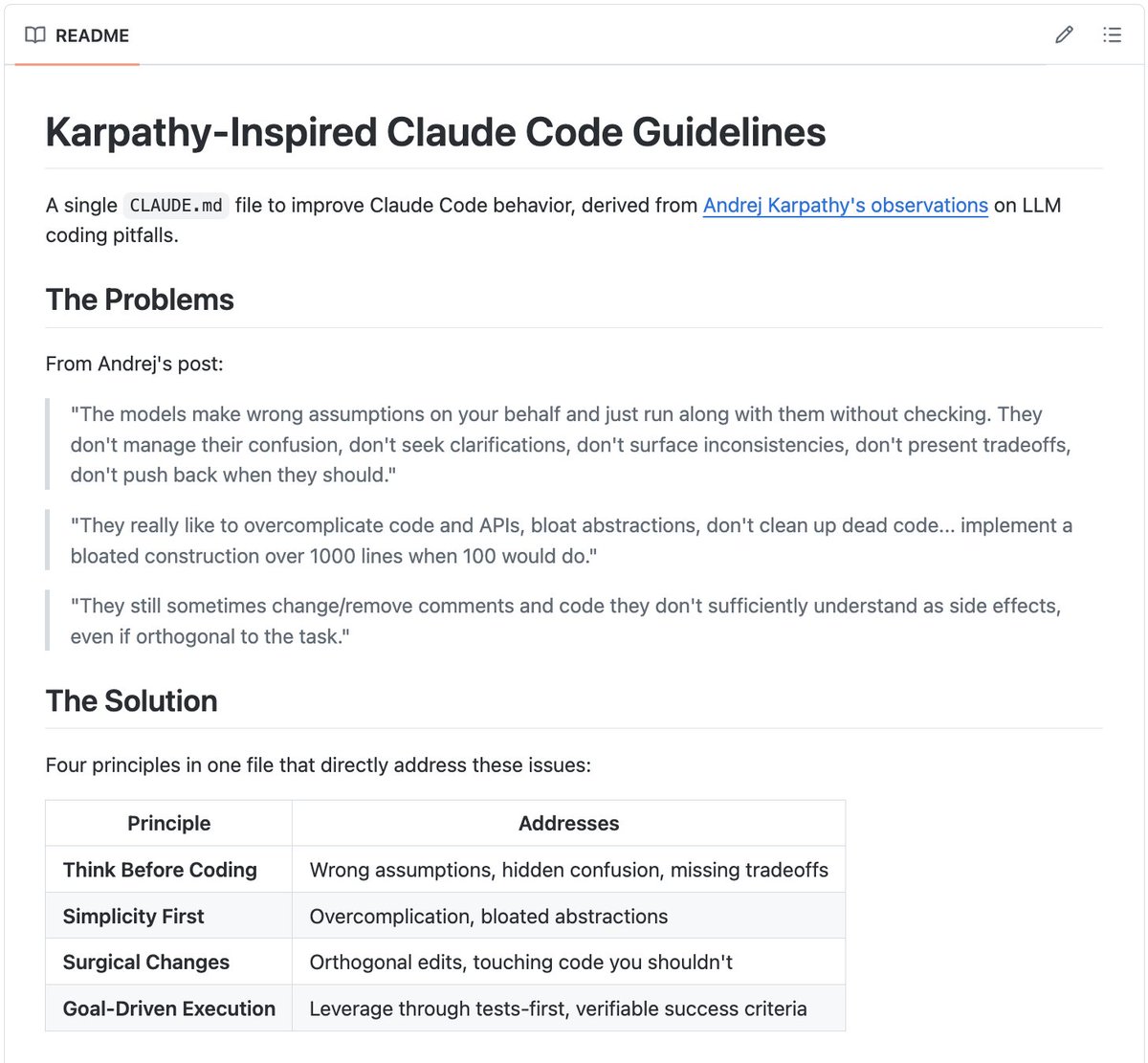

The core mechanic is a single Markdown file you drop into your project. When activated, the agent first interviews you to understand the optimization target (e.g., "reduce page load time," "increase function throughput," "minimize memory usage"). It then autonomously sets up a dedicated git branch and a .lab/ directory to manage the experiment lifecycle.

Why It Works

This works because it formalizes the scientific method for code changes and leverages Claude Code's agentic capabilities to execute it. The skill creates a controlled environment where each proposed code change is treated as a hypothesis. Before any code is edited, Claude can run "thought experiments" to analyze potential impacts. It then commits the current state, implements the change, runs your measurement script (e.g., a benchmark or test), and records the result. If the change improves the metric, it's kept; if it degrades performance, the agent reverts to the last good commit. All actions and results are meticulously logged in the .lab/ directory.
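The keep-or-revert cycle described above can be sketched in a few lines of Python. This is only an illustration of the idea, not the skill's actual implementation: the `Lab` and `Experiment` classes, the `run_experiment` helper, and the toy `fake_latency` metric are all hypothetical, and an in-memory dictionary stands in for the git branch and the .lab/ log. The sketch also assumes that a lower metric is better.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Config = Dict[str, int]


@dataclass
class Experiment:
    hypothesis: str
    metric: float
    kept: bool


@dataclass
class Lab:
    """Hypothetical in-memory stand-in for the git branch plus the .lab/ log."""
    state: Config
    best_metric: float
    log: List[Experiment] = field(default_factory=list)


def run_experiment(lab: Lab, hypothesis: str,
                   change: Callable[[Config], Config],
                   measure: Callable[[Config], float]) -> bool:
    """Apply one change, measure it, and keep it only if the metric drops."""
    snapshot = dict(lab.state)          # "commit" the last good state
    candidate = change(dict(snapshot))  # implement the change on a copy
    metric = measure(candidate)         # run the measurement
    kept = metric < lab.best_metric     # assumption: lower is better
    if kept:
        lab.state, lab.best_metric = candidate, metric
    else:
        lab.state = snapshot            # "revert" to the last good state
    lab.log.append(Experiment(hypothesis, metric, kept))
    return kept


def fake_latency(cfg: Config) -> float:
    # Toy metric: latency falls with cache size and rises with worker count.
    return 100.0 - cfg["cache_mb"] * 0.5 + cfg["workers"] * 2.0


start = {"cache_mb": 16, "workers": 4}
lab = Lab(state=start, best_metric=fake_latency(start))
run_experiment(lab, "bigger cache", lambda c: {**c, "cache_mb": 64}, fake_latency)
run_experiment(lab, "more workers", lambda c: {**c, "workers": 8}, fake_latency)
```

In this toy run the first hypothesis is kept and the second is reverted, and both outcomes end up in `lab.log`, which mirrors the idea that failed experiments are recorded rather than discarded.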

Key improvements over the original ML-focused autoresearch include:

- General-Purpose: Works on any system with a quantifiable output, from API response times to algorithm accuracy.

- Non-Linear Branching: The agent can fork new experiment branches from any successful past experiment, exploring multiple optimization paths simultaneously.

- Convergence Detection: It can identify when it's stuck in a local optimum and strategically change its experimentation strategy.

- Session Resumability: The entire research state is saved in .lab/, allowing you to pause and resume long-running optimization sessions.
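The source does not describe how convergence detection is implemented. One simple way to approximate the idea is to check whether recent experiments have stopped improving on the best earlier result. The sketch below assumes a lower-is-better metric; the `is_stalled` function, its `window` and `min_gain` parameters, and the sample histories are all hypothetical.

```python
from typing import List


def is_stalled(history: List[float], window: int = 5,
               min_gain: float = 0.01) -> bool:
    """Return True when the last `window` experiments improved the best
    earlier metric by less than `min_gain` (as a relative fraction).

    `history` holds one metric per experiment; lower is better.
    """
    if len(history) <= window:
        return False                       # not enough data to judge
    best_before = min(history[:-window])
    best_recent = min(history[-window:])
    scale = abs(best_before) or 1.0        # avoid dividing by zero
    gain = (best_before - best_recent) / scale
    return gain < min_gain


improving = [100.0, 90.0, 80.0, 70.0, 60.0, 50.0, 40.0]
plateau = [100.0, 80.0, 79.9, 80.2, 80.1, 79.95]

print(is_stalled(improving, window=3))
print(is_stalled(plateau, window=3))
```

A check like this could be the trigger for switching strategy, for example by forking from an earlier successful experiment instead of continuing along the current path.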

How To Apply It

Getting started is straightforward. The skill is free and MIT-licensed.

- Install the Skill: Clone or download the `ResearcherSkill.md` file from the GitHub repository.

- Integrate with Claude Code: Place the file in your project's `.claude` directory or another location referenced by your `CLAUDE.md`. You may need to add an instruction in your `CLAUDE.md` to load and use the skill, such as `When I ask for research or optimization, use the ResearcherSkill protocol.`

- Define Your Measurement: Ensure you have a script or command (e.g., `npm run benchmark`, `python measure.py`) that returns a single, comparable metric. This is what the agent will optimize.

- Start a Session: In your project directory with Claude Code active, initiate the process. For example:

claude 'Please use the ResearcherSkill to optimize the project. Our goal is to reduce the latency reported by the `benchmark.sh` script.'

The agent will take over, guiding you through the setup interview and then autonomously running cycles of commit-change-measure-analyze.
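For the measurement step, the important property is that the command prints one number that can be compared across runs. A minimal sketch of what a `measure.py` could look like is shown here; `measure_ms` and `example_workload` are hypothetical names, and the workload is a placeholder for whatever code path you want the agent to optimize.

```python
import statistics
import time
from typing import Callable


def measure_ms(workload: Callable[[], object], repeats: int = 5) -> float:
    """Return the median wall-clock time of `workload` in milliseconds."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        workload()
        samples.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(samples)


def example_workload() -> int:
    # Placeholder for the code path you actually want to optimize.
    return sum(i * i for i in range(50_000))


if __name__ == "__main__":
    # Print exactly one number so each run is easy to compare and log.
    print(f"{measure_ms(example_workload):.3f}")
```

Taking the median over several repeats is one way to reduce noise, so that a change is not kept or reverted because of a single slow or fast run.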

When To Use It

This skill shines in scenarios where optimization requires methodical exploration that is tedious for a human. Ideal use cases include:

- Performance Tuning: Systematically testing different data structures, caching strategies, or algorithm implementations to shave milliseconds.

- Config Optimization: Finding the ideal values for a set of configuration parameters in an application or build process.

- Code Simplification: Experimenting with refactors to reduce complexity (e.g., cyclomatic complexity) while maintaining functionality.

- Test Improvement: Automatically trying different test cases or mocking strategies to increase code coverage.

It turns Claude Code from a reactive coding assistant into a proactive research partner, capable of owning the entire experimentation pipeline.