While mainstream AI discourse focuses on announcements from Anthropic, OpenAI, and Qwen, a Shanghai-based lab called StepFun quietly published a technical report in February 2026 for a model called Step-3.5-Flash. According to that report, the 196B-parameter open-source model achieves state-of-the-art performance on multiple reasoning, coding, and agentic benchmarks while activating only 11B parameters per token during inference, which translates into far lower operating costs than its competitors.

What StepFun Built: A Sparse Mixture-of-Experts Architecture

Step-3.5-Flash uses a Sparse Mixture-of-Experts (MoE) backbone that decouples total parameter count from active inference cost. The model holds 196B parameters in total but activates only 11B per token during generation. A router selects the top 8 of 288 routed experts in each layer, and one shared expert always fires alongside them. The remaining 280 routed experts in that layer stay idle for that token.
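The routing step described above can be sketched in a few lines of plain Python. This is a toy illustration, not StepFun's implementation: the expert counts (288 routed, top 8, one shared) come from the article, while the gating function (a softmax over the selected logits), the scalar "experts", and the names `route_token` and `moe_layer` are assumptions made for the example.

```python
import math
import random

NUM_ROUTED_EXPERTS = 288  # routed experts per layer (from the article)
TOP_K = 8                 # routed experts selected per token (from the article)


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, top_k=TOP_K):
    """Return (expert_index, weight) pairs for the top_k routed experts.

    Assumption: weights are a softmax over the selected logits only.
    The article does not describe the actual gating function.
    """
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:top_k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_layer(token, router_logits, routed_experts, shared_expert):
    """Add the always-on shared expert to the weighted top-k routed experts."""
    output = shared_expert(token)
    for idx, weight in route_token(router_logits):
        output += weight * routed_experts[idx](token)
    return output


# Toy usage: each "expert" is a scalar function standing in for a feed-forward block.
rng = random.Random(0)
routed_experts = [lambda x, s=rng.uniform(-1, 1): s * x
                  for _ in range(NUM_ROUTED_EXPERTS)]
shared_expert = lambda x: 0.5 * x
logits = [rng.gauss(0, 1) for _ in range(NUM_ROUTED_EXPERTS)]

selected = route_token(logits)
result = moe_layer(2.0, logits, routed_experts, shared_expert)
print(len(selected), "routed experts and 1 shared expert ran;",
      NUM_ROUTED_EXPERTS - len(selected), "routed experts stayed idle")
```

Only the selected experts are ever called, which is why per-token compute follows the active parameter count rather than the total.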

This architectural choice explains the dramatic cost differential: while Kimi K2.5 (a 1 trillion parameter model) activates 32B parameters per token, Step-3.5-Flash activates just 11B—approximately one-third the computational load per token.
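The arithmetic behind that comparison is easy to check. The short script below uses only the parameter counts quoted in this article (in billions) and treats "1 trillion" as 1000B; the helper name `active_fraction` is illustrative.

```python
# Parameter counts quoted in the article, in billions.
MODELS = {
    "Step-3.5-Flash": {"total": 196, "active": 11},
    "Kimi K2.5": {"total": 1000, "active": 32},
}


def active_fraction(model):
    """Share of a model's parameters that are used for each token."""
    return model["active"] / model["total"]


step = MODELS["Step-3.5-Flash"]
kimi = MODELS["Kimi K2.5"]

step_fraction = active_fraction(step)           # 11 / 196
kimi_fraction = active_fraction(kimi)           # 32 / 1000
active_ratio = step["active"] / kimi["active"]  # 11 / 32

print(f"Step-3.5-Flash active share: {step_fraction:.1%}")  # 5.6%
print(f"Kimi K2.5 active share:      {kimi_fraction:.1%}")  # 3.2%
print(f"Active parameters, Step vs Kimi: {active_ratio:.2f}")  # 0.34
```

Kimi K2.5 actually activates a smaller share of its weights, but per-token compute tracks the absolute number of active parameters, which is where the roughly one-third figure comes from.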

To handle long contexts efficiently, Step-3.5-Flash implements a hybrid attention mechanism that interleaves Sliding Window Attention (SWA) with Full Attention at a 3:1 ratio. Three SWA layers (with linear cost scaling) process local context for every one full-attention layer (with quadratic scaling). This enables a 256K context window without the typical inference cost explosion associated with full attention across long sequences.
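To make the scaling argument concrete, here is a rough sketch that builds a 3:1 layer schedule and counts how many query-key pairs each layer type scores at a 256K context. The 3:1 ratio and the 256K window come from the article; the layer count (48) and sliding-window size (4096) are hypothetical placeholders, since the article gives neither, and the count ignores everything except attention scores.

```python
def layer_schedule(num_layers, swa_per_full=3):
    """Repeat [SWA x swa_per_full, FULL] until num_layers are assigned."""
    period = swa_per_full + 1
    return ["full" if (i + 1) % period == 0 else "swa"
            for i in range(num_layers)]


def attention_pairs(seq_len, kind, window):
    """Count query-key pairs scored by one causal attention layer."""
    if kind == "full":
        # Query t attends to all t keys up to and including itself.
        return seq_len * (seq_len + 1) // 2
    # Sliding window: query t attends to at most `window` recent keys.
    w = min(window, seq_len)
    return w * (w + 1) // 2 + (seq_len - w) * w


NUM_LAYERS = 48       # hypothetical: not stated in the article
WINDOW = 4096         # hypothetical: not stated in the article
SEQ_LEN = 256 * 1024  # 256K context, from the article

schedule = layer_schedule(NUM_LAYERS)
hybrid = sum(attention_pairs(SEQ_LEN, kind, WINDOW) for kind in schedule)
all_full = NUM_LAYERS * attention_pairs(SEQ_LEN, "full", WINDOW)
ratio = hybrid / all_full

print("first block:", schedule[:4])
print(f"hybrid attention work vs all-full: {ratio:.1%}")
```

With these placeholder values the sliding-window layers contribute very little, so the total is dominated by the one-in-four full-attention layers. The exact saving depends on the real window size and layer count.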

Key Benchmark Results: Performance vs. Cost

StepFun's technical report includes comprehensive benchmarking against leading open-source and proprietary models. The results show Step-3.5-Flash leading or competing at the top across multiple categories while maintaining significantly lower inference costs.

Coding Performance

| Benchmark | Step-3.5-Flash | Kimi K2.5 | Other model (unnamed) | Other model (unnamed) |
| --- | --- | --- | --- | --- |
| LiveCodeBench-V6 | 86.4 | 85.0 | 83.3 | N/A |
| SWE-bench Verified | 74.4 | 76.8 | 73.1 | N/A |
| Terminal-Bench 2.0 | 51.0 | N/A | N/A | N/A |

Step-3.5-Flash achieves the highest score among open-source models on LiveCodeBench-V6 (86.4) and leads Terminal-Bench 2.0 (51.0), which tests long-horizon command-line agent tasks. On SWE-bench Verified, which measures real GitHub issue resolution, it posts a competitive 74.4, close to Kimi K2.5's 76.8.

Mathematical Reasoning

| Benchmark | Step-3.5-Flash | Other model (unnamed) | Other model (unnamed) |
| --- | --- | --- | --- |
| AIME 2025 | 97.3 | N/A | N/A |
| IMOAnswerBench | 85.4 | N/A | N/A |
| HMMT 2025 | 96.2 | N/A | N/A |

On AIME 2025, considered one of the hardest math competition benchmarks, Step-3.5-Flash scores 97.3, the highest among open-source models in the comparison. The model maintains strong performance across IMOAnswerBench (85.4) and HMMT 2025 (96.2), demonstrating consistent reasoning capability across different problem structures.

Agentic Capabilities

| Benchmark | Step-3.5-Flash | Other model (unnamed) | Other model (unnamed) |
| --- | --- | --- | --- |
| τ²-Bench | 88.2 | 85.4 | 85.2 |
| GAIA | 84.5 | N/A | N/A |
| ResearchRubrics | 65.3 | N/A | N/A |

τ²-Bench measures real-world tool use across web, code, and file environments, where Step-3.5-Flash leads at 88.2. Most notably, on ResearchRubrics, which evaluates long-form deep research quality using a ReAct agent loop, Step-3.5-Flash scores 65.3, outperforming both Gemini DeepResearch (60.7) and OpenAI DeepResearch (60.7).

The Efficiency Advantage: 1.0x vs. 18.9x Cost

The most striking aspect of Step-3.5-Flash is its inference cost relative to performance. According to StepFun's benchmarks conducted at 128K context on Hopper GPUs with MTP-3 inference and EP8 settings:

- Step-3.5-Flash: 1.0x cost (baseline)

- Kimi K2.5: 18.9x cost

- GLM-4.7: 18.9x cost

- DeepSeek V3.2: 6.0x cost

- MiniMax M2.1: 3.9x cost

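By this measure, Kimi K2.5 costs 18.9 times as much per token while scoring lower on several coding and reasoning benchmarks. Sparse activation, with only 11B of the model's 196B parameters used per token, is one source of the gap. It cannot be the whole story, since Kimi K2.5's 32B active parameters are only about three times as many; because the measurement is taken at 128K context, the hybrid attention design and the MTP-3 inference setup likely account for much of the rest.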
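For budgeting purposes, the reported ratios can be turned into a quick projection. The multipliers below are the ones listed above; the 100.0 spend figure is purely illustrative, and the function names are made up for this sketch. The ratios were measured by StepFun under one specific setup, so treat the output as a rough guide rather than a price quote.

```python
# Relative inference cost reported in the article (Step-3.5-Flash = 1.0x),
# measured at 128K context on Hopper GPUs.
RELATIVE_COST = {
    "Step-3.5-Flash": 1.0,
    "Kimi K2.5": 18.9,
    "GLM-4.7": 18.9,
    "DeepSeek V3.2": 6.0,
    "MiniMax M2.1": 3.9,
}


def projected_cost(baseline_spend, model):
    """Scale a Step-3.5-Flash spend to another model using the reported ratio."""
    return baseline_spend * RELATIVE_COST[model]


def saving_vs(model):
    """Fraction of spend saved by moving from `model` to Step-3.5-Flash."""
    return 1.0 - RELATIVE_COST["Step-3.5-Flash"] / RELATIVE_COST[model]


HYPOTHETICAL_SPEND = 100.0  # illustrative budget, not a figure from the report

for name in RELATIVE_COST:
    print(f"{name:15s} projected cost: {projected_cost(HYPOTHETICAL_SPEND, name):8.1f}  "
          f"saving if replaced by Step-3.5-Flash: {saving_vs(name):6.1%}")
```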

How It Works: Technical Implementation Details

The Step-3.5-Flash architecture combines several advanced techniques:

Sparse MoE with Expert Routing: Each layer holds 288 routed experts, of which a router picks the top 8 per token, plus one shared expert that is always active. The total parameter count is 196B, but only 11B parameters are active for any given token.

Hybrid Attention for Long Context: With a 3:1 ratio of Sliding Window Attention to Full Attention layers, three of every four attention layers (75%) scale linearly with sequence length, while the remaining full-attention layers preserve global context awareness.

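Optimized Inference Stack: The cost figures were measured on Hopper GPUs with MTP-3 and EP8. These labels most likely refer to multi-token prediction (a speculative-decoding technique) and 8-way expert parallelism rather than to a protocol or a numeric precision, though specific implementation details are limited in the available source material.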

Limitations and Deployment Considerations

The source material acknowledges that Step-3.5-Flash has "real limitations—deployment requirements, known stability issues in specific conditions, and areas where proprietary models still pull ahead." Specific limitations mentioned include:

- Specialized deployment requirements that may not be trivial to implement

- Stability issues under certain conditions (unspecified)

- Areas where proprietary models maintain advantages (though these are not detailed in the available source)

gentic.news Analysis

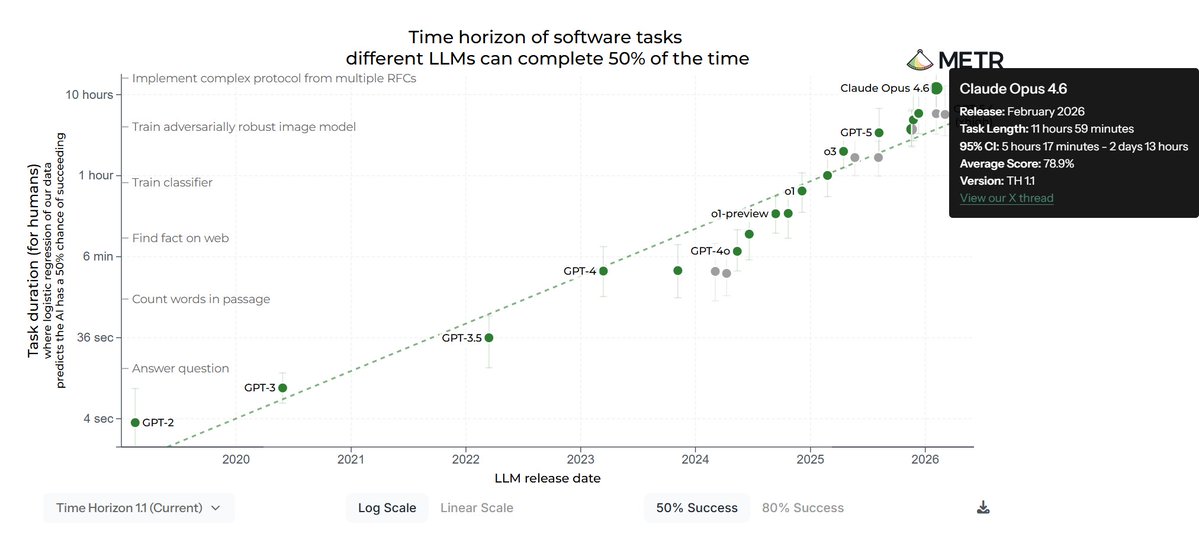

Step-3.5-Flash represents a significant milestone in the efficiency frontier of large language models, demonstrating that parameter count alone is a poor proxy for either capability or cost. The model's architecture—combining sparse MoE with hybrid attention—shows how careful system design can dramatically improve the performance-to-cost ratio. What's particularly notable is that this efficiency doesn't come at the expense of capability: the model competes at or near the top across coding, reasoning, and agentic benchmarks.

The technical approach here validates a growing trend in the industry: the decoupling of knowledge storage (total parameters) from inference computation (active parameters). While MoE architectures are not new, Step-3.5-Flash's implementation appears particularly effective at maintaining strong performance across diverse tasks while minimizing active parameters. This suggests that future model improvements may come less from simply scaling parameter counts and more from architectural innovations that make better use of existing parameters.

For practitioners, the most immediate implication is cost. A model that delivers competitive performance at roughly one-nineteenth the inference cost of Kimi K2.5 or GLM-4.7, by StepFun's own measurement, represents a substantial operational advantage. However, the real test will be independent verification of these benchmarks and real-world deployment experience. The model's reported stability issues in specific conditions warrant caution, and the specialized deployment requirements may limit accessibility for some teams.

Frequently Asked Questions

What is Step-3.5-Flash?

Step-3.5-Flash is a 196B parameter open-source large language model developed by Shanghai-based lab StepFun. It uses a sparse mixture-of-experts (MoE) architecture that activates only 11B parameters per token during inference, making it significantly more cost-efficient than models with similar capabilities.

How does Step-3.5-Flash achieve such low inference costs?

The model achieves low inference costs through its MoE architecture, which holds 196B total parameters but activates only 11B per token. In each layer a router selects the top 8 of 288 routed experts, one shared expert always runs, and the other routed experts stay idle for that token. Per-token compute therefore tracks the 11B active parameters rather than the full 196B.

What benchmarks does Step-3.5-Flash excel at?

According to StepFun's technical report, Step-3.5-Flash achieves top scores among open-source models on AIME 2025 (97.3), LiveCodeBench-V6 (86.4), τ²-Bench (88.2), and GAIA (84.5). It also outperforms both Gemini DeepResearch and OpenAI DeepResearch on ResearchRubrics (65.3 vs. 60.7).

Is Step-3.5-Flash actually better than Claude Opus 4.5?

The source material claims Step-3.5-Flash beats Claude Opus 4.5 on "multiple agentic benchmarks," but it provides no comparative numbers for Claude Opus 4.5. The model does outperform both Gemini DeepResearch and OpenAI DeepResearch on ResearchRubrics, which suggests strong agentic capabilities. A full head-to-head comparison with Claude Opus 4.5 is not possible from the available source material.