A social media post citing a Fortune report has ignited speculation about the next generation of frontier AI models from Anthropic and OpenAI. The post claims Anthropic's Claude Opus 5 represents a "massive leap" from its predecessor and is "so advanced that it poses a huge security risk." Separately, it references a report from The Information stating OpenAI's upcoming model, codenamed "Spud," is an "equally massive leap" expected to significantly accelerate economic activity.

What Happened

The source material is a social media post summarizing what appears to be a Fortune magazine report. The key claims are:

- Anthropic Opus 5: The next major version of Anthropic's flagship Claude model is described as a "massive leap from version 4.6." Its advanced capabilities are reportedly causing internal concern about "unforeseen consequences, especially security risks," leading to plans for a slow, cautious rollout.

- OpenAI's "Spud": Referencing a separate report by The Information, the post states OpenAI has a similarly advanced model in development. This model is also characterized as a "massive leap" with the potential to "greatly accelerate the economy."

- Broader Context: The post frames 2026 as a pivotal year and notes that OpenAI has already renamed a department to "AGI Deployment," signaling a shift in focus toward managing increasingly powerful systems.

These details are unconfirmed rumors based on secondary reports. Neither Anthropic nor OpenAI has made official announcements regarding these specific model names, capabilities, or release timelines. The primary source, the original Fortune article, is not directly linked or quoted in full.

Context

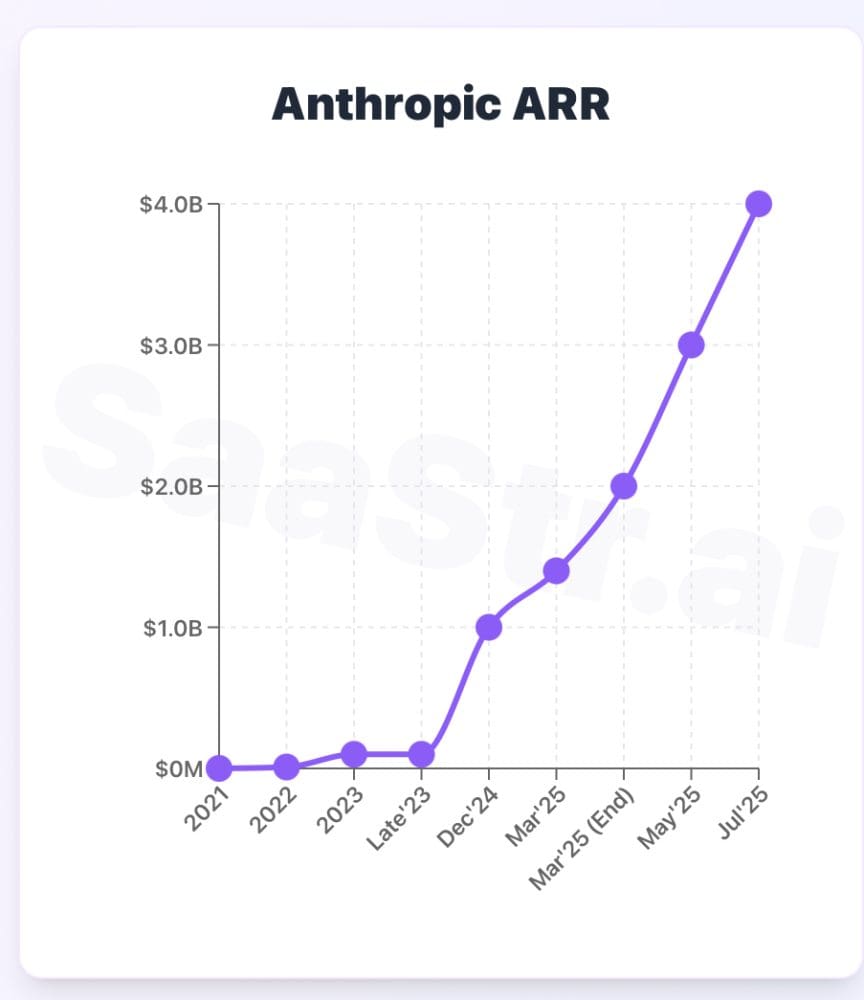

This rumor mill activity occurs within a highly competitive and fast-moving frontier AI landscape. For reference, Anthropic launched the Claude 3 family in March 2024 and followed with the Claude 3.5 Sonnet update in June; OpenAI released GPT-4o in May 2024. Both labs have continued to iterate rapidly since then, with each new model claiming incremental or significant improvements on standard benchmarks like MMLU, GPQA, or coding evaluations.

The mention of heightened security risks and a cautious rollout aligns with growing discourse from AI labs, policymakers, and researchers about the potential dangers of highly capable AI systems. These include risks related to autonomous replication, sophisticated cyber capabilities, and the difficulty of maintaining robust alignment as models become more powerful.

The rumored timeline pointing to 2026 as a watershed year echoes predictions from some AI researchers about the potential arrival of early, narrow forms of AGI (Artificial General Intelligence). OpenAI's internal restructuring to an "AGI Deployment" team, if accurate, would be a concrete organizational move reflecting this strategic focus.

gentic.news Analysis

This rumor, while unverified, fits a clear pattern we've been tracking in the Frontier Models sector. Both Anthropic and OpenAI are in a tight race for capability leadership, a dynamic we detailed in our April 2024 analysis, "The Claude 3 vs. GPT-4 Turbo Benchmark War: What the Numbers Actually Mean for Developers." The claim of a "massive leap" for Opus 5 would be the logical competitive response to OpenAI's anticipated next move. These claims need context: a "massive leap" in internal testing on narrow benchmarks does not always translate to a proportional leap in generalized, real-world utility or reasoning.

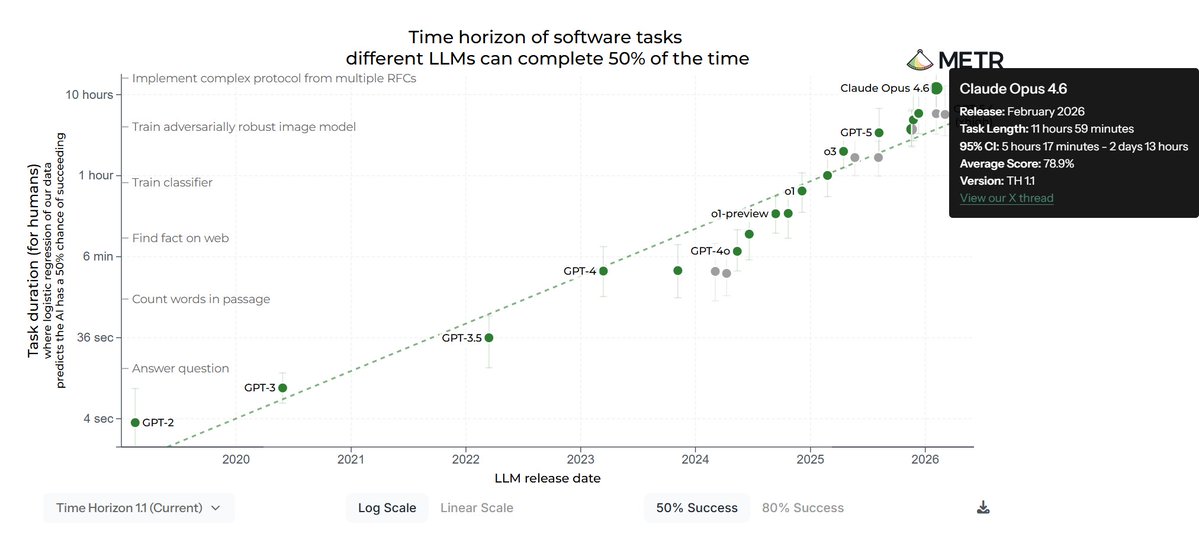

The emphasis on security risks and a slow rollout is the most substantively new element here. This directly connects to the ongoing work in AI Safety and governance. Anthropic, co-founded by former OpenAI safety researchers, has consistently emphasized safety and Constitutional AI. If these rumors are true, they suggest that Anthropic's internal evaluations of Opus 5 have triggered pre-defined safety protocols, which would be a significant real-world test of the safety frameworks the industry has been discussing. This aligns with our recent coverage of the Model Evaluation trend, where organizations like METR are pushing for standardized, rigorous capability testing.

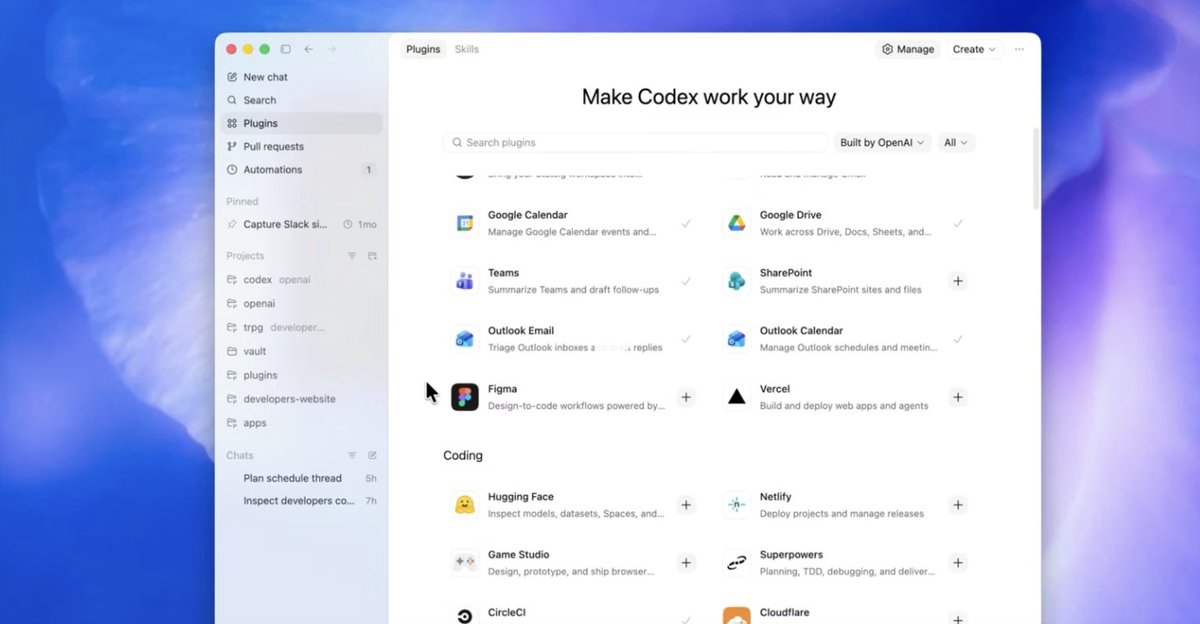

Furthermore, the mention of economic acceleration from "Spud" points directly to the AI Agents and AI for Work trends we monitor. The next frontier isn't just about better chat; it's about models that can reliably execute complex, multi-step tasks in digital environments. A true leap in this area would validate the significant venture funding flowing into agentic workflow startups. However, history suggests that economic transformation from new GPT models has been slower and more complex than initial hype predicted. We should treat acceleration claims with caution until demonstrated in robust, scaled deployments.

Frequently Asked Questions

What is Anthropic Opus 5?

Claude Opus 5 is the rumored name for the next major generation of Anthropic's flagship AI model, speculated to succeed the version 4.6 release cited in the post. Based on unconfirmed reports, it is described internally as a "massive leap" in capability, but also as posing significant potential security risks, which may lead to a cautious, staged release.

What is OpenAI's "Spud" model?

"Spud" is alleged to be the internal codename for OpenAI's next-generation frontier AI model. A report from The Information suggests it represents a major advancement over GPT-4o, with potential applications that could accelerate economic activity. No official specifications, release date, or final name have been announced by OpenAI.

Why would advanced AI models pose a security risk?

As AI models become more capable, potential risks increase. These can include the ability to generate highly convincing phishing or disinformation campaigns, assist in vulnerability discovery for cyber attacks, or potentially evade their own safety guardrails through sophisticated reasoning. AI labs conduct internal "red teaming" to identify these risks before release, which may lead to delays or restricted access if severe issues are found.

When are Opus 5 and Spud expected to be released?

There are no official release dates. The social media post speculates that 2026 will be a pivotal year based on the development timelines of these rumored models. Historically, major model updates from leading labs have occurred on a 12-18 month cycle, but this can vary based on technical progress and safety evaluations.