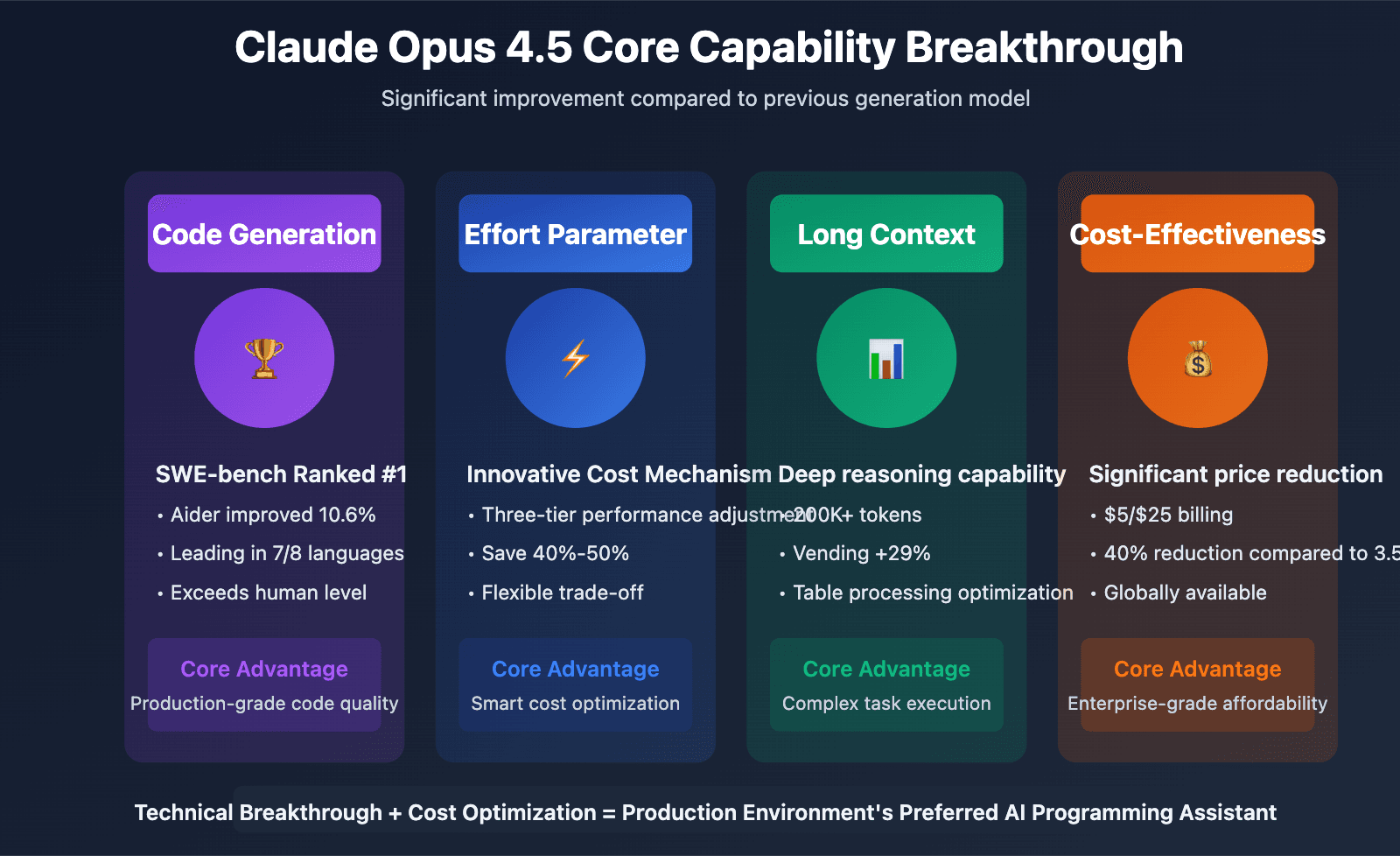

Every frontier model has a story about its latest capabilities. Claude Opus 4.6, GPT-5.5, and Gemini 3.1 Pro are remarkable reasoning engines, each pushing further on context, multimodal perception, and agentic tool use. Behind each of them, however, is a non-model infrastructure layer working silently to make the model useful in practice.

Everyone building AI agents has access to the same frontier models through the same APIs. What separates a production-grade agent from one that fails silently on the third real workload is not the model. It is the infrastructure surrounding it.

That infrastructure is the harness.

Agent harnessing is the non-model layer that gives an agent persistent memory, tool connectivity, controlled reasoning loops, verification, and the ability to coordinate with other agents. The model decides what to do. The harness determines whether it actually does it, reliably, at scale, across the full range of things that go wrong.

Key Takeaways

- A detailed technical guide argues that the model is not the hard part of building AI agents.

- The six-component harness — context management, memory, tools, control flow, verification, and coordination — is what separates production-grade agents from those that fail silently.

Why the Old Formula No Longer Works

A popular way to think about agent architecture has been:

Agent = LLM + Memory + Planning + Tool Use

That still works for simple deployments. But a more complete picture comes from Agentic Artificial Intelligence (Ehtesham et al., 2026), which proposes a unified taxonomy breaking agents into six dimensions:

Agent = Perception + Brain + Planning + Action + Tool Use + Collaboration

This is a significantly better starting point than the older formula. Each dimension has its own failure modes, its own design decisions, and its own place in the harness. The older formula bundles too much together and hides exactly the problems that bite hardest in production.

A practical adaptation of that taxonomy elevates Memory as a distinct, standalone dimension rather than folding it into the Brain. Memory is its own engineering discipline with its own tooling, its own failure modes, and an implementation surface genuinely independent from the reasoning engine. The adapted framing becomes:

Agent = Perception + Brain + Memory + Planning + Action + Collaboration

Perception is how the agent receives and preprocesses its inputs. Claude Opus 4.6, GPT-5.4, and Gemini 3.1 Pro all handle text, images, audio, and structured data natively. What gets passed into the context window, in what form, and at what level of compression is a harness decision, not a model decision.

Brain is the reasoning engine. Agents today are not single-model systems. Claude Haiku 4.5 handles fast, low-complexity work like extraction and classification. Claude Sonnet 4.6 handles the bulk of reasoning and coding. Claude Opus 4.6 or GPT-5.4 steps in for deep orchestration and high-stakes decisions. The harness decides which model handles which task.
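
As a rough illustration of that routing decision, the sketch below maps task kinds to model tiers. The tier names (`fast`, `balanced`, `deep`), the task kinds, and the `Task` type are all hypothetical; a real harness would map tiers to whatever model identifiers its provider exposes.

```python
from dataclasses import dataclass

# Hypothetical task kinds and tier names, chosen only for illustration.
ROUTES = {
    "extract": "fast",
    "classify": "fast",
    "code": "balanced",
    "reason": "balanced",
    "orchestrate": "deep",
}


@dataclass
class Task:
    kind: str
    high_stakes: bool = False


def route(task: Task) -> str:
    """Return the model tier for a task; high-stakes work always escalates."""
    if task.high_stakes:
        return "deep"
    # Unknown task kinds fall back to the middle tier.
    return ROUTES.get(task.kind, "balanced")
```

Keeping the routing table as data rather than scattered conditionals makes it easy to audit which work goes to which tier.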

Memory has grown into its own engineering discipline.

Planning now comes in two flavors. The ReAct loop reasons and acts at every step, which is flexible but token-heavy. Plan-and-execute separates the thinking from the doing, breaking the task into a dependency graph upfront and executing steps in parallel where possible. The harness decides which pattern fits the task.
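
One way to picture the plan-and-execute side is a dependency graph grouped into batches that could run in parallel. The sketch below uses Python's standard `graphlib`; the step names in `plan` are made up for the example, and actual execution of each step is left out.

```python
from graphlib import TopologicalSorter


def execution_batches(plan: dict[str, set[str]]) -> list[list[str]]:
    """Group steps into batches; every step in a batch has all dependencies met."""
    sorter = TopologicalSorter(plan)  # maps each step to the steps it depends on
    sorter.prepare()
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # steps that can run in parallel now
        batches.append(ready)
        sorter.done(*ready)
    return batches


# Hypothetical plan: two independent fetches, then a comparison, then a report.
plan = {
    "fetch_contract": set(),
    "fetch_policy": set(),
    "compare": {"fetch_contract", "fetch_policy"},
    "report": {"compare"},
}
batches = execution_batches(plan)
```

Here the two fetch steps land in the first batch, while `compare` and `report` each wait for their predecessors.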

Action has moved beyond individual JSON function calls. The dominant pattern today is code-as-action: the agent writes a script that calls multiple tools, handles retries in code, processes the results, and returns a single clean output. This keeps the context window lean and cuts costs significantly on tool-heavy pipelines.

Collaboration is now handled at the protocol level through MCP and A2A rather than baked into individual frameworks.

What the Harness Is Actually Made Of

The agent harness has six distinct components. Each has its own failure modes and its own implementation surface.

Context Management

Context management controls what the model reasons over. Most agent failures are not the model giving a wrong answer; they are the model reasoning over the wrong information because the harness injected too much, too little, or the wrong kind of context. The harness is responsible for selecting what to retrieve, compressing what exceeds the context window, and filtering out noise before it reaches the model.

The four operations the harness should implement are:

- Select: retrieve only what is relevant to the current step. In a RAG pipeline, this means running a semantic search over a knowledge base and injecting the top-ranked chunks, not the entire document store. A legal review agent searching a 500-document repository should receive the 6 most relevant contract clauses, not all 500 documents.

- Compress: when context is long, summarize it rather than truncate it blindly. Anthropic's Agent SDK provides automatic compaction, but for tasks spanning multiple sessions, the harness should also write a structured progress file at the end of each session. This file records what was completed, what is pending, and what the next session should prioritize. Compaction alone does not preserve this kind of structured state.

- Isolate: inputs should be filtered at the tool boundary before they reach the model. A database query returning 10,000 rows should be aggregated to a summary before injection. This is both a cost control and a prompt injection defense. When an agent reads web pages or user-submitted documents, adversarial instructions in that content can redirect the agent's behavior unless the harness sanitizes inputs first.

- Write: externalize information the agent will need later. Scratchpads, progress files, and structured state objects should be written by the harness at each checkpoint, not left to the model to reconstruct from memory.
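
Three of these operations are small enough to sketch directly. The helper names below (`select_top_k`, `summarize_rows`, `write_progress`) and the progress-file fields are assumptions made for illustration, not part of any SDK; relevance scores are assumed to come from a retriever that is not shown.

```python
import json
from pathlib import Path


def select_top_k(scored_chunks: list[tuple[float, str]], k: int = 6) -> list[str]:
    """Select: keep only the k highest-scoring chunks for injection."""
    ranked = sorted(scored_chunks, key=lambda pair: pair[0], reverse=True)
    return [text for _, text in ranked[:k]]


def summarize_rows(rows: list[dict], column: str) -> dict:
    """Isolate: reduce a large query result to a small aggregate."""
    values = [row[column] for row in rows]
    if not values:
        return {"rows": 0}
    return {
        "rows": len(values),
        "min": min(values),
        "max": max(values),
        "mean": sum(values) / len(values),
    }


def write_progress(path: Path, completed: list[str], pending: list[str],
                   next_priority: str) -> None:
    """Write: persist structured state so the next session can resume."""
    state = {"completed": completed, "pending": pending,
             "next_priority": next_priority}
    path.write_text(json.dumps(state, indent=2), encoding="utf-8")
```

Compress is omitted because summarization normally needs a model call; the progress file covers the structured state that compaction alone would lose.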

Memory

Memory should be implemented as a layered system, not a single database. Each layer handles a different scope.

Working memory is the active context window. It is managed by the context engineering operations above.

External semantic memory uses a vector database to store facts extracted from past interactions and retrieve them when relevant. Mem0 is an open-source library that acts as a dedicated memory layer sitting between an agent and its underlying language model. Mem0 converts conversations into atomic memory facts, stores them in a vector store (Qdrant, pgvector, or Chroma), and retrieves them via similarity search at the start of each reasoning cycle.
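
To make the store-and-retrieve cycle concrete, here is a toy in-memory version. It is not Mem0's API: `FactStore` is a hypothetical class, and the bag-of-words `embed` function is a deliberately crude stand-in for a real embedding model and vector store.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


class FactStore:
    """Hypothetical in-memory fact store with similarity search."""

    def __init__(self) -> None:
        self._facts: list[tuple[str, Counter]] = []

    def add(self, fact: str) -> None:
        self._facts.append((fact, embed(fact)))

    def search(self, query: str, k: int = 2) -> list[str]:
        q = embed(query)
        ranked = sorted(self._facts, key=lambda item: cosine(q, item[1]),
                        reverse=True)
        return [fact for fact, _ in ranked[:k]]
```

In a harness, `search` would run at the start of each reasoning cycle and its results would be injected into the context window.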

Persistent cross-session memory involves storing information as files in a managed directory, reading and writing those files across entirely separate conversations. The agent reads, writes, and updates these files through tool calls. This is the right layer for tasks that run over multiple days.
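
A minimal sketch of such a file-backed layer follows. The `FileMemory` class and its method names are hypothetical; the one design choice worth noting is that every path is resolved and checked against the managed directory, so a tool call cannot read or write outside it.

```python
from pathlib import Path


class FileMemory:
    """Hypothetical file-backed memory confined to one managed directory."""

    def __init__(self, root: Path) -> None:
        self.root = root.resolve()
        self.root.mkdir(parents=True, exist_ok=True)

    def _resolve(self, name: str) -> Path:
        path = (self.root / name).resolve()
        # Reject names such as "../secrets" that escape the managed directory.
        if not path.is_relative_to(self.root):
            raise ValueError(f"path escapes memory directory: {name}")
        return path

    def write(self, name: str, content: str) -> None:
        path = self._resolve(name)
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_text(content, encoding="utf-8")

    def read(self, name: str) -> str:
        path = self._resolve(name)
        # A missing file reads as empty so a first session does not fail.
        return path.read_text(encoding="utf-8") if path.exists() else ""
```

The `read` and `write` methods are the operations a harness would expose to the agent as tools.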

Tools

Tools give the agent the ability to act in the world rather than just produce text. The implementation pattern has shifted from individual JSON function calls to code-as-action: the agent writes a script that orchestrates multiple tool calls, handles retries, and returns a single clean output.
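
The sketch below shows roughly what such an agent-written script could look like. `fetch_orders` is a hypothetical tool stubbed with fixed data, and `with_retry` is an illustrative helper rather than a library function.

```python
import time


def with_retry(fn, attempts: int = 3, delay: float = 0.0):
    """Call fn until it succeeds or attempts run out; re-raise the last error."""
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except Exception:
            if attempt == attempts:
                raise
            time.sleep(delay)


def fetch_orders(region: str) -> list[dict]:
    """Hypothetical tool, stubbed with fixed data for the example."""
    return [{"region": region, "total": 120.0}, {"region": region, "total": 80.0}]


def agent_script(regions: list[str]) -> dict:
    """Orchestrate several tool calls and return one compact result."""
    totals = {}
    for region in regions:
        orders = with_retry(lambda: fetch_orders(region))
        totals[region] = sum(order["total"] for order in orders)
    # Only this summary reaches the context window, not the raw rows.
    return {"regions": len(totals), "grand_total": sum(totals.values()),
            "by_region": totals}
```

The intermediate rows never enter the model's context; the model sees only the final dictionary.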

Control Flow

Control flow governs the reasoning loop: when to continue, when to stop, and what to do when something goes wrong. The two dominant patterns are ReAct (reason and act at every step) and plan-and-execute (separate thinking from doing).
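
Whichever pattern is used, the harness still needs explicit stop conditions. A minimal sketch, assuming a hypothetical `step` callable that takes and returns a state dictionary and sets `done` when the task is finished:

```python
from typing import Callable


def run_loop(step: Callable[[dict], dict], state: dict,
             max_steps: int = 10, max_errors: int = 2) -> dict:
    """Run step repeatedly with a step budget and an error budget."""
    errors = 0
    for i in range(max_steps):
        try:
            state = step(state)
        except Exception as exc:
            errors += 1
            if errors > max_errors:
                # Too many failures: stop instead of looping forever.
                return {**state, "status": "failed", "error": str(exc)}
            continue  # retry from the last good state
        if state.get("done"):
            return {**state, "status": "completed", "steps": i + 1}
    return {**state, "status": "step_budget_exhausted"}
```

Every exit path returns an explicit status, so a run that stops early is reported rather than failing silently.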

Verification

Verification independently checks whether the agent's output actually meets the required standard before the task is closed. This is a separate step from the reasoning loop, not embedded within it.
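
As one possible shape for that separate step, the sketch below checks an agent's output against a simple contract before the task is closed. The `verify_output` function and the required keys are assumptions for illustration; real checks would be task-specific, such as running tests or validating against a schema.

```python
import json


def verify_output(raw: str, required_keys: set[str]) -> list[str]:
    """Return a list of problems; an empty list means the output passes."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]
    problems = [f"missing key: {key}"
                for key in sorted(required_keys - set(data))]
    # Flag required fields that are present but empty.
    problems += [f"empty value: {key}"
                 for key in sorted(required_keys & set(data))
                 if data[key] in ("", None)]
    return problems
```

Because the function runs outside the reasoning loop and returns concrete problems, the harness can decide whether to retry, escalate, or reject.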

Coordination

Coordination manages how multiple agents communicate and divide work. At the protocol level, this is handled through MCP (Model Context Protocol) and A2A (Agent-to-Agent), rather than being baked into individual frameworks.
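
For a sense of what protocol-level means here, MCP messages are JSON-RPC 2.0, and a tool invocation is a `tools/call` request. The sketch below only builds such a request as a string; the tool name `search_contracts` and its arguments are hypothetical, and transport, initialization, and response handling are left out.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry an id to match responses


def mcp_tool_call(name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool name and arguments, for illustration only.
message = mcp_tool_call("search_contracts", {"query": "indemnity"})
```

Because the message format is framework-independent, any client that speaks the protocol can call the same tool server.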

How This Connects to the Current Agent Landscape

The timing of this article is notable. On December 31, 2026, industry leaders predicted 2026 as the breakthrough year for AI agents across all domains. On December 1, 2026, AI agents crossed a critical reliability threshold, fundamentally transforming programming capabilities.

In practice, the harness layer is what made that reliability threshold possible. Claude Code — Anthropic's agentic coding tool — uses Claude Sonnet 4.6 and Claude Opus 4.6, and relies on MCP for tool connectivity. On April 23, 2026, Anthropic published a post-mortem on Claude Code quality issues, acknowledging that even with strong models, the harness layer requires careful engineering.

The same pattern applies across the ecosystem. GPT-5.5 tops benchmarks but costs 2x the API price and still hallucinates, as we reported on April 25, 2026. The harness layer — context management, verification, and control flow — is what mitigates those model-level limitations in production.

Frequently Asked Questions

What is agent harnessing?

Agent harnessing is the non-model infrastructure layer that gives an AI agent persistent memory, tool connectivity, controlled reasoning loops, verification, and the ability to coordinate with other agents. The model decides what to do; the harness determines whether it actually does it reliably at scale.

What are the six components of an agent harness?

The six components are: context management (controlling what the model reasons over), memory (continuity across steps and sessions), tools (ability to act in the world), control flow (governing the reasoning loop), verification (independent output checking), and coordination (multi-agent communication).

How does memory work in production agent systems?

Memory is implemented as a layered system. Working memory is the active context window. External semantic memory uses vector databases like Qdrant or Chroma to store and retrieve facts from past interactions. Persistent cross-session memory stores information as files in a managed directory that agents read and update through tool calls.

Why is the harness more important than the model for agent reliability?

All builders have access to the same frontier models through the same APIs. What separates production-grade agents from those that fail silently is the infrastructure surrounding the model — context management prevents reasoning over wrong information, verification catches errors before they propagate, and control flow handles failures gracefully.

gentic.news Analysis

This article lands at an inflection point for the agent ecosystem. The claim that "the model is not the hard part" would have been controversial 18 months ago, but it aligns with what we've observed across hundreds of agent deployments. The knowledge graph shows that AI agents crossed a critical reliability threshold on December 1, 2026 — a milestone that was only possible because the harness layer matured alongside the models.

The six-component taxonomy is useful precisely because it maps to real failure modes. When an agent goes wrong in production, knowing which of the six components failed tells you exactly where in the harness to look. This is not theoretical: Claude Code's post-mortem on April 23, 2026, documented quality issues that trace directly to context management and verification gaps.

The emphasis on memory as a standalone engineering discipline — rather than a sub-feature of the reasoning engine — is the right call. Mem0 and similar tools are becoming essential infrastructure, and the layered approach (working memory, semantic memory, persistent storage) mirrors how production systems actually handle state.

What's missing from this picture is cost. The harness layer adds latency and API calls at every step. Context management, memory retrieval, and verification loops all consume tokens. As GPT-5.5 demonstrated with its 2x API price increase, the marginal cost of reliability is real. The next frontier for agent infrastructure will be optimizing the harness for cost efficiency, not just reliability.