In a notable result at the intersection of artificial intelligence and high-performance computing, ByteDance has unveiled CUDA Agent, a large-scale agentic reinforcement learning (RL) system built specifically to generate high-performance CUDA kernels. The work targets one of the most specialized areas of software engineering to automate: writing optimized code for NVIDIA GPUs.

What CUDA Agent Actually Does

CUDA Agent is an AI system that generates optimized CUDA kernels, the functions that run in parallel on NVIDIA GPUs and form the building blocks of GPU-accelerated applications. These kernels are notoriously difficult to write and optimize, requiring deep expertise in parallel computing, memory hierarchies, and GPU architecture. Traditionally, this has been the domain of specialized engineers who spend years mastering the intricacies of GPU programming.
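To make "memory hierarchies" concrete, here is tiling, the classic optimization behind fast GPU matrix multiplication, written in plain Python so it runs anywhere. It is an illustrative CPU analogue, not CUDA Agent output: the `tile` parameter stands in for a thread-block tile size, and the comments mark where a real CUDA kernel would stage data in shared memory.

```python
from typing import List

Matrix = List[List[float]]

def matmul_tiled(a: Matrix, b: Matrix, tile: int = 2) -> Matrix:
    """Compute C = A @ B one output tile at a time.

    On a GPU, each (i0, j0) output tile would map to a thread block, and the
    A and B sub-tiles visited in the k0 loop would be copied into fast shared
    memory so each value fetched from slow global memory is reused many times.
    """
    n, k, m = len(a), len(b), len(b[0])
    assert all(len(row) == k for row in a), "inner dimensions must match"
    c = [[0.0] * m for _ in range(n)]
    for i0 in range(0, n, tile):            # rows of output tiles
        for j0 in range(0, m, tile):        # columns of output tiles
            for k0 in range(0, k, tile):    # walk the shared dimension tile by tile
                for i in range(i0, min(i0 + tile, n)):
                    for j in range(j0, min(j0 + tile, m)):
                        acc = c[i][j]
                        for kk in range(k0, min(k0 + tile, k)):
                            acc += a[i][kk] * b[kk][j]
                        c[i][j] = acc
    return c

a = [[1, 2, 3], [4, 5, 6]]
b = [[7, 8], [9, 10], [11, 12]]
print(matmul_tiled(a, b))  # [[58.0, 64.0], [139.0, 154.0]]
```

The arithmetic is identical to a naive triple loop; only the order of memory accesses changes. Transformations of this kind, which preserve results while reshaping data movement, are what a kernel optimizer has to search over.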

The system is trained with agentic RL: the model acts as an agent that writes a kernel, receives feedback from its environment (in a setup like this, typically whether the code compiles, whether its output matches a reference, and how fast it runs), and is rewarded for better-performing code. Learning through this kind of trial and error lets the system explore far more candidate optimizations than a human programmer could work through systematically.
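The trial-and-error loop can be sketched as a multi-turn episode in which each attempt sees the feedback from earlier ones. This is a minimal sketch under stated assumptions: `propose_kernel` (the policy), `evaluate` (the compile/test/timing harness) and the `Feedback` fields are hypothetical names, not CUDA Agent's actual interface, and the toy usage swaps in lookup tables for a real model and GPU.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

@dataclass
class Feedback:
    compiled: bool   # did the candidate build?
    correct: bool    # did it match the reference output?
    speedup: float   # baseline_time / candidate_time; 0.0 if not measured

def agent_episode(
    propose_kernel: Callable[[str, List[Feedback]], str],
    evaluate: Callable[[str], Feedback],
    task: str,
    max_turns: int = 4,
) -> Tuple[Optional[str], List[Feedback]]:
    """One multi-turn attempt: propose a kernel, evaluate it, feed the result back.

    Returns the fastest correct kernel seen (or None) plus the full feedback
    history, which an RL trainer would turn into rewards.
    """
    history: List[Feedback] = []
    best_src: Optional[str] = None
    best_speedup = 0.0
    for _ in range(max_turns):
        src = propose_kernel(task, history)  # the policy conditions on earlier feedback
        fb = evaluate(src)                   # compile, check correctness, time it
        history.append(fb)
        if fb.compiled and fb.correct and fb.speedup > best_speedup:
            best_src, best_speedup = src, fb.speedup
    return best_src, history

# Toy usage with stand-in functions: the "policy" just names its n-th attempt,
# and the "harness" looks results up in a table of invented outcomes.
outcomes = {
    "v0": Feedback(compiled=False, correct=False, speedup=0.0),
    "v1": Feedback(compiled=True, correct=True, speedup=1.3),
    "v2": Feedback(compiled=True, correct=False, speedup=0.0),
    "v3": Feedback(compiled=True, correct=True, speedup=2.1),
}
best, hist = agent_episode(lambda task, h: f"v{len(h)}", outcomes.__getitem__, task="softmax")
print(best, len(hist))  # v3 4
```

Keeping the whole history, not just the best kernel, matters for training: failed and incorrect attempts are exactly the signal the agent learns to avoid.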

Performance Benchmarks: Setting New Standards

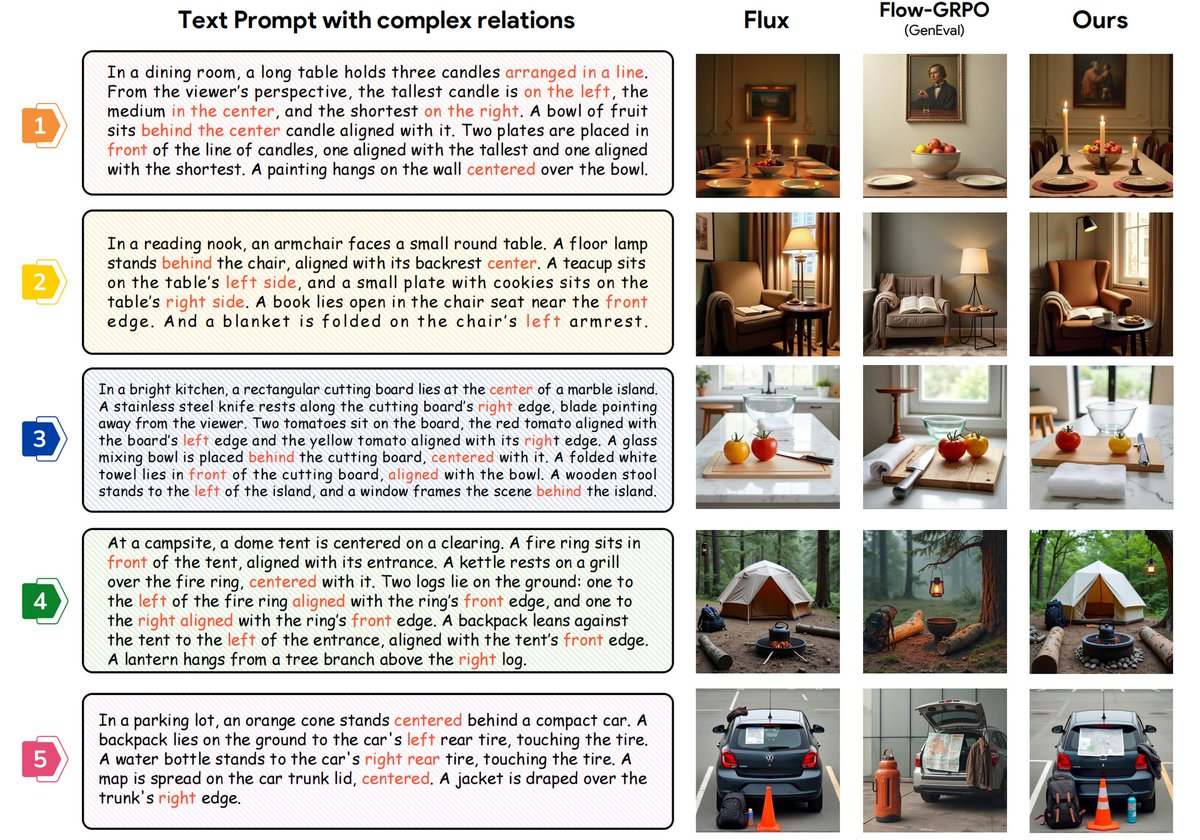

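According to the announcement shared via HuggingPapers, CUDA Agent posts strong results on KernelBench, a benchmark in which models must write GPU kernels that replace PyTorch reference programs. Its tasks are split into Level 1 (single operators), Level 2 (short sequences of operators, where fusing them pays off) and Level 3 (full model architectures):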

- Faster than torch.compile on 100% of Level-1 and Level-2 tasks

- Faster than torch.compile on 92% of Level-3 tasks

- Roughly 40% ahead of Claude Opus 4.5 and Gemini 3 Pro in the hardest (Level-3) setting
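Headline figures like these are most naturally read as KernelBench-style rates rather than speedup factors. KernelBench's fast_p metric counts the share of tasks whose generated kernel is both correct and more than p times faster than a baseline (p = 1 means simply "faster"). A minimal re-implementation of that definition follows; the per-task results in the usage are invented purely to show the arithmetic.

```python
from typing import Sequence

def fast_p(correct: Sequence[bool], speedups: Sequence[float], p: float = 1.0) -> float:
    """Share of tasks whose kernel is correct AND more than p times faster.

    speedups[i] = baseline_time / generated_time for task i, so p = 1.0
    counts any correct kernel that beats the baseline at all.
    """
    if len(correct) != len(speedups):
        raise ValueError("correct and speedups must have the same length")
    if not correct:
        return 0.0
    hits = sum(1 for ok, s in zip(correct, speedups) if ok and s > p)
    return hits / len(correct)

# Invented results for four tasks, purely to show the arithmetic.
correct = [True, True, False, True]
speedups = [1.5, 0.9, 3.0, 2.0]
print(fast_p(correct, speedups))         # 0.5  (tasks 1 and 4)
print(fast_p(correct, speedups, p=1.8))  # 0.25 (task 4 only)
```

Note that the third task's 3.0x speedup earns nothing because its output is wrong. Under this reading, a rate of 100% means every task in the split was solved correctly and beat the baseline, not that kernels ran twice as fast.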

If they hold up under independent evaluation, these results mean CUDA Agent beats both a mature compiler baseline and two leading frontier models. The size of its lead over Claude Opus 4.5 and Gemini 3 Pro on the hardest tasks suggests that, for narrow and measurable problems such as kernel optimization, a domain-specific system trained with targeted RL can outperform general-purpose models.

Technical Architecture and Innovation

While the initial announcement doesn't provide exhaustive technical details, we can infer several key aspects of CUDA Agent's architecture based on its description as a "large-scale agentic RL system":

Reinforcement Learning Framework: The system likely learns from the outcomes of its own generated code, improving over time through rewards tied to correctness and measured kernel performance.
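What such a reward might look like is sketched below. The announcement does not describe CUDA Agent's reward, so this shaping, the function name `kernel_reward`, and the `max_bonus` cap are all hypothetical. The design choices are common ones: wrong code earns nothing however fast it is, correct-but-slow code still beats wrong code, and speedup is scored on a log scale so each doubling is worth the same.

```python
import math

def kernel_reward(compiled: bool, correct: bool, speedup: float, max_bonus: float = 3.0) -> float:
    """Hypothetical reward for one generated kernel (not CUDA Agent's actual scheme).

    - fails to compile or crashes: -1.0
    - runs but produces wrong output: 0.0, so fast-but-wrong code earns nothing
    - correct: 1.0, plus log2(speedup) when faster than the baseline,
      with that bonus capped at max_bonus (a 2x speedup earns 2.0 in total)
    """
    if not compiled:
        return -1.0
    if not correct:
        return 0.0
    if speedup <= 0:
        raise ValueError("a correct kernel must have a positive measured speedup")
    return 1.0 + min(max_bonus, max(0.0, math.log2(speedup)))

# (compiled, correct, speedup) -> -1.0, 0.0, 1.0, 2.0, 4.0
for case in [(False, False, 0.0), (True, False, 4.0), (True, True, 0.5), (True, True, 2.0), (True, True, 64.0)]:
    print(case, kernel_reward(*case))
```

The cap keeps a single outlier speedup from dominating a training batch, a real risk when a few tasks admit enormous gains.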

Domain-Specific Knowledge: Unlike general-purpose coding assistants, CUDA Agent appears to have been trained specifically on CUDA optimization patterns, GPU architecture details, and performance characteristics.

Search and Exploration Capabilities: The agentic nature suggests the system can explore multiple optimization pathways simultaneously, potentially discovering novel optimization strategies that human programmers might overlook.
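One standard way to keep several optimization pathways alive at once is beam search, sketched here as an assumption, not as CUDA Agent's documented method. The optimization names and their multiplicative "gains" are made-up toy values; a real agent would score a plan by compiling and timing the resulting kernel, and real optimizations interact far less predictably than this product model.

```python
import heapq
import math
from typing import Callable, Iterable, List, Tuple

Plan = Tuple[str, ...]  # a set of applied optimizations, kept sorted

def beam_search(
    moves: Iterable[str],
    score: Callable[[Plan], float],
    beam_width: int = 2,
    depth: int = 3,
) -> Tuple[Plan, float]:
    """Keep the `beam_width` best partial plans alive at each step instead of
    committing greedily to a single optimization path."""
    moves = list(moves)
    beam: List[Plan] = [()]
    best_plan: Plan = ()
    best_score = score(best_plan)
    for _ in range(depth):
        # Extend every surviving plan by one unused optimization; sorting the
        # tuple merges plans that differ only in the order of their moves.
        candidates = {
            tuple(sorted(plan + (m,))) for plan in beam for m in moves if m not in plan
        }
        if not candidates:
            break
        beam = heapq.nlargest(beam_width, candidates, key=score)
        if score(beam[0]) > best_score:
            best_plan, best_score = beam[0], score(beam[0])
    return best_plan, best_score

# Toy, made-up speedup factors per optimization (not measurements).
GAINS = {"tile_shared_mem": 1.8, "fuse_epilogue": 1.5, "vectorize_loads": 1.3, "unroll": 1.1}

def toy_score(plan: Plan) -> float:
    return math.prod(GAINS[m] for m in plan)

plan, est = beam_search(GAINS, toy_score)
print(plan, round(est, 2))  # ('fuse_epilogue', 'tile_shared_mem', 'vectorize_loads') 3.51
```

`depth` acts as a budget on how many transformations are combined, and `beam_width` trades exploration against the cost of evaluating candidates, which on real hardware means compiling and benchmarking each one.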

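Integration with Existing Toolchains: KernelBench tasks are written as PyTorch programs, and torch.compile, PyTorch's built-in compiler, serves as the strong baseline to beat. That framing suggests CUDA Agent is meant to complement PyTorch compilation pipelines rather than replace them, although the announcement does not say how it integrates.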

Implications for AI and High-Performance Computing

The development of CUDA Agent has far-reaching implications across multiple domains:

For AI Research and Development

CUDA Agent represents a compelling case for specialized AI systems over general-purpose models for certain technical domains. While large language models have shown impressive capabilities across broad ranges of tasks, this development suggests that targeted systems with domain-specific training can achieve superior results in their areas of specialization.

The results also strengthen the case for agentic RL on complex optimization problems, potentially opening doors for similar systems in other computationally intensive domains such as database query optimization, compiler design, or circuit layout.

For GPU Programming and Performance Engineering

For organizations relying on GPU acceleration—including AI research labs, scientific computing facilities, and companies developing graphics or simulation software—CUDA Agent could dramatically reduce development time and improve performance of critical code.

The system could serve as both a productivity tool for experienced GPU programmers and a training aid for those learning CUDA optimization techniques by revealing optimization patterns and strategies.

For the Broader Software Development Ecosystem

CUDA Agent points toward a future where AI-assisted code optimization becomes standard practice for performance-critical applications. As similar systems emerge for other hardware platforms and programming paradigms, we may see a fundamental shift in how performance engineering is approached, with AI systems handling the low-level optimization details while human engineers focus on higher-level architecture and algorithms.

Challenges and Limitations

While the announced results are impressive, several questions remain:

Generalization Capability: How well does CUDA Agent perform on novel kernel types or GPU architectures not seen during training?

Integration Complexity: What is required to integrate CUDA Agent into existing development workflows and CI/CD pipelines?

Resource Requirements: As a "large-scale" system, what computational resources are needed for training and inference?

Interpretability and Control: Can engineers understand and modify the optimization strategies proposed by the AI system, or is it a black box?

The Competitive Landscape

ByteDance's entry into this space with CUDA Agent places it alongside other major technology companies investing in AI for code generation and optimization; NVIDIA itself has been working on similar technologies. The choice of baseline is also telling: torch.compile is PyTorch's built-in compiler (PyTorch originated at Meta), and its TorchInductor backend automatically generates fused GPU kernels. Beating it means improving on optimized code that PyTorch users already get without any manual tuning.

For vendors of general-purpose coding assistants, the roughly 40% margin over Claude Opus 4.5 and Gemini 3 Pro is a warning sign: in narrow, benchmarkable domains, specialized systems may pull ahead of even the strongest frontier models.

Looking Forward: The Future of AI-Assisted Optimization

CUDA Agent is more than another AI coding tool: it offers a glimpse of a future in which AI systems become essential partners in performance engineering. As these systems mature, we can anticipate:

- Cross-platform optimization agents for different hardware architectures (AMD GPUs, custom AI accelerators, etc.)

- Multi-level optimization systems that work across the entire software stack from algorithms to assembly code

- Collaborative optimization environments where human engineers and AI systems work together interactively

- Democratization of high-performance programming, making GPU optimization accessible to a broader range of developers

ByteDance's CUDA Agent sets a new bar for AI-assisted code optimization on KernelBench. If the system becomes more widely available and its capabilities continue to develop, it could change how engineers approach one of the most challenging aspects of modern computing: extracting maximum performance from increasingly complex hardware architectures.

Source: HuggingPapers on X