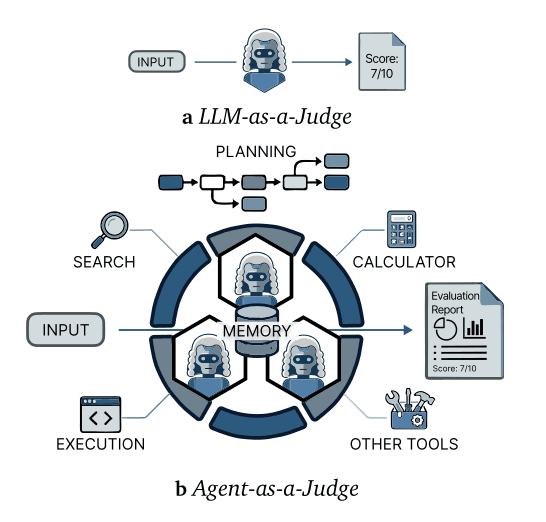

A new study posted to arXiv tackles a fundamental question in AI evaluation: how many LLM-based agent judges do you need, and can you trust them? The paper, "Logarithmic Scores, Power-Law Discoveries: Disentangling Measurement from Coverage in Agent-Based Evaluation," finds that persona-conditioned agents can match human raters but reveals a critical dissociation between scoring consistency and issue discovery.

What the Researchers Found: A Score-Coverage Gap

Across 960 evaluation sessions spanning 15 conversational AI tasks, the researchers ran a Turing-style validation. They found that panels of LLM agents, conditioned with structured Big Five personality traits (e.g., high conscientiousness, low agreeableness), produced evaluations statistically indistinguishable from those of human raters. This supports the core premise of using agents as scalable judges.

The more significant finding is a score-coverage dissociation. As you add more agent judges to a panel:

- Quality Scores (e.g., fluency, helpfulness) improve logarithmically, saturating quickly. The study indicates scores stabilize with just 5-7 agents.

- Unique Issue Discoveries (e.g., safety violations, factual errors) follow a sublinear power law, continuing to grow with larger panels but with heavy diminishing returns. Discovery saturates roughly twice as slowly as scoring.

This means a small panel is sufficient for a stable quality score, but a much larger panel is needed for confidence that most of the possible problems have been found. The finding has major implications for benchmarking and red-teaming.
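The contrast between the two scaling regimes can be illustrated with a small sketch. The functional forms follow the article's description (logarithmic for scores, sublinear power law for discoveries), but the function names and every parameter value below (`a`, `b`, `c`, `beta`) are made up for illustration and are not taken from the paper.

```python
import math

def score_stability(n, a=0.6, b=0.15):
    """Hypothetical logarithmic curve: a + b * ln(n). Parameters are illustrative."""
    return a + b * math.log(n)

def unique_issues(n, c=4.0, beta=0.6):
    """Hypothetical sublinear power law: c * n**beta with 0 < beta < 1."""
    return c * n ** beta

def relative_gain(curve, n):
    """Fractional improvement from adding one more judge to a panel of size n."""
    return (curve(n + 1) - curve(n)) / curve(n)

# Compare how much one extra judge still buys under each curve.
for n in (1, 3, 5, 7, 10, 20):
    score_gain = relative_gain(score_stability, n)
    issue_gain = relative_gain(unique_issues, n)
    print(f"n={n:2d}  score gain={score_gain:.3f}  issue gain={issue_gain:.3f}")
```

Under these assumed parameters, the relative gain from an extra judge shrinks faster for the score curve than for the discovery curve, which is the qualitative pattern the study reports.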

How It Works: Personality as a Discovery Engine

The mechanism hinges on ensemble diversity driven by structured persona conditioning. The researchers didn't just prompt agents with different roles; they conditioned them on specific combinations of Big Five personality dimensions (Openness, Conscientiousness, Extraversion, Agreeableness, Neuroticism).

- Diverse Probes: This conditioning causes agents to probe different quality dimensions. A low-agreeableness, high-conscientiousness agent acts as an adversarial expert, pushing discovery into the "long tail" of rare issues.

- Power Law Finding Space: The researchers hypothesize that the space of possible issues in a model's responses follows a power-law distribution. Common, critical issues are found quickly by small panels (the "head"), while uncovering the long tail of corner cases requires disproportionately more effort.

- Ablation Confirmation: A controlled ablation study confirmed that simple prompting variations without structured persona conditioning failed to produce the same beneficial scaling properties. The structured psychological framework is key to generating the necessary diversity.
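As a rough illustration of what structured persona conditioning could look like in practice, the sketch below enumerates low/high combinations of the Big Five traits and renders each as an evaluator prompt. The two-level scheme, the helper names `build_personas` and `persona_prompt`, and the prompt wording are all assumptions; the article does not describe the paper's actual prompts or trait levels.

```python
from itertools import product

TRAITS = ("openness", "conscientiousness", "extraversion",
          "agreeableness", "neuroticism")
LEVELS = ("low", "high")  # assumed two-level scheme, not from the paper

def build_personas():
    """Enumerate every low/high combination of the five traits (2**5 = 32)."""
    return [dict(zip(TRAITS, combo))
            for combo in product(LEVELS, repeat=len(TRAITS))]

def persona_prompt(persona):
    """Render a persona as a hypothetical system-prompt string."""
    described = ", ".join(f"{level} {trait}" for trait, level in persona.items())
    return (f"You are an evaluator with {described}. "
            "Score the response and list any issues you find.")

personas = build_personas()
print(len(personas))
print(persona_prompt(personas[0]))
```

A panel would then be a subset of these personas, for example one chosen to include the low-agreeableness, high-conscientiousness profile the article singles out.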

The paper draws an analogy to species accumulation curves in ecology, where discovering common species is easy, but cataloging rare ones requires extensive sampling.
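The species-accumulation analogy can be simulated in a few lines. This is a toy model under stated assumptions, not the paper's method: issues are given Zipf-like weights (issue k has weight 1/k), each simulated judge reports a fixed number of weighted random draws, and the names `zipf_weights` and `accumulation_curve` along with all default values are hypothetical.

```python
import random

def zipf_weights(num_issues, exponent=1.0):
    """Unnormalized weights: issue k is noticed with weight 1 / k**exponent."""
    return [1.0 / (k ** exponent) for k in range(1, num_issues + 1)]

def accumulation_curve(max_judges, num_issues=200, finds_per_judge=10, seed=0):
    """Return cumulative unique issues found after 1..max_judges judges."""
    rng = random.Random(seed)
    weights = zipf_weights(num_issues)
    issue_ids = list(range(num_issues))
    seen = set()
    curve = []
    for _ in range(max_judges):
        # Each simulated judge reports a weighted sample (with replacement).
        seen.update(rng.choices(issue_ids, weights=weights, k=finds_per_judge))
        curve.append(len(seen))
    return curve

curve = accumulation_curve(20)
print(curve)
```

Because common issues are drawn repeatedly, the count of unique issues rises quickly at first and then more slowly as only rare, long-tail issues remain to be found.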

Key Implications for AI Evaluation

- Benchmarking Cost: For organizations running frequent model evaluations, this research suggests a panel of ~5-7 persona-conditioned agents may be sufficient for reliable scoring, potentially cutting evaluation cost and time.

- Safety and Red-Teaming: For high-stakes safety evaluations or red-teaming, where discovering rare failure modes is critical, the study argues for much larger, diverse panels (10+ agents) or iterative rounds of testing with varied personas.

- Evaluation Design: It provides a quantitative framework for choosing panel size based on the goal (score stability vs. issue coverage) and warns that treating score saturation as a proxy for comprehensive evaluation is flawed.
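One way to act on the panel-size guidance above is a simple stopping rule: keep adding judges until the metric you care about stops changing by more than a chosen tolerance. The sketch below is an assumed heuristic, not a procedure from the paper; the function name `smallest_stable_panel`, the 2% tolerance, and both data series are invented for illustration.

```python
def smallest_stable_panel(curve, tolerance):
    """Smallest panel size n whose step to n + 1 judges changes the metric
    by less than `tolerance` (relative). curve[i] is the value with i + 1 judges."""
    for n in range(1, len(curve)):
        prev, nxt = curve[n - 1], curve[n]
        if prev > 0 and abs(nxt - prev) / prev < tolerance:
            return n
    return None  # never stabilized within the observed range

# Invented example data: mean quality score and cumulative unique issues
# for panels of 1..8 judges.
mean_scores = [3.60, 3.95, 4.10, 4.18, 4.22, 4.24, 4.25, 4.25]
unique_issues_found = [6, 10, 13, 16, 18, 20, 22, 23]

print(smallest_stable_panel(mean_scores, tolerance=0.02))
print(smallest_stable_panel(unique_issues_found, tolerance=0.02))
```

Applying the same rule to both series makes the article's warning concrete: a panel can look finished by the score criterion while the discovery curve is still climbing.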

gentic.news Analysis

This study arrives amid a surge of arXiv preprints focusing on the mechanics and pitfalls of AI evaluation, a trend reflected in our knowledge graph showing arXiv appeared in 43 articles this week alone. It directly engages with a core tension in modern AI: the need for scalable, automated evaluation that doesn't sacrifice depth or get gamed. The finding of a score-coverage gap is a critical caution for anyone interpreting benchmark results, especially as agentic evaluation becomes more common.

The research connects to several recent threads we've covered. First, it complements last week's study revealing vulnerabilities of RAG systems to evaluation gaming (arXiv, 2026-03-27), highlighting that how you evaluate is as important as what you evaluate. Second, it provides a methodological anchor for the growing field of agent psychometrics, covered in our April 2nd article on how predictable agent traits can be. Here, those traits are not just predicted but engineered to create effective evaluation ensembles.

Furthermore, the use of structured psychological frameworks (Big Five) to generate diversity is a sophisticated step beyond simple prompt engineering. It suggests future evaluation systems may explicitly model reviewer psychology to optimize for coverage, moving towards a more rigorous science of AI assessment. As models themselves become more agentic, as seen in benchmarks like the 'Connections' game proposed for social intelligence (arXiv, 2026-03-31), the need for robust, scalable evaluation of these agents becomes paramount. This paper provides both a validation of the approach and a crucial map of its scaling limits.

Frequently Asked Questions

How many AI agent judges do I need for evaluation?

According to this study, it depends on your goal. For a stable, reliable quality score (like fluency or helpfulness), a panel of 5-7 persona-conditioned agents may be sufficient, as scores saturate logarithmically. For comprehensive issue discovery (like finding safety flaws or factual errors), you likely need a panel of 10 or more agents, as unique discoveries follow a slower power law and continue to accumulate with larger panel sizes.

What is "persona conditioning" for AI agents?

Persona conditioning goes beyond simple role-playing prompts (e.g., "You are a helpful teacher"). In this study, it involves systematically conditioning the LLM agent's behavior based on a psychological framework, specifically the Big Five personality traits (Openness, Conscientiousness, Extraversion, Agreeableness, Neuroticism). This structured approach creates more diverse and predictable probing behaviors, which is essential for the agent to act as an effective and varied evaluator.

Can AI agents really replace human evaluators?

This study's Turing-style validation suggests that, for the conversational AI tasks tested, evaluations from panels of persona-conditioned AI agents were statistically indistinguishable from those of human raters. This indicates they can be a valid and scalable proxy for human evaluation for scoring purposes. However, the score-coverage gap suggests that humans may still be valuable for discovering novel, edge-case failures that would otherwise require very large AI panels to uncover.

What is the "power law distribution of the finding space"?

The researchers hypothesize that the possible issues or findings in an AI model's responses are not evenly distributed. Instead, a few common, critical issues make up the majority of what you'll find (the "head" of the distribution), while a long "tail" consists of many rare, corner-case issues. This is analogous to a power law seen in other domains, like word frequency or city sizes. It explains why discovering the first 80% of problems is relatively easy, but finding the last 20% requires disproportionately more effort and diverse evaluators.