A significant controversy has erupted in the AI community over allegations that Chinese AI company DeepSeek scraped approximately 150,000 private conversations from Anthropic's Claude AI assistant for use as training data. The accusation was first highlighted by developer Peter O'Mallet and amplified on social media. It has prompted a dramatic response: O'Mallet has publicly released 155,000 of his own Claude Code messages, in what appears to be both an act of protest and a demonstration of how much data may be at stake.

The Allegations and Response

According to the circulating reports, DeepSeek, a prominent Chinese AI research company known for its open-source language models, is alleged to have systematically collected private user interactions with Claude, Anthropic's conversational AI. The exact method of this alleged collection remains unclear, but the reported scale points to automated collection rather than manual copying.

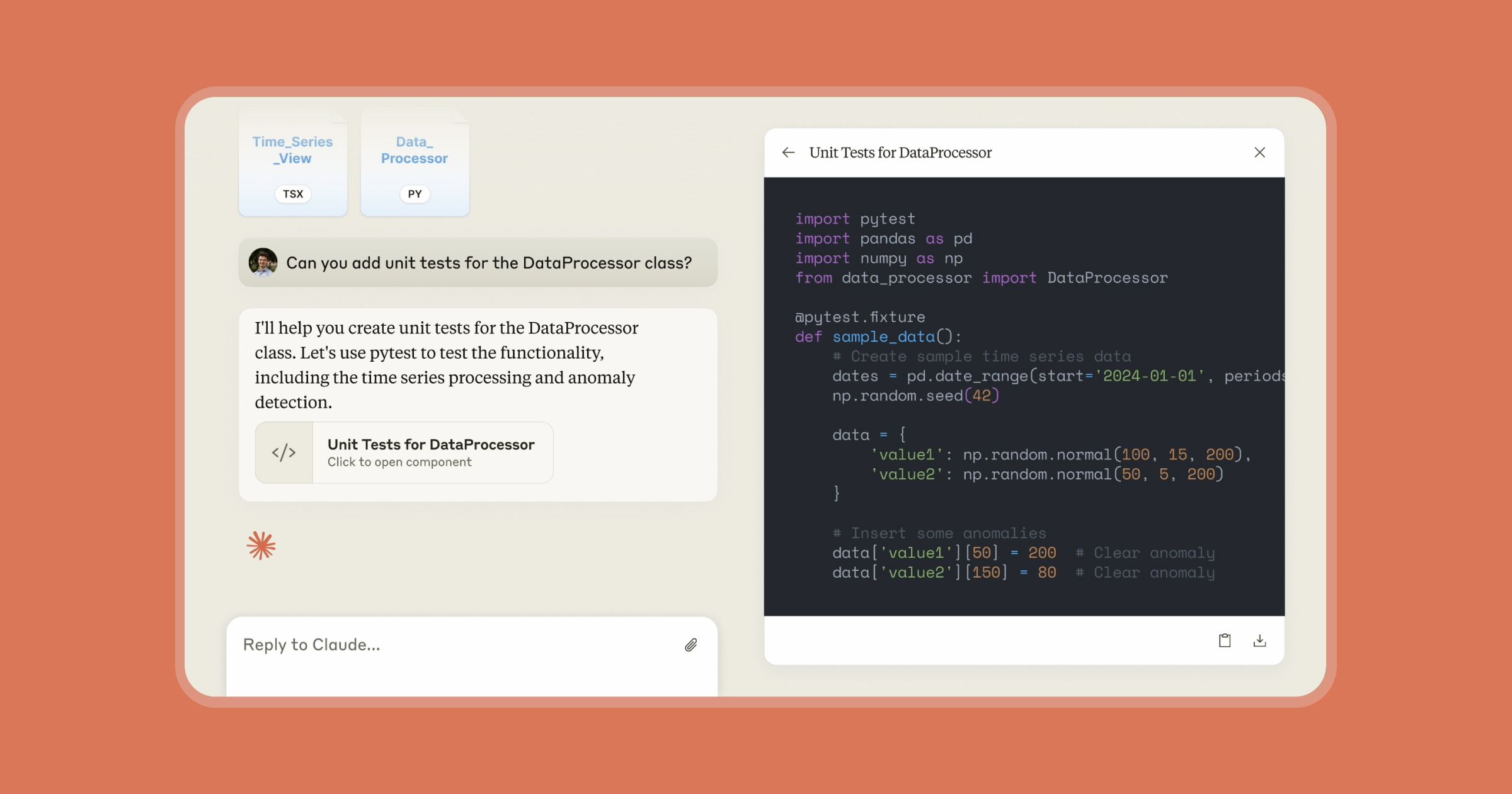

In response to these allegations, developer Peter O'Mallet took the extraordinary step of releasing his personal archive of 155,000 Claude Code messages. Although the dataset covers only one user's interactions, it offers a concrete example of the kind of conversational data that may have been targeted. O'Mallet's move appears designed both to highlight the potential privacy implications and to question the ethical boundaries of AI training data collection.

The Broader Context of AI Training Data Ethics

This incident comes amid increasing scrutiny of how AI companies source their training data. Recent months have seen multiple lawsuits against AI developers alleging copyright infringement through the unauthorized use of copyrighted material such as journalistic content, alongside growing concern about the use of private user data.

Anthropic offers Claude under stated privacy commitments and terms of service that restrict automated scraping of its services. If confirmed, the alleged actions would be not just an ethical breach but potentially a violation of both Anthropic's terms and, depending on where the affected users are located, data protection regulations.

Technical and Competitive Implications

The competitive landscape of AI development has created intense pressure to acquire high-quality training data. Conversational data from sophisticated models like Claude represents particularly valuable training material, as it contains examples of nuanced human-AI interaction, problem-solving approaches, and coding patterns. For companies seeking to improve their own coding assistants or conversational agents, such data could provide significant competitive advantages.

However, the alleged incident raises questions about whether some AI developers are cutting ethical corners in the race to improve their models. The practice of collecting a competitor's outputs to train another model, sometimes called "model distillation" or "data harvesting," sits in a legal gray area that courts and regulatory bodies are only now beginning to test.

Privacy and Security Concerns

For users of AI assistants like Claude, the allegations raise serious privacy concerns. Many users share proprietary code, business information, personal details, and creative work in these conversations. Even when companies like Anthropic implement security measures and privacy commitments, allegations like these can undermine user trust across the entire ecosystem.

The release of O'Mallet's 155,000 messages, while his personal choice, also shows how much potentially sensitive information accumulates through routine AI use. Even if anonymized, such datasets could reveal development practices, coding styles, or problem-solving approaches that some developers or companies consider proprietary.

Industry Reactions and Potential Consequences

The AI community's reaction has been mixed, with some expressing outrage at the alleged data scraping while others question the verifiability of the claims. DeepSeek has not yet issued a public statement addressing the specific allegations, though the company has previously emphasized its commitment to ethical AI development.

Potential consequences could include:

- Legal action from Anthropic for terms of service violations

- Regulatory scrutiny in jurisdictions with strong data protection laws

- Damage to DeepSeek's reputation in the global AI community

- Increased calls for transparency in AI training data sourcing

- Changes to how AI companies protect user conversations

The Future of AI Training Data Transparency

This incident highlights the growing need for greater transparency in AI training data practices. As AI systems become more sophisticated and valuable, the methods used to train them face increasing scrutiny. Several initiatives are emerging to address these concerns:

- Data Provenance Standards: Efforts to create verifiable records of training data sources

- Ethical Sourcing Guidelines: Industry-wide standards for acceptable data collection practices

- Technical Protections: Improved methods to prevent automated scraping of AI outputs

- Regulatory Frameworks: Government policies specifically addressing AI training data

Conclusion

The allegations against DeepSeek represent a significant moment in the ongoing conversation about AI ethics and competition. Whether proven or not, the incident has already prompted important discussions about privacy, intellectual property, and fair competition in AI development. As the industry matures, establishing clear norms and regulations around training data will be essential for maintaining user trust and fostering healthy innovation.

Source: Twitter exchange between @kimmonismus and @peteromallet regarding alleged DeepSeek data scraping and subsequent release of Claude conversation data.