In a strategic move to erode NVIDIA's foundational advantage in AI data centers, AMD has thrown its weight behind a new open standard for connecting AI accelerators. The company is a founding member of the newly formed UALink Consortium, which aims to develop a high-speed, open interconnect specification to rival NVIDIA's proprietary NVLink technology. This marks a significant escalation in the battle for AI hardware infrastructure, shifting the fight from just raw compute power to the critical networking layer that binds GPUs together.

Key Takeaways

- AMD is supporting the newly formed UALink Consortium, which aims to create an open standard for connecting AI accelerators.

- This move challenges NVIDIA's control over the critical NVLink technology that underpins its AI data center systems.

The Interconnect Bottleneck

Training modern large language models requires thousands of GPUs to work in concert. The speed at which these chips can communicate—sharing model parameters and gradients—is often the limiting factor for overall training speed and efficiency. NVIDIA's NVLink, a direct GPU-to-GPU interconnect, has been a key architectural moat, allowing its GPUs (like the H100 and B200) to form tightly coupled, high-performance clusters that competitors have struggled to match with standard Ethernet or InfiniBand.
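To make the bottleneck concrete, here is a rough back-of-the-envelope sketch of how link bandwidth bounds gradient synchronization. It uses the textbook ring all-reduce cost model, in which each GPU sends about 2(N-1)/N times the payload over its link. The model size, gradient precision, and both bandwidth figures are illustrative assumptions, not measurements of any product, and real collective libraries may use other algorithms and overlap communication with compute.

```python
def ring_allreduce_seconds(payload_bytes: float, num_gpus: int,
                           link_bytes_per_s: float) -> float:
    """Bandwidth-only lower bound for one ring all-reduce (ignores latency)."""
    if num_gpus < 2:
        return 0.0  # nothing to synchronize with a single GPU
    # In a ring all-reduce each GPU sends 2 * (N - 1) / N of the payload.
    traffic_bytes = 2 * (num_gpus - 1) / num_gpus * payload_bytes
    return traffic_bytes / link_bytes_per_s


# Hypothetical workload: 7 billion gradients at 2 bytes each.
GRADIENT_BYTES = 7e9 * 2
NUM_GPUS = 8

# Illustrative per-direction link speeds in bytes per second (assumptions).
LINKS = {
    "direct GPU-to-GPU link (450 GB/s)": 450e9,
    "400 Gb/s network port (50 GB/s)": 50e9,
}

for name, bandwidth in LINKS.items():
    seconds = ring_allreduce_seconds(GRADIENT_BYTES, NUM_GPUS, bandwidth)
    print(f"{name}: {seconds * 1000:.1f} ms per gradient sync")
```

Under these assumptions the time per synchronization scales inversely with link bandwidth, so a 9x slower link means a 9x longer wait on every training step unless the communication can be hidden behind compute.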

NVLink is a closed, proprietary technology. To build a large-scale AI cluster with optimal performance, customers are effectively locked into an all-NVIDIA solution. The UALink Consortium, backed by AMD, Intel, Broadcom, Cisco, Google, HPE, Meta, and Microsoft, seeks to break this lock-in by creating an open, vendor-neutral alternative.

What is UALink?

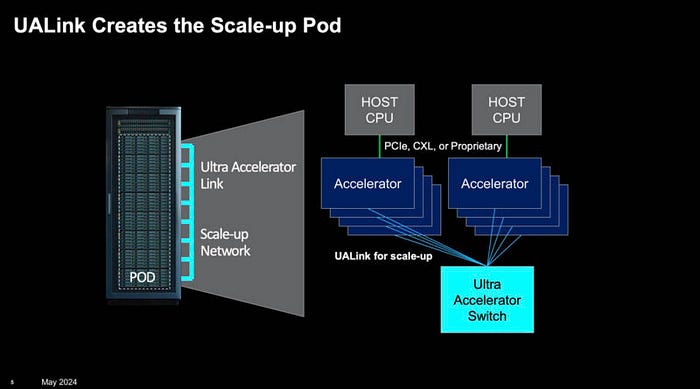

The Ultra Accelerator Link (UALink) is proposed as an open industry standard for high-bandwidth, low-latency communication between AI accelerators (GPUs, TPUs, and other AI chips) and between accelerators and switches. The goal is to enable seamless composability of AI systems from different hardware vendors.

While detailed specifications and bandwidth targets for the first version (UALink 1.0) are not yet public, the consortium's formation signals a coordinated effort to create a credible alternative. The involvement of major hyperscalers (Google, Meta, Microsoft) is critical, as they operate the largest AI training clusters and have a vested interest in reducing cost and increasing supplier flexibility.

AMD's Strategic Play

For AMD, this is a necessary step to make its Instinct MI300X and future accelerators competitive in large-scale deployments. While the MI300X offers compelling raw compute and memory specs, its ability to scale in massive clusters has been hampered by reliance on slower, standard interconnects. By helping define UALink, AMD ensures its future chips will be designed with a high-performance, scalable interconnect from the start.

This follows AMD's broader strategy of embracing open ecosystems, as seen with its ROCm software stack (an open alternative to NVIDIA's CUDA). A successful open interconnect standard would allow AMD to compete on a more level playing field, where customers could mix and match hardware from different vendors without catastrophic performance penalties.

The Competitive Landscape: UALink vs. NVLink

| Feature | NVIDIA NVLink | UALink |
|---|---|---|
| Governance | Proprietary to NVIDIA | Open standard, governed by UALink Consortium |
| Key Backers | NVIDIA | AMD, Intel, Broadcom, Google, Meta, Microsoft, HPE, Cisco |
| Primary Goal | Optimize performance within NVIDIA-only systems | Enable multi-vendor, composable AI systems |
| Ecosystem Lock-in | High | Designed to be low |
| Current Status | Mature, in 4th/5th generation (NVLink 4, NVLink 5) | Specification in development (v1.0 targeted for 2026) |

NVIDIA is not sitting idle. It continues to advance NVLink: the fourth generation offers 900 GB/s of bidirectional bandwidth per GPU, and the fifth generation doubles that to 1.8 TB/s. The success of UALink will depend on its ability to match or exceed this performance while maintaining true openness and multi-vendor interoperability.
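As a rough illustration of what the 900 GB/s figure quoted above means in practice, the sketch below converts a bidirectional bandwidth number into a one-way rate and a bandwidth-only transfer time. It assumes the bidirectional figure splits evenly between the two directions; the 80 GB payload is a hypothetical example, and protocol overhead and latency are ignored.

```python
BIDIRECTIONAL_GB_PER_S = 900.0  # bidirectional figure quoted in the article


def per_direction_gb_per_s(bidirectional_gb_per_s: float) -> float:
    """One-way bandwidth, assuming an even split between directions."""
    return bidirectional_gb_per_s / 2


def transfer_seconds(payload_gb: float, bandwidth_gb_per_s: float) -> float:
    """Bandwidth-only transfer time (no latency or protocol overhead)."""
    return payload_gb / bandwidth_gb_per_s


one_way = per_direction_gb_per_s(BIDIRECTIONAL_GB_PER_S)
payload_gb = 80.0  # hypothetical payload size in GB

print(f"One-way bandwidth: {one_way:.0f} GB/s")
print(f"Time to send {payload_gb:.0f} GB one way: "
      f"{transfer_seconds(payload_gb, one_way) * 1000:.0f} ms")
```

Any open interconnect that wants to be judged against this number has to be compared on the same basis, since a per-direction figure and a bidirectional one differ by a factor of two.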

What to Watch

The formation of the consortium is just the beginning. Key milestones to monitor include:

- UALink 1.0 Specification Release: The technical details and performance targets will reveal the consortium's ambition.

- First Silicon: When will AMD, Intel, or other members ship AI accelerators with integrated UALink support? This is likely 2-3 years out.

- NVIDIA's Response: Will NVIDIA ever engage with or join the consortium, or will it continue to enhance NVLink as a premium, closed alternative?

- Cloud Adoption: The ultimate test will be whether Google Cloud, Microsoft Azure, or AWS offer public cloud instances based on UALink-connected, multi-vendor hardware.

The battle is not just about interconnect technology but control over the data center architecture for AI. An open standard could lower costs and increase innovation, but it must first overcome NVIDIA's significant head start and proven performance.

gentic.news Analysis

This move is a direct, coordinated assault on one of NVIDIA's most defensible fortresses. As we've covered in our analysis of the MI300X launch and the AI infrastructure war, competition has so far focused on FLOPS and HBM capacity. The interconnect layer has remained NVIDIA's uncontested domain. The UALink Consortium represents the first serious, ecosystem-wide attempt to standardize this layer.

Historically, open standards have eventually dominated closed ones in data center networking (see Ethernet vs. proprietary alternatives). However, NVIDIA's NVLink is deeply integrated with its entire software and system stack (CUDA, DGX). Displacing it requires more than just a hardware specification; it requires a viable full-stack alternative. AMD's ROCm is progressing, but the ecosystem gap remains wide.

The involvement of the hyperscalers is the most telling signal. Google (with its TPU), Meta, and Microsoft are effectively the primary customers for massive-scale AI training. Their participation means they are willing to invest engineering resources to foster an open alternative, primarily to gain bargaining power and reduce dependency on a single supplier. This aligns with the trend we noted in our piece on Cloud AI Sovereignty, where major cloud providers are aggressively pursuing custom silicon and multi-sourcing strategies.

For AI engineers and infrastructure leaders, the practical implication is that true multi-vendor, best-of-breed AI clusters are now a long-term possibility rather than a fantasy. In the near term, however, NVIDIA's full-stack integration will continue to offer the path of least resistance for cutting-edge model training. The real test for UALink will come around 2027-2028, when the first systems based on the standard could potentially enter production.

Frequently Asked Questions

What is the difference between UALink and NVLink?

NVLink is NVIDIA's proprietary, high-speed interconnect technology that allows its GPUs to communicate directly with each other at very high bandwidth. UALink is a proposed open standard, backed by a consortium including AMD, Intel, and major cloud providers, aiming to provide similar high-performance connectivity but in a way that allows different brands of AI chips (e.g., AMD, Intel, Google TPU) to work together seamlessly in the same system.

When will we see UALink in actual products?

The UALink Consortium has just been formed. The first version of the specification (UALink 1.0) is targeted for release in 2026. Following that, it will take chip designers like AMD and Intel time to integrate the standard into new silicon. The first AI accelerators featuring UALink support likely won't reach the market until 2027 or later.

Does this mean NVIDIA will lose its AI dominance?

Not in the short to medium term. NVIDIA's lead is built on a full-stack advantage: superior hardware (GPUs + NVLink), dominant software (CUDA), and mature platform solutions (DGX). UALink only addresses one piece of this puzzle—the hardware interconnect. While it is a necessary development for competitors, catching up to NVIDIA's integrated ecosystem will take years of consistent execution by the entire UALink consortium.

Can existing AMD MI300X GPUs use UALink?

No. The AMD Instinct MI300X accelerators currently in production were designed before the UALink standard existed. They use AMD's Infinity Fabric technology for on-board connectivity and rely on standard networking (InfiniBand, Ethernet) for scale-out communication. UALink support will be a feature of future generations of AI accelerators.