A retweet by AI commentator Rohan Paul has highlighted a key finding from a recent McKinsey & Company analysis: the value generated by advances in AI infrastructure is growing faster than most businesses can capture and operationalize it.

The source is a brief social media post referencing McKinsey data, not a full report. The core claim is that a significant gap exists between the potential value being created by the underlying technology (AI infrastructure) and the realized value being captured by enterprises through business processes and products.

What the Data Suggests

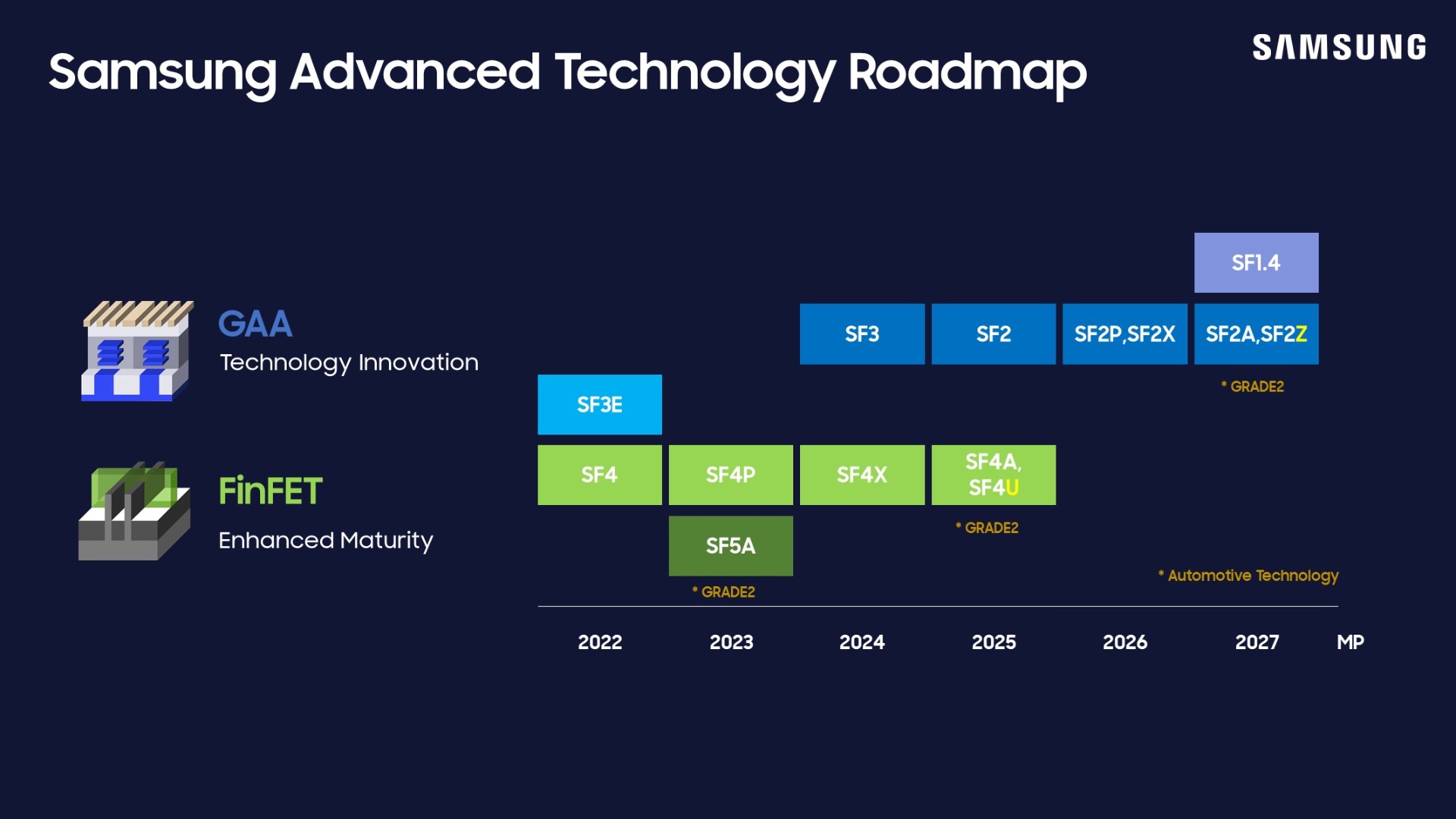

While the tweet does not link to the specific McKinsey report or provide detailed metrics, the assertion points to a persistent theme in enterprise AI adoption. "AI infrastructure" typically refers to the foundational layer enabling AI development and deployment: cloud compute platforms (AWS, Google Cloud, Azure), specialized hardware (GPUs from Nvidia and AMD, plus custom AI accelerators), model training frameworks, and MLOps tooling.

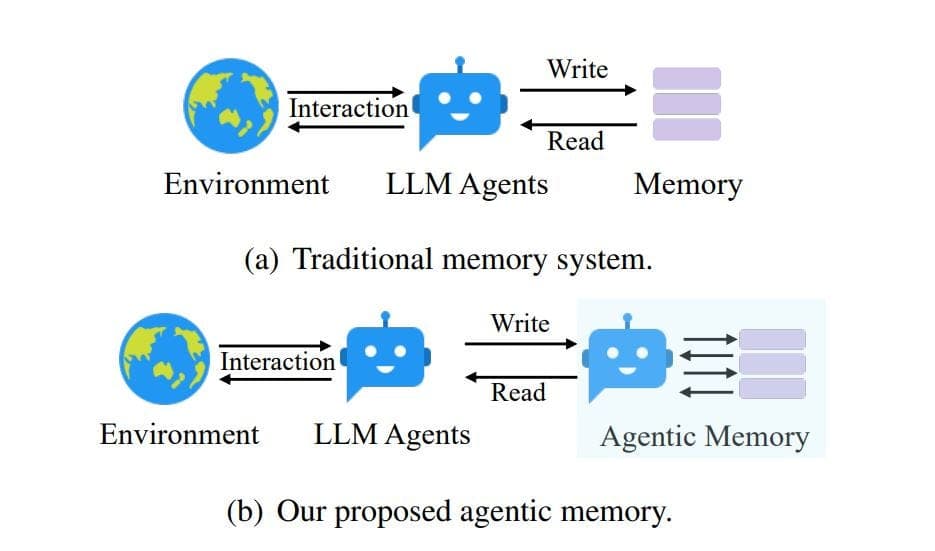

The argument is that investment and innovation at this infrastructure layer are yielding new capabilities (larger and more capable foundation models, faster training times, lower inference costs) at a breakneck pace. However, transforming those raw capabilities into measurable business outcomes (increased revenue, reduced costs, new products) requires significant complementary investments in data engineering, process redesign, talent, and change management. McKinsey's data, as cited, suggests that for a majority of organizations, infrastructure capability is advancing faster than these complementary investments.

The Widening Implementation Gap

This gap represents the difference between technical potential and economic realization. For example, a new cluster of H100 GPUs might reduce model training time by 40%, but a company may lack the data pipelines to feed it high-quality training data or the product managers to define viable use cases. The infrastructure creates option value, but capturing it requires organizational maturity.
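One way to make the H100 example concrete is an Amdahl's-law-style calculation: if only part of the path from idea to deployed product gets faster, the overall gain is capped by everything that did not speed up. The sketch below is illustrative only. The function name and the 20% share of project time spent on training are hypothetical assumptions, not figures from McKinsey or the tweet.

```python
def end_to_end_speedup(accelerated_fraction: float, time_reduction: float) -> float:
    """Amdahl-style overall speedup when only one step of a workflow gets faster.

    accelerated_fraction: share of total project time spent in the step that
        the new hardware speeds up (hypothetical input, between 0 and 1).
    time_reduction: fraction of that step's time that is saved
        (0.40 means the step takes 40% less time).
    """
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if not 0.0 <= time_reduction < 1.0:
        raise ValueError("time_reduction must be at least 0 and below 1")
    # Time left after the upgrade, as a fraction of the original total:
    # the untouched part plus the shortened accelerated part.
    remaining_time = (1.0 - accelerated_fraction) + accelerated_fraction * (1.0 - time_reduction)
    return 1.0 / remaining_time


if __name__ == "__main__":
    # Assumption for illustration: training is 20% of total project time,
    # and the new cluster cuts training time by 40% (the article's example).
    overall = end_to_end_speedup(accelerated_fraction=0.20, time_reduction=0.40)
    print(f"Overall speedup: {overall:.3f}x")
```

Under these assumed inputs, a 40% cut in training time shortens the whole project by only 8% (a speedup of roughly 1.09x), because data preparation, use-case definition, and integration still take as long as before. Changing the assumed share shows how much of the infrastructure gain depends on the surrounding organization.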

This dynamic creates a competitive landscape where early adopters with strong AI integration capabilities can pull further ahead, while slower-moving organizations see the theoretical benefits of AI grow even as their practical ability to harness them stagnates.

gentic.news Analysis

This observation from McKinsey aligns with a pattern we've tracked throughout 2025 and into 2026. Our coverage of Databricks' $1.3B acquisition of MosaicML highlighted the industry's push to bridge this exact gap by making advanced model training accessible to data teams, not just AI research labs. Similarly, the rise of Grok-3's enterprise-focused toolchain and Anthropic's Project Constitution underscore a market shift from pure model capability toward usability, safety, and integration: the very factors that determine value capture.

The trend also contextualizes the recent surge in funding for applied AI and MLOps startups, as noted in our H1 2026 AI Venture Funding Report. Investors are betting heavily on companies that provide the "last mile" tools—like Weights & Biases and Modular—that help businesses operationalize AI. McKinsey's data suggests this investment thesis is correct: the bottleneck is no longer the model, but the machine around it.

For technical leaders, the implication is clear. Infrastructure spending on its own is a trailing indicator of ROI. The leading indicator will be investments that tighten the feedback loop between infrastructure capability and business process change: robust evaluation frameworks, integrated data platforms, and cross-functional product teams that include ML engineers.

Frequently Asked Questions

What is "AI infrastructure"?

AI infrastructure refers to the hardware, software, and cloud services required to develop, train, and deploy artificial intelligence models. This includes high-performance computing resources (like GPUs and TPUs), machine learning frameworks (like PyTorch and TensorFlow), vector databases, and MLOps platforms that manage the model lifecycle from experimentation to production.

Why can't businesses capture the value as fast as it's created?

Capturing value requires more than just access to technology. It involves integrating AI into core business workflows, which demands clean and accessible data, redesigning existing processes, upskilling or hiring talent with new skill sets, managing organizational change, and establishing governance for ethical and safe deployment. These are complex, human-centric challenges that often move slower than pure technological advancement.

What should a business do to close this gap?

Businesses should focus on building "AI-ready" organizations. This means treating data as a core strategic asset, creating cross-functional teams that blend business, data science, and engineering expertise, starting with well-defined pilot projects that solve specific business problems, and investing in the MLOps tooling that reduces the time from experiment to production deployment. The goal is to build an organizational muscle for AI adoption, not just rent compute power.

Is this gap a new problem?

The pattern of technology outpacing adoption is classic (see the IT productivity paradox of the 1980s and 1990s), but the pace of change in AI is unprecedented. The half-life of a state-of-the-art model is now measured in months, not years, dramatically compressing the timeline for organizations to adapt. This makes the implementation gap both more acute and more costly than in previous technological shifts.