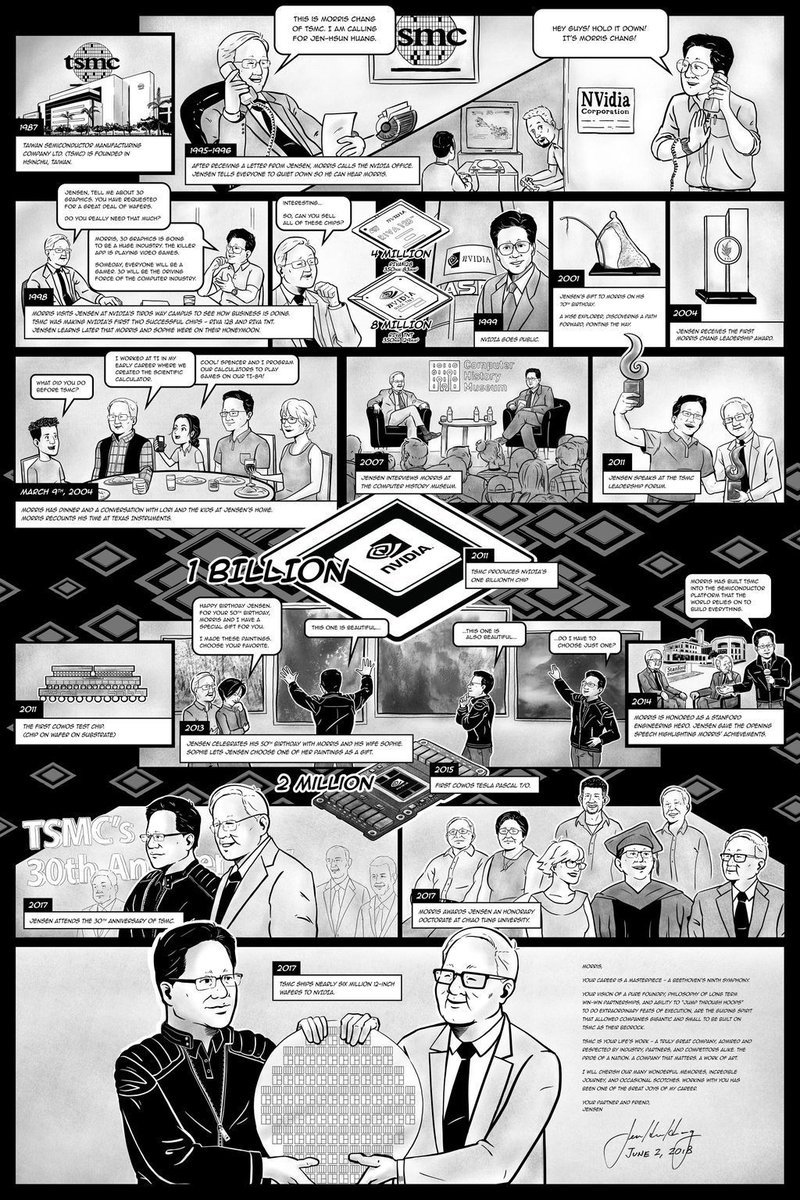

Meta has signed a multibillion-dollar deal to use millions of Amazon's homegrown Graviton5 CPUs in AWS data centers, Amazon announced on Friday. The ARM-based chips will power Meta’s growing AI agent workloads — a notable departure from the GPU-centric infrastructure that dominates training and traditional inference.

The deal comes as Meta pursues a record $200B+ procurement campaign spanning Nvidia, AMD, CoreWeave, Broadcom, and now AWS. It also marks a shift in Meta’s cloud strategy: after signing a six-year, $10 billion deal with Google Cloud in August 2025, Meta is bringing a significant chunk of its AI compute spend back to its longtime primary cloud provider.

Key Takeaways

- Meta will deploy tens of millions of AWS Graviton5 CPU cores for AI agent workloads, signaling that agentic inference favors CPUs over GPUs.

- The deal deepens Meta's $200B+ infrastructure push amid layoffs and cloud rivalry.

What's New

Meta will deploy “tens of millions” of Graviton5 CPU cores across AWS regions to handle AI agent workloads — real-time reasoning, code generation, search, and multi-step task orchestration. AWS specifically designed the latest Graviton generation for AI-related compute needs, claiming it delivers up to 40% better performance per watt for inference workloads compared to previous generations.

The deal does not cover GPUs or other AI accelerators. Amazon’s own AI accelerator, Trainium, remains largely tied up by Anthropic, which earlier this month agreed to spend $100 billion over 10 years on AWS Trainium instances. Amazon also invested another $5 billion in Anthropic, bringing its total investment to $13 billion.

Meta’s Graviton deployment will likely run the inference and reasoning layers of its Llama 4 and future agent frameworks, rather than model training (which will continue on Nvidia H100/B200 and AMD MI300X GPUs).

Technical Details

The Graviton5 is an ARM-based CPU featuring 192 cores per socket, support for DDR5 memory, and dedicated AI acceleration instructions. AWS claims it can handle AI inference tasks, including transformer-based attention mechanisms, directly on the CPU, so agentic workflows avoid the latency overhead of moving data between CPU and GPU memory.

Agent workloads differ from batch inference: an agent may need to loop through reasoning steps, call tools, parse responses, and coordinate sub-agents. These tasks are compute-intensive but not GPU-parallelizable in the same way as matrix multiplications. CPUs with high core counts and low-latency memory access are often more efficient for such sequential, branch-heavy logic.
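The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not Meta's or AWS's implementation: `fake_model`, the `TOOLS` registry, and the JSON action format are all hypothetical stand-ins, and a real agent would call an inference endpoint where `fake_model` appears. The point is how much of each step is parsing and branching rather than matrix math.

```python
import json
from typing import Callable

# Hypothetical tool registry; the tool names are illustrative only.
TOOLS: dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split("+"))),
    "upper": lambda arg: arg.upper(),
}


def fake_model(history: list[str]) -> str:
    """Stand-in for an LLM call (assumption): returns a JSON action string."""
    if len(history) == 1:
        # First step: ask for a tool call.
        return json.dumps({"action": "tool", "name": "add", "arg": "2+3"})
    # Later steps: finish, using the most recent observation.
    return json.dumps({"action": "finish", "answer": f"result is {history[-1]}"})


def run_agent(task: str, max_steps: int = 5) -> str:
    """Loop: ask the model, parse its reply, branch, call a tool, repeat."""
    history = [task]
    for _ in range(max_steps):
        step = json.loads(fake_model(history))  # parse the model's response
        if step["action"] == "finish":          # branch: the agent is done
            return step["answer"]
        tool = TOOLS.get(step["name"])          # branch: look up the tool
        if tool is None:
            history.append(f"error: unknown tool {step['name']}")
            continue
        history.append(tool(step["arg"]))       # run the tool, record the result
    return "gave up"


if __name__ == "__main__":
    print(run_agent("what is 2+3?"))
```

Everything outside the model call here is sequential, branch-heavy work of the kind the paragraph attributes to CPUs.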

The deal represents one of the largest single CPU procurements for AI in industry history. Meta has not disclosed the exact unit count or dollar value, but given the scale (millions of chips), the agreement likely runs into the hundreds of millions to low billions of dollars annually.

Context: Meta’s $200B+ Infrastructure Frenzy

This deal fits into Meta’s broader infrastructure strategy. In the last 18 months, Meta has:

- Ordered enough Nvidia GPUs to rival Microsoft and Google (estimated $30B+ cumulative).

- Partnered with AMD to deploy MI300X for inference (announced 2024).

- Signed long-term contracts with CoreWeave for GPU clusters ($1B+).

- Invested in Broadcom for custom ASIC network chips.

- Now committed to tens of millions of CPU cores from the cloud arm of a direct AI competitor.

Meta is effectively hedging across chip architectures and cloud providers. The company’s internal AI infrastructure team — led by VP of Engineering Santosh Janardhan — has publicly stated that “no single chip type will dominate AI” and that “agentic workloads require a different compute profile.”

Why CPUs for Agents?

Traditional AI inference runs a single forward pass through a model — highly parallelizable, perfect for GPUs. But AI agents introduce a new execution pattern:

- Multi-step reasoning: The model is invoked repeatedly, with each step's output feeding the next (chain-of-thought, tree-of-thought).

- Tool use: Agents call external APIs, databases, or code interpreters, requiring context switching.

- Search and retrieval: Agents perform dense retrieval over vector databases or web corpora.

- Coordination: Multi-agent systems (e.g., those built with the open-source LangGraph framework) require orchestrator processes that manage agent state and message passing.

These tasks involve more control flow than matrix math. CPUs with many cores and large caches handle context switches and branching better than GPUs, which are optimized for throughput of uniform operations. By offloading agent orchestration to Graviton CPUs, Meta can free its GPUs for high-throughput batch inference while keeping latency-sensitive agent logic on cheaper, more efficient cores.
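To make the orchestration pattern concrete, here is a minimal sketch of an orchestrator that dispatches tasks to sub-agents over message queues and collects their results into shared state. It uses only Python's standard `asyncio`; the `sub_agent` and `orchestrate` names, the round-robin dispatch, and the toy "work" (measuring the task string's length) are assumptions made for illustration. `asyncio` runs on a single thread, so a production system would spread such workers across processes and cores, but the control flow has the same shape.

```python
import asyncio


async def sub_agent(name: str, inbox: asyncio.Queue, outbox: asyncio.Queue) -> None:
    """Hypothetical sub-agent: reads tasks from its inbox and posts results."""
    while True:
        task = await inbox.get()
        if task is None:          # sentinel: the orchestrator asks us to stop
            break
        await asyncio.sleep(0)    # placeholder for a tool call or model request
        await outbox.put((name, task, len(task)))


async def orchestrate(tasks: list[str], n_agents: int = 3) -> dict[str, int]:
    """Dispatch tasks round-robin, wait for all agents, gather the results."""
    results: asyncio.Queue = asyncio.Queue()
    inboxes = [asyncio.Queue() for _ in range(n_agents)]
    workers = [
        asyncio.create_task(sub_agent(f"agent-{i}", inboxes[i], results))
        for i in range(n_agents)
    ]
    for i, task in enumerate(tasks):
        await inboxes[i % n_agents].put(task)   # message passing to a sub-agent
    for inbox in inboxes:
        await inbox.put(None)                   # tell every agent to shut down
    await asyncio.gather(*workers)

    state: dict[str, int] = {}                  # orchestrator-managed state
    while not results.empty():
        _, task, value = results.get_nowait()
        state[task] = value
    return state


if __name__ == "__main__":
    print(asyncio.run(orchestrate(["search", "code", "plan", "summarize"])))
```

None of this work involves dense linear algebra, so there is nothing in it for a GPU to accelerate.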

Competitive Dynamics

Amazon timed the announcement to coincide with the wrap-up of Google Cloud Next, a pointed shot at its cloud rival. Google also makes custom AI chips — the TPU v6 and the Axion ARM-based CPU — and announced updates at the conference.

Nvidia recently unveiled its Vera CPU, an ARM-based chip designed for AI agent workloads. Vera competes directly with Graviton5, but Nvidia sells chips and systems to enterprises and cloud providers, while AWS offers Graviton as a managed service within its cloud. Meta’s deal may pressure Nvidia to offer its own CPU-as-a-service or risk losing cloud-adjacent AI workloads.

Anthropic’s $100B Trainium deal further complicates the picture: Amazon’s best AI chips are largely reserved for its biggest investment. Meta’s CPU pivot gives AWS another high-profile AI customer without competing for Trainium capacity.

What This Means in Practice

For AI engineers building agent systems, this signals that CPU-based inference is not a compromise but a deliberate architectural choice for certain workloads. Expect cloud providers to market “AI CPU instances” alongside GPU ones, and expect model optimization frameworks (vLLM, TensorRT-LLM) to add CPU-specific batching and scheduling strategies.

For Meta, the deal likely reduces inference cost per agent interaction by a factor of 2–4 compared to GPU-based inference, given Graviton’s price-performance advantage over Nvidia GPUs for CPU-suitable tasks. That matters when Meta plans to have “hundreds of millions of AI agents” (Mark Zuckerberg’s 2025 prediction) interacting across Facebook, Instagram, and WhatsApp.
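The arithmetic behind a cost-reduction factor like this is simple to reproduce. The sketch below divides an instance's hourly price by the number of agent interactions it serves per hour. The prices and throughput figures are hypothetical placeholders chosen only to show the calculation. They are not AWS, Nvidia, or Meta numbers.

```python
def cost_per_interaction(hourly_price_usd: float, interactions_per_hour: float) -> float:
    """Cost of one agent interaction on an instance billed by the hour."""
    if interactions_per_hour <= 0:
        raise ValueError("interactions_per_hour must be positive")
    return hourly_price_usd / interactions_per_hour


def savings_factor(gpu_cost: float, cpu_cost: float) -> float:
    """How many times cheaper the CPU path is than the GPU path."""
    return gpu_cost / cpu_cost


if __name__ == "__main__":
    # Hypothetical placeholder inputs, not real pricing or benchmarks.
    gpu = cost_per_interaction(hourly_price_usd=4.00, interactions_per_hour=2000)
    cpu = cost_per_interaction(hourly_price_usd=1.00, interactions_per_hour=1500)
    print(f"GPU: ${gpu:.5f}  CPU: ${cpu:.5f}  factor: {savings_factor(gpu, cpu):.1f}x")
```

With these placeholder inputs the CPU instance serves fewer interactions per hour yet comes out roughly three times cheaper per interaction, which is the kind of trade-off a 2–4x estimate implies.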

Frequently Asked Questions

Why would Meta use CPUs for AI instead of GPUs?

AI agent workloads involve sequential reasoning, tool calls, and coordination — tasks that benefit from high single-thread performance and low-latency memory access. CPUs handle these patterns more efficiently than GPUs, which excel at massively parallel matrix operations. Using Graviton CPUs for agent orchestration frees GPUs for training and high-throughput batch inference.

How does the Graviton5 compare to Nvidia’s Vera CPU?

Both are ARM-based CPUs designed for AI inference and agent workloads. Graviton5 offers 192 cores per socket with dedicated AI instructions; Nvidia claims Vera delivers 2x performance per watt over its Grace CPU. The key difference is availability: Graviton5 is available now on AWS as a managed instance, while Vera is just entering sampling for cloud providers.

Will this affect Meta’s relationship with Google Cloud?

Meta still has a six-year, $10 billion Google Cloud deal (signed 2025). The Graviton deal diversifies Meta’s cloud footprint, bringing a significant portion of its AI compute back to AWS. Google remains a major partner, particularly for data analytics and certain model services (e.g., Vertex AI for Llama deployment).

How does Meta’s $200B+ infrastructure spending compare to other tech giants?

Meta’s capital expenditure is roughly on par with Microsoft and Google, though Meta spends a higher percentage on AI-specific infrastructure. Microsoft projects $80B in AI infrastructure for 2026; Google has committed $50B+. Meta’s aggressive spend comes alongside 8,000 layoffs (April 2026) as the company redirects savings to AI compute.

gentic.news Analysis

This is the most concrete signal yet that the AI hardware narrative is shifting from “GPUs forever” to “right chip for the right job.” We’re seeing a bifurcation: training and heavy inference will remain dominated by GPUs and dedicated accelerators (Nvidia, AMD, Trainium), but agentic workloads, which many leaders believe will dominate AI usage by 2027, are fueling a CPU renaissance. Meta’s move validates what AWS, Google, and Nvidia have been hinting at: the “inference tax” of agents is better paid with ARM cores.

The timing is critical. Meta just laid off 8,000 employees (April 23) to redirect capital to AI infrastructure. This deal shows exactly where that money is going: not just more GPUs, but specialized compute for agent orchestration. Meanwhile, Anthropic’s $100B Trainium deal (April 2026) means Amazon’s AI accelerators are already spoken for. By signing Meta for Graviton, Amazon monetizes its CPU crown jewels while keeping its Trainium capacity reserved for its most strategic partner.

This also highlights a tension in Meta’s cloud strategy. After the $10B Google Cloud deal in 2025, Meta is effectively hedging between two of the largest clouds, both of which are direct AI competitors (Gemini vs. Llama, Vertex vs. Bedrock). Meta’s open-source bet on Llama makes it cloud-agnostic, but the Graviton deal locks a huge chunk of its agent compute onto AWS, giving Amazon leverage in future negotiations.

For practitioners, the takeaway is clear: optimize your agent inference stack for CPUs now. The era of GPU-only AI is ending. Expect Meta to open-source its CPU-related optimizations (likely as part of Llama Stack), and expect AWS to offer Graviton instances optimized for agent frameworks like LangChain, CrewAI, and Meta’s own tools.