Alibaba Cloud has officially launched Qwen3.6-Plus, the latest iteration in its Qwen family of large language models. The model is positioned as a multimodal assistant with a 1 million-token context window, explicitly targeting improved performance in coding tasks and AI agent capabilities for developers.

What's New

Qwen3.6-Plus is not a minor version bump. The primary technical upgrade is the expansion of its context window to 1 million tokens. That matches Google's Gemini 1.5 Pro and is far larger than the 200K and 128K windows of Anthropic's Claude 3.5 Sonnet and OpenAI's GPT-4o. The larger window lets the model process and reason over massive documents, lengthy codebases, or extended multi-step instructions, which is a critical feature for complex AI agent workflows.

The launch continues a rapid release cadence from Alibaba's Qwen team. It arrives shortly after the March 30 release of Qwen3.5-Omni, a model that demonstrated novel "audio-visual vibe coding" capabilities. Qwen3.6-Plus appears to be the next strategic step, focusing on the infrastructure (long context) needed for reliable, production-grade AI agents.

Technical Details & Target Use Case

While the source announcement from Pandaily is light on specific benchmark numbers, the positioning is clear: coding and AI agents. The 1M-token context is the headline feature, enabling:

- Codebase Analysis: Ingesting and reasoning across entire repositories or large modules.

- Long-Horizon Agent Tasks: Maintaining coherence and state across extended sequences of tool use, web browsing, and decision-making.

- Document Processing: Handling large technical specifications, research papers, or legal documents in a single pass.
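For codebase analysis, the first practical question is whether a repository fits in the window at all. The sketch below estimates this with a rough rule of thumb of about 4 characters per token. That ratio, the file-suffix filter, and the 32K-token output reserve are illustrative assumptions, not properties of Qwen3.6-Plus or its tokenizer.

```python
from pathlib import Path

# Assumption: ~4 characters per token, a common rule of thumb for English
# text and code. Qwen's actual tokenizer will produce different counts.
CHARS_PER_TOKEN = 4
CONTEXT_WINDOW = 1_000_000  # the 1M-token window reported for Qwen3.6-Plus


def estimate_tokens(text: str) -> int:
    """Rough token estimate from character count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def repo_token_estimate(root: str, suffixes: tuple[str, ...] = (".py", ".ts", ".go", ".md")) -> int:
    """Sum estimated tokens over files under `root` with a matching suffix."""
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total += estimate_tokens(path.read_text(encoding="utf-8", errors="ignore"))
    return total


def fits_in_context(tokens: int, reserve_for_output: int = 32_000) -> bool:
    """True if the prompt still leaves `reserve_for_output` tokens of headroom."""
    return tokens + reserve_for_output <= CONTEXT_WINDOW
```

For example, `fits_in_context(repo_token_estimate("."))` gives a first-pass answer for the current directory. Treat it as a screening step and confirm against the token counts the API actually reports.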

The model is multimodal, supporting text and image inputs. It is being served through Alibaba Cloud's platform, indicating it is likely a closed, API-accessible model rather than an open-weight release, similar to the approach with Qwen3.5-Omni.
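Because the model is served through Alibaba Cloud rather than released as weights, developers will reach it through an API. The sketch below only builds a request body and sends nothing. It assumes Alibaba Cloud Model Studio's OpenAI-compatible chat-completions mode accepts this model with mixed text and image content parts. Both that compatibility and the model identifier `qwen3.6-plus` are assumptions to check against Alibaba Cloud's documentation.

```python
import base64
import json

# Hypothetical model identifier; check Alibaba Cloud's model list for the real one.
MODEL_ID = "qwen3.6-plus"


def build_chat_request(prompt: str, image_bytes: bytes, mime: str = "image/png") -> dict:
    """Build an OpenAI-style chat request mixing one inline image and text.

    Assumes the endpoint accepts the OpenAI chat-completions message schema,
    with the image passed as a base64 data URL in an `image_url` content part.
    """
    data_url = f"data:{mime};base64," + base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": MODEL_ID,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "image_url", "image_url": {"url": data_url}},
                    {"type": "text", "text": prompt},
                ],
            }
        ],
    }


if __name__ == "__main__":
    # Print the payload that would be POSTed; no network call is made here.
    print(json.dumps(build_chat_request("Describe this diagram.", b"placeholder"), indent=2))
```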

The Competitive Landscape

Alibaba is making a direct play for the same developer and enterprise users currently experimenting with AI agents on platforms from Anthropic, OpenAI, and Google. The Knowledge Graph shows AI Agents are a dominant trend, mentioned in 189 prior articles, but also facing a reliability crisis. A report from March 31, 2026, revealed that 86% of AI agent pilots fail to reach production, highlighting a major industry gap between prototype and product.

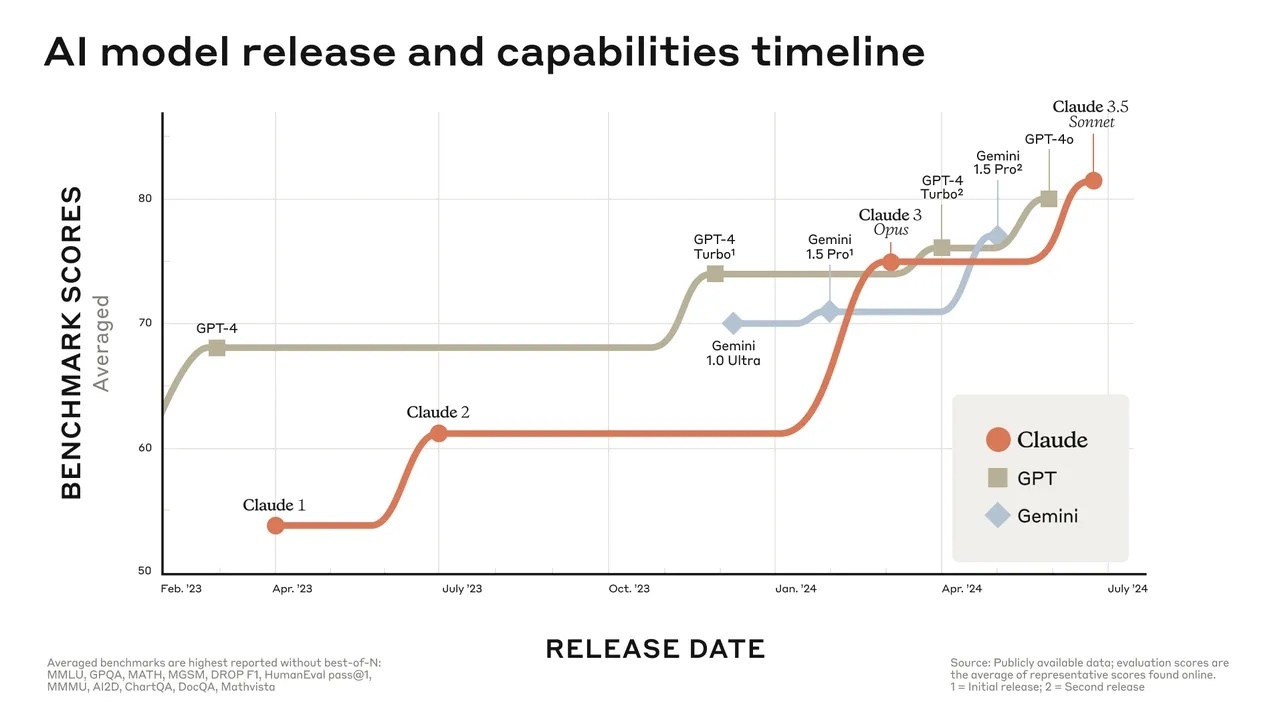

Qwen3.6-Plus enters this fray offering extreme context length, a technical prerequisite often cited as necessary for moving agents beyond simple, single-turn tasks. It competes directly with:

- Claude 3.5 Sonnet (Anthropic): 200K context, strong on coding and agentic benchmarks.

- GPT-4o (OpenAI): 128K context, widely used for agent frameworks.

- Gemini 1.5 Pro (Google): 1M+ context, strong multimodal and long-context reasoning.
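The practical difference between these windows is easiest to see by checking which models could take a given workload in one pass. The sketch below hard-codes the figures quoted in this article. Gemini 1.5 Pro is listed here as "1M+", so the sketch uses 1M as a lower bound, and vendors revise these limits over time.

```python
# Context windows (tokens) as quoted in this article; a snapshot, not a spec.
CONTEXT_WINDOWS = {
    "Qwen3.6-Plus": 1_000_000,
    "Gemini 1.5 Pro": 1_000_000,  # "1M+" in the article; lower bound used
    "Claude 3.5 Sonnet": 200_000,
    "GPT-4o": 128_000,
}


def models_that_fit(prompt_tokens: int, output_tokens: int = 0) -> list[str]:
    """Return, sorted by name, the models whose window holds prompt plus output."""
    needed = prompt_tokens + output_tokens
    return sorted(name for name, window in CONTEXT_WINDOWS.items() if window >= needed)
```

With these numbers, a 150K-token codebase fits everything except GPT-4o, while a 500K-token one fits only the two 1M-token models.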

Alibaba's strategy seems to be one of rapid iteration and feature parity, matching the core capabilities offered by US-based leaders while potentially competing on price and accessibility in Asian and global markets.

What This Means in Practice

For developers building AI agents, a new model with a 1M-token context from a major provider like Alibaba provides another option for testing and scaling agentic workflows. The model's success will hinge on its actual performance on agentic benchmarks (like WebVoyager or AgentBench) and on its reliability in production, which are exactly the areas where the industry is struggling.

gentic.news Analysis

This launch is a clear signal of Alibaba's intensified focus on the AI agent market, and the timing is strategic. Following the late-March release of Qwen3.5-Omni, which we covered for its novel "audio-visual vibe coding," the Qwen team is immediately pushing forward with a model built for the infrastructure of agentic systems. This aligns with the broader industry trend we've been tracking: AI agents crossed a critical reliability threshold in December 2025, fundamentally transforming programming capabilities, yet most agent projects still fail to reach production, and "agent washing" (rebranding conventional automation as agents) muddies the picture.

The Knowledge Graph shows AI Agents are the most discussed entity in our coverage this week (21 articles), indicating intense reader and market interest. Alibaba is attempting to capture this momentum. However, as our April 1 article "Harness Engineering for AI Agents" detailed, building production-ready agent systems requires more than just a long-context model; it demands robust engineering for evaluation, safety, and reliability. Qwen3.6-Plus provides the raw material, but its adoption will depend on the tooling and ecosystem Alibaba builds around it.

Furthermore, this release continues Alibaba's pattern of targeting the Western developer market. The Qwen3.5-Omni launch included explicit strategic messaging for Western users, and Qwen3.6-Plus, with its focus on universal developer pain points (coding, agents), follows the same playbook. It represents a more sophisticated competitive approach than simply leading on price or local market dominance.

Frequently Asked Questions

What is Qwen3.6-Plus?

Qwen3.6-Plus is a multimodal large language model developed by Alibaba Cloud. Its key feature is a 1 million-token context window, and it is optimized for coding tasks and AI agent applications.

How does Qwen3.6-Plus compare to Claude or GPT-4o?

It competes directly with models like Claude 3.5 Sonnet and GPT-4o, and its 1M-token context window is several times larger than theirs (200K and 128K tokens, respectively), putting it level with Google's Gemini 1.5 Pro. Its competitive differentiation will likely rest on its performance on coding benchmarks and agentic reasoning tasks, and on its pricing on Alibaba Cloud's platform.

Is Qwen3.6-Plus open source?

Based on Alibaba's recent release pattern with Qwen3.5-Omni and the fact that it is being "launched" as a product, it is most likely a closed model served via Alibaba Cloud's API. This contrasts with many earlier Qwen models, such as most Qwen2.5 sizes, which were released as open weights under the Apache 2.0 license.

Why is a 1 million-token context important for AI agents?

AI agents often need to maintain long-term memory, process lengthy instructions, analyze large documents, or navigate complex, multi-step workflows. A 1M-token context allows the model to hold more of this information "in mind" at once, reducing the need for complex external memory systems and improving coherence over long interactions.
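One way to see what a larger window buys is a simple token-budgeted history for an agent loop. It keeps the system prompt plus as many recent turns as fit and drops the oldest first. With a 1M-token budget far fewer turns are dropped, so less has to be pushed into an external memory store. The `Turn` type, `fit_history` helper, and 4-characters-per-token estimate are hypothetical illustrations, not part of any Qwen or Alibaba Cloud API.

```python
from dataclasses import dataclass

CHARS_PER_TOKEN = 4  # rough heuristic, not Qwen's tokenizer


@dataclass
class Turn:
    role: str
    text: str

    @property
    def tokens(self) -> int:
        # Estimated token count, rounded up.
        return -(-len(self.text) // CHARS_PER_TOKEN)


def fit_history(system: Turn, history: list[Turn], budget: int) -> list[Turn]:
    """Keep the system prompt plus the most recent turns that fit in `budget`.

    Walks backwards from the newest turn and stops at the first one that no
    longer fits, so the kept turns are always a contiguous recent suffix.
    """
    remaining = budget - system.tokens
    if remaining < 0:
        raise ValueError("system prompt alone exceeds the budget")
    kept: list[Turn] = []
    for turn in reversed(history):
        if turn.tokens > remaining:
            break
        kept.append(turn)
        remaining -= turn.tokens
    return [system] + list(reversed(kept))
```

Stopping at the first turn that does not fit, rather than skipping it and continuing, avoids leaving gaps in the conversation that could confuse the model about what happened in between.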