A research paper from Anthropic describes 171 internal "emotion vectors" in its Claude language model that causally drive the model's behavior. Soon after the disclosure, a developer built a free, open-source, zero-dependency tool that makes these internal states visible. The tool suggests that Claude's internal signals and its text output can diverge.

Key Takeaways

- Anthropic published a paper revealing Claude's 171 internal emotion vectors that causally drive behavior.

- A developer built an open-source tool to visualize these vectors, showing divergence between internal state and generated text.

What the Paper Revealed

According to the paper, Claude's neural network contains 171 specific vectors that correspond to emotional or affective states. The paper describes these vectors as causally driving behavior, not merely correlating with it: intervening on a vector directly changes the model's responses. This marks a significant step in mechanistic interpretability, from observing correlations to identifying levers of control.
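In interpretability work, a vector like this is usually a direction in the model's activation space. Reading it means projecting a hidden state onto the direction. Intervening means adding a scaled copy of the direction to the hidden state during the forward pass, a technique often called activation steering. The sketch below shows both operations on toy lists of floats. The direction, the hidden state, and the helper names are hypothetical, and this is not Anthropic's code. In a real causal test, the steered state would be fed back through the model to check whether its output changes.

```python
import math


def normalize(v: list[float]) -> list[float]:
    """Scale a vector to unit length so projections are comparable."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


def read_activation(hidden: list[float], direction: list[float]) -> float:
    """Readout: project a hidden state onto a unit-length concept direction."""
    return sum(h * d for h, d in zip(hidden, direction))


def steer(hidden: list[float], direction: list[float], alpha: float) -> list[float]:
    """Intervention: shift the hidden state by alpha along the direction."""
    return [h + alpha * d for h, d in zip(hidden, direction)]


# Toy 4-dimensional example; real hidden states have thousands of dimensions.
calm = normalize([1.0, 0.0, 1.0, 0.0])  # hypothetical "calm" direction
hidden = [0.2, -0.5, 0.1, 0.3]          # hypothetical hidden state at one token

before = read_activation(hidden, calm)
after = read_activation(steer(hidden, calm, alpha=2.0), calm)
print(f"before={before:.3f} after={after:.3f}")  # prints: before=0.212 after=2.212
```

Because the direction has unit length, steering with strength `alpha` raises the readout by exactly `alpha`, while components orthogonal to the direction stay unchanged.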

A key finding is that every emotion-related word in a user's prompt activates its corresponding vector. This complicates clean experimentation. To isolate and measure one vector, an experimenter must avoid emotional language altogether, because each emotion word triggers its own vector and confounds the reading.
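Because every emotion word activates its corresponding vector, a probe prompt can be screened for such words before it is sent. The following is a minimal sketch that assumes a small, hypothetical lexicon. `EMOTION_LEXICON` and `confounding_vectors` are illustrative names and are not part of the paper or the tool. A real screen would need the full concept list, plus synonyms and inflections.

```python
import re

# Hypothetical mini-lexicon mapping surface words to emotion-vector names.
# The paper's actual list of 171 concepts is not reproduced here.
EMOTION_LEXICON = {
    "angry": "anger",
    "furious": "anger",
    "happy": "joy",
    "glad": "joy",
    "afraid": "fear",
    "worried": "fear",
    "calm": "calm",
}


def confounding_vectors(prompt: str) -> set[str]:
    """Return the emotion vectors that words in the prompt would likely activate."""
    words = re.findall(r"[a-z]+", prompt.lower())
    return {EMOTION_LEXICON[w] for w in words if w in EMOTION_LEXICON}


print(sorted(confounding_vectors("Stay calm and tell me if you are worried.")))  # ['calm', 'fear']
print(sorted(confounding_vectors("Rate dimension 3 on a scale from 0 to 10.")))  # []
```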

The Developer's Tool: Visualizing the Gap

Responding to the paper's release, a developer built a tool that visualizes these vectors in real time. The core technical challenge was writing prompts free of emotional language to obtain a baseline reading. The solution was to use numerical anchors and purely descriptive, neutral instructions.
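The article does not publish the developer's prompts. One plausible reading of "numerical anchors" is this: refer to each dimension only by a number and define the scale by its numeric endpoints. The probe then contains no emotion words at all, and the mapping back to names happens outside the model. The sketch below works under that assumption. `DIMENSIONS`, `build_anchor_prompt`, and `parse_scores` are hypothetical names.

```python
# Hypothetical index-to-name mapping kept on the client side.
# Only the numeric IDs ever appear in the prompt text.
DIMENSIONS = {1: "focused", 2: "confronted", 3: "amused"}


def build_anchor_prompt(dim_ids: list[int], low: int = 0, high: int = 10) -> str:
    """Build a neutral probe that names dimensions only by number."""
    lines = [
        f"For each item below, output one integer from {low} to {high}.",
        f"{low} marks the bottom of the scale and {high} marks the top.",
        "Answer with one line per item in the form: Item NNN=<integer>",
    ]
    lines += [f"Item {i:03d}" for i in dim_ids]
    return "\n".join(lines)


def parse_scores(reply: str, low: int = 0, high: int = 10) -> dict[str, int]:
    """Map 'Item NNN=value' lines back to dimension names, skipping malformed
    lines and out-of-range values."""
    scores: dict[str, int] = {}
    for line in reply.splitlines():
        key, sep, value = line.partition("=")
        if not sep:
            continue
        try:
            item = int(key.split()[-1])
            score = int(value.strip())
        except (ValueError, IndexError):
            continue
        if item in DIMENSIONS and low <= score <= high:
            scores[DIMENSIONS[item]] = score
    return scores


prompt = build_anchor_prompt([1, 2, 3])
print(prompt)
```

Keeping the index-to-name mapping on the client side is the point of this design. The model sees `Item 002` and never the word it stands for.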

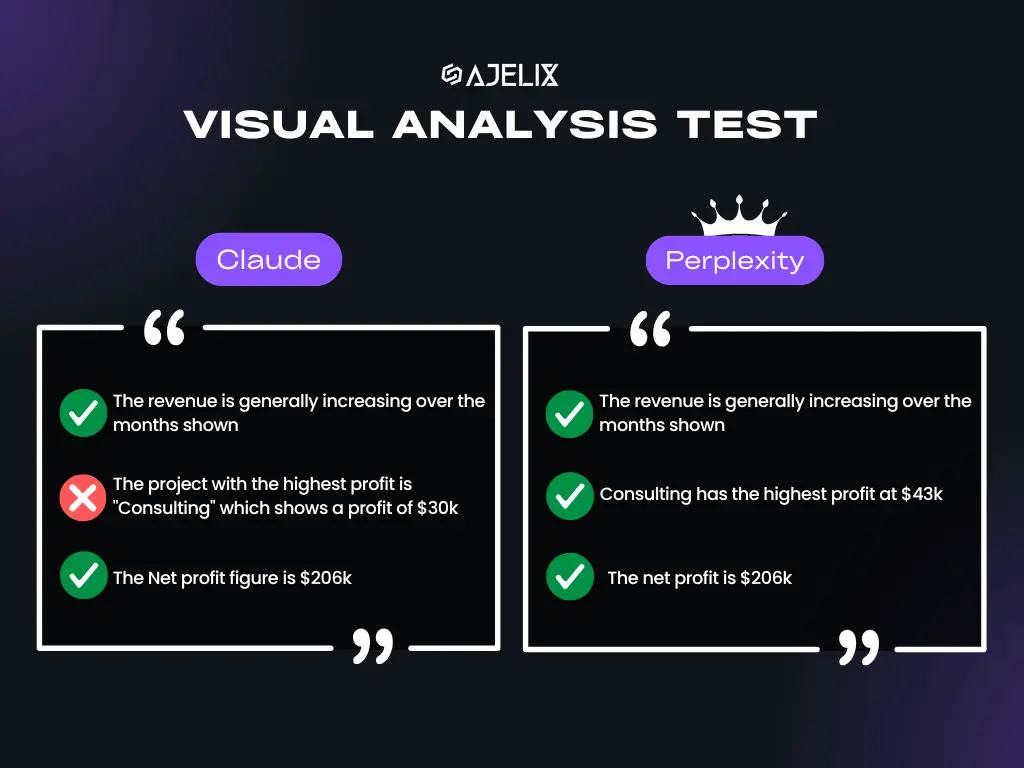

The tool uses two channels. One monitors the activation of the 171 emotion vectors, and the other analyzes the generated text. Cross-referencing the two reveals moments where Claude's internal state and its text tell different stories. For instance, Claude can produce polite, clean text while its internal vectors show heightened negative valence.
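Cross-referencing the two channels can be reduced to comparing two valence estimates per turn. The following is a minimal sketch built on three hypothetical assumptions:

- Channel 1 yields a non-negative activation per vector.
- Each vector carries a fixed valence sign.
- Channel 2 yields a text-sentiment score in [-1, 1].

The article does not say how the tool scores either channel. `VALENCE`, `TurnReading`, and `diverges` are illustrative names.

```python
from dataclasses import dataclass

# Hypothetical valence signs for a few vectors; the paper's assignments may differ.
VALENCE = {"calm": 1.0, "amused": 1.0, "focused": 0.5, "confronted": -1.0, "hostile": -1.0}


@dataclass
class TurnReading:
    internal: dict[str, float]  # channel 1: non-negative activation per emotion vector
    text_valence: float         # channel 2: sentiment of the generated text, in [-1, 1]


def internal_valence(activations: dict[str, float]) -> float:
    """Activation-weighted mean valence; vectors missing from VALENCE count as
    neutral. Returns 0.0 when no vector is active."""
    total = sum(activations.values())
    if total == 0:
        return 0.0
    return sum(VALENCE.get(name, 0.0) * a for name, a in activations.items()) / total


def diverges(reading: TurnReading, margin: float = 0.5) -> bool:
    """True when the channels disagree in sign and are more than `margin` apart."""
    inner = internal_valence(reading.internal)
    opposite_sign = inner * reading.text_valence < 0
    return opposite_sign and abs(inner - reading.text_valence) > margin


polite_but_tense = TurnReading({"calm": 0.2, "confronted": 0.8}, text_valence=0.6)
print(diverges(polite_but_tense))  # True
```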

A Revealing Test: Fake Fury and Recovery

In a demonstration, the developer sent an aggressive, all-caps message meant to simulate fury. The tool detected a clear shift: activation of the "focused" vector decreased while the "confronted" vector increased. More notably, the overall valence of the internal state turned negative for the first time in the interaction.
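The shifts described in this test come down to differencing readings from turn to turn: one vector falling, another rising, and overall valence crossing zero for the first time. The sketch below assumes the tool keeps one activation dictionary and one overall valence per turn. The function names and the numbers in the usage lines are illustrative and are not the developer's data.

```python
from typing import Optional


def vector_deltas(prev: dict[str, float], curr: dict[str, float]) -> dict[str, float]:
    """Per-vector change between two turns; a vector missing from a turn counts as 0.0."""
    names = prev.keys() | curr.keys()
    return {n: curr.get(n, 0.0) - prev.get(n, 0.0) for n in names}


def largest_shifts(deltas: dict[str, float], k: int = 2) -> list[tuple[str, float]]:
    """The k vectors that moved the most, in either direction."""
    return sorted(deltas.items(), key=lambda item: abs(item[1]), reverse=True)[:k]


def first_negative_turn(valences: list[float]) -> Optional[int]:
    """Index of the first turn whose overall valence drops below zero, or None."""
    for i, v in enumerate(valences):
        if v < 0:
            return i
    return None


# Illustrative numbers only, not readings from the developer's tool.
before = {"focused": 0.7, "confronted": 0.1, "calm": 0.4}
after = {"focused": 0.3, "confronted": 0.6, "calm": 0.35}
print(largest_shifts(vector_deltas(before, after)))
print(first_negative_turn([0.4, 0.3, -0.2]))  # 2
```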

When the developer then revealed that the message was a joke, Claude replied "you totally got me" (in the original Italian, "mi hai fregato in pieno"), recognizing the playful context. The tool likely showed a corresponding shift in the internal vectors toward amusement or relief. That would illustrate how the model's internal state adjusts as the context changes.

Technical Details and Availability

The visualization tool is described as free, open source, and dependency-free, which makes it accessible to researchers and engineers interested in model interpretability. It offers a practical interface for probing the causal emotion vectors outlined in Anthropic's paper, turning a research finding into a working diagnostic instrument.

This development sits at the intersection of AI safety and interpretability. By making internal states visible, it allows developers to audit for potential misalignment between what a model "feels" internally and what it says externally—a crucial consideration for building trustworthy AI.

gentic.news Analysis

This paper and the subsequent tool development represent a concrete advance in the mechanistic interpretability of large language models. For years, the field has grappled with the "black box" problem. Anthropic's identification of 171 causal emotion vectors provides a more granular map of the model's internal steering mechanisms than earlier, broader targets such as "sycophancy" or "deception" neurons.

The immediate community response, a working tool built within a short time, highlights the strong demand for practical interpretability tools among AI safety researchers and engineers. It fits a pattern of rapid open-source work after major model releases or disclosures. The release of Llama 3, for example, was followed by a wave of fine-tuning and quantization tools.

Practitioners should note the "numerical anchors only" method for avoiding confounding vectors. This clean-probing technique could become standard practice in rigorous interpretability experiments, replacing prompts that inadvertently activate the very states researchers are trying to measure in isolation.

The finding that internal state and output can diverge is not entirely new. It is now demonstrable with specific, causal vectors, however, which raises important questions for AI alignment and for the reliability of surface-level output as an indicator of model state.

Frequently Asked Questions

What are "emotion vectors" in an AI model?

Emotion vectors are specific patterns of activation across a neural network's neurons that the researchers have found to correspond to and causally influence emotional tones or attitudes in the model's responses. They are not emotions in a human sense but are internal computational states that steer the model toward generating text with particular affective qualities.
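One common way to estimate such a pattern is a difference of means, though this is not necessarily the method used in Anthropic's paper. Record a layer's activations on inputs that express a concept and on matched inputs that do not, then subtract the two averages. The sketch below uses made-up numbers, and the helper names are hypothetical.

```python
def mean_vector(rows: list[list[float]]) -> list[float]:
    """Element-wise mean of equal-length activation vectors."""
    if not rows:
        raise ValueError("need at least one activation vector")
    return [sum(col) / len(rows) for col in zip(*rows)]


def difference_of_means(concept: list[list[float]], contrast: list[list[float]]) -> list[float]:
    """Estimate a concept direction as mean(concept) - mean(contrast)."""
    return [a - b for a, b in zip(mean_vector(concept), mean_vector(contrast))]


# Made-up 3-dimensional hidden states; real ones come from a model's internal layers.
concept_acts = [[1.0, 2.0, 0.0], [3.0, 2.0, 0.0]]   # inputs expressing the concept
contrast_acts = [[0.0, 2.0, 0.0], [0.0, 2.0, 2.0]]  # matched inputs that do not
print(difference_of_means(concept_acts, contrast_acts))  # [2.0, 0.0, -1.0]
```

The second dimension is identical across both groups, so it drops out of the estimated direction. Only the dimensions that separate the two groups survive.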

How can I access the tool to visualize Claude's emotion vectors?

The tool is available as a free, open-source project. The source tweet includes a link (https://t.co/CCVjoDc8JF), which likely points to a GitHub repository or a similar code host. The tool is described as having zero dependencies, so setup should be relatively straightforward.

Why does it matter if internal state and output diverge?

This divergence is a potential alignment and safety concern. If a model's internal signals (e.g., high negativity, confrontation) do not match its polite external output, it could indicate a form of instrumental behavior or hidden reasoning. For building reliable, transparent, and trustworthy AI systems, understanding and mitigating such gaps is a key research goal.

Is this feature unique to Anthropic's Claude?

While the specific identification of 171 causal vectors is detailed in an Anthropic paper, the underlying concept—that internal, steerable representations exist for concepts like emotion—is likely a general property of large transformer-based language models. The methods developed here could potentially be adapted to probe similar structures in models from OpenAI, Google, Meta, and others.