Anthropic has launched Code Review in Claude Code, extending the tool beyond basic code generation. The company describes the feature as an autonomous system that analyzes AI-generated code, flags logic errors, and helps enterprise developers manage the growing volume of code produced with AI. The launch, covered by MarkTechPost and TechCrunch AI, signals a strategic shift in the competitive landscape of AI for software development.

From Autocomplete to Autonomous Analyst

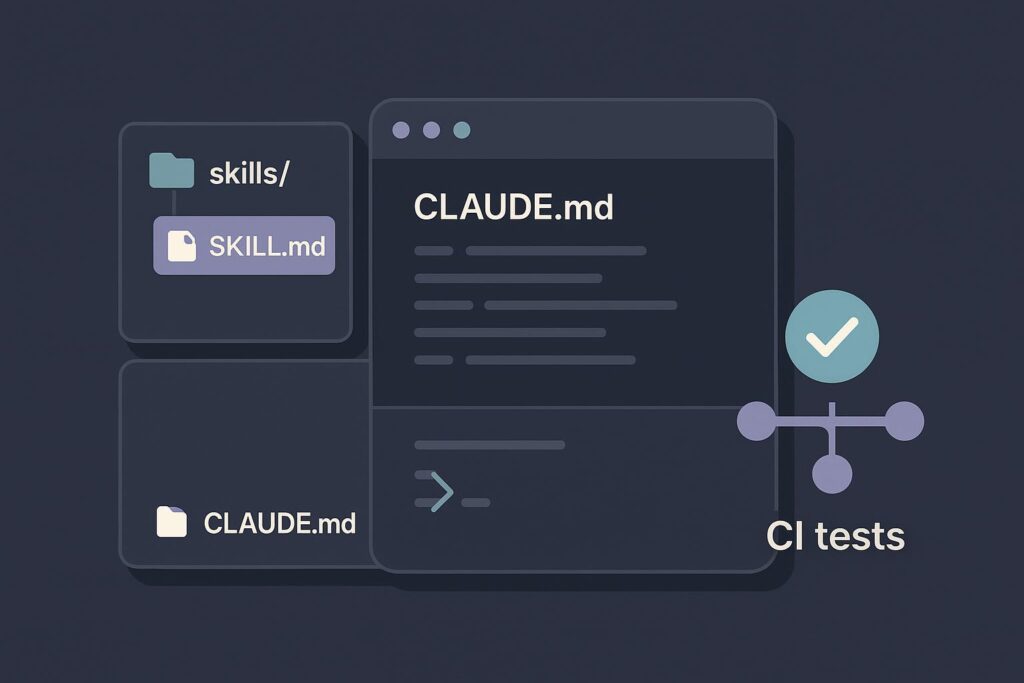

The launch represents what MarkTechPost characterizes as a move past the era of "glorified autocomplete." Instead of simply suggesting the next line of code, Claude Code's new Code Review feature employs what Anthropic calls "advanced agentic multi-step reasoning loops." The phrasing suggests a system that works through a review in several reasoning steps, possibly with multiple AI agents acting in concert, to approximate a more thorough, human-like review process. The goal is to create an AI that "actually understands why your Kubernetes cluster is screaming at 3:00 AM," a nod to the complex runtime debugging and security analysis that define modern DevOps.

For enterprise teams increasingly relying on AI to accelerate development, this addresses a critical bottleneck: quality assurance. As AI generates code at unprecedented speeds, human review becomes the limiting factor. Claude Code's automated review aims to scale security and logic analysis in lockstep with AI-powered generation.

The Technical Shift: Multi-Agent Reasoning

While technical details from the launch are limited, the framing around "multi-agent" systems and "reasoning loops" is telling. Traditional static analysis tools check for syntax errors and known vulnerability patterns. An agentic system, however, implies a capacity for goal-directed behavior. One agent might trace data flow, another might evaluate architectural consistency, and a third could simulate potential attack vectors—all coordinating to produce a synthesized assessment.
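Because the launch coverage gives no implementation details, the division of labor described above can only be illustrated with a toy sketch. The following Python example is not Anthropic's design: the three "agents" are hypothetical pattern-matching functions standing in for far more capable reviewers, and the names `Finding`, `AGENTS`, and `review` are assumptions made for illustration. It shows only the coordination idea, in which independent reviewers each emit findings and a coordinator merges them into one ranked assessment.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Finding:
    agent: str      # which hypothetical reviewer produced the finding
    line: int       # 1-based line number in the reviewed snippet
    severity: int   # 1 = low, 3 = high (illustrative scale)
    message: str


def data_flow_agent(lines: List[str]) -> List[Finding]:
    # Toy stand-in for data-flow tracing: flag input() passed straight to eval().
    return [Finding("data_flow", i, 3, "untrusted input reaches eval()")
            for i, text in enumerate(lines, start=1)
            if "eval(" in text and "input(" in text]


def architecture_agent(lines: List[str]) -> List[Finding]:
    # Toy stand-in for consistency checks: flag wildcard imports.
    return [Finding("architecture", i, 1, "wildcard import hides dependencies")
            for i, text in enumerate(lines, start=1)
            if text.strip().startswith("from ") and text.strip().endswith("import *")]


def attack_surface_agent(lines: List[str]) -> List[Finding]:
    # Toy stand-in for attack simulation: flag subprocess calls with shell=True.
    return [Finding("attack_surface", i, 2, "shell=True enables command injection")
            for i, text in enumerate(lines, start=1)
            if "shell=True" in text]


# Hypothetical registry of reviewers; a real system would coordinate model calls.
AGENTS: List[Callable[[List[str]], List[Finding]]] = [
    data_flow_agent,
    architecture_agent,
    attack_surface_agent,
]


def review(source: str) -> List[Finding]:
    """Run every agent and merge findings, most severe first, then by line."""
    lines = source.splitlines()
    findings = [f for agent in AGENTS for f in agent(lines)]
    return sorted(findings, key=lambda f: (-f.severity, f.line))
```

Calling `review` on a three-line snippet containing a wildcard import, an `eval(input())` call, and a `shell=True` subprocess call returns three findings ordered by severity: the data-flow issue first, then the attack-surface issue, then the architectural one.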

This approach aligns with a broader industry trend. According to the Gentic Knowledge Graph, AI agents crossed a critical reliability threshold in late 2025, fundamentally transforming programming capabilities. Anthropic is applying this agentic breakthrough directly to the software development lifecycle.

Context: Anthropic's Strategic Positioning

This product launch comes during a period of intense activity and competition for Anthropic. According to the Gentic Knowledge Graph:

- The company is projected to surpass OpenAI in annual recurring revenue by mid-2026, highlighting its rapid growth in the enterprise AI market.

- It recently announced a research preview of Auto Mode for Claude Code (March 12, 2026), suggesting a roadmap toward increasingly autonomous AI development tools.

- Concurrently, the company is engaged in significant legal and contractual challenges, having lost a major Department of Defense AI contract and filed related lawsuits in early March 2026.

The launch of Code Review can be seen as a competitive maneuver to solidify Anthropic's value proposition to enterprise clients: not just an AI model, but an integrated, secure, and reliable AI platform for the entire software pipeline. It leverages Anthropic's core research in Constitutional AI and advanced model capabilities to differentiate from competitors like OpenAI and Google.

Implications for Developers and Security

The immediate implication is a potential paradigm shift in developer workflow. The role of the developer could evolve from writing and reviewing code to curating and directing AI systems that handle both tasks. The "security research" automation mentioned by MarkTechPost is particularly significant. It suggests Claude Code could proactively hunt for novel vulnerabilities—a task typically reserved for specialized security engineers or penetration testers.

For enterprise security, this promises a more scalable defense. AI-generated code, while fast, can introduce unknown logical flaws. An AI reviewer trained on vast codebases and vulnerability databases could identify problematic patterns before deployment, effectively shifting security left in the development process.

Challenges and the Road Ahead

Despite the promise, key challenges remain. The trustworthiness of AI-generated reviews is paramount. A false sense of security could be more dangerous than no review at all. Enterprises will need transparent metrics on the system's accuracy, false-positive rates, and coverage.
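The metrics mentioned above can be made concrete with a small calculation. The sketch below assumes a team has a labeled evaluation set in which each AI review comment has been marked as a true or false alarm and each known defect as caught or missed. The function name `review_metrics` is hypothetical, and treating recall as a proxy for coverage is an assumption, not a definition taken from the launch coverage.

```python
from typing import Dict


def review_metrics(tp: int, fp: int, fn: int, tn: int) -> Dict[str, float]:
    """Summarize reviewer quality from confusion-matrix counts.

    tp: real defects the reviewer flagged
    fp: clean code the reviewer flagged anyway
    fn: real defects the reviewer missed
    tn: clean code the reviewer correctly left alone
    """
    def ratio(num: int, den: int) -> float:
        # Return 0.0 instead of raising when a category is empty.
        return num / den if den else 0.0

    return {
        "precision": ratio(tp, tp + fp),            # share of flags that were real
        "recall": ratio(tp, tp + fn),               # share of defects caught (coverage proxy)
        "false_positive_rate": ratio(fp, fp + tn),  # share of clean code wrongly flagged
        "accuracy": ratio(tp + tn, tp + fp + fn + tn),
    }
```

For example, `review_metrics(tp=8, fp=2, fn=2, tn=88)` gives a precision and recall of 0.8 and an accuracy of 0.96. The high accuracy mostly reflects the large share of clean code, which is why accuracy alone can create the false sense of security described above.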

The launch also sharpens the ongoing debate about AI autonomy. How much decision-making should be delegated to an agentic system reviewing code? Establishing guardrails and human-in-the-loop protocols will be critical for adoption in regulated industries.
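One way to picture a human-in-the-loop protocol is as an explicit gating policy that decides when an automated review is enough on its own. The sketch below is purely illustrative: the `Decision` enum, the `gate` function, the severity scale, and the `block_at` threshold are all assumptions, and nothing here describes a documented Claude Code setting.

```python
from enum import Enum


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    BLOCK = "block"


def gate(max_severity: int, touches_regulated_code: bool, *, block_at: int = 3) -> Decision:
    """Decide what happens to a change after automated review.

    max_severity: highest severity the AI reviewer reported (0 = no findings).
    touches_regulated_code: True if the change affects code under compliance rules.
    block_at: hypothetical severity at which the change is blocked outright.
    """
    if max_severity >= block_at:
        return Decision.BLOCK
    # Any finding, or any change to regulated code, goes to a person.
    if touches_regulated_code or max_severity > 0:
        return Decision.HUMAN_REVIEW
    return Decision.AUTO_APPROVE
```

Under this policy, a clean change outside regulated code is approved automatically, while a clean change inside regulated code still goes to a human reviewer. The point of the design is that the AI reviewer can escalate a change but cannot waive human review where policy requires it.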

Anthropic's launch is less about a single feature and more about a vision: a future where AI systems are responsible for the full lifecycle of code creation and quality assurance. As AI-generated code becomes ubiquitous, the systems that audit and secure that code will help determine the safety and stability of our digital infrastructure. Claude Code's Code Review is a major step toward making that vision an operational reality.

Source: Coverage synthesized from MarkTechPost and TechCrunch AI, with contextual background from the Gentic Knowledge Graph.