What Happened

A research team has introduced a new approach to continual fine-tuning that targets a fundamental challenge in sequential learning: adapting a pre-trained model to new tasks while preserving performance on earlier tasks whose data is no longer available. The paper, posted to arXiv in January 2026, presents a method designed to combine the strengths of the two existing families of approaches while avoiding their main limitations.

Continual fine-tuning is particularly relevant as organizations deploy foundation models that must adapt to evolving requirements without catastrophic forgetting, that is, without losing previously learned capabilities when trained on new data. Existing approaches fall into two categories:

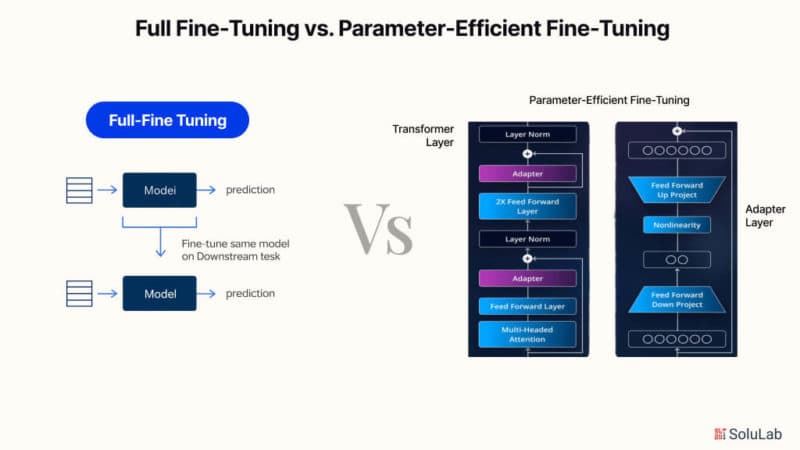

Input-adaptation methods rely on retrieving the most relevant prompts at test time but require continuously learning a retrieval function that is prone to forgetting.

Parameter-adaptation methods use a fixed input embedding function to enable retrieval-free prediction and avoid forgetting, but sacrifice representation adaptability.

The new method bridges the two: it keeps the adaptive use of input embeddings at test time, but performs retrieval without a learned retrieval function, so there are no retrieval parameters that can be forgotten.

Technical Details

The core innovation lies in two complementary components:

1. Adaptive Module Composition Strategy

This component learns informative task-specific updates that preserve and complement prior knowledge. Rather than overwriting existing parameters or adding separate modules for each task, the approach composes updates in a way that maintains backward compatibility while enabling forward adaptation. This addresses the representation rigidity problem of traditional parameter-adaptation methods.
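The idea of composing updates rather than overwriting parameters can be illustrated with a toy example. The sketch below is not the paper's composition strategy, which this summary does not spell out. It assumes the simplest possible rule: the pre-trained weights stay frozen and each task contributes one additive delta that is never modified afterwards. The class name `ComposedLinear`, its methods, and the task names are hypothetical.

```python
class ComposedLinear:
    """Toy linear layer: frozen base weights plus one additive delta per task.

    Hypothetical illustration only; the paper's composition rule may differ.
    """

    def __init__(self, base_weight):
        self.base_weight = [row[:] for row in base_weight]  # pre-trained, never modified
        self.task_deltas = {}  # task_id -> delta matrix, kept in arrival order

    def add_task(self, task_id, delta):
        # A new task contributes its own delta; earlier deltas are left untouched.
        if task_id in self.task_deltas:
            raise ValueError(f"task {task_id!r} already registered")
        self.task_deltas[task_id] = [row[:] for row in delta]

    def composed_weight(self, up_to=None):
        # Sum the base weights and the deltas of every task up to and
        # including `up_to` (all tasks when `up_to` is None).
        weight = [row[:] for row in self.base_weight]
        for task_id, delta in self.task_deltas.items():
            for i, row in enumerate(delta):
                for j, value in enumerate(row):
                    weight[i][j] += value
            if task_id == up_to:
                break
        return weight

    def forward(self, x, up_to=None):
        # Plain matrix-vector product with the composed weights.
        return [sum(w * xi for w, xi in zip(row, x))
                for row in self.composed_weight(up_to)]


layer = ComposedLinear([[1.0, 0.0], [0.0, 1.0]])
layer.add_task("handbags", [[0.5, 0.0], [0.0, 0.0]])
layer.add_task("watches", [[0.0, 0.0], [0.0, -0.25]])

out_all = layer.forward([2.0, 4.0])                      # uses both deltas
out_first = layer.forward([2.0, 4.0], up_to="handbags")  # state after the first task only
print(out_all, out_first)
```

Because earlier deltas are stored and never changed, the model's state after any earlier task can be reproduced exactly, which is one simple way to picture backward compatibility alongside forward adaptation.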

2. Clustering-Based Retrieval Mechanism

The second component captures distinct representation signatures for each task, enabling adaptive representation use at test time. What makes this approach theoretically grounded is the derivation of task-retrieval error bounds for this clustering-based, parameter-free paradigm.

The researchers provide theoretical guarantees linking low retrieval error to structural properties of task-specific representation clusters. This reveals a crucial insight: well-organized clustering structure enables reliable retrieval without requiring additional learned parameters for the retrieval function itself.
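To make "parameter-free retrieval" concrete, here is a minimal sketch that assumes the simplest clustering scheme: one centroid (mean embedding) per task and nearest-centroid lookup at test time. The paper's clustering procedure may well be more elaborate; `CentroidTaskRetriever`, the helper `sample`, the task names, and the synthetic embeddings are all hypothetical. The point is that nothing is trained for retrieval: the centroids are summary statistics of each task's embeddings.

```python
import math
import random


def centroid(vectors):
    # Component-wise mean of a list of equal-length vectors.
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


class CentroidTaskRetriever:
    """Stores one mean embedding per task; retrieval has no learned parameters."""

    def __init__(self):
        self.centroids = {}  # task_id -> centroid vector

    def add_task(self, task_id, embeddings):
        # Computed once from the task's own embeddings; earlier centroids are untouched.
        self.centroids[task_id] = centroid(embeddings)

    def retrieve(self, embedding):
        # Return the task whose centroid is nearest in Euclidean distance.
        return min(self.centroids,
                   key=lambda t: math.dist(self.centroids[t], embedding))


rng = random.Random(0)


def sample(center, n=50, noise=0.1):
    # Synthetic embeddings scattered tightly around a task-specific center.
    return [[c + rng.gauss(0.0, noise) for c in center] for _ in range(n)]


retriever = CentroidTaskRetriever()
retriever.add_task("handbags", sample([0.0, 0.0, 0.0]))
retriever.add_task("watches", sample([5.0, 5.0, 5.0]))

predicted = retriever.retrieve([4.8, 5.1, 5.0])
print(predicted)
```

In this toy setup the two clusters are tight and far apart, so the lookup is reliable. When clusters overlap, nearest-centroid retrieval starts to confuse tasks, which is the kind of structural dependence the error bounds are described as capturing.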

Theoretical Foundation

By deriving provable error bounds, the researchers establish conditions under which the retrieval mechanism works reliably. This goes beyond empirical validation: the guarantees are mathematical and hold under specific structural conditions on the task clusters.
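The paper's actual bounds are not reproduced here. As an illustration of how cluster structure can imply reliable retrieval, consider a textbook-style sufficient condition under assumed notation: each task $t$ has a centroid $c_t$, every embedding $x$ from task $t$ satisfies $\|x - c_t\| \le r$, and any two distinct centroids are at least $\Delta$ apart. Then for any other task $s \ne t$, the triangle inequality gives

$$
\|x - c_s\| \;\ge\; \|c_t - c_s\| - \|x - c_t\| \;\ge\; \Delta - r \;>\; r \;\ge\; \|x - c_t\| \qquad \text{whenever } \Delta > 2r .
$$

Under these assumptions the nearest centroid is always the correct one, so nearest-centroid retrieval makes no errors. The symbols $c_t$, $r$, and $\Delta$ are introduced only for this sketch; the paper's bounds presumably cover the more realistic case where clusters are not perfectly separated.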

According to the paper, the method improves both retrieval and predictive performance under large shifts in task semantics, which is exactly the scenario where traditional methods struggle most.

Retail & Luxury Implications

While the paper doesn't specifically address retail applications, the continual fine-tuning problem is highly relevant to luxury and retail AI systems that must adapt to:

Evolving Consumer Preferences: Fashion trends, seasonal collections, and shifting consumer values require models that can adapt without forgetting previous patterns.

Multi-Brand Portfolios: Large luxury conglomerates managing multiple brands need models that can learn brand-specific nuances while maintaining corporate-level understanding.

Regional Adaptation: Models trained on global data need to adapt to regional preferences (European vs. Asian luxury markets) without losing their core capabilities.

Product Lifecycle Management: From launch to end-of-life, product characteristics and consumer perceptions evolve, requiring adaptive models.

The parameter-free aspect is particularly valuable for production systems where adding retrieval parameters for each new task would create operational complexity and potential points of failure.

Potential Application Scenarios

Personalization Engines: A recommendation system that learns new customer segments or product categories without degrading performance on existing ones.

Visual Search Systems: Computer vision models that adapt to new product categories (from handbags to watches) while maintaining accuracy on previously learned categories.

Customer Service AI: Chatbots that learn to handle new types of inquiries (sustainability questions, authentication requests) without forgetting how to address standard service requests.

Trend Forecasting Models: Systems that incorporate new data sources or prediction tasks while preserving historical forecasting accuracy.

The clustering-based approach aligns well with retail's natural categorization structures: products naturally cluster by category, price point, brand, and customer segment.

Implementation Considerations

For retail AI teams considering this approach:

Data Requirements: The method assumes task boundaries are known during training, which aligns with retail's typically well-defined task structures (seasonal campaigns, product launches).

Computational Overhead: The paper doesn't specify computational requirements, but clustering-based approaches typically add inference-time overhead that must be evaluated against latency requirements.

Integration Complexity: Replacing existing fine-tuning pipelines would require significant re-engineering, suggesting pilot projects on specific use cases first.

Maturity Level: As an arXiv preprint from 2026, this represents cutting-edge research rather than production-ready code. Retail teams should monitor for implementations in major frameworks before considering adoption.
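On the computational overhead point above, a team could start with a rough measurement before committing to a pilot. The sketch below assumes retrieval is a nearest-centroid lookup over one centroid per task, which is an assumption rather than the paper's stated procedure, and times it in pure Python on random vectors. The function name `mean_lookup_seconds` and all sizes are hypothetical. Absolute numbers from this script will not match an optimized production stack; it only shows how lookup cost grows with the number of tasks and the embedding dimension.

```python
import math
import random
import time


def mean_lookup_seconds(num_tasks, dim, num_queries=100, seed=0):
    """Average wall-clock time of one nearest-centroid lookup (pure Python)."""
    rng = random.Random(seed)
    centroids = [[rng.random() for _ in range(dim)] for _ in range(num_tasks)]
    queries = [[rng.random() for _ in range(dim)] for _ in range(num_queries)]

    start = time.perf_counter()
    for query in queries:
        # One lookup compares the query against every stored centroid.
        min(range(num_tasks), key=lambda t: math.dist(centroids[t], query))
    return (time.perf_counter() - start) / num_queries


for num_tasks in (10, 100):
    seconds = mean_lookup_seconds(num_tasks, dim=64)
    print(f"{num_tasks:>4} tasks: {seconds * 1e6:.1f} microseconds per lookup")
```

Each lookup touches every centroid once, so the cost scales roughly linearly with the number of tasks times the embedding dimension. That figure can then be compared against the latency budget of the serving path.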

The Bigger Picture

This research addresses a fundamental tension in enterprise AI: the need for models to be both stable (not forgetting) and adaptable (learning new things). For luxury retailers operating in fast-changing markets with long brand histories, this balance is particularly crucial.

The theoretical guarantees around retrieval accuracy based on cluster structure provide a principled foundation for what has often been treated as an empirical problem. This moves continual learning from "art" toward "engineering", a necessary evolution for enterprise-scale deployment.

While immediate implementation may be premature for most retail organizations, the conceptual framework, and in particular the insight that cluster structure enables reliable retrieval, should inform how teams structure their training data and model architectures for long-term adaptability.