What Happened

A new research paper titled "DIET: Learning to Distill Dataset Continually for Recommender Systems" was posted to arXiv on March 26, 2026. The work addresses a fundamental bottleneck in modern recommendation system development: the prohibitive cost of repeatedly retraining models on massive, continuously growing streams of user behavioral data.

The authors formulate the problem as streaming dataset distillation for recommender systems and introduce the DIET framework. Unlike traditional dataset distillation methods that create static compressed datasets, DIET maintains a compact evolving training memory that updates alongside streaming data while preserving the signals most critical for model training.

Technical Details

Modern deep recommender systems operate under a continual learning paradigm. User interactions generate massive behavioral logs that grow continuously. For large platforms, retraining models from scratch on the full historical dataset for every architecture tweak, hyperparameter search, or model iteration is computationally unsustainable. This severely slows down the experimentation and development cycle.

DIET proposes a solution through continual distillation. The framework operates through several key mechanisms:

- Principled Initialization: The distilled dataset is initialized by identifying and selecting the most influential samples from the original data stream.

- Stage-Wise Updates: As new streaming data arrives, the distilled dataset is updated in stages so that it stays aligned with long-term training dynamics instead of remaining fixed.

- Influence-Aware Memory Addressing: Updates to the distilled memory are guided by a mechanism that identifies which stored samples should be replaced or modified based on their current influence on model training.

- Bi-Level Optimization: The framework is formulated as a bi-level optimization problem where the outer loop updates the distilled dataset, and the inner loop trains the model on that distilled set.
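In generic dataset-distillation notation, the bi-level structure in the last bullet is commonly written as shown here. The symbols are assumptions introduced for illustration ($\mathcal{S}$ for the distilled set, $\mathcal{D}$ for the full data, $\theta$ for model parameters, $\mathcal{L}$ for the training loss, $B$ for the memory budget); the paper's exact objective may differ.

$$
\min_{\mathcal{S},\; |\mathcal{S}| \le B} \; \mathcal{L}\big(\theta^{*}(\mathcal{S});\, \mathcal{D}\big)
\quad \text{subject to} \quad
\theta^{*}(\mathcal{S}) = \arg\min_{\theta} \; \mathcal{L}(\theta;\, \mathcal{S})
$$

The inner problem trains a model on the distilled set alone; the outer problem adjusts the distilled set so that the resulting model performs well on the real data. In a streaming setting, the outer problem would be revisited at each stage as new data arrives.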

The core innovation is treating the distilled data as an evolving entity rather than a fixed artifact. This allows the compressed representation to track distribution shifts in user behavior over time, a critical requirement for real-world recommendation systems where user preferences and item catalogs are constantly changing.
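To make the idea of an evolving, fixed-budget memory concrete, here is a minimal Python sketch. It is not the paper's algorithm: it swaps in simple sample selection for distillation, and the `Interaction` type, `update_memory` function, and `toy_influence` scoring rule are all hypothetical names invented for this illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass(frozen=True)
class Interaction:
    user_id: int
    item_id: int
    label: int  # 1 = positive feedback (e.g. a click), 0 = negative


def update_memory(
    memory: Sequence[Interaction],
    new_batch: Sequence[Interaction],
    influence: Callable[[Interaction], float],
    budget: int,
) -> List[Interaction]:
    """Return the `budget` highest-influence samples from memory plus the new batch."""
    # Re-score stored samples together with new ones, so that entries whose
    # influence has decayed can be evicted in favour of fresher data.
    candidates = list(memory) + list(new_batch)
    ranked = sorted(candidates, key=influence, reverse=True)
    return ranked[:budget]


def toy_influence(x: Interaction) -> float:
    # Hypothetical scoring rule for the demo only: positives outrank negatives,
    # and higher item ids (standing in for newer items) break ties.
    return x.label + 0.01 * x.item_id


memory = [Interaction(1, 10, 1), Interaction(2, 11, 0)]
stage_batch = [Interaction(3, 12, 1), Interaction(4, 13, 0)]
memory = update_memory(memory, stage_batch, toy_influence, budget=2)
print([x.item_id for x in memory])  # prints [12, 10]
```

The memory size stays constant across stages while its contents change, which is the property that lets a compact set follow shifting user behavior. A real influence estimate would depend on the model being trained, not on a fixed rule like the one above.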

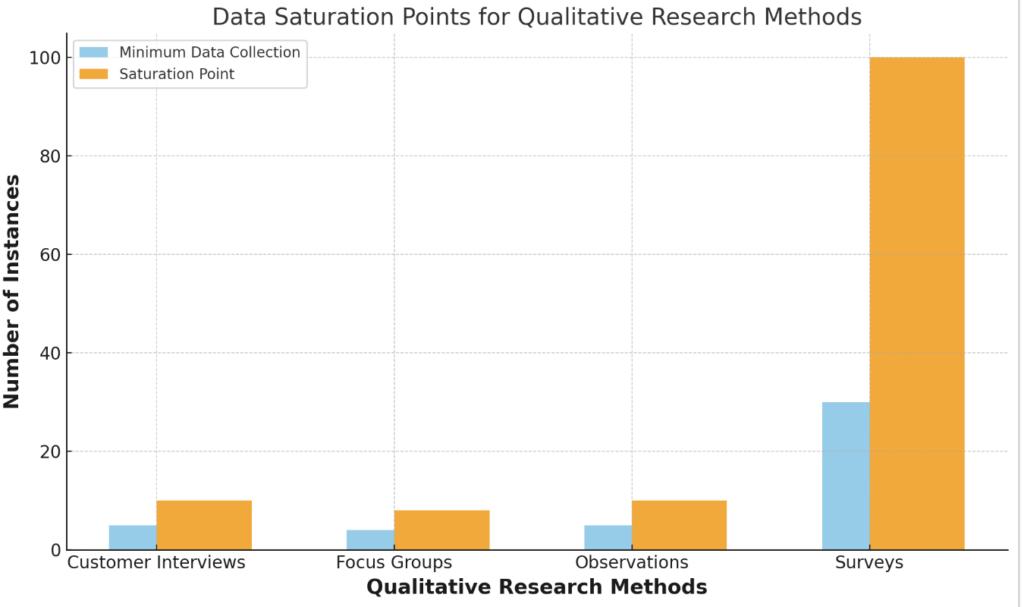

Experiments on large-scale recommendation benchmarks report the following results:

- DIET compresses training data to 1-2% of the original size.

- Performance trends (how different architectures or hyperparameters compare) remain consistent with full-data training.

- Model iteration cost is reduced by up to 60×.

- The distilled datasets show generalization across different model architectures, making them reusable foundations for development.
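One way to check the "consistent performance trends" claim on your own data is to compare how candidate models rank under full-data and distilled-data training. The sketch below computes a Kendall-style rank agreement in plain Python. The function name and the metric values are made up for illustration and are not results from the paper.

```python
from itertools import combinations
from typing import Dict


def rank_agreement(full: Dict[str, float], distilled: Dict[str, float]) -> float:
    """Kendall-style rank correlation between two sets of model scores.

    Returns 1.0 when both settings order every pair of models the same way,
    -1.0 when every pair is flipped. Tied pairs are ignored.
    """
    concordant = discordant = 0
    for a, b in combinations(sorted(full), 2):
        sign = (full[a] - full[b]) * (distilled[a] - distilled[b])
        if sign > 0:
            concordant += 1
        elif sign < 0:
            discordant += 1
    total = concordant + discordant
    return (concordant - discordant) / total if total else 0.0


# Hypothetical validation scores for three candidate architectures.
full_data = {"model_a": 0.71, "model_b": 0.69, "model_c": 0.74}
distilled_data = {"model_a": 0.66, "model_b": 0.65, "model_c": 0.70}

print(rank_agreement(full_data, distilled_data))  # prints 1.0
```

In this toy case the distilled scores are lower in absolute terms but preserve the ordering, so the distilled set would still pick the same winner. An agreement well below 1.0 would suggest the distilled set is not a safe proxy for model selection.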

Retail & Luxury Implications

For retail and luxury companies operating sophisticated recommendation systems (whether for e-commerce personalization, content discovery, or next-best-offer engines), the DIET framework addresses several critical pain points:

Accelerated Experimentation Cycles: The ability to test new model architectures, embedding strategies, or ranking algorithms on a distilled dataset that faithfully represents full-data behavior could dramatically speed up innovation. Instead of waiting days or weeks for full retraining, data scientists could iterate in hours.

Cost-Efficient Model Development: The reported reduction in iteration cost of up to 60× could translate into lower cloud compute bills and more efficient use of ML engineering resources. For companies running A/B tests on multiple recommendation variants simultaneously, the savings could be substantial.
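A quick back-of-the-envelope calculation shows how such a speedup plays out across an experiment campaign. The sketch assumes that building and maintaining the distilled set carries its own one-off overhead, which the source does not quantify; every number below is hypothetical.

```python
from typing import Tuple


def campaign_cost(
    full_run_cost: float,
    n_runs: int,
    speedup: float,
    distillation_overhead: float = 0.0,
) -> Tuple[float, float]:
    """Return (cost with full-data training, cost with distilled-data training)."""
    full_total = full_run_cost * n_runs
    # Each distilled run is `speedup` times cheaper, but the distilled set
    # itself has to be built and kept up to date (assumed overhead).
    distilled_total = distillation_overhead + full_total / speedup
    return full_total, distilled_total


# Hypothetical campaign: 30 runs at 120 GPU-hours each, best-case 60x speedup,
# and an assumed 200 GPU-hours spent on distillation.
full_total, distilled_total = campaign_cost(120.0, 30, 60.0, distillation_overhead=200.0)
print(full_total, distilled_total)  # prints 3600.0 260.0
```

Under these assumed figures the end-to-end saving is roughly 14× rather than 60×, because the overhead is spread over only 30 runs. The more experiments that reuse the same distilled set, the closer the effective saving gets to the per-run speedup.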

Historical Data Management: Luxury retailers often maintain years of customer interaction data. DIET's continual distillation approach offers a principled way to maintain a compact, representative snapshot of this evolving history without storing petabytes of raw logs.

Cross-Architecture Reusability: The fact that DIET's distilled datasets generalize across models means that once a high-quality distilled set is created for a particular time period or customer segment, it can be reused to benchmark multiple candidate algorithms, enabling more robust model selection.

However, important considerations remain:

- The paper presents academic benchmarks; real-world luxury retail data has unique characteristics (highly sparse interactions with luxury items, long consideration cycles, strong seasonality) that may challenge the distillation process.

- The framework adds complexity to the training pipeline through its bi-level optimization and update mechanisms.

- There's a trade-off between compression ratio and fidelity. Compressing to 1-2% of the original size is impressive, but the absolute performance of models trained on distilled data versus full data needs careful evaluation for production systems, where small percentage gains in recommendation accuracy translate to significant revenue.

For technical leaders, DIET represents a promising research direction in making recommender system development more agile and cost-effective, particularly valuable in environments where data volume is growing faster than compute budgets.