What Happened

A new technical guide has been published, offering a practitioner-focused walkthrough of fine-tuning OpenAI's recently released GPT-OSS 20B model. The guide specifically addresses the complexities of applying Low-Rank Adaptation (LoRA) to this model, which is built on a Mixture-of-Experts (MoE) architecture. The article's tagline, "Everything we learned the hard way so you don't have to," signals its intent to share practical lessons with engineers and researchers who want to customize this open-weight model.

While the full article is behind a paywall, the title and context reveal the core subject: a hands-on tutorial for adapting a specific, large-scale AI model. This is not a theoretical paper but a guide born from direct experimentation.

Technical Details: LoRA on MoE Models

To understand the guide's value, we must break down its key technical components:

GPT-OSS 20B: This is an open-weight language model from OpenAI with roughly 21 billion total parameters, of which about 3.6 billion are active for any given token. The "OSS" reflects its open release: the weights are published under the Apache 2.0 license, which permits commercial and research use. Its scale places it in the category of models capable of sophisticated reasoning and generation tasks, but fine-tuning it still requires substantial memory and compute.

Mixture-of-Experts (MoE): This is a neural network design paradigm where the model is composed of many smaller sub-networks or "experts." For any given input token, a learned router activates only a small subset of these experts. This makes the model far cheaper to compute during inference than a dense model of equivalent parameter count, because most of the network is skipped for each token, although all experts must still be held in memory. However, it adds significant complexity to training and fine-tuning.
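The routing idea described above can be sketched in a few lines of plain Python. This is a minimal illustration of top-k routing as it is commonly described, not the routing code of GPT-OSS itself; the four toy experts, the gate scores, and the function names `route_top_k` and `moe_forward` are all hypothetical.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    order = sorted(range(len(gate_logits)),
                   key=lambda i: gate_logits[i], reverse=True)
    top = order[:k]
    # Normalize the weights over the selected experts only.
    weights = softmax([gate_logits[i] for i in top])
    return list(zip(top, weights))

def moe_forward(x, experts, gate_logits, k=2):
    """Weighted sum of the outputs of the selected experts; the rest are skipped."""
    out = 0.0
    for idx, w in route_top_k(gate_logits, k):
        out += w * experts[idx](x)
    return out

# Four toy "experts": scalar functions standing in for feed-forward blocks.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
gate_logits = [0.1, 2.0, 1.5, -1.0]  # made-up router scores for one token

selected = route_top_k(gate_logits, k=2)
y = moe_forward(3.0, experts, gate_logits, k=2)
print(selected, y)
```

Only experts 1 and 2 are evaluated for this token; experts 0 and 3 contribute nothing and cost nothing, which is where the inference savings come from.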

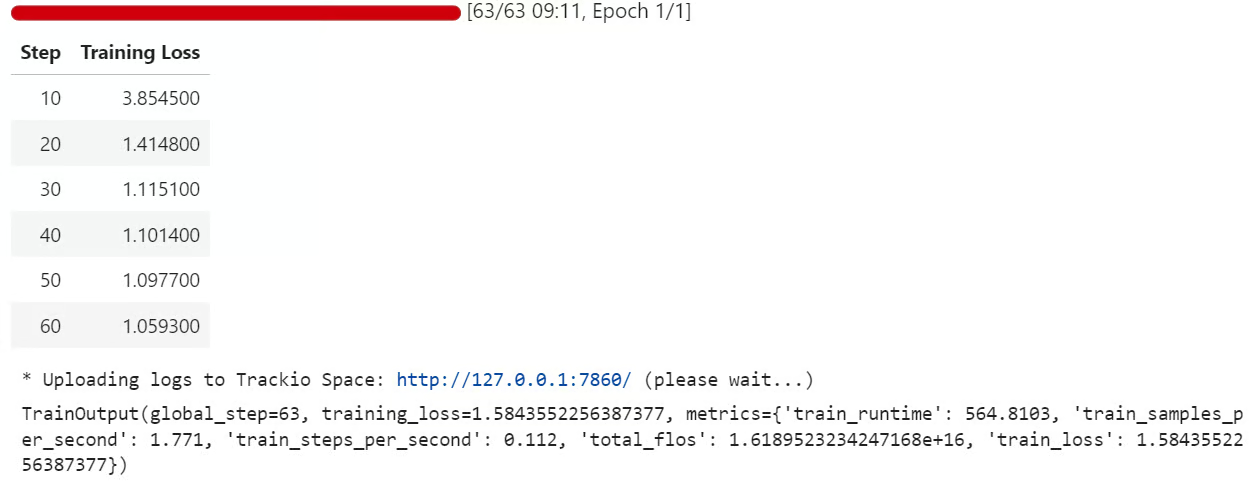

Low-Rank Adaptation (LoRA): This is a popular parameter-efficient fine-tuning (PEFT) technique. Instead of updating all of a model's billions of parameters during fine-tuning, LoRA freezes the pre-trained weights and adds a pair of small, trainable low-rank matrices alongside selected weight matrices in each Transformer layer (commonly the attention projections). This drastically reduces the number of trainable parameters (often by more than 99%), along with the memory footprint and storage requirements, making fine-tuning large models feasible on more modest hardware.
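To make the parameter savings concrete, here is a small sketch of the standard LoRA formulation, in which a frozen weight matrix W is augmented with a low-rank update scaled by alpha / rank. The 4096 x 4096 layer size, the rank of 8, and the helper names are illustrative assumptions, not values taken from the guide or from GPT-OSS.

```python
def lora_param_counts(d_out, d_in, rank):
    """Trainable parameters for full fine-tuning vs. a LoRA adapter on one matrix."""
    full = d_out * d_in              # every entry of W is trainable
    lora = rank * (d_in + d_out)     # A is rank x d_in, B is d_out x rank
    return full, lora

def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def lora_forward(W, A, B, x, alpha, rank):
    """y = W x + (alpha / rank) * B (A x); W stays frozen, A and B are trained."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / rank
    return [b + scale * u for b, u in zip(base, update)]

# Hypothetical layer size and rank, chosen only for illustration.
full, lora = lora_param_counts(d_out=4096, d_in=4096, rank=8)
reduction = 1 - lora / full
print(f"full={full:,} lora={lora:,} reduction={reduction:.2%}")

# Tiny forward pass: B starts at zero, so the adapted layer initially matches W.
W = [[1.0, 0.0], [0.0, 1.0]]
A = [[1.0, 1.0]]      # rank x d_in  = 1 x 2
B = [[0.0], [0.0]]    # d_out x rank = 2 x 1, zero-initialized
y = lora_forward(W, A, B, [2.0, 3.0], alpha=16, rank=1)
print(y)
```

Initializing B to zero is the usual convention: training starts from the unmodified pre-trained behavior and the adapter learns only the difference.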

The Core Challenge: Applying standard LoRA techniques to an MoE model is non-trivial. Because experts are activated sparsely and conditionally, an adapter attached to a given expert is trained only on the tokens routed to that expert, so the practitioner must decide which components to adapt (for example, the attention projections, the expert feed-forward weights, or both) and how those adaptations interact with routing. A naive implementation could be inefficient or unstable. The guide promises to address these specific pitfalls, providing a validated recipe for successful adaptation.
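One way to see why the choice of adapted components matters is to count adapter parameters under two strategies: adapting only the attention projections, or also attaching an adapter to every expert. The sketch below is back-of-the-envelope arithmetic under stated assumptions; every dimension in `MoEConfig` is hypothetical and is not the published GPT-OSS configuration, and the guide's actual recipe is not known from the available text.

```python
from dataclasses import dataclass

@dataclass
class MoEConfig:
    # All values are hypothetical, chosen only for illustration.
    hidden: int = 2048    # model width
    ffn: int = 2048       # per-expert feed-forward width
    layers: int = 24
    experts: int = 32     # experts per layer
    rank: int = 8         # LoRA rank

def lora_params(d_out, d_in, rank):
    """Trainable parameters of one LoRA adapter on a d_out x d_in matrix."""
    return rank * (d_in + d_out)

def attention_only(cfg):
    """Adapters on the q, k, v, o projections, each assumed hidden x hidden."""
    return cfg.layers * 4 * lora_params(cfg.hidden, cfg.hidden, cfg.rank)

def with_experts(cfg):
    """Attention adapters plus one adapter on each expert's up and down projection."""
    per_expert = (lora_params(cfg.ffn, cfg.hidden, cfg.rank)
                  + lora_params(cfg.hidden, cfg.ffn, cfg.rank))
    return attention_only(cfg) + cfg.layers * cfg.experts * per_expert

cfg = MoEConfig()
attn = attention_only(cfg)
total = with_experts(cfg)
print(f"attention only: {attn:,}")
print(f"with experts:   {total:,} ({total / attn:.0f}x)")
```

Under these assumed dimensions, adapting every expert multiplies the adapter size many times over, and each expert adapter still sees only the tokens routed to it. That trade-off between capacity, memory, and uneven training signal is the kind of decision the challenge above refers to.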

Retail & Luxury Implications

The ability to efficiently fine-tune a model like GPT-OSS 20B has profound, though indirect, implications for the retail and luxury sectors. The value is not in the model itself being "for retail," but in the technique that unlocks domain-specific customization of powerful, general-purpose AI.

Potential Application Pathways:

- Hyper-Specialized Customer Service Agents: A luxury brand could fine-tune the model on its entire corpus of customer service transcripts, product catalogs, brand history, and style guides. The resulting AI could power a chatbot or internal agent that communicates in the brand's exact tone of voice, possesses deep product knowledge (e.g., the provenance of materials, craftsmanship techniques), and handles complex, nuanced inquiries that generic models would handle poorly.

- Creative & Marketing Co-pilot: Fine-tuned on a brand's past campaigns, press releases, and visual identity guidelines, the model could act as a supercharged creative assistant. It could generate draft copy for product descriptions, email campaigns, or social media posts that are consistently on-brand, suggest narrative concepts for upcoming collections based on heritage, or help translate marketing materials while preserving brand-specific terminology and nuance.

- Strategic Intelligence Synthesis: Analysts could fine-tune the model to process and summarize internal reports, market research, competitor analysis, and trend forecasts, producing a unified summary from disparate data streams. Note that MoE experts are selected per token by a learned router and do not map cleanly onto human-defined domains such as financial data, consumer sentiment, or fashion trends, so any specialization here would come from the fine-tuning data rather than from assigning experts to topics.

The Critical Gap: The guide provides the how (the technical methodology), but the what (the high-quality, domain-specific dataset) and the why (the precise business use case) are entirely up to the implementing brand. The largest challenge for a luxury house will not be running the LoRA fine-tuning code but curating the proprietary, structured, and clean dataset that encapsulates its unique brand equity and knowledge. Furthermore, operationalizing such a fine-tuned model as a secure, scalable, and user-friendly application requires significant MLOps and software engineering investment beyond the initial training.