Foxconn Industrial Internet (FII), the advanced manufacturing subsidiary of electronics giant Foxconn, plans to begin mass production of co-packaged optics (CPO) all-optical switches in the third quarter of 2026. The company is targeting an initial production volume of over 10,000 units, according to a report from Digitimes. This manufacturing ramp signals a pivotal step in commercializing a key technology designed to overcome the data transfer limitations in modern AI data centers.

Key Takeaways

- Foxconn's manufacturing arm will begin volume production of advanced co-packaged optics (CPO) switches in Q3 2026, targeting over 10,000 units.

- This move directly addresses the critical bandwidth and power bottlenecks in next-generation AI data center infrastructure.

What's New

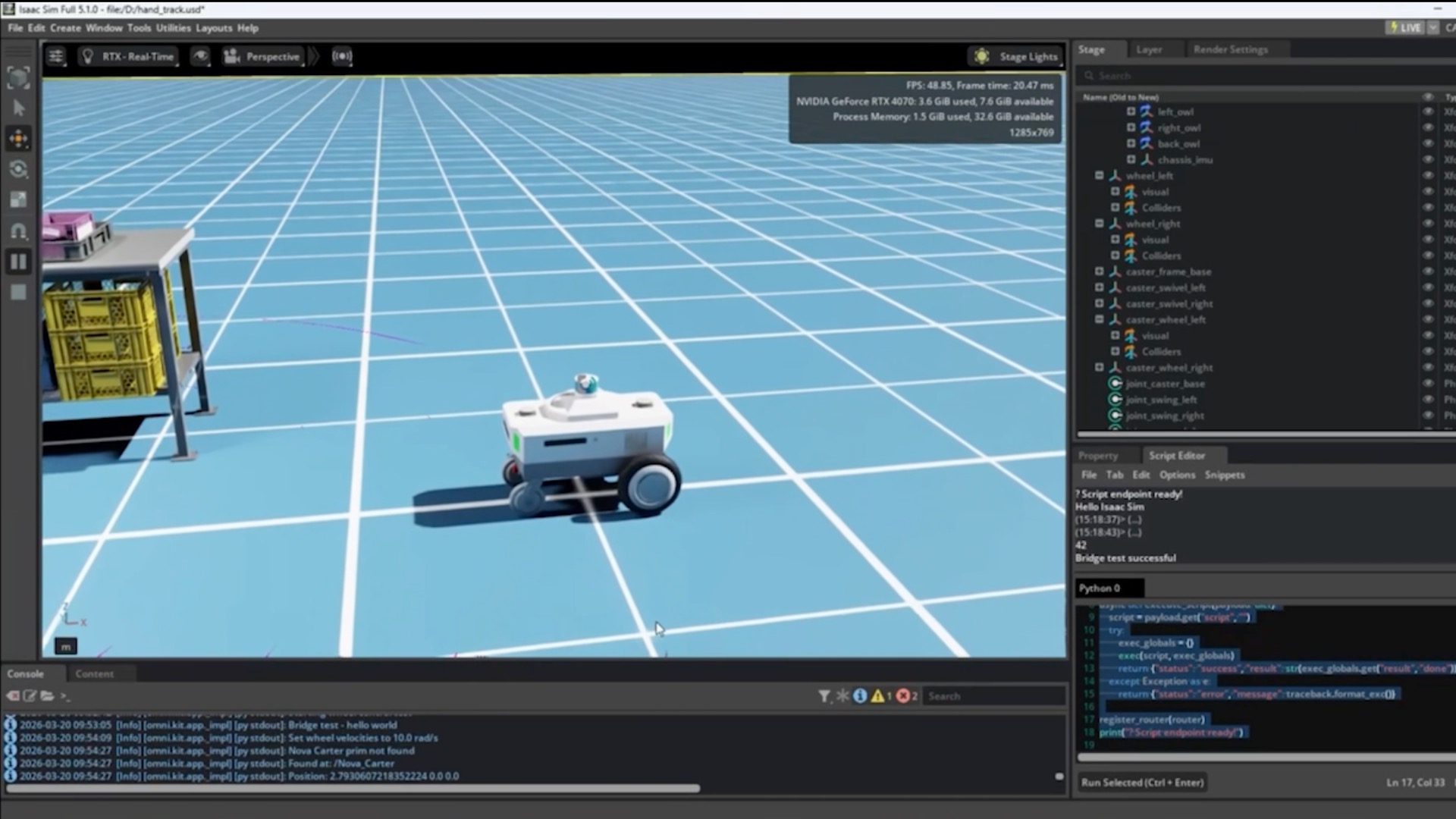

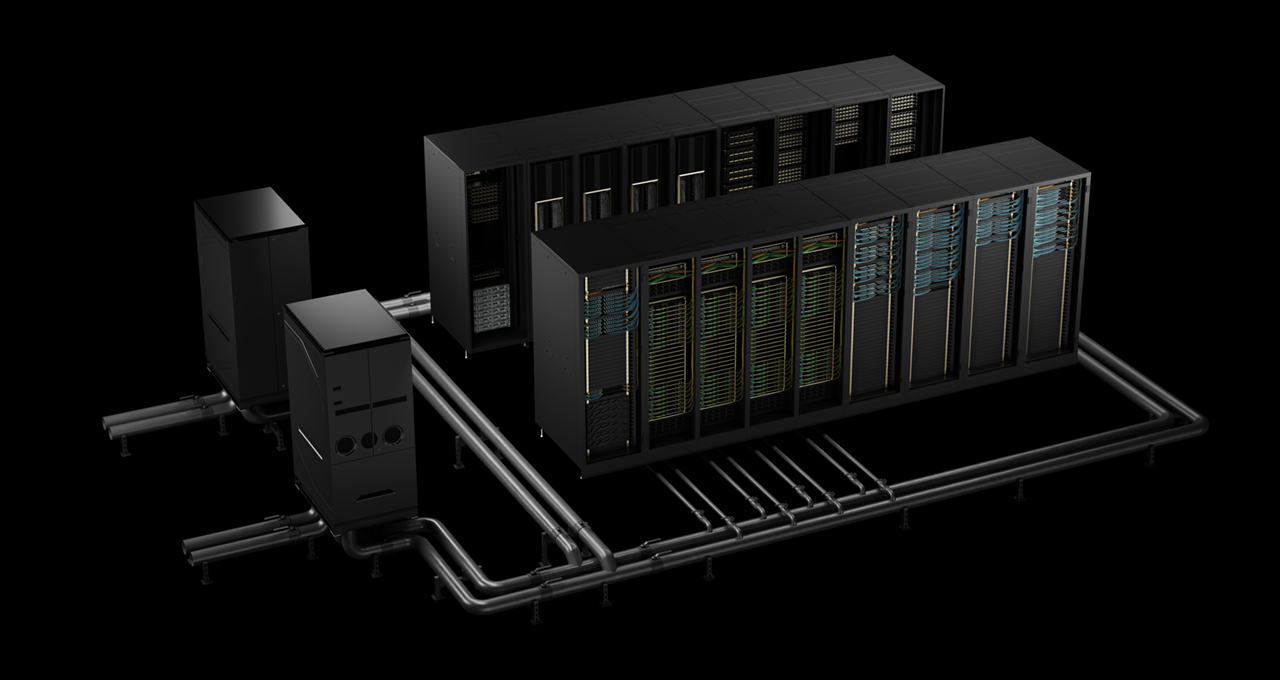

Foxconn Industrial Internet is moving CPO technology from the lab and pilot lines to high-volume manufacturing. The "over 10,000 units" target for the initial mass production phase in Q3 2026 represents a significant commitment. CPO is an emerging interconnect architecture that integrates optical engines directly with switch silicon in a single package, a departure from the traditional method of using pluggable optical modules connected via electrical traces on a PCB.

Technical Details & Why It Matters for AI

Current AI clusters, powered by thousands of GPUs like NVIDIA's H100 and B200, are hitting fundamental physical limits. The electrical traces used to connect switches to optical modules for data transfer between racks are becoming a bottleneck for bandwidth, power efficiency, and latency.

- Bandwidth & Power: As GPU clusters scale, the required data throughput between nodes grows steeply. Traditional pluggable optics consume substantial power for signal conversion and for driving signals over increasingly lossy electrical traces. CPO drastically shortens the electrical path, enabling higher bandwidth densities (moving towards 1.6T and 3.2T per port) while reducing power consumption per bit by roughly 30-50%.

- Latency: The shorter interconnect within a co-packaged design reduces signal latency, a critical factor for tightly coupled distributed AI training jobs.

- Form Factor: CPO allows for more compact switch designs, enabling higher port densities within the same rack space, which is at a premium in hyperscale data centers.
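To make the power claim concrete, the following is a rough back-of-the-envelope sketch rather than a figure from the report. It applies the 30-50% per-bit saving cited above to a hypothetical switch. The 51.2 Tb/s switch capacity and the 15 pJ/bit baseline for pluggable optics are illustrative assumptions chosen only to show the arithmetic; real values depend on the specific silicon and optics.

```python
def optics_power_watts(switch_tbps: float, pj_per_bit: float) -> float:
    """Optical I/O power in watts.

    Tb/s (1e12 bits/s) times pJ/bit (1e-12 J/bit) gives J/s, i.e. watts,
    so the two scale factors cancel.
    """
    return switch_tbps * pj_per_bit


def cpo_power_range(switch_tbps: float,
                    pluggable_pj_per_bit: float,
                    savings: tuple[float, float] = (0.30, 0.50)):
    """Return (baseline, cpo_at_low_saving, cpo_at_high_saving) in watts.

    `savings` holds the per-bit reduction range quoted in the article.
    """
    baseline = optics_power_watts(switch_tbps, pluggable_pj_per_bit)
    low_saving, high_saving = savings
    return (baseline,
            baseline * (1.0 - low_saving),
            baseline * (1.0 - high_saving))


if __name__ == "__main__":
    # ASSUMPTIONS (not from the report): 51.2 Tb/s switch, 15 pJ/bit pluggables.
    baseline, cpo_low, cpo_high = cpo_power_range(51.2, 15.0)
    print(f"Pluggable optics: {baseline:.0f} W")
    print(f"CPO (30% saving): {cpo_low:.0f} W")
    print(f"CPO (50% saving): {cpo_high:.0f} W")
```

Under these assumed inputs, the saving is on the order of a few hundred watts per switch, which is why the per-bit figure matters once it is multiplied across thousands of switches in a cluster.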

By entering mass production, FII aims to provide the physical hardware necessary to build the next generation of AI-optimized data center networks, where bandwidth is expected to be the primary constraint after compute.

How It Compares

FII is not the only player. The CPO/CPO-adjacent ecosystem includes:

| Company | Role | Status and Approach |
|---|---|---|
| Foxconn Industrial Internet | Manufacturing & Integration | Mass production target Q3 2026. Focus on system-level assembly and volume. |
| Intel, Broadcom, Marvell | Switch Silicon with CPO Roadmaps | Developing ASICs with integrated CPO interfaces. FII likely packages these chips. |
| NVIDIA | Spectrum-X with NVLink & Optical | Pursuing a full-stack network approach; Spectrum switches currently use pluggables. |
| Ayar Labs, Lightmatter | Silicon Photonics & In-Package Optics | Developing the core optical I/O chiplets that would be co-packaged. |
| Traditional OEMs (Cisco, Arista) | Network System Vendors | Evaluating and integrating CPO technology into future platforms. |

FII's move is significant because it addresses the manufacturing and scaling challenge. Designing a CPO switch is one hurdle; producing tens of thousands of them reliably and cost-effectively is another. Foxconn's core competency in high-volume, precision electronics manufacturing positions FII as a critical enabler for the industry's transition.

What to Watch

Key questions remain as the Q3 2026 target approaches:

- Specifics: The exact switch silicon partner (e.g., Broadcom, Intel) and the targeted bandwidth per port (e.g., 1.6T) are not specified.

- Yield & Cost: High-volume production of CPO requires advanced packaging (like 2.5D/3D integration) with optical components. Achieving high yield at a competitive cost per port is the central challenge.

- Ecosystem Readiness: Mass adoption requires alignment from switch chip vendors, optical component suppliers, and data center operators on standards, interoperability, and thermal management.

gentic.news Analysis

This announcement is a concrete signal that the AI infrastructure industry is moving decisively to solve the "interconnect problem." For over a year, the narrative has been that after compute, networking is the next major bottleneck for scaling AI clusters. Research from firms like SemiAnalysis and statements from cloud providers have highlighted the unsustainable power draw of data center networks. FII's production target moves CPO from a promising R&D topic, as covered in our analysis of earlier silicon photonics breakthroughs, into the realm of scheduled industrial output.

This aligns with a broader trend of vertical integration in AI hardware. Just as NVIDIA controls the full stack from GPU to networking, Foxconn is leveraging its unparalleled manufacturing scale to secure a foundational role in the physical layer of AI infrastructure. It's a competitive response to NVIDIA's networking ambitions with Spectrum-X, but from the manufacturing side. If successful, FII could become the primary OEM for a wide range of companies needing high-bandwidth switches, not unlike its role in consumer electronics.

For AI practitioners and data center architects, the timeline is the key takeaway. Volume availability in late 2026 suggests that CPO-based networks will not be a factor in the immediate next generation of clusters (e.g., those built with Blackwell GPUs in 2025), but will be critical for the following cycle. Planning for data center power and rack architecture in 2027 and beyond must now seriously account for CPO's different thermal and form-factor requirements.

Frequently Asked Questions

What is co-packaged optics (CPO)?

Co-packaged optics is an advanced networking technology where the optical transceiver components (which convert electrical signals to light and back) are integrated directly onto the same package or substrate as the network switch silicon chip. This contrasts with the current standard of pluggable optics, where optical modules are separate units connected to the switch via electrical traces on the board, which become inefficient at very high data rates.

Why is CPO important for AI data centers?

Large-scale AI training requires thousands of GPUs to communicate with extremely high bandwidth and low latency. The electrical pathways used in traditional designs are running out of capacity and consume too much power. CPO reduces power consumption per bit by up to 50%, enables higher bandwidth densities (e.g., 1.6 Terabits per second per port), and lowers latency, which are all critical for efficiently scaling AI clusters.

Who is Foxconn Industrial Internet?

Foxconn Industrial Internet is a subsidiary of Hon Hai Precision Industry (Foxconn), the world's largest electronics manufacturer. FII focuses on advanced manufacturing for industrial and enterprise markets, including cloud server equipment, networking gear, and high-performance computing systems. Their move into CPO switch manufacturing leverages their core expertise in high-volume, precision system assembly.

When will CPO switches be available at scale?

According to this report, Foxconn Industrial Internet is targeting mass production and an output of over 10,000 units beginning in the third quarter of 2026. This would make them available for deployment in data centers starting in late 2026 or 2027.