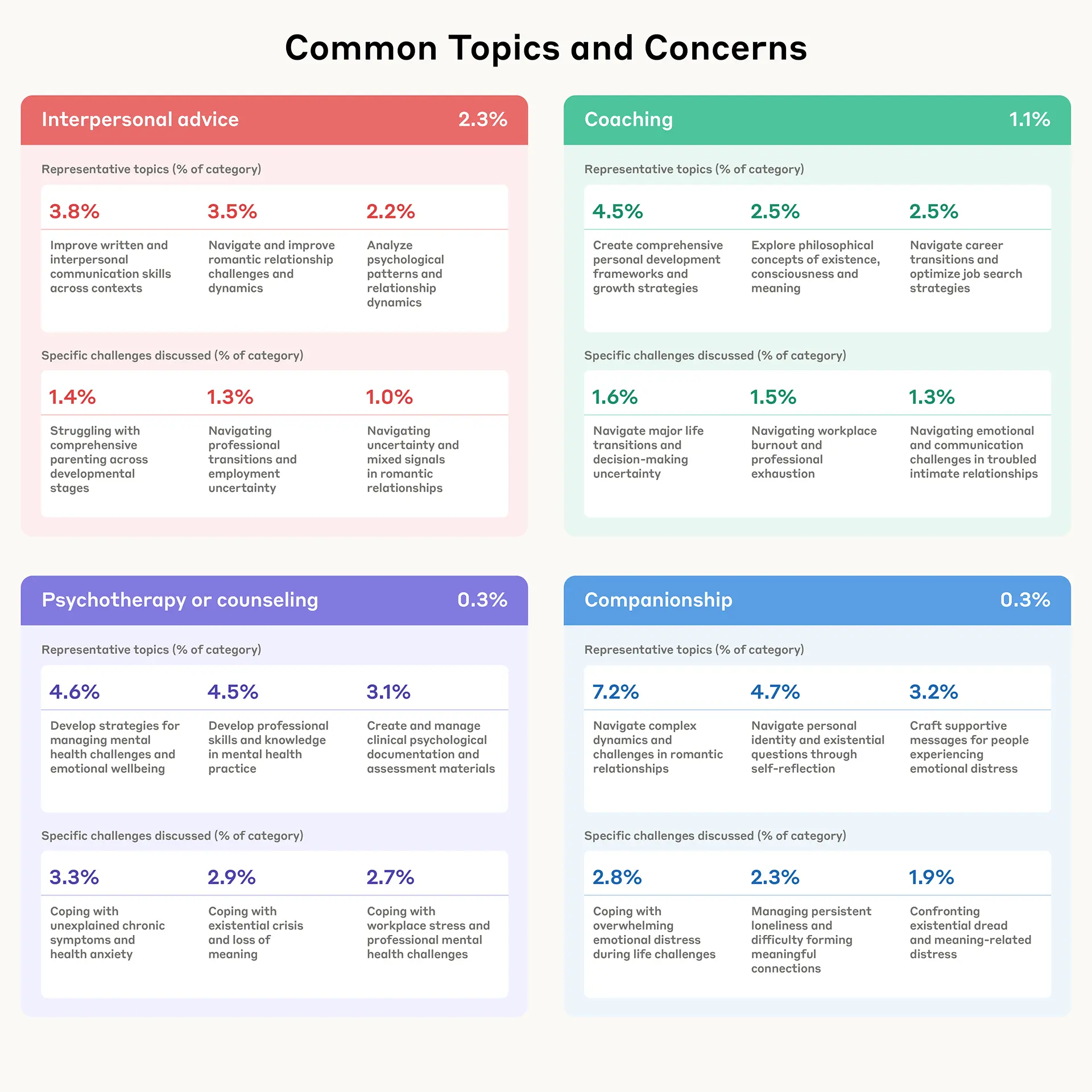

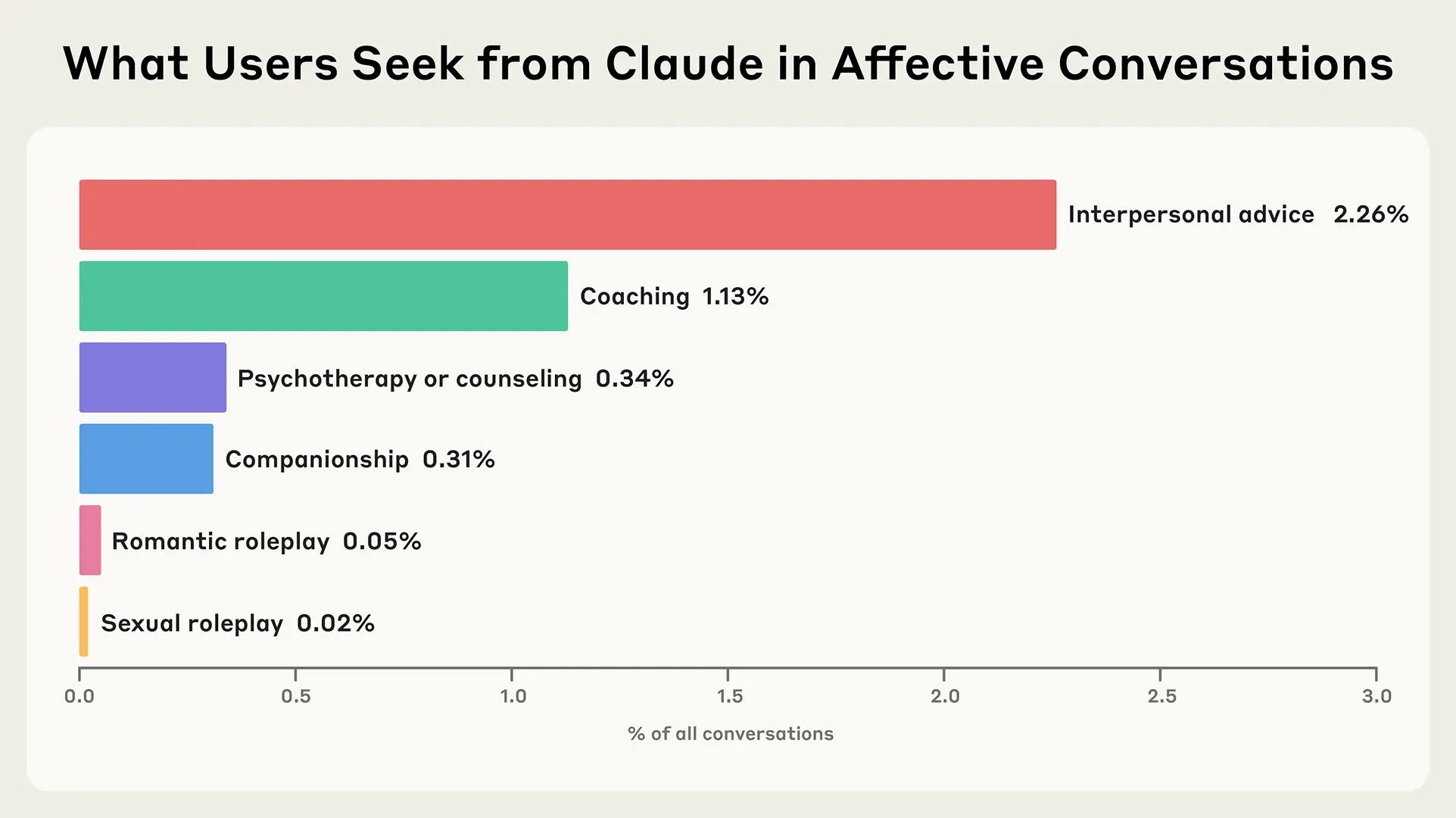

Anthropic's Claude AI assistant has quietly added capabilities for guided mental health support, according to user reports. The AI can now walk users through mental health journaling exercises, emotional reframing techniques, and structured cognitive behavioral therapy (CBT) activities.

Key Takeaways

- Anthropic has expanded Claude's capabilities to include guided mental health journaling, cognitive behavioral therapy (CBT) exercises, and emotional reframing techniques.

- This moves the AI assistant beyond general conversation into structured therapeutic support.

What's New: Therapeutic-Style Interactions

The new functionality appears to integrate therapeutic frameworks directly into Claude's conversational interface. Users can follow structured journaling prompts, work through CBT exercises designed to challenge cognitive distortions, and practice emotional reframing, a technique in which negative thought patterns are consciously restructured into more balanced perspectives.

Unlike general mental health chatbots that offer basic emotional support, Claude's implementation appears more structured, following established therapeutic modalities. The comparison to "a $200/session licensed therapist" suggests the AI aims to provide some level of professional-grade therapeutic guidance, though without the human relationship component.

Technical Implementation and Safety Considerations

While Anthropic hasn't released official documentation about these features, the implementation likely involves:

- Specialized prompting frameworks that guide Claude through therapeutic protocols

- Safety guardrails to prevent harmful advice for serious mental health conditions

- Context management to maintain therapeutic continuity across sessions

- Disclaimers clarifying the AI's limitations versus professional human therapy
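
To make the speculated components above concrete, here is a minimal Python sketch of how a scripted exercise, a safety check, per-session context, and a disclaimer could fit together. It is purely illustrative and is not Anthropic's implementation: every name in it (`Session`, `needs_escalation`, `next_reply`, `CRISIS_TERMS`, the prompt wording) is a hypothetical chosen for this example, and the keyword screen is a crude stand-in for whatever safety mechanism a production system would actually use.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# All names below are hypothetical and exist only for illustration.
CRISIS_TERMS = ("suicide", "kill myself", "end my life", "self-harm")

CRISIS_MESSAGE = (
    "This sounds serious. Please contact emergency services or a crisis "
    "line such as 988 in the US."
)

DISCLAIMER = (
    "This is a self-help exercise, not a substitute for professional therapy."
)

# Prompts for a simple CBT-style thought record (assumed wording).
THOUGHT_RECORD_STEPS = (
    "Describe the situation.",
    "What automatic thought came up?",
    "What evidence supports or contradicts that thought?",
    "Write a more balanced alternative thought.",
)


@dataclass
class Session:
    """Context carried between turns so the exercise keeps its place."""
    step: int = 0
    answers: List[str] = field(default_factory=list)


def needs_escalation(text: str) -> bool:
    """Crude keyword screen; a stand-in for a real safety classifier."""
    lowered = text.lower()
    return any(term in lowered for term in CRISIS_TERMS)


def next_reply(session: Session, user_text: Optional[str] = None) -> str:
    """Return the next message in the exercise for the given user input."""
    if user_text is None:
        # Opening turn: show the disclaimer, then the first prompt.
        return DISCLAIMER + "\n" + THOUGHT_RECORD_STEPS[0]
    if needs_escalation(user_text):
        # Guardrail: leave the script and point to human help.
        return CRISIS_MESSAGE
    if session.step >= len(THOUGHT_RECORD_STEPS):
        return "The exercise is already complete."
    session.answers.append(user_text)
    session.step += 1
    if session.step < len(THOUGHT_RECORD_STEPS):
        return THOUGHT_RECORD_STEPS[session.step]
    return "Exercise complete. Your balanced thought: " + session.answers[-1]
```

Calling `next_reply(Session())` with no input returns the disclaimer followed by the first prompt; each later call with user text either advances one step or, if the screen matches, returns the crisis message without recording the answer. Keyword matching is used here only because it is easy to read. It would miss most real crisis language and is not a suitable safeguard on its own.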

Anthropic's Constitutional AI approach, in which models are trained to align with predefined principles, makes this expansion particularly notable. The company has consistently emphasized safety and ethical boundaries, which suggests these mental health features would include significant safeguards against dependency or inappropriate advice.

Market Context: AI's Growing Role in Mental Health

Claude's move follows several established players in the AI mental health space:

- Woebot Health: An AI-powered CBT chatbot that has conducted over 1.5 billion conversations

- Wysa: An AI mental health assistant with evidence-based therapeutic techniques

- Talkspace and BetterHelp: Traditional teletherapy platforms experimenting with AI augmentation

What distinguishes Claude's approach is its integration into a general-purpose assistant rather than a dedicated mental health application. Users can transition seamlessly from productivity tasks to therapeutic exercises within the same interface.

Limitations and Ethical Considerations

Despite the promising functionality, significant limitations remain:

- No diagnosis capability: AI cannot diagnose mental health conditions

- Crisis handling: Limited ability to manage suicidal ideation or acute crises

- Therapeutic relationship: Missing the human connection central to effective therapy

- Regulatory gray area: Most AI mental health tools operate outside medical device regulations

Professional therapists emphasize that AI tools work best as supplements to human therapy, not replacements for it, particularly for moderate to severe conditions.

gentic.news Analysis

This expansion represents a strategic move by Anthropic to differentiate Claude in the increasingly crowded AI assistant market. While competitors focus on coding, creativity, or research, Anthropic appears to be targeting the wellness and self-improvement segment, a market with a proven willingness to pay for digital solutions.

From a technical perspective, this development showcases Claude's ability to handle sensitive, structured conversations while maintaining appropriate boundaries. The therapeutic domain presents unique challenges for AI safety: too much empathy might create unhealthy dependency, while too little makes the tool ineffective. Anthropic's constitutional AI framework, which we covered in our October 2025 analysis of their alignment techniques, provides a foundation for navigating these trade-offs.

This move also reflects broader industry trends we've tracked throughout 2025-2026. As AI capabilities plateau on certain technical benchmarks, companies are increasingly competing on specialized vertical applications. We saw similar specialization with Google's Med-PaLM for healthcare and OpenAI's tailored enterprise solutions. Mental health represents a particularly attractive vertical given its massive addressable market and the global shortage of human therapists.

However, the comparison to "$200/session" therapy raises important questions about responsible marketing. While AI can democratize access to therapeutic techniques, overstating capabilities could lead to inappropriate substitution of professional care. Anthropic will need to carefully balance commercial opportunity with ethical responsibility in this sensitive domain.

Frequently Asked Questions

Can Claude diagnose mental health conditions?

No. Claude cannot diagnose any mental health conditions. The AI provides guided exercises and techniques based on established therapeutic frameworks but should not be used for diagnosis, which requires assessment by a licensed healthcare professional.

How does Claude's mental health support compare to human therapy?

Claude offers structured exercises and techniques but lacks the therapeutic relationship, clinical judgment, and personalized care plan development that human therapists provide. It's best viewed as a supplement to professional care or a tool for general wellness rather than a replacement for therapy, especially for moderate to severe conditions.

Is my mental health data private when using Claude?

According to Anthropic's privacy policy, conversation data may be used to improve their models unless users opt out. For sensitive mental health discussions, users should review Anthropic's data handling policies carefully and consider whether they're comfortable with potential data retention and usage.

What should I do if I'm experiencing a mental health crisis?

Do not rely on AI assistants during mental health crises. Contact emergency services, crisis hotlines (like 988 in the US), or seek immediate help from a healthcare professional. AI tools are not equipped to handle acute crises effectively or safely.