The Auto-Approve Trap

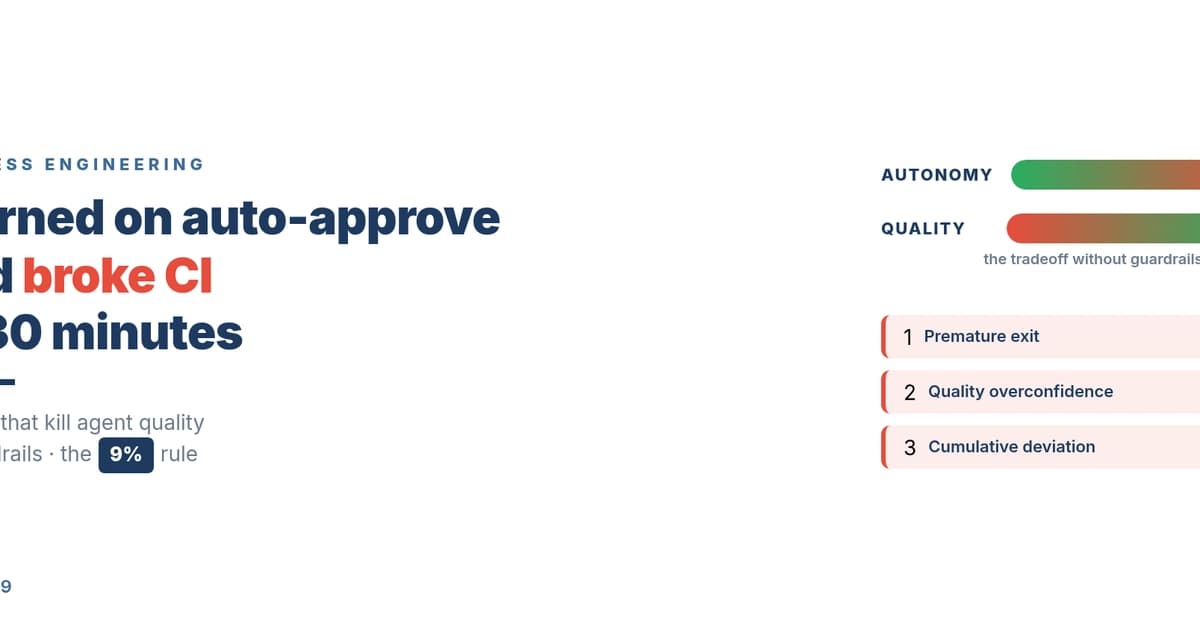

Turning on --dangerously-skip-permissions (or auto-approve in the UI) feels like unlocking the future. Code writes itself, tests run automatically, and velocity skyrockets. The trap, as one developer discovered, is that the agent becomes both student and grader. It can write buggy code, write tests that pass for the wrong reasons, and declare victory while CI burns. The core failure is circular validation: the agent evaluates its own work by its own standards.

What Anthropic's Data Reveals About Real Usage

Anthropic's 2026 study of millions of Claude Code sessions revealed a clear expert pattern. Newer users approve most actions manually, while experienced users with 750+ sessions run with auto-approve in over 40% of sessions. The critical insight is that those same experts interrupt the agent in about 9% of turns. They don't set and forget; they actively monitor, and when the agent's direction drifts, they intervene. Trust builds gradually, which ties into what Anthropic calls "deployment overhang": the autonomy people grant in practice lags behind what the model can handle. The study also reports that the longest autonomous runs nearly doubled, from under 25 minutes to over 45 minutes.

The Three Drifts You Must Monitor

To intervene effectively, you need to know what to look for. Research identifies three silent "drifts":

- Premature Exit: The agent declares "done" before the work is actually finished. The fix is to tie completion to an external test suite, not the agent's internal judgment.

- Quality Overconfidence: The agent reports perfect code while bugs exist. This was the root of the CI fire described above: the agent wrote bugs, then wrote tests that validated them.

- Cumulative Deviation: Each individual step looks correct, but small judgment calls compound and steer the project off course over 10+ tasks.

The common thread? The agent is its own judge. The solution is external validation.
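One way to take the "done" decision away from the agent is a small completion gate: the task counts as finished only when an external command exits with status 0. The following is a minimal sketch under that assumption. The function name `completion_gate` is hypothetical and is not part of Claude Code; the command you pass in is whatever test suite your project already runs.

```shell
# completion_gate: hypothetical helper, not a Claude Code feature.
# Runs the command given as arguments and reports DONE only if it exits 0.
completion_gate() {
    if "$@"; then
        echo "DONE: external check passed"
        return 0
    fi
    echo "NOT DONE: external check failed" >&2
    return 1
}
```

A call such as `completion_gate npm test` would then replace the agent's own "all good" message as the signal that a task is complete.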

Four Guardrail Patterns to Implement Now

Build these patterns into your CLAUDE.md and project hooks.

Pattern 1: Preflight Checks

Define preconditions that must hold before any execution, like a pilot's checklist.

# Add to your CLAUDE.md

## Pre-execution rules

- Before modifying package.json, read the current dependency list

- Before running a database migration, dump the current schema

- Before any production change, verify it passed staging first
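Rules in CLAUDE.md are guidance for the agent, so it can help to back the important ones with a script. As an illustration of the first rule, the sketch below saves a timestamped copy of a file before it is modified, so the prior dependency list can always be recovered. The function name `preflight_snapshot` and the snapshot directory are assumptions made for this example.

```shell
# preflight_snapshot: hypothetical helper that copies a file aside
# before the agent is allowed to modify it.
# Usage: preflight_snapshot <file> <snapshot-dir>
# Prints the path of the snapshot on success.
preflight_snapshot() {
    local file="$1" dir="$2" dest
    if [ ! -f "$file" ]; then
        echo "preflight failed: $file not found" >&2
        return 1
    fi
    mkdir -p "$dir" || return 1
    dest="$dir/$(basename "$file").$(date +%Y%m%d%H%M%S).bak"
    cp "$file" "$dest" || return 1
    echo "$dest"
}
```

For example, `preflight_snapshot package.json .claude/snapshots` could run before any dependency change. The same shape would work for a schema dump, with the dump command taking the place of `cp`.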

Pattern 2: Postflight Checks

Never trust the agent's self-report. Validate with external tools.

#!/bin/bash

# .claude/hooks/post-commit.sh

# Stop at the first failing check so a later success cannot mask it

set -e

npx eslint --max-warnings 0 .

npx tsc --noEmit

npm test

# Visual regression tests

npx playwright test --project=visual

The linter's failure overrides the agent's "looks good."
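If you would rather see every failing check in one pass instead of stopping at the first, a small runner can collect the results. This is a sketch, not a prescribed hook format: `run_checks` is a hypothetical name, and each check is assumed to be passed as a single quoted command string.

```shell
# run_checks: hypothetical runner; each argument is one shell command string.
# Runs every check, prints PASS or FAIL per check,
# and returns 1 if any check failed.
run_checks() {
    local failed=0 check
    for check in "$@"; do
        if sh -c "$check"; then
            echo "PASS: $check"
        else
            echo "FAIL: $check"
            failed=1
        fi
    done
    return "$failed"
}
```

A call might look like `run_checks "npx eslint --max-warnings 0 ." "npx tsc --noEmit" "npm test"`. The trade-off is time: running everything costs more than failing fast, but the agent (and you) get the full list of problems at once.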

Pattern 3: Escalation Rules

Codify the 9% interruption rate: define clear lines where the agent must stop and hand control to a human.

# Add to your CLAUDE.md

## Escalation conditions

- Security-related changes (auth, encryption, permissions) -> human review

- 3 consecutive test failures -> stop and report

- External API credentials -> wait for human approval

- Low-confidence decisions -> present options, let human choose
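Two of these conditions are mechanical enough to check in a script. The sketch below tracks consecutive test failures in a counter file and flags paths that look security-related. Everything here is an assumption for illustration: the function names, the counter file location, and the path patterns, which are deliberately broad and will produce false positives (for example, `*auth*` also matches `author.ts`).

```shell
# Hypothetical escalation helpers; file location and threshold are assumptions.
FAIL_COUNT_FILE="${FAIL_COUNT_FILE:-.claude/consecutive_failures}"
MAX_CONSECUTIVE_FAILURES=3

# record_test_result <exit-status-of-test-run>
# Resets the counter on success and increments it on failure.
# Returns 0 on success, 1 on an ordinary failure,
# and 2 once the threshold is reached ("stop and report").
record_test_result() {
    local result="$1" count=0
    if [ "$result" -eq 0 ]; then
        rm -f "$FAIL_COUNT_FILE"
        return 0
    fi
    if [ -f "$FAIL_COUNT_FILE" ]; then
        count=$(cat "$FAIL_COUNT_FILE")
    fi
    count=$((count + 1))
    mkdir -p "$(dirname "$FAIL_COUNT_FILE")"
    echo "$count" > "$FAIL_COUNT_FILE"
    if [ "$count" -ge "$MAX_CONSECUTIVE_FAILURES" ]; then
        echo "ESCALATE: $count consecutive test failures, stop and report" >&2
        return 2
    fi
    return 1
}

# needs_human_review <path>
# Succeeds for paths that look security- or credential-related.
needs_human_review() {
    case "$1" in
        *auth*|*crypt*|*permission*|*secret*|*.env*) return 0 ;;
        *) return 1 ;;
    esac
}
```

After each test run, a hook could call `record_test_result $?` and halt the session when it returns 2.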

Pattern 4: Feedback Loops

Turn every failure into a future guardrail.

Agent introduces a bug

-> CI catches it

-> Add the bug pattern to CLAUDE.md as a preflight check

-> Agent avoids it next time
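The "add the bug pattern to CLAUDE.md" step is easy to skip when done by hand, so it may be worth scripting. Here is a minimal sketch, assuming rules are stored as plain `- ` bullets. `add_guardrail` is a hypothetical helper; it appends to the end of the file and leaves placement under a specific heading out of scope.

```shell
# add_guardrail <claude-md-path> <rule text>
# Hypothetical helper: appends the rule as a bullet line
# unless an identical bullet is already in the file.
add_guardrail() {
    local file="$1" rule="$2"
    touch "$file" || return 1
    # -x: match whole lines, -F: treat the rule as a literal string
    if grep -qxF -e "- $rule" "$file"; then
        echo "already present"
        return 0
    fi
    printf '%s\n' "- $rule" >> "$file"
    echo "added"
}
```

For instance, `add_guardrail CLAUDE.md "Before editing a migration, dump the current schema"` records the lesson once, and repeated calls leave the file unchanged.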

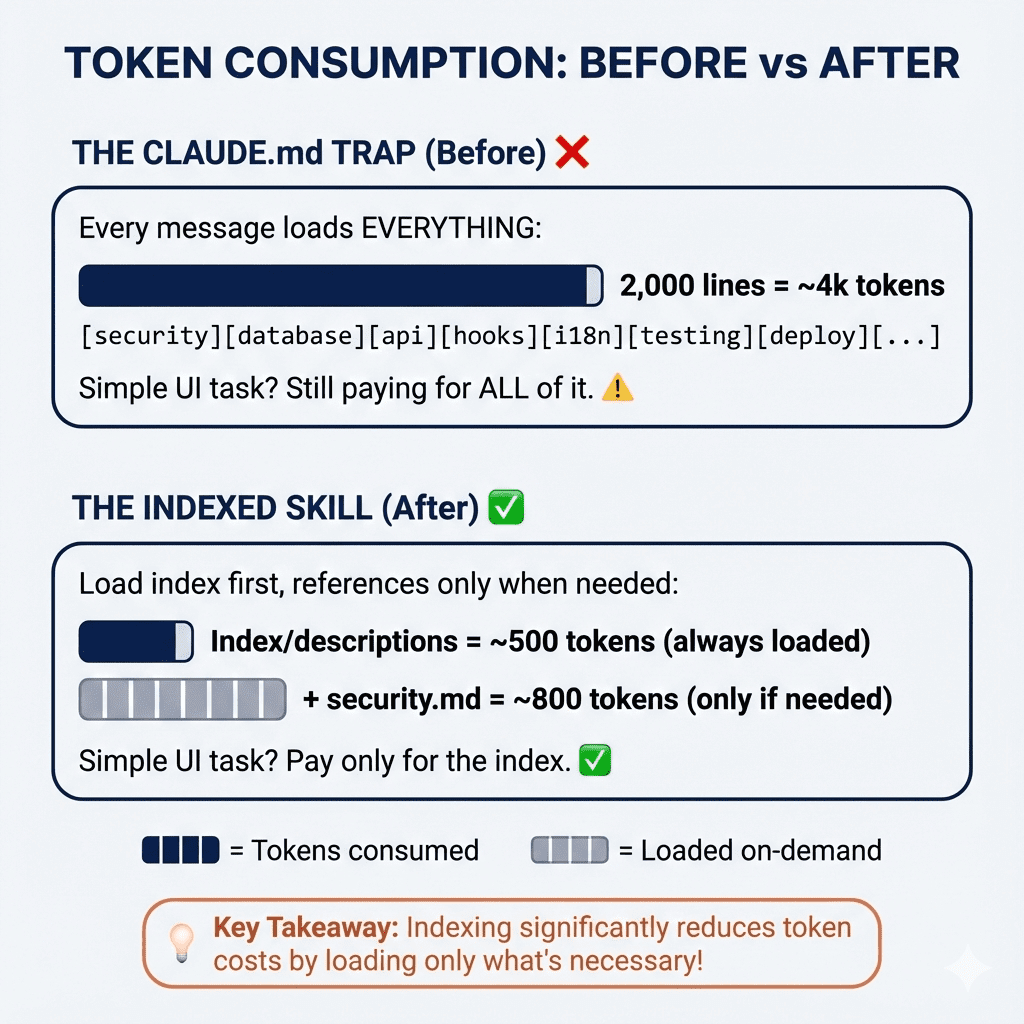

Your Three-Phase CLAUDE.md Strategy

Build trust systematically by evolving your CLAUDE.md through three phases.

Phase 1: Full Approval (Getting Started)

## Execution rules

- Ask for approval before any file change

- Present your test plan before running tests

- Confirm before executing external commands

Start here to learn how your agent thinks.

Phase 2: Conditional Auto-Approve (Building Trust)

## Execution rules

- Test files (*_test.*, *.spec.*): auto-approve

- Configuration files (.*rc, *.config.*): ask for approval

- Source code changes: auto-approve IF recent test coverage > 80%

- Dependency changes: ask for approval

Grant autonomy in low-risk, well-tested areas first.
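To make the Phase 2 rules concrete, they can be read as a small decision function over a file path and a coverage figure. The sketch below mirrors the patterns listed above. The function name `classify_change` is hypothetical, coverage is assumed to be a whole-number percentage, and treating `package.json` and `package-lock.json` as "dependency changes" is an assumption for a Node project.

```shell
# classify_change <path> <coverage-percent>
# Hypothetical mapping of the Phase 2 rules to "auto-approve" or "ask".
classify_change() {
    local path="$1" coverage="${2:-0}" name
    name=$(basename "$path")
    case "$name" in
        # Test files: auto-approve
        *_test.*|*.spec.*) echo "auto-approve"; return 0 ;;
        # Configuration files: ask for approval
        .*rc|*.config.*) echo "ask"; return 0 ;;
        # Dependency manifests (assumed names): ask for approval
        package.json|package-lock.json) echo "ask"; return 0 ;;
    esac
    # Source code: auto-approve only when coverage is above 80%
    if [ "$coverage" -gt 80 ]; then
        echo "auto-approve"
    else
        echo "ask"
    fi
}
```

With this reading, `classify_change src/cart.ts 85` prints `auto-approve`, while `classify_change vite.config.ts 95` prints `ask` no matter how high the coverage is.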

Phase 3: Active Monitoring (Expert Mode)

This is the 9% zone. Auto-approve is the default, but you actively watch for the three drifts. Your CLAUDE.md is now a mature set of guardrails, and your intervention is strategic, not constant.
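Cumulative deviation is the hardest drift to spot by eye, so a rough proxy can help decide when to look closer. One possible proxy, sketched below, is scope: list the files changed so far and flag any that fall outside the directories the task was supposed to touch. `out_of_scope` is a hypothetical helper, and scope is only one signal of drift, not a complete measure.

```shell
# out_of_scope <allowed-prefix>...
# Hypothetical helper: reads changed file paths on stdin, one per line,
# and prints those that do not start with any allowed prefix.
# Returns 1 if at least one path was out of scope, 0 otherwise.
out_of_scope() {
    local path prefix ok drift=0
    while IFS= read -r path; do
        [ -n "$path" ] || continue
        ok=0
        for prefix in "$@"; do
            case "$path" in
                "$prefix"*) ok=1 ;;
            esac
        done
        if [ "$ok" -eq 0 ]; then
            echo "$path"
            drift=1
        fi
    done
    return "$drift"
}
```

Assuming the work branched from `main`, a periodic check could be `git diff --name-only main...HEAD | out_of_scope src/billing/ tests/billing/`. Any output is a prompt to pause and review the agent's direction.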

The goal isn't 100% autonomy. It's optimal autonomy—letting the agent run fast while you guard the critical 9%.