VMLOps, a prominent community and resource hub for machine learning operations, has released "The NLP Engineer's System Design Interview Guide." The guide addresses the specific challenges of evaluating and demonstrating expertise in designing production-ready Natural Language Processing (NLP) systems, a skill tested in a common and demanding stage of technical hiring for AI roles.

Key Takeaways

- VMLOps has published "The NLP Engineer's System Design Interview Guide," a detailed resource covering architecture, scaling, and trade-offs for real-world NLP systems.

- It provides a structured framework for both interviewers and candidates.

What's in the Guide?

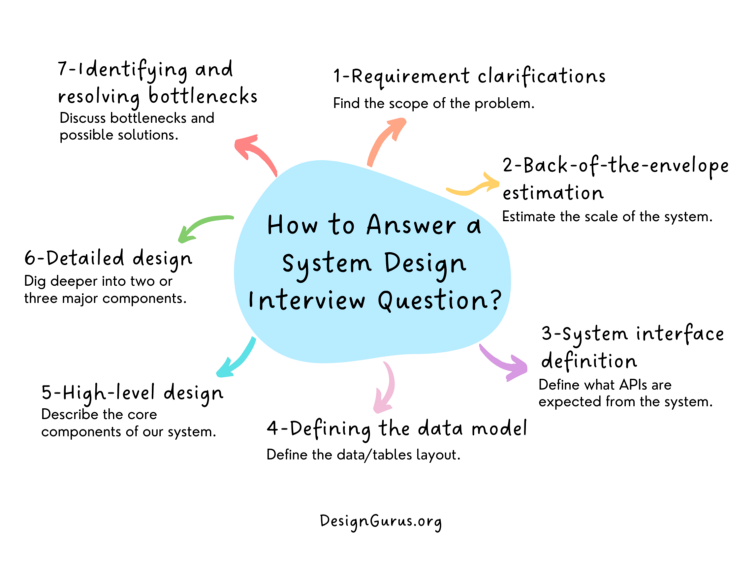

The guide structures the nebulous task of an NLP system design interview into a concrete, repeatable process. It moves beyond abstract algorithmic knowledge to focus on the practical engineering decisions required to build, deploy, and maintain NLP applications at scale.

Core sections of the guide are expected to cover:

- Problem Scoping & Requirements: Defining functional and non-functional requirements (latency, throughput, accuracy, cost), and identifying key constraints.

- High-Level Architecture: Designing data pipelines, model serving layers, feature stores, and monitoring systems. This includes choosing between monolithic and microservices architectures.

- Model Selection & Trade-offs: Evaluating when to use pre-trained foundation models via API (e.g., GPT-4, Claude) versus fine-tuning open-source models (e.g., Llama, Mistral) versus training from scratch. The guide is expected to detail the cost, latency, control, and data privacy implications of each path.

- Data Pipeline Design: Handling data ingestion, labeling, versioning, and preprocessing for continuous training cycles.

- Scaling & Optimization: Strategies for load balancing, model quantization, distillation, caching, and efficient GPU utilization to handle spikes in demand.

- Monitoring & Observability: Defining key metrics (e.g., prediction latency, drift, business KPIs) and building alerting systems to ensure model reliability in production.

- Failure Modes & Mitigations: Planning for model degradation, data pipeline failures, and adversarial inputs.
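To make the drift metric in the monitoring item concrete, here is a minimal sketch of one widely used drift score, the Population Stability Index (PSI), applied to a numeric input feature such as token count per request. It is an illustration only and is not taken from the guide: the function name `psi`, the choice of feature, the bin count, and the sample data are all assumptions.

```python
import math
from collections import Counter


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a live sample.

    Bins are equal-width over the baseline's range; live values outside
    that range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for a constant baseline

    def proportions(values):
        counts = Counter(
            min(max(int((v - lo) / width), 0), bins - 1) for v in values
        )
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(counts.get(i, 0) / len(values), eps) for i in range(bins)]

    p, q = proportions(expected), proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Hypothetical data: token counts per request at training time vs. in production.
baseline_lengths = list(range(100))
live_lengths = [n + 50 for n in baseline_lengths]  # inputs have grown longer

print(psi(baseline_lengths, baseline_lengths))
print(psi(baseline_lengths, live_lengths))
```

A score of zero means the two binned distributions match. The alert threshold is a design choice; values around 0.25 are often quoted as a rule of thumb for a significant shift, but the right cutoff depends on the feature and the cost of a false alarm.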

Why This Guide Matters

System design interviews for backend and infrastructure roles have long been standardized, but equivalent frameworks for ML and NLP roles have been lacking. Candidates are often asked vague questions like "design a chatbot" or "scale a recommendation system" without a clear rubric for success. This guide provides that missing structure, benefiting both sides of the interview table.

For interviewers, it offers a consistent blueprint to assess a candidate's practical engineering judgment, moving beyond their ability to recite transformer architecture details. For candidates, it demystifies the process and provides a study roadmap focused on the end-to-end lifecycle of an NLP product.

The release reflects the industry's maturation. As NLP models transition from research prototypes to core business infrastructure, the skill set required shifts from purely research-oriented to include robust MLOps and software engineering principles.

gentic.news Analysis

The guide's publication responds directly to a tangible market need we've tracked closely. In our December 2025 analysis, "The Great Consolidation: MLOps Tools Battle for the Enterprise Stack," we noted the rising demand for professionals who can bridge advanced model knowledge with production engineering. VMLOps, by focusing on this intersection, is cementing its role as a key resource provider in this niche.

The guide's emphasis on trade-offs between API-based and self-hosted models is particularly timely. It follows a trend we highlighted in our Q1 2026 coverage of Databricks' $2.1B acquisition of Predibase, a move squarely aimed at simplifying the fine-tuning and deployment of open-source LLMs. This acquisition signaled a major enterprise push towards bringing model development in-house, a strategy that demands exactly the system design skills this guide outlines. Candidates who can articulate the cost-benefit analysis of using Anthropic's Claude API versus deploying a fine-tuned Meta Llama model on AWS Inferentia chips will have a significant advantage.
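As a rough illustration of that cost-benefit analysis, the sketch below compares a per-token API bill with the cost of always-on self-hosted instances. Every number is a hypothetical placeholder, not a real price from Anthropic, AWS, or any other vendor, and the class and function names are invented for this example. The model also sizes capacity to average load, so it ignores peak traffic, which usually pushes the self-hosted figure higher.

```python
import math
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # average hours in a month


@dataclass
class ApiPricing:
    usd_per_1k_tokens: float  # hypothetical blended input/output price


@dataclass
class SelfHosted:
    usd_per_instance_hour: float  # hypothetical accelerator instance price
    tokens_per_second: float      # hypothetical sustained throughput per instance
    fixed_usd_per_month: float    # hypothetical engineering and ops overhead


def api_monthly_cost(pricing, tokens_per_month):
    """API cost scales linearly with token volume."""
    return pricing.usd_per_1k_tokens * tokens_per_month / 1000


def self_hosted_monthly_cost(host, tokens_per_month):
    """Always-on instances sized to average load, plus fixed overhead."""
    capacity = host.tokens_per_second * 3600 * HOURS_PER_MONTH
    instances = max(1, math.ceil(tokens_per_month / capacity))
    return (instances * host.usd_per_instance_hour * HOURS_PER_MONTH
            + host.fixed_usd_per_month)


# Placeholder inputs chosen only to show a crossover point.
api = ApiPricing(usd_per_1k_tokens=0.01)
host = SelfHosted(usd_per_instance_hour=2.0, tokens_per_second=1000.0,
                  fixed_usd_per_month=5000.0)

for volume in (1e8, 1e9):
    print(volume, api_monthly_cost(api, volume),
          self_hosted_monthly_cost(host, volume))
```

With these placeholder inputs the API is cheaper at the lower volume and self-hosting is cheaper at the higher one. In an interview, the crossover point and the assumptions behind it matter more than the specific figures.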

This also aligns with ongoing work in the vLLM and TensorRT-LLM projects, which focus on the serving and scaling portion of the architecture puzzle. The VMLOps guide provides the essential upstream context (the why and the what) before those tools handle the how. For practitioners, mastering the framework in this guide, combined with hands-on experience with these serving technologies, creates a powerful and marketable skill set.

Frequently Asked Questions

What is an NLP System Design Interview?

An NLP System Design Interview is a technical assessment where a candidate is presented with an open-ended product requirement (e.g., "Design a real-time document translation service for a website") and must articulate a high-level technical architecture. The goal is to evaluate their ability to make engineering trade-offs, select appropriate models and infrastructure, plan for scale, and consider the full ML lifecycle, not just model accuracy.
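Planning for scale in a question like the translation example usually starts with a back-of-envelope capacity estimate. The sketch below uses Little's law (requests in flight = arrival rate × mean latency) to size the serving fleet. The function name, the traffic figures, and the 70% utilization target are illustrative assumptions, not values from the guide.

```python
import math


def replicas_needed(peak_rps, mean_latency_s, concurrency_per_replica,
                    target_utilization=0.7):
    """Estimate how many model replicas are needed to serve peak traffic.

    Little's law gives the average number of requests in flight; each
    replica is assumed to handle a fixed number of concurrent requests
    and is run below full utilization to leave headroom for bursts.
    """
    in_flight = peak_rps * mean_latency_s
    usable_slots = concurrency_per_replica * target_utilization
    return math.ceil(in_flight / usable_slots)


# Hypothetical translation service: 200 requests/s at peak, 400 ms mean
# latency, and 8 concurrent requests per GPU-backed replica.
print(replicas_needed(peak_rps=200, mean_latency_s=0.4,
                      concurrency_per_replica=8))
```

An estimate like this is only a starting point, since batching, input length variance, and tail latency targets all change the real number.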

How is this different from a software engineering system design interview?

While a software engineering system design interview focuses on web-scale distributed systems (databases, caches, CDNs, APIs), an NLP system design interview incorporates all those elements plus the unique complexities of machine learning. This includes data pipeline design, model training/serving infrastructure, GPU management, handling non-deterministic model outputs, monitoring for concept drift, and the significant cost trade-offs between different model deployment strategies.

Who should use this guide?

The guide is primarily targeted at two groups: (1) NLP Engineers, ML Engineers, and MLOps professionals preparing for senior or staff-level interviews at tech companies, and (2) Hiring Managers and Technical Interviewers at these companies who need to design consistent and effective evaluation processes for ML-centric roles.

Where can I find the guide?

The guide is available via the link in the VMLOps announcement. It is likely hosted on their community platform or a dedicated resource site, potentially as a downloadable PDF or a series of blog posts.