Google has established a dedicated "strike team" within its Google DeepMind division, tasked with urgently improving the company's artificial intelligence models for code generation and software engineering tasks. The initiative is reportedly driven, in part, by competitive pressure from rival AI lab Anthropic, whose coding tools are viewed internally at Google as being more advanced.

According to sources, the push involves high-level leadership, including Google co-founder Sergey Brin, and is focused on developing "agentic" AI systems. These systems are designed to handle complex, multi-step coding workflows autonomously, with an ultimate, ambitious goal of automating aspects of AI research itself. The internal sentiment, as reported, is clear: "Coding is the way to win."

Key Takeaways

- Google has formed a specialized team within DeepMind to rapidly improve its AI coding capabilities.

- The move is driven in part by internal assessments that Anthropic's coding tools are more advanced, and leadership is pushing for agentic systems in response.

What Happened

The core development is organizational: the creation of a focused, high-priority team within Google DeepMind. Unlike broader research groups, a "strike team" implies a mission-oriented structure designed to cut through bureaucracy and deliver rapid, tangible improvements on a specific problem—in this case, AI-powered software development.

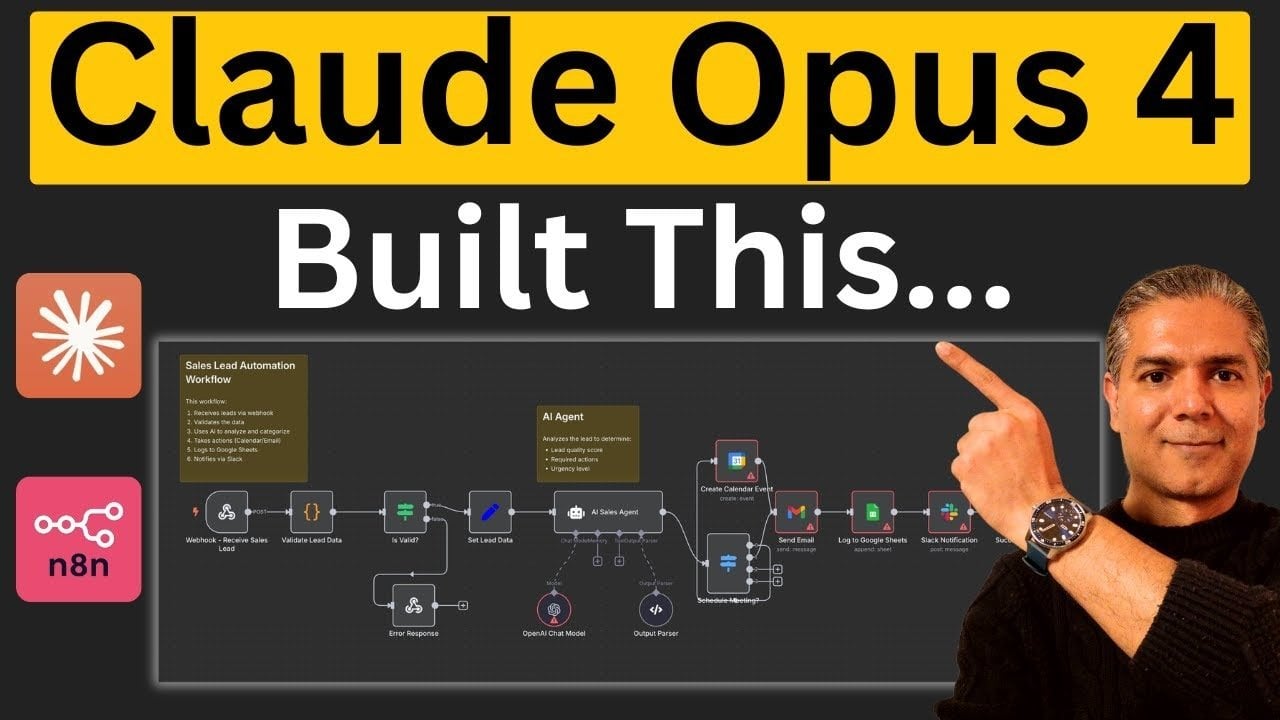

The catalyst is external competition. The source indicates that Anthropic's tools, most likely Claude Code or related coding assistants, are perceived within Google as technically superior. This perception has created internal pressure to close the gap and regain a leadership position in a domain considered foundational for the future of AI.

The Strategic Focus: Agentic AI for Code

The team's mandate extends beyond incremental improvements to existing code-completion models. Leadership is directing efforts toward "agentic" AI systems. In practical terms, this means moving from models that suggest the next line or function to systems that can:

- Understand a high-level software requirement or bug report.

- Break it down into a sequence of sub-tasks (e.g., design data structure, write function A, write function B, integrate, test).

- Execute those tasks across multiple steps, potentially involving code generation, editing, testing, and debugging in a loop.

- Operate with a degree of autonomy, making decisions about how to implement solutions.
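
The plan, execute, test, and debug loop described in the list above can be sketched in a few lines of Python. This is a minimal illustration only, not Google's or Anthropic's implementation: `plan`, `run_agent`, `fake_generate`, and `fake_check` are hypothetical names, and the model call and test runner are replaced by stubs so the control flow can run on its own.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class StepResult:
    task: str
    attempts: int
    passed: bool


def plan(requirement: str) -> List[str]:
    # Hypothetical planner: a real agent would ask a model to decompose
    # the requirement; here the sub-tasks are a fixed sequence of stages.
    stages = ("design", "implement", "integrate", "test")
    return [f"{stage}: {requirement}" for stage in stages]


def run_agent(
    requirement: str,
    generate: Callable[[str, str], str],
    check: Callable[[str, str], Tuple[bool, str]],
    max_attempts: int = 3,
) -> List[StepResult]:
    results = []
    for task in plan(requirement):
        feedback = ""
        passed = False
        attempts = 0
        # Generate, check, and retry with feedback until the step passes
        # or the attempt budget runs out.
        while attempts < max_attempts and not passed:
            attempts += 1
            candidate = generate(task, feedback)
            passed, feedback = check(task, candidate)
        results.append(StepResult(task, attempts, passed))
        if not passed:
            break  # give up on later steps and hand back to a human
    return results


def fake_generate(task: str, feedback: str) -> str:
    # Stub for a model call: it only produces working code after
    # it has received failure feedback once.
    return "fixed" if feedback else "buggy"


def fake_check(task: str, candidate: str) -> Tuple[bool, str]:
    # Stub for a test runner: returns (passed, feedback).
    if candidate == "fixed":
        return True, ""
    return False, "tests failed"


if __name__ == "__main__":
    for result in run_agent("add user authentication", fake_generate, fake_check):
        print(result)
```

The attempt budget and the early exit are the design choices worth noting: they bound how long the system acts on its own before control returns to a person, which is one way to read "a degree of autonomy."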

The long-term vision hinted at—automating AI research—represents the zenith of this capability. It suggests systems that could read research papers, formulate hypotheses, design experiments (including writing the necessary training and evaluation code), and iterate on AI model architectures.

The Competitive Landscape

This move signals an intensification of the "AI code war." The market for AI coding assistants is fiercely contested, with significant offerings from:

- Microsoft/GitHub: GitHub Copilot, powered by models from OpenAI (a key Anthropic rival), is widely regarded as the market-share leader.

- Anthropic: Claude Code and Claude 3.5 Sonnet have gained a strong reputation, particularly for code understanding and complex task handling, which appears to be the direct impetus for Google's action.

- Amazon: CodeWhisperer, which has since been folded into Amazon Q Developer.

- Startups: Such as Replit, Codium, and others.

Google's primary entry is through its Gemini models, available via its AI Studio and integrated into its IDEs. While capable, the consensus among developers has often placed Claude and OpenAI's offerings (GPT-4, o1) ahead in sophisticated coding benchmarks and real-world usability for complex tasks. This internal acknowledgment of a competitive deficit is a notable admission.

What This Means in Practice

For developers and companies, this competitive scramble is likely to lead to:

- Rapid Iteration: Expect faster updates and more ambitious feature releases from Google's coding AI offerings (e.g., Gemini Code Assist, integrations in Colab, IDX).

- A Shift from Assistants to Agents: The industry focus will move from "copilots" that complete lines to "agents" that can own discrete tickets or features. Google's strike team is a bet on this next phase.

- Increased Benchmark Scrutiny: Performance on benchmarks like SWE-Bench (which tests models on real GitHub issues) and HumanEval will become even more critical as public proof points of capability.

gentic.news Analysis

This organizational shift is a direct, tactical response to a sustained competitive threat. It follows a pattern we've observed where Google, after integrating its AI research arms into DeepMind, uses focused "moonshot" teams to tackle high-priority gaps. The involvement of Sergey Brin underscores the strategic importance; Brin has been increasingly hands-on with technical direction at Google's AI labs since late 2023, particularly following the launch of OpenAI's GPT-4.

The specific citation of Anthropic as the pressure point is significant. It confirms a trend we noted in our analysis of Claude 3.5 Sonnet's launch, where its coding proficiency was a standout feature that reshaped developer perceptions. Anthropic, despite being smaller than Google or OpenAI, has consistently punched above its weight in model quality, forcing reactions from larger players. This move also aligns with the broader industry trend we covered in The Agentic Shift: From Copilots to Engineers, where the next battleground is clearly multi-step, autonomous task completion.

However, forming a strike team is only the first step. The real challenge is execution. Google DeepMind must now deliver models that not only match but surpass the coding fluency, reasoning, and agentic planning demonstrated by Claude and OpenAI's o1-series models. The timeline for tangible outputs from this team will be a key indicator of whether Google can convert its vast resources into a decisive technical lead in this critical domain.

Frequently Asked Questions

What is an "agentic" AI system for coding?

An agentic AI system for coding goes beyond suggesting code snippets. It can take a high-level instruction (like "add user authentication to this app"), plan the necessary steps, write multiple files of code, run tests, debug errors, and iterate—all with minimal human intervention. It acts more like an autonomous software engineer than a tool.

Why is Anthropic considered such a threat to Google in AI coding?

Anthropic's Claude models, particularly Claude 3.5 Sonnet, have consistently ranked at or near the top of independent coding benchmarks like SWE-Bench and HumanEval. More importantly, they have gained a strong reputation among developers for superior reasoning, code understanding, and handling of complex, multi-file tasks. This perceived quality advantage has eroded Google's position in a market it needs to win.

What does Sergey Brin's involvement mean for this project?

Sergey Brin's active involvement signals that this initiative has the highest possible priority within Google. As a co-founder and a respected technical voice, his push indicates that improving AI coding models is not just a product goal but a strategic imperative for Google's future in AI. It helps the "strike team" secure resources and cut through internal barriers.

When will we see results from this Google DeepMind strike team?

There is no public timeline. However, the nature of a "strike team" suggests the goal is rapid iteration. The industry should watch for updates to Google's Gemini Code models, new research papers on agentic coding, or product announcements related to its developer platforms (like IDX or Colab) in the coming 6-12 months as early indicators of the team's output.