In what may be the most ambitious infrastructure investment in technology history, Google is reportedly planning to spend approximately $1.9 trillion over the next decade to build what industry observers are calling a "nearly untouchable advantage" in artificial intelligence. This massive capital expenditure represents a strategic shift toward vertical integration that could fundamentally alter the competitive dynamics of the AI industry.

The Scale of Investment: From $90B to $185B in a Single Year

According to a Forbes report cited by AI commentator Rohan Paul, Google's capital spending is undergoing a dramatic acceleration. The company is projected to increase its infrastructure investment from $90 billion in 2025 to a staggering $185 billion in 2026 alone. This more-than-doubling of capital expenditure in a single year (an increase of roughly 106%) underscores the urgency with which Google is approaching the AI infrastructure race.

This spending spree dwarfs previous technology infrastructure investments and represents a fundamental reallocation of resources toward what Google clearly views as an existential priority. The $1.9 trillion ten-year projection suggests that this is not a temporary surge but rather a sustained, long-term commitment to AI dominance.
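The reported figures can be sanity-checked with a few lines of arithmetic. The sketch below uses only the three numbers quoted in this article ($90 billion, $185 billion, and $1.9 trillion over ten years). The variable and function names are illustrative, and the even ten-year split is a simplifying assumption, not a breakdown from the Forbes report.

```python
# Figures as quoted in the article, in billions of US dollars.
CAPEX_2025_B = 90
CAPEX_2026_B = 185
TEN_YEAR_TOTAL_B = 1_900
YEARS = 10  # assumption: the total is spread evenly across ten years


def growth_ratio(previous: float, current: float) -> float:
    """Return how many times larger `current` is than `previous`."""
    return current / previous


def average_annual(total: float, years: int) -> float:
    """Return the simple average spend per year."""
    return total / years


ratio = growth_ratio(CAPEX_2025_B, CAPEX_2026_B)
pct_increase = (ratio - 1) * 100
avg = average_annual(TEN_YEAR_TOTAL_B, YEARS)

print(f"2025 -> 2026 growth: {ratio:.2f}x ({pct_increase:.0f}% increase)")
print(f"Implied average annual spend: ${avg:.0f}B")
print(f"Average vs. 2026 level: {avg / CAPEX_2026_B:.2f}x")
```

Under that even-split assumption, the ten-year total works out to about $190 billion per year, close to the projected 2026 level, which is consistent with reading the 2026 figure as a sustained run rate rather than a one-off peak.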

Controlling Every Layer: The Vertical Integration Strategy

What makes Google's approach particularly significant is its comprehensive nature. Rather than focusing on just one aspect of AI infrastructure, the company is systematically working to control every layer of the AI stack:

1. Chip Layer: The TPU Revolution

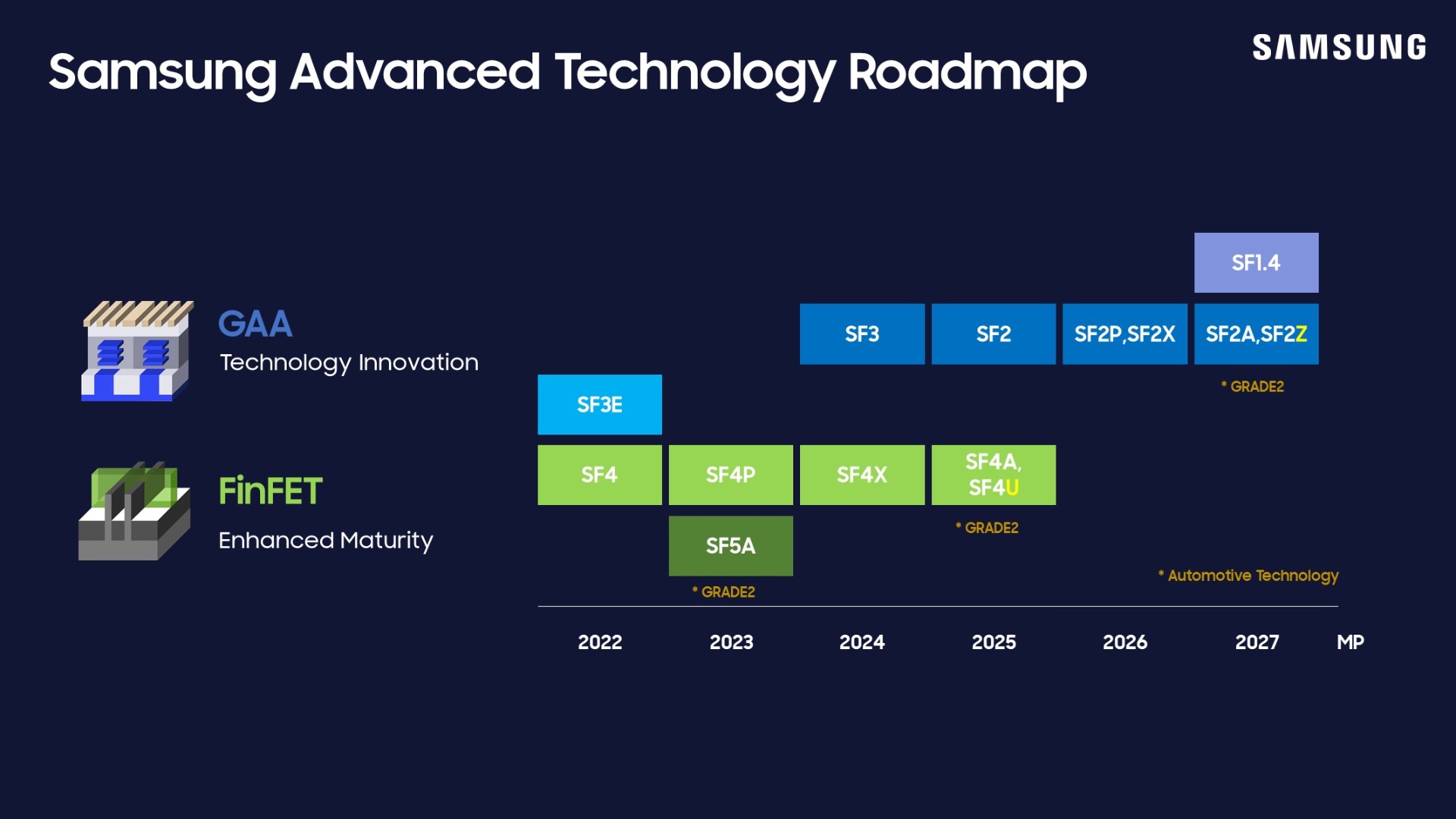

A substantial portion of Google's budget is directed toward its Tensor Processing Units (TPUs), custom-designed AI accelerators that have become increasingly competitive with Nvidia's dominant GPUs. These chips have evolved from internal tools to commercial products, with other AI companies now renting TPU capacity through Google Cloud services as a viable alternative to Nvidia hardware.

2. Data Center Infrastructure: Modular Design Innovation

To support this massive expansion, Google is shifting toward modular data center designs—standardized blueprints that can be rapidly deployed worldwide. This approach allows for faster construction, greater consistency, and potentially lower costs compared to traditional custom-built facilities.

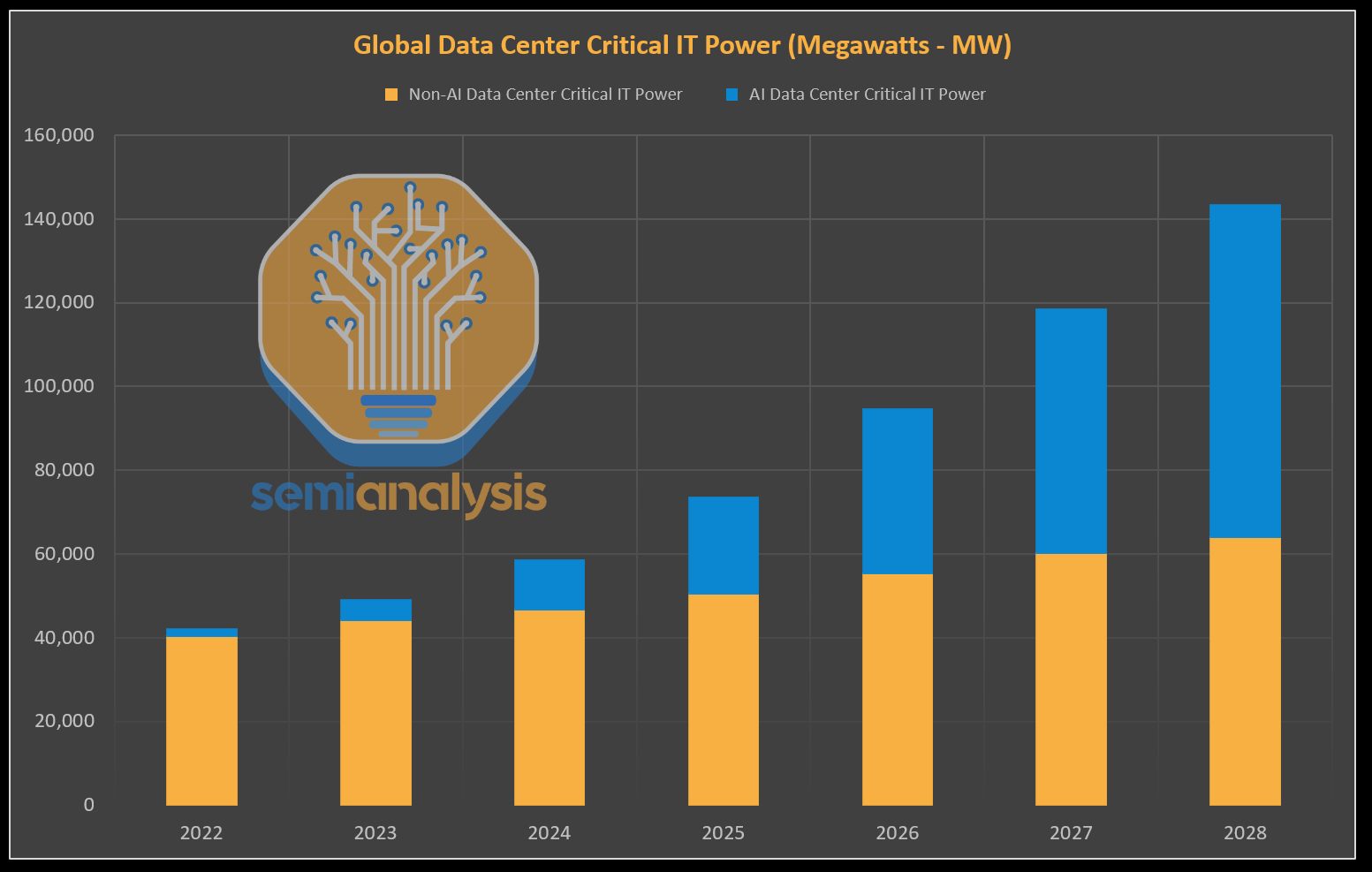

3. Power Infrastructure: Securing the Energy Backbone

Perhaps the most forward-thinking aspect of Google's strategy involves direct engagement with utility companies to secure the enormous power requirements of AI data centers. These facilities, which must operate 24/7, consume electricity at industrial scales, making reliable power procurement a critical competitive factor.

Financial Independence: Funding Through Cash Reserves

Unlike many competitors that must rely on external financing, Google has a significant advantage: it is profitable enough to fund these massive projects from its own cash reserves. This financial independence allows for more aggressive investment timelines and a degree of strategic flexibility that debt-dependent competitors cannot match.

The company's ability to self-fund such enormous infrastructure projects creates a significant barrier to entry for smaller players and even challenges well-funded competitors who lack Google's scale and profitability.

Industry Implications: Reshaping the AI Competitive Landscape

Google's vertical integration strategy represents a fundamental shift in how AI infrastructure is developed and deployed. By controlling the entire stack—from silicon design to power procurement—Google aims to:

- Reduce dependency on third-party suppliers like Nvidia

- Optimize performance through tightly integrated hardware and software

- Control costs across the entire value chain

- Create competitive moats that are difficult for rivals to breach

The availability of TPUs through Google Cloud also creates an interesting dynamic: Google simultaneously competes with and supplies infrastructure to other AI companies, potentially creating both competitive tension and dependency relationships.

The Global Scale: Worldwide Infrastructure Deployment

Google's modular data center approach suggests a strategy of global saturation. By developing standardized designs that can be deployed rapidly in diverse locations, the company can expand its AI infrastructure footprint worldwide while maintaining consistency and efficiency.

This global expansion is particularly important for latency-sensitive AI applications, as it allows Google to position computational resources closer to end users around the world.

Challenges and Considerations

While Google's strategy appears formidable, it's not without challenges:

- Regulatory scrutiny of such massive infrastructure investments and potential market dominance

- Technological risk if alternative architectures prove superior to Google's integrated approach

- Environmental concerns regarding the energy consumption of expanding data center networks

- Market dynamics as competitors develop their own vertical integration strategies

Conclusion: A New Era of AI Infrastructure Competition

Google's $1.9 trillion infrastructure plan represents more than just a large investment—it signals a fundamental rethinking of how AI companies should approach scale and competition. By controlling every layer from chips to power, Google is building what could become one of the most formidable competitive advantages in technology history.

As this strategy unfolds over the coming decade, it will likely force responses from competitors, reshape supply chains, and potentially accelerate AI capabilities through more tightly integrated hardware and software ecosystems. The race for AI supremacy is increasingly becoming a race for infrastructure dominance, and Google has just placed one of the largest bets the industry has ever seen.

Source: Forbes report cited by Rohan Paul on X, detailing Google's data center buildout and infrastructure investment strategy.