What Happened

Former Google CEO and current AI policy advocate Eric Schmidt has provided a stark cost estimate for scaling AI compute infrastructure in the United States. According to a post by AI commentator Rohan Paul, Schmidt stated that building 1 gigawatt (GW) of data center capacity costs approximately $50 billion.

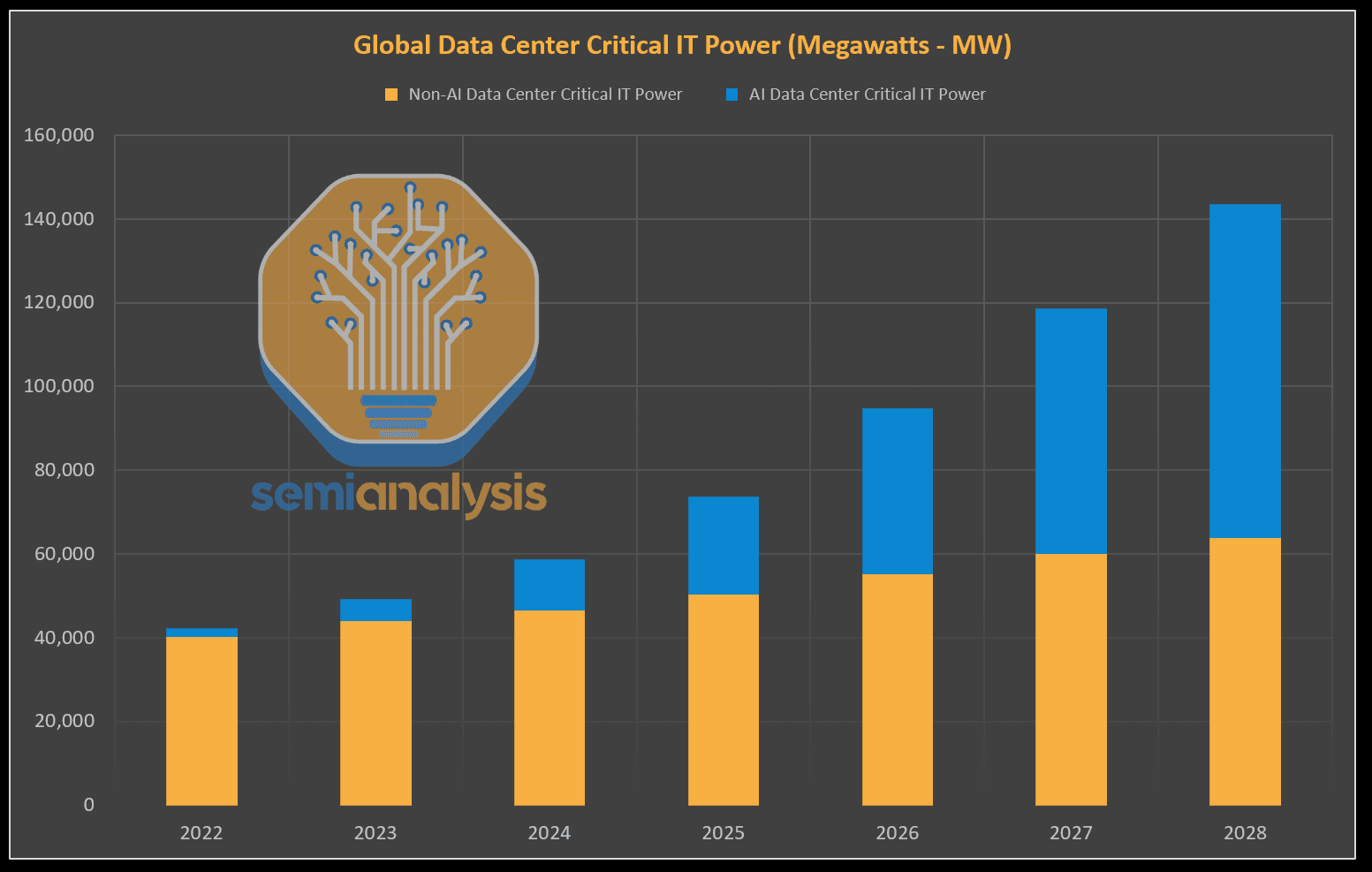

Extrapolating from this figure, Schmidt estimates that reaching a total of 100 GW of power dedicated to AI data centers in the U.S. would require roughly $5 trillion in investment over five years. For context, this scale of investment would equate to about 1% of U.S. GDP growth. At this level of deployment, AI data centers would consume approximately 10% of the United States' total electricity supply.
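The extrapolation is simple linear scaling. Below is a minimal Python sketch of that arithmetic. It assumes cost grows linearly with capacity and that spending is spread evenly across the five years. Neither simplification is stated in the source, and the function names are illustrative only.

```python
"""Back-of-the-envelope capex extrapolation using the figures relayed from Schmidt."""

COST_PER_GW_USD = 50e9  # ~$50B per GW of AI data center capacity (as relayed)
TARGET_GW = 100         # target U.S. AI data center capacity
YEARS = 5               # build-out period


def total_capex(cost_per_gw: float, gigawatts: float) -> float:
    """Total capital cost, assuming cost scales linearly with capacity."""
    return cost_per_gw * gigawatts


def annual_capex(total: float, years: int) -> float:
    """Average spend per year, assuming spending is spread evenly."""
    return total / years


if __name__ == "__main__":
    total = total_capex(COST_PER_GW_USD, TARGET_GW)
    per_year = annual_capex(total, YEARS)
    print(f"Total: ${total / 1e12:.1f} trillion")        # Total: $5.0 trillion
    print(f"Per year: ${per_year / 1e12:.1f} trillion")  # Per year: $1.0 trillion
```

Real build-outs are lumpy and per-GW costs shift with chip prices and power availability, so the even split is a convenience rather than a forecast.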

Schmidt's concluding point, as relayed, is that "AI still has lots of room to scale," implying these are the capital and energy prerequisites for that next phase of growth.

Context: The AI Compute Arms Race

This high-level estimate from a major industry figure comes amid an unprecedented global scramble for AI compute. Training and serving large language models (LLMs) such as GPT-4, Claude 3, and Gemini is extraordinarily power-intensive. That demand has produced a shortage of advanced GPUs (notably from NVIDIA), soaring valuations for chip companies, and a rush to secure energy contracts and build new data centers.

Schmidt's $50B/GW figure likely covers the full stack: not only the GPU-packed servers and the networking that links them, but also the real estate, cooling infrastructure, and critical power delivery systems. The largest AI data centers today are moving toward the 100+ megawatt (MW) scale, with plans for campuses exceeding 1 GW.

The Energy Imperative

The 10% of U.S. electricity figure underscores the central challenge of AI's physical footprint. Data centers are already significant energy consumers, and AI workloads draw far more power per rack than traditional cloud storage or web hosting. This surge in demand is straining power grids and forcing a reevaluation of energy policy, with a focus on building new generation capacity, often from natural gas in the short term, despite industry pledges to pursue carbon-free energy.

gentic.news Analysis

Eric Schmidt's $5 trillion estimate is a sobering quantification of the infrastructure mountain the AI industry must climb. This isn't just a chip problem; it's a macroeconomic and energy policy problem. Schmidt, who now chairs the Special Competitive Studies Project (SCSP) and is a prominent voice on U.S. AI competitiveness, is likely framing this cost as a national imperative, akin to a modern-day "Apollo program" for AI infrastructure.

This perspective connects directly to the broader strategic competition we've covered, particularly with China. In our analysis of the U.S. CHIPS Act and China's $47 billion chip fund, the focus was on semiconductor fabrication. Schmidt's comments expand the battlefield to the complete downstream infrastructure: the colossal data centers where those chips must run. His estimate implies that winning the AI race requires capital mobilization on a scale typically associated with wartime efforts or decades-long space programs.

This also aligns with the aggressive moves by the cloud hyperscalers we track. Microsoft's multibillion-dollar investments in OpenAI and its own data centers, Google's TPU v5p rollout, and Amazon's custom Trainium and Inferentia chips are all pieces of this $5 trillion puzzle. These companies are not just buying GPUs; they are designing their own silicon, investing in power generation, and securing decades-long energy contracts. Schmidt's number suggests the industry is still in the early innings of this build-out, and the financial and logistical barriers are likely to concentrate power among a few entities with the deepest pockets and the strongest government relationships.

Frequently Asked Questions

How much does 1 gigawatt (GW) of AI data center capacity cost?

According to the estimate relayed by Eric Schmidt, building 1 GW of data center capacity for AI workloads costs approximately $50 billion. This is most likely a full-stack figure covering the facility, advanced cooling systems, networking, and the vast arrays of expensive AI accelerators (such as NVIDIA H100 GPUs or GH200 Grace Hopper Superchips) needed to fill it.

What percentage of U.S. electricity would AI data centers use at 100 GW?

At a scale of 100 GW of power dedicated to AI data centers, Schmidt estimates they would consume about 10% of the total electricity supply in the United States. For comparison, all data centers worldwide were estimated to consume about 1 to 1.3% of global electricity in recent years. The difference reflects how much more power AI hardware draws per rack and per facility than traditional workloads, not lower efficiency per operation.
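Part of what makes a "10% of electricity" figure hard to interpret is that gigawatts measure power (capacity or instantaneous draw), while electricity use is usually reported as energy over a year. The Python sketch below converts a continuous 100 GW draw into annual energy and compares it against two possible denominators. The U.S. capacity and generation values are assumed round numbers of roughly the right order. They do not come from the source, so substitute current EIA data before relying on the output.

```python
"""Convert a sustained AI power draw (GW) into annual energy (TWh) and compare shares."""

HOURS_PER_YEAR = 8760  # 365 days x 24 hours

# ASSUMPTIONS (not from the source): round, order-of-magnitude U.S. figures.
# Replace with current EIA data before drawing conclusions.
ASSUMED_US_CAPACITY_GW = 1_250
ASSUMED_US_GENERATION_TWH = 4_200


def annual_energy_twh(avg_power_gw: float, utilization: float = 1.0) -> float:
    """Energy used in one year: GW x hours = GWh, then divide by 1,000 for TWh.

    utilization=1.0 means a continuous full draw, which is an upper bound.
    """
    return avg_power_gw * utilization * HOURS_PER_YEAR / 1000


def share(part: float, whole: float) -> float:
    """Fraction of `whole` represented by `part`."""
    return part / whole


if __name__ == "__main__":
    ai_gw = 100
    ai_twh = annual_energy_twh(ai_gw)
    print(f"Annual energy at full draw: {ai_twh:.0f} TWh")                        # 876 TWh
    print(f"Share of capacity: {share(ai_gw, ASSUMED_US_CAPACITY_GW):.1%}")        # 8.0%
    print(f"Share of generation: {share(ai_twh, ASSUMED_US_GENERATION_TWH):.1%}")  # 20.9%
```

Under these assumed inputs, a roughly 10% figure lines up more closely with a share of generating capacity than with a share of annual energy at full utilization. Lowering `utilization` narrows the gap.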

Is $5 trillion over 5 years a realistic estimate for U.S. AI infrastructure?

The $5 trillion figure is a back-of-the-envelope calculation ($50B/GW × 100 GW), so its value lies in the order of magnitude it conveys. It is consistent with the spending patterns of the tech giants and the scale of announced projects. Whether the total is precisely $5T matters less than the conclusion: scaling AI to its envisioned potential requires trillions, not billions, in infrastructure investment, making it one of the largest capital formation projects in modern history.

Who is Eric Schmidt and why is he commenting on this?

Eric Schmidt is the former CEO and Chairman of Google and remains a major figure in technology and national policy. He currently chairs the Special Competitive Studies Project (SCSP), a bipartisan nonprofit focused on strengthening U.S. long-term competitiveness in AI and other critical technologies. His comments reflect a strategic, high-level view of the resources required for the U.S. to maintain a lead in the global AI race.