What Happened

OpenAI engineer Matt Weinbach posted on X that GPT-5.5 is "an Nvidia Blackwell model through and through" — trained on, designed for, and served on Nvidia GB200/GB300 NVL72 systems. He also noted that OpenAI used GPT-5.5 itself to optimize its inference infrastructure, achieving a 20% increase in generation speed.

This is a rare technical disclosure from an OpenAI employee about the hardware-software co-design behind the latest model iteration. The claim ties GPT-5.5's architecture directly to Nvidia's Blackwell GPU platform, suggesting deep integration beyond simple deployment.

Technical Details

Weinbach's statement breaks down into three key claims:

- Training infrastructure: GPT-5.5 was trained on Nvidia GB200/GB300 NVL72 systems, the rack-scale Blackwell configurations that link 72 GPUs into a single NVLink domain.

- Hardware-native design: The model was "designed for" these systems, implying architecture decisions (e.g., model parallelism, memory layout, communication patterns) were made with Blackwell's specific capabilities in mind.

- Self-optimized inference: OpenAI used GPT-5.5 to optimize its own inference stack, yielding a 20% speedup. This suggests the model was employed to generate or tune kernel configurations, scheduling policies, or memory management strategies.
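Weinbach's post does not say how the optimization was carried out, so the following is only a minimal sketch of what a generic autotuning loop looks like: propose candidate configurations, benchmark each one, and keep the fastest. The parameter names (`tile_size`, `batch_size`) and the `toy_throughput` cost model are hypothetical stand-ins, not anything OpenAI has described. In a model-driven workflow, the candidates might come from the model instead of an exhaustive grid.

```python
from itertools import product
from typing import Callable, Dict, List, Tuple

Config = Dict[str, int]


def candidate_configs(search_space: Dict[str, List[int]]) -> List[Config]:
    """Expand a search space into every combination of parameter values."""
    keys = list(search_space)
    combos = product(*(search_space[k] for k in keys))
    return [dict(zip(keys, values)) for values in combos]


def pick_fastest(
    configs: List[Config], measure: Callable[[Config], float]
) -> Tuple[Config, float]:
    """Benchmark each config once and return the one with the highest score."""
    scored = [(measure(cfg), cfg) for cfg in configs]
    best_score, best_cfg = max(scored, key=lambda pair: pair[0])
    return best_cfg, best_score


def toy_throughput(cfg: Config) -> float:
    """Hypothetical cost model standing in for a real benchmark (tokens/sec)."""
    batch_term = 100.0 * cfg["batch_size"] / (cfg["batch_size"] + 8)
    tile_penalty = 1.0 - abs(cfg["tile_size"] - 128) / 512
    return batch_term * tile_penalty


# Hypothetical search space; real inference stacks expose very different knobs.
space = {"tile_size": [64, 128, 256], "batch_size": [8, 16, 32]}
best_cfg, best_score = pick_fastest(candidate_configs(space), toy_throughput)
print(best_cfg, best_score)
```

In practice the `measure` function would run the actual serving workload on target hardware, which is where most of the cost of this kind of tuning lies.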

How It Compares

| Aspect | Prior models | GPT-5.5 |
| --- | --- | --- |
| Training hardware | Hopper H100 clusters | Blackwell GB200/GB300 NVL72 |
| Hardware co-design | General-purpose | Blackwell-native |
| Inference optimization | Manual or separate tools | Self-optimized via model |
| Speed improvement | N/A | +20% generation speed |

While OpenAI has not disclosed GPT-5.5's parameter count or architecture details, the explicit Blackwell-native design is a departure from prior models that were trained on H100 clusters and ported to inference hardware post-hoc.

Why It Matters

This disclosure signals a trend toward tighter hardware-software integration in frontier AI models. Rather than training on one architecture and deploying on another, OpenAI is now building models that are co-optimized for a specific GPU platform from the start.

The 20% inference speedup from self-optimization is also notable. If GPT-5.5 can generate its own inference optimizations, it could reduce the need for manual kernel tuning and accelerate deployment cycles.
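To put the 20% figure in perspective, here is a small worked calculation. It assumes "generation speed" means throughput in tokens per second, which the post does not spell out, and the baseline of 100 tokens per second is a made-up number for illustration only. Under that reading, a 20% throughput gain shortens the time per token by about 16.7%, not 20%.

```python
def latency_reduction(speedup_fraction: float) -> float:
    """Fractional drop in time per token for a given fractional throughput gain."""
    return 1.0 - 1.0 / (1.0 + speedup_fraction)


def generation_time(num_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to generate num_tokens at a steady rate."""
    return num_tokens / tokens_per_second


baseline_tps = 100.0                # hypothetical baseline, not an OpenAI figure
improved_tps = baseline_tps * 1.20  # the reported 20% increase

print(f"time per token falls by {latency_reduction(0.20):.1%}")
print(f"1200 tokens before: {generation_time(1200, baseline_tps):.1f} s")
print(f"1200 tokens after:  {generation_time(1200, improved_tps):.1f} s")
```

The same relationship holds at any baseline: the relative time saved is always 1 - 1/1.2, regardless of the starting throughput.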

gentic.news Analysis

This is more than a product update — it's a strategic signal. OpenAI's explicit investment in Blackwell-native design means they're betting on Nvidia's roadmap for the next generation of training and inference. This aligns with our previous coverage of Nvidia's Blackwell ramp and the increasing demand for NVL72 systems among hyperscalers.

The self-optimization angle is particularly interesting. Using a model to improve its own inference stack is a narrow form of recursive self-improvement: the model speeds up the system that serves it, not its own weights or capabilities. The concept has been discussed theoretically but rarely demonstrated at production scale. If OpenAI can maintain or improve quality while cutting latency, it could give them a competitive edge in real-time applications.

However, this also creates lock-in risk. A model deeply optimized for Blackwell may not run as efficiently on competitor hardware (e.g., AMD MI300X or Intel Gaudi) without significant porting work. If OpenAI's future models are all Blackwell-native, they are tying their infrastructure strategy to Nvidia's execution.

Frequently Asked Questions

What is GPT-5.5?

GPT-5.5 is the latest iteration of OpenAI's large language model. According to an OpenAI engineer, it was trained on Nvidia's Blackwell GB200/GB300 NVL72 systems and designed from the ground up for that hardware platform.

How does Blackwell differ from Hopper?

Nvidia's Blackwell platform (GB200/GB300) offers higher memory bandwidth, faster interconnects, and an updated Transformer Engine with lower-precision (FP4) support compared to the previous Hopper generation (H100). The NVL72 configuration links 72 GPUs into a single rack-scale NVLink domain for massive model parallelism.

What does "self-optimized inference" mean?

OpenAI used GPT-5.5 itself to optimize its inference infrastructure — likely by generating or tuning kernel configurations, memory management, or scheduling policies — resulting in a 20% increase in generation speed.

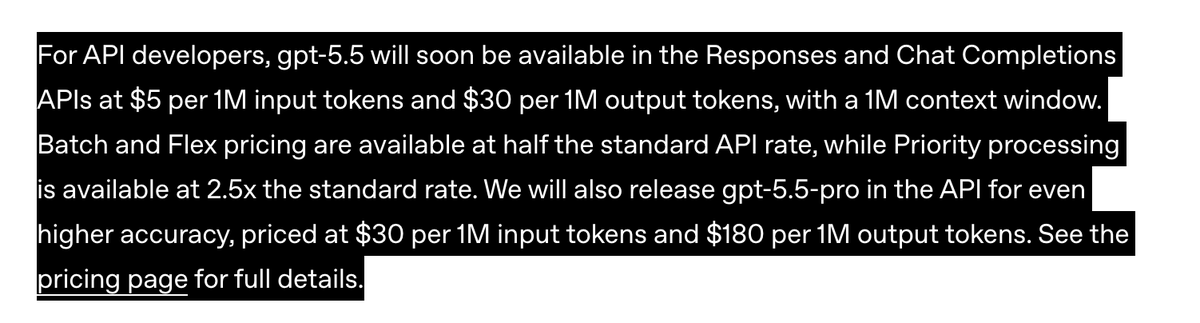

Is GPT-5.5 publicly available?

OpenAI has not announced public availability of GPT-5.5. The disclosure came from an employee's social media post, and the model may be for internal use or limited deployment.