OpenAI released GPT-5.5 on April 23rd, 2026, just 48 days after GPT-5.4. Most coverage treated that gap as a footnote. It isn't. It's the entire story.

Key Takeaways

- OpenAI released GPT-5.5, codenamed Spud, 48 days after GPT-5.4.

- The model itself is less interesting than what surrounds it: the super app strategy, the 35x cost reduction on GB200 hardware, and a 48-day release cadence that signals deliberate acceleration.

What GPT-5.5 Actually Does

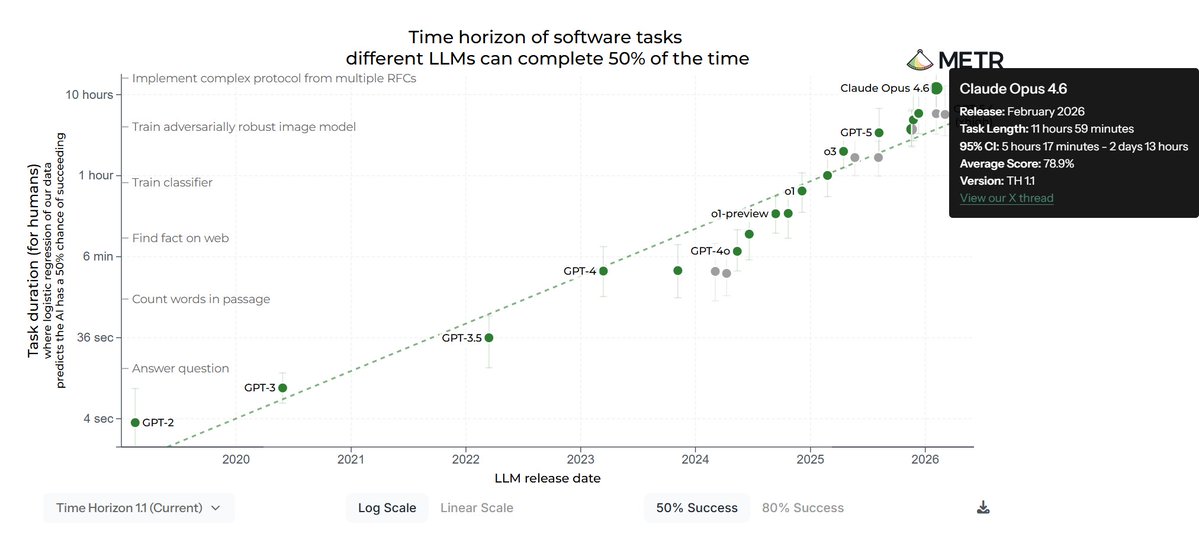

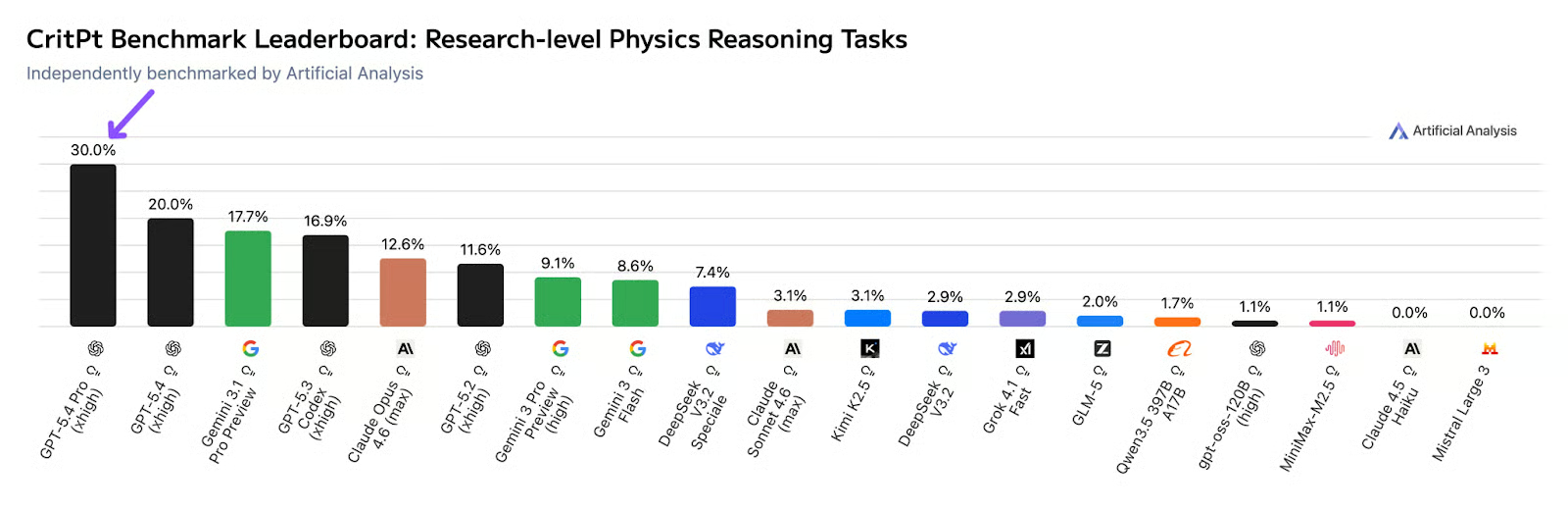

Yes, the benchmarks are real. GPT-5.5, codenamed "Spud" internally, outperforms Gemini 3.1 Pro and Claude Opus 4.5 across standard evaluations. OpenAI grades its own homework, so take the exact margins with appropriate skepticism, but independent labs have corroborated the direction, and the gaps are wide enough that the directional story holds.

The capability worth paying attention to isn't in the benchmark charts. It's what the model can now do with your operating system. GPT-5.5 can navigate your computer autonomously, debugging code, building spreadsheets, searching the web, and cross-referencing documents across applications without you babysitting every step. You describe a messy multi-part task. It plans, executes, checks itself, and keeps going. OpenAI's Chief Research Officer Mark Chen described the gains as "especially strong in agentic coding, computer use, and knowledge work."

Then, almost buried in the release noise, came something worth stopping on: GPT-5.5 is now good enough at scientific research to function as a genuine co-investigator. On multi-day experiments in genetics, bioinformatics, and drug discovery, the kind of work that consumes weeks of specialist time, the model posted leading performance on GeneBench and BixBench, two evaluations built around real scientific workflows rather than abstract reasoning tasks.

The Super App Play

Greg Brockman said something on the press call that deserved more front pages than it got. He described GPT-5.5 as "a real step forward towards the kind of computing that we expect in the future," then explicitly framed it as a step toward a super app: a single unified platform merging ChatGPT, Codex, and a dedicated AI browser called Atlas into a single desktop experience. One subscription. One interface. Your entire workflow.

If you've spent time in China or Southeast Asia, you already understand the template. WeChat. Grab. Gojek. One app where you message, pay, book, shop, and manage your digital life without ever switching contexts. The West never produced one. OpenAI apparently wants to change that.

In practice, the vision looks like this: you open one application, describe what you need — research a competitor, draft a strategy document, pull the key numbers into a spreadsheet, email the summary to three colleagues, schedule a Thursday follow-up — and the system handles the handoffs between those tasks without you managing any of them. No tab-switching. No copy-pasting between tools. No workflow coordination overhead. You describe the outcome; it handles the path.

GPT-5.5 is the engine that makes that worth attempting. Atlas and Codex are the layers built around it.

Why the Economics Actually Work This Time

The part of the release that got almost no attention is the part that makes everything else structurally credible.

GPT-5.5 runs on NVIDIA's GB200 NVL72 rack-scale systems, hardware that delivers roughly 35x lower cost per million tokens than the previous generation. OpenAI has committed to deploying over 10 gigawatts of this infrastructure. The first jointly deployed cluster already contains 100,000 GPUs.

When I first saw that 35x figure, I assumed it was marketing. Then I read what OpenAI and NVIDIA published around the launch. It holds up, and it's the reason the super app strategy makes financial sense rather than just strategic sense. You can't bundle three frontier AI products into a $20/month subscription on legacy GPU clusters. The math collapses. On GB200s, it doesn't. The super app isn't an aspiration statement; it's an infrastructure bet that's already been made.
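To make the bundling arithmetic concrete, here is a rough sketch of how a 35x drop in cost per million tokens changes the serving cost of one subscriber relative to a $20/month plan. Only the $20 price and the 35x ratio come from the figures above. The legacy cost per million tokens and the monthly token volume are hypothetical placeholders, not published numbers.

```python
def monthly_serving_cost(tokens_per_month: float, cost_per_million: float) -> float:
    """Dollar cost of serving one subscriber for a month."""
    return tokens_per_month / 1_000_000 * cost_per_million


SUBSCRIPTION_PRICE = 20.0        # $/month, from the article
COST_REDUCTION = 35.0            # "35x" figure, from the article

# Hypothetical inputs, chosen only to make the arithmetic visible.
LEGACY_COST_PER_MILLION = 7.0    # assumed $/1M tokens on older hardware
TOKENS_PER_MONTH = 10_000_000    # assumed heavy-user token volume

legacy = monthly_serving_cost(TOKENS_PER_MONTH, LEGACY_COST_PER_MILLION)
gb200 = monthly_serving_cost(
    TOKENS_PER_MONTH, LEGACY_COST_PER_MILLION / COST_REDUCTION
)

for label, cost in (("legacy", legacy), ("GB200", gb200)):
    share = cost / SUBSCRIPTION_PRICE
    print(f"{label}: ${cost:.2f}/month ({share:.0%} of subscription price)")
```

With these made-up inputs, serving cost falls from well above the subscription price to a small fraction of it. The exact figures depend entirely on the assumed inputs; the point is only that a 35x ratio can move a bundle from unprofitable to viable.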

What OpenAI is Actually Responding To

Anthropic has been winning the part of the market that matters most for near-term developer revenue: enterprise engineers. Claude has become the default for teams building on top of AI, expanding seat by seat, terminal session by terminal session, quietly threading itself into how software actually gets made. It's methodical, it's compounding, and it's working.

OpenAI's response isn't to fight that battle on Anthropic's terms. It's to change the terrain entirely. Consumer and SMB lock-in through a bundled super app is a fundamentally different growth model than developer seat expansion through CLI-native tools. Both strategies compound. They just compound toward very different companies and very different moats.

GPT-5.5 is the engine. Atlas is the browser layer. Codex is the development layer. ChatGPT is the consumer face. Together, they form what OpenAI is betting is more defensible than any single product: a unified AI environment that replaces not just individual tools but the entire workflow context those tools live in.

The Parts That Deserve More Scrutiny

GPT-5.5 ships with what OpenAI's own safety documentation labels a "High" cybersecurity risk rating — one tier below the threshold that triggers restricted access. The API release was delayed by one day, with OpenAI citing "different safeguards" needed for serving at scale. The exact nature of those safeguards was not disclosed.

That rating is harder to move past than most coverage suggests. A year ago, no publicly released model carried that label. The fact that it's now normalized as a line in a press release rather than the lead of the story says something about how quickly our reference points are shifting.

The evaluation infrastructure problem compounds this. Independent assessment labs are currently 2–3 model versions behind the frontier. Models are shipping faster than the capacity to evaluate them can keep up. Every deployment decision being made right now is based on benchmarks that were outdated before the ink dried. The safety picture we have is, structurally, a lagging indicator, and the lag is widening.

Jakub Pachocki, OpenAI's Chief Scientist, said: "I think the last two years have been surprisingly slow." That sentence should probably get more sustained attention than it's receiving. The last two years produced GPT-4o, GPT-5, GPT-5.4, and now GPT-5.5. In his view, that was slow.

What to Actually Do With This

For developers, the useful question isn't which model to adopt — it's how to architect systems that aren't brittle when the model layer changes. It will change. The 48-day cadence makes that not a risk but a certainty. Build for swappability, because the swap is already scheduled.
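One way to read "build for swappability" is to keep application code behind a small interface so that changing the model becomes a configuration change. The sketch below is a minimal illustration under that assumption. `TextModel`, `EchoModel`, `REGISTRY`, and the model names are all hypothetical; a real backend would wrap whichever vendor SDK you use.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Protocol


class TextModel(Protocol):
    """Hypothetical minimal interface the application depends on."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Stand-in backend for illustration; a real one would call a vendor SDK."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


# Maps a config string to a factory, so swapping models is a config change.
REGISTRY: Dict[str, Callable[[], TextModel]] = {
    "model-a": lambda: EchoModel("model-a"),
    "model-b": lambda: EchoModel("model-b"),
}


def get_model(name: str) -> TextModel:
    """Look up a backend by name, failing loudly on unknown names."""
    try:
        return REGISTRY[name]()
    except KeyError:
        raise ValueError(f"unknown model: {name!r}") from None


def summarize(model: TextModel, text: str) -> str:
    # Application code sees only the TextModel interface, never a vendor API.
    return model.complete(f"Summarize: {text}")


if __name__ == "__main__":
    for name in ("model-a", "model-b"):
        print(summarize(get_model(name), "quarterly report"))
```

The design choice is that prompts, retries, and evaluation code target the interface, so a new release only requires a new registry entry and a rerun of your own regression checks.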

Knowledge workers are the primary target of the super app strategy, and most haven't registered it yet. The efficiency gains from eliminating coordination overhead — the scheduling, the summarizing, the cross-tool shuffling — are going to become visible within 12 months. The gap between people who've figured that out and people waiting for their organization's official guidance will be fairly stark.

Enterprise teams in legal, research, and finance are being named explicitly. The "co-scientist" framing from OpenAI isn't accidental — these are the industries where acceleration has the clearest dollar value, the highest tolerance for premium pricing, and the deepest switching costs once a workflow dependency locks in. If you're not actively evaluating what GPT-5.5 Pro can do for your function, assume someone adjacent to you is.

For those watching the AI race more broadly, track the cadence, not the benchmarks. When a frontier lab is shipping at 48-day intervals, any competitive assumption you formed six weeks ago is already dated.

gentic.news Analysis

This release is best understood not as a model update but as a strategic consolidation. OpenAI is responding to multi-front competitive pressure that our knowledge graph captures clearly: Anthropic's Claude Code has been mentioned in 652 prior articles, making it the dominant agentic coding tool in the terminal. Meanwhile, Google's Gemini 2.5 Pro (mentioned in 101 articles) has been making breakthroughs in reasoning. OpenAI's answer is to bundle everything (ChatGPT, Codex, and the new Atlas browser) into a super app, creating a moat that's harder to attack than any single product.

The 35x cost reduction is the structural enabler here. Our knowledge graph shows OpenAI has raised $40B+ in total funding and is targeting an IPO. The economics of bundling three frontier products at $20/month only work with the GB200 NVL72 hardware. This is a capital-intensive bet that smaller competitors cannot match.

However, the cybersecurity risk rating deserves more attention than it's getting. Our knowledge graph shows OpenAI's internal AI agents now generate research-quality questions and correct published errors, with a 1-2 year timeline for full researcher-level capabilities. The safety evaluation infrastructure is falling behind — labs are 2-3 model versions behind the frontier. When the Chief Scientist calls the last two years "slow" while shipping models at 48-day intervals with a "High" cybersecurity risk rating, the tension is real.

Frequently Asked Questions

What is GPT-5.5 and how does it compare to previous models?

GPT-5.5, codenamed "Spud," is OpenAI's latest language model released April 23, 2026, just 48 days after GPT-5.4. It outperforms Gemini 3.1 Pro and Claude Opus 4.5 on standard benchmarks, with particular strength in agentic coding, computer use, and scientific research tasks. The model runs on NVIDIA's GB200 NVL72 hardware, achieving 35x lower cost per million tokens compared to the previous generation.

What is OpenAI's super app strategy?

OpenAI is merging ChatGPT, Codex, and a new AI browser called Atlas into a single desktop experience. The vision is a unified interface where users describe complex multi-step tasks (research, drafting, spreadsheet creation, emailing, scheduling) and the system handles all the handoffs without tab-switching or copy-pasting. This mirrors the super app model of WeChat in China and Grab and Gojek in Southeast Asia.

What are the safety concerns with GPT-5.5?

GPT-5.5 carries OpenAI's own "High" cybersecurity risk rating, one tier below restricted access. The API release was delayed by one day for "different safeguards" that were not disclosed. Independent evaluation labs are 2-3 model versions behind the frontier, meaning safety assessments are structurally lagging behind deployment decisions.

How does GPT-5.5 affect the competitive landscape against Anthropic and Google?

Anthropic's Claude Code has become the default for enterprise developers, while Google's Gemini 2.5 Pro has made reasoning breakthroughs. OpenAI's response is not to compete on their terms but to change the game entirely with a bundled super app that targets consumer and SMB lock-in. The 48-day release cadence itself is a competitive weapon — any assumption formed six weeks ago is already dated.