What Happened

A team of researchers with a strong academic pedigree—160+ publications in Nature and ICLR—has introduced a new AI architecture called Large Memory Models (LMMs). According to a post by @kimmonismus, LMMs are "designed specifically for how human memory works" and represent a different paradigm from retrieval-augmented generation (RAG) or vector search.

The founders have reportedly closed their Harvard lab to focus on building this architecture, signaling a serious commitment to commercializing the research.

Context

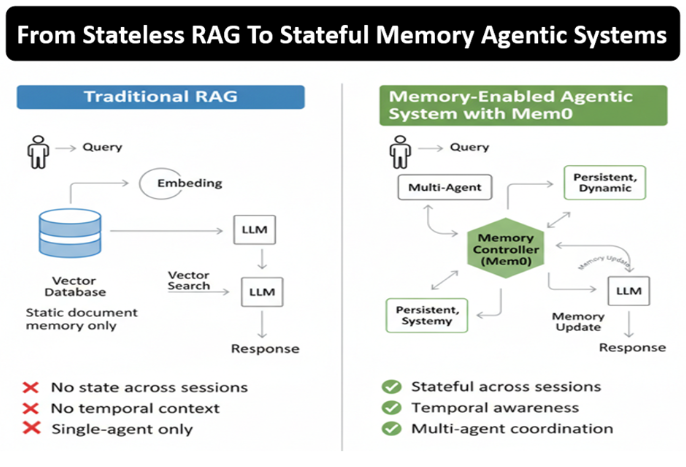

Traditional large language models (LLMs) rely on either parametric knowledge (stored in weights) or external retrieval mechanisms like RAG and vector databases to access information. RAG, popularized by systems like LangChain and LlamaIndex, retrieves relevant documents from a vector store and feeds them into the prompt context. Vector search, powered by embeddings, finds similar items based on semantic similarity.

Large Memory Models aim to replace this two-step process with a single architecture that natively stores and retrieves information in a way analogous to human memory—potentially more efficient, context-aware, and capable of handling complex reasoning over long-term knowledge.

Why It Matters

If LMMs deliver on their promise, they could significantly reduce the complexity and cost of building knowledge-intensive AI applications. Current RAG systems require maintaining vector databases, managing embeddings, and handling retrieval quality issues (e.g., low recall, irrelevant chunks). An architecture that internalizes memory could simplify deployment and improve performance on tasks requiring deep domain knowledge.

The team's strong publication record—particularly in top venues like Nature and ICLR—lends credibility to the approach. However, without published benchmarks or technical details, it's too early to assess whether LMMs outperform existing methods.

What to Watch

- Technical details: Expect a paper or technical report with architecture specifics, training methodology, and benchmark results against RAG and fine-tuned LLMs.

- Use cases: LMMs could be particularly impactful in legal, medical, and scientific domains where accurate, long-term memory is critical.

- Competition: Other memory-augmented architectures (e.g., Memory-Augmented Neural Networks, Differentiable Neural Computers) have existed but not achieved widespread adoption. LMMs may differ in scalability or training efficiency.

Frequently Asked Questions

What are Large Memory Models?

Large Memory Models (LMMs) are a new AI architecture designed to mimic human memory processes, potentially replacing RAG and vector search for knowledge retrieval in AI systems.

How do LMMs differ from RAG?

RAG retrieves external documents via vector search and feeds them into the prompt context. LMMs aim to internalize memory directly into the model architecture, eliminating the need for separate retrieval systems.

Who is behind Large Memory Models?

The team includes researchers with 160+ publications in Nature and ICLR, who closed their Harvard lab to commercialize the technology. Specific names have not been disclosed yet.

When will LMMs be available?

No release date has been announced. The team is likely preparing a paper or product launch. Follow @kimmonismus for updates.

gentic.news Analysis

This announcement comes at a time when the AI community is increasingly questioning the scalability and reliability of RAG systems. Our previous coverage of [RAG vs. fine-tuning trade-offs] highlighted that many production systems struggle with retrieval quality, especially for ambiguous queries. A native memory architecture could address these pain points.

The team's decision to leave Harvard suggests this is not just an academic exercise—they see commercial potential. This follows a pattern we've observed with other top researchers spinning out companies (e.g., Anthropic, Mistral). The 160+ publications in Nature and ICLR are a strong signal of technical depth.

However, the AI memory space is crowded. Competitors like MemGPT (which uses virtual context management) and various memory-augmented LLM startups are also vying for attention. LMMs will need to demonstrate clear, reproducible gains on standard benchmarks (e.g., HotpotQA, FEVER, or long-context tasks) to gain traction.

We'll be watching for the technical paper—expected within weeks—which should clarify whether LMMs are a genuine breakthrough or an incremental improvement. For now, the architecture is intriguing but unproven.