On April 15, 2026, Arthur Mensch, co-founder and CEO of Mistral AI, posted a cryptic three-word message on X (formerly Twitter): "new model tomorrow!?!"

The tweet, devoid of any technical details, links, or documentation, is a classic pre-announcement tactic from the Paris-based AI lab, known for its rapid-fire release schedule and competitive open-weight models.

What Happened

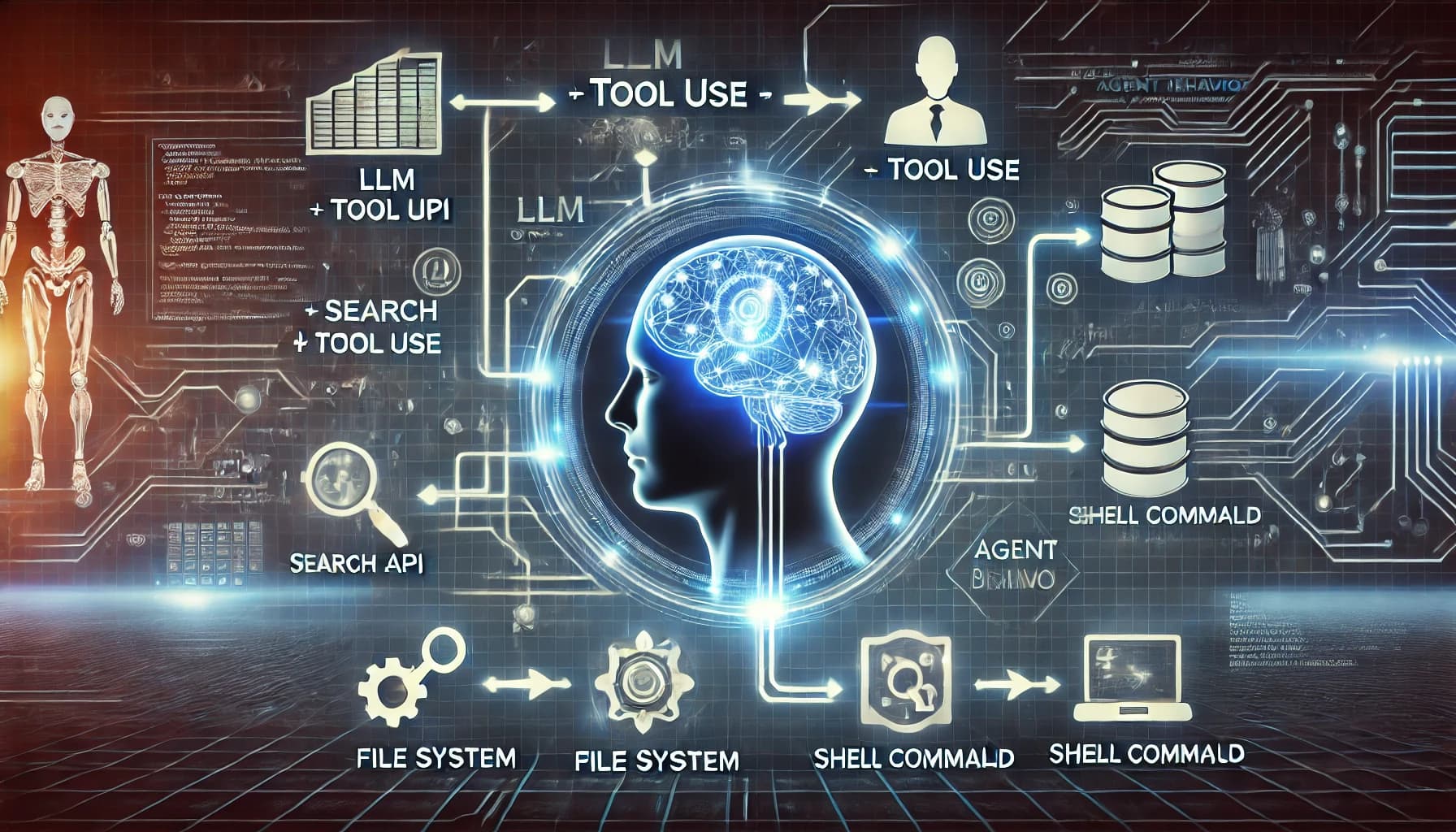

At approximately 10:47 AM UTC, Arthur Mensch's verified account (@mweinbach) posted the message. The tweet carried no further context or images, and Mensch posted no follow-up replies explaining what kind of model it is: a new large language model (LLM), a mixture-of-experts (MoE) architecture, a code model, or an update to an existing family such as Mistral Large.

Context

Mistral AI has built its reputation on a strategy of frequent, sometimes unannounced, model releases. Their launches are often preceded by minimal marketing, with technical details and model weights appearing directly on their platform or Hugging Face. This approach contrasts with the longer, more orchestrated launch cycles of competitors like OpenAI, Anthropic, and Google DeepMind.

Previous major releases from Mistral include:

- Mistral 7B (September 2023): Their first openly released model, setting a performance benchmark for its size.

- Mixtral 8x7B (December 2023): A sparse mixture-of-experts model that outperformed Llama 2 70B and GPT-3.5 on several benchmarks.

- Mistral Large (February 2024): Their first flagship model to rival top proprietary models, offered via their La Plateforme API.

- Codestral (May 2024): A specialized code generation model.

A "new model tomorrow" tweet fits squarely within this established pattern of generating anticipation through brevity.

What to Expect

Based on Mistral's history and the competitive landscape in April 2026, the new model could be one of several possibilities:

- A Next-Generation MoE Model: An evolution of the Mixtral architecture, potentially with more experts, better routing, or improved efficiency.

- A Scaling Play: A significantly larger dense or MoE model aimed at pushing the state of the art for open-weight models, possibly targeting benchmarks set by models like Meta's Llama 3.1 405B or Google's Gemma 2 27B.

- A Specialized Model: A new offering focused on a specific vertical like reasoning, long-context processing, or multimodal capabilities.

- An Efficiency Model: A smaller, highly optimized model for edge deployment or cost-sensitive applications.
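Several of these scenarios hinge on how an MoE model routes tokens. Mistral described Mixtral 8x7B as sending each token to 2 of its 8 experts per layer and mixing their outputs with softmax-normalized gate weights. The sketch below illustrates that top-2 gating in plain Python. The function names, toy experts, and router logits are hypothetical, and production implementations operate on batched tensors with learned routers and load-balancing terms, none of which is shown here.

```python
import math

def top_k_route(router_logits, k=2):
    """Pick the k highest-scoring experts for one token and renormalize
    their scores with a softmax taken over just those k logits."""
    # Indices of the k largest logits (ties broken by lower index first).
    chosen = sorted(range(len(router_logits)),
                    key=lambda i: (-router_logits[i], i))[:k]
    # Softmax restricted to the chosen experts; subtract the max for stability.
    m = max(router_logits[i] for i in chosen)
    exps = [math.exp(router_logits[i] - m) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]

def moe_layer(x, router_logits, experts, k=2):
    """Run only the selected experts and sum their outputs, weighted by gate."""
    out = [0.0] * len(x)
    for idx, weight in top_k_route(router_logits, k):
        y = experts[idx](x)
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out

if __name__ == "__main__":
    # Eight toy "experts": expert s simply scales its input by s + 1.
    experts = [lambda v, s=s: [(s + 1) * vi for vi in v] for s in range(8)]
    logits = [0.1, 2.0, -1.0, 0.5, 2.0, 0.0, -0.3, 1.0]  # made-up router scores
    print(top_k_route(logits))
    print(moe_layer([1.0, 2.0], logits, experts))
```

With 8 experts and k=2, only two of the eight expert blocks execute for each token, which is why an MoE model's active parameter count per token sits well below its total parameter count.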

Given the lack of detail, the AI community's response will be one of watchful waiting until the model's details, weights (if open), and benchmark results are published.

gentic.news Analysis

Arthur Mensch's tweet is less an announcement than a pressure-release valve for the intense, multi-front competition characterizing the open-weight model space in early 2026. Mistral's strategy has consistently been to iterate quickly and publicly, using community feedback and adoption as a forcing function for improvement. This tweet signals they are ready to fire another salvo.

The move takes direct aim at Meta's dominance in open-weight models. Following Meta's release of the Llama 3 series in 2024 and subsequent updates, the bar for open models has risen significantly in scale, capability, and developer trust. For Mistral to maintain its relevance and mindshare as a leading European AI lab, especially after securing massive funding rounds, it must show it can not only match these benchmarks but periodically leapfrog them. A "new model" that fails to show clear, measurable advances over Llama 3.1 or Google's Gemma 2 would be perceived as a step backward.

Furthermore, this tease comes amid a broader industry trend of model release compression. The cycle from research paper to trained model to public release has shortened dramatically. Where it once took years, labs like Mistral, Alibaba's Qwen team, and 01.AI now operate on quarterly or even monthly cadences for significant updates. This tweet is a symptom of that accelerated pace. For developers, this is a double-edged sword: it means constant access to improving tools, but also a challenging environment for stable integration, as the state-of-the-art model for a given task or price point can change monthly.

Frequently Asked Questions

What time will the new Mistral model be released?

Mistral AI has not specified a release time. Based on past releases following similar tweets, the model and/or announcement could drop at any point during the day (April 16, 2026, UTC). Releases have sometimes landed during the European morning, but there is no fixed schedule.

Will the new Mistral model be open source?

Mistral AI employs a hybrid strategy. Historically, its smaller, foundational models (such as Mistral 7B and Mixtral 8x7B) have been released with open weights under the permissive Apache 2.0 license. Its larger, more capable flagship models (such as Mistral Large) have been made available via API first, with the potential for limited open releases later. The tweet does not indicate which path this new model will take.

How can I access the new Mistral model when it launches?

If the model follows the pattern of their open-weight releases, it will likely be made available for download on their official Hugging Face page (https://huggingface.co/mistralai) and via their La Plateforme API (https://console.mistral.ai). For API access, you will need a Mistral platform account.
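For API users, one low-effort way to spot the launch is to poll the model list and compare it with a snapshot taken beforehand. The sketch below uses only Python's standard library and assumes Mistral's `GET https://api.mistral.ai/v1/models` endpoint with Bearer-token authentication, plus an API key stored in a `MISTRAL_API_KEY` environment variable. The `known` snapshot is a hypothetical example, and the new model's ID is not known in advance.

```python
import json
import os
import urllib.request

MODELS_URL = "https://api.mistral.ai/v1/models"  # assumed model-listing endpoint

def parse_model_ids(payload):
    """Extract sorted model IDs from a list-models JSON payload."""
    return sorted(m["id"] for m in payload.get("data", []))

def fetch_model_ids(api_key):
    """Return the model IDs visible to this API key (makes a network call)."""
    req = urllib.request.Request(
        MODELS_URL,
        headers={"Authorization": f"Bearer {api_key}",
                 "Accept": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return parse_model_ids(json.load(resp))

def new_models(current_ids, known_ids):
    """IDs present now that were absent from a previously saved snapshot."""
    return sorted(set(current_ids) - set(known_ids))

if __name__ == "__main__":
    api_key = os.environ.get("MISTRAL_API_KEY")
    if api_key:
        # Hypothetical snapshot saved before the launch.
        known = ["mistral-large-latest", "mistral-small-latest"]
        for model_id in new_models(fetch_model_ids(api_key), known):
            print("new:", model_id)
```

Keeping the parsing separate from the network call makes the diff logic easy to test offline; open-weight releases can be tracked the same way by watching the `mistralai` organization on Hugging Face.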

What should I look for to evaluate this new model?

Key details to scrutinize upon release include: the model's architecture (dense vs. MoE, parameter count), the context window length, the training dataset composition, benchmark scores on standard evaluations (MMLU, GSM8K, HumanEval, etc.), licensing terms for use and distribution, and any stated performance vs. cost metrics for API access.
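A model's per-token price only becomes meaningful once it is applied to your own traffic. The sketch below assumes pricing quoted in USD per million input and output tokens, which is how Mistral and most providers list API rates. It estimates per-request and monthly spend so an incumbent model can be compared with a new release. The prices and workload figures are placeholders, not Mistral's actual rates.

```python
from dataclasses import dataclass

@dataclass
class Pricing:
    """API prices in USD per million tokens (values below are placeholders)."""
    input_per_m: float
    output_per_m: float

def request_cost(pricing, input_tokens, output_tokens):
    """Cost in USD of one request with the given token counts."""
    return (input_tokens * pricing.input_per_m
            + output_tokens * pricing.output_per_m) / 1_000_000

def monthly_cost(pricing, requests_per_day, input_tokens, output_tokens, days=30):
    """Projected spend for a steady workload of identical requests."""
    return request_cost(pricing, input_tokens, output_tokens) * requests_per_day * days

if __name__ == "__main__":
    # Hypothetical prices for an incumbent model and a new release.
    incumbent = Pricing(input_per_m=2.0, output_per_m=6.0)
    candidate = Pricing(input_per_m=0.5, output_per_m=1.5)
    for name, p in [("incumbent", incumbent), ("candidate", candidate)]:
        print(f"{name}: ${monthly_cost(p, 10_000, 1_200, 400):,.2f}/month")
```

Projected spend is only half of the comparison: a cheaper model is worth switching to only if it also clears your quality bar on your own evaluation tasks.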