A developer has built and shared a system designed to give AI-powered coding assistants a long-term memory, addressing a core limitation in their current utility. The project, dubbed an "LLM Wiki second brain," wires a coding agent directly into a dynamic, project-specific knowledge base.

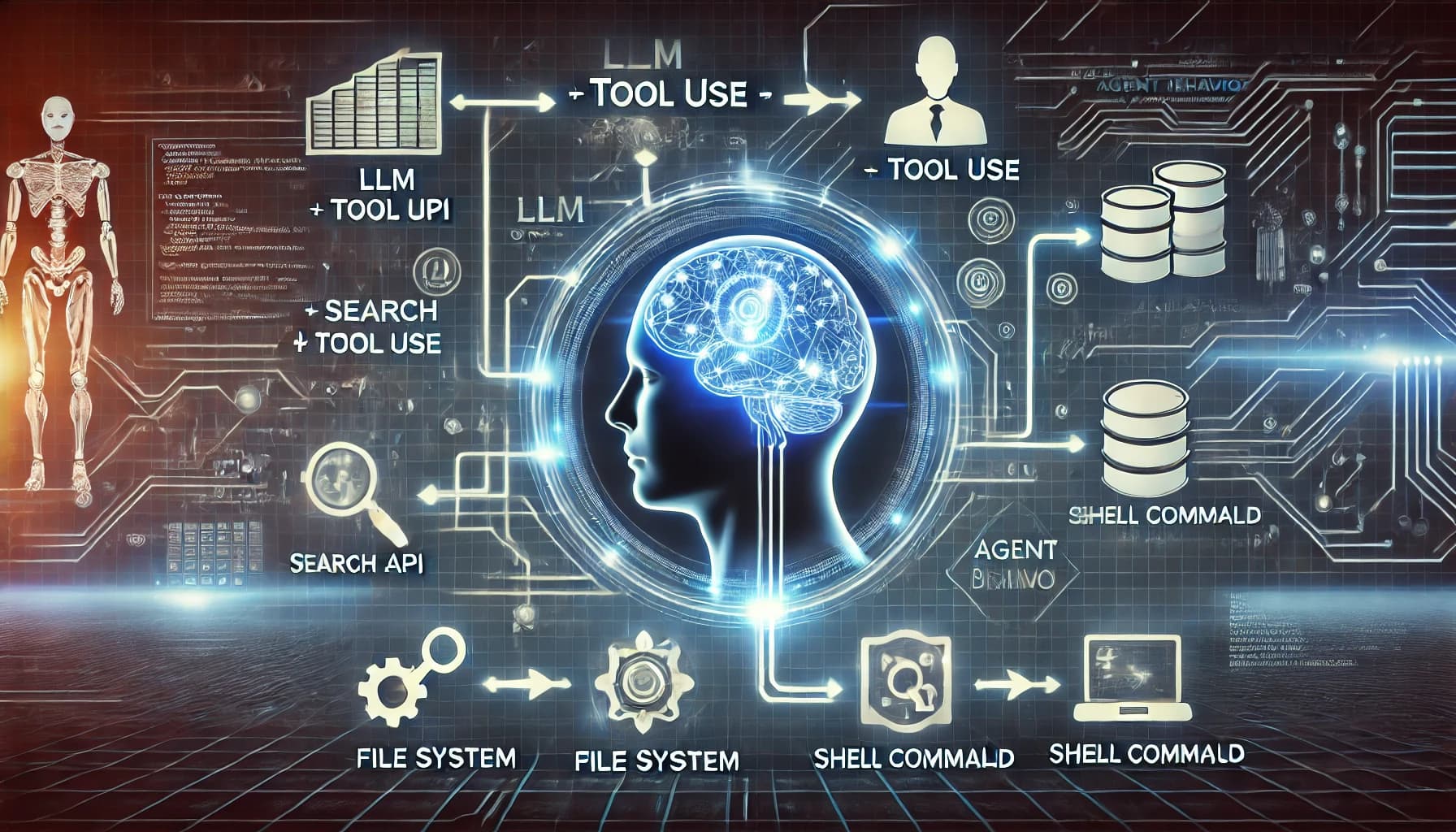

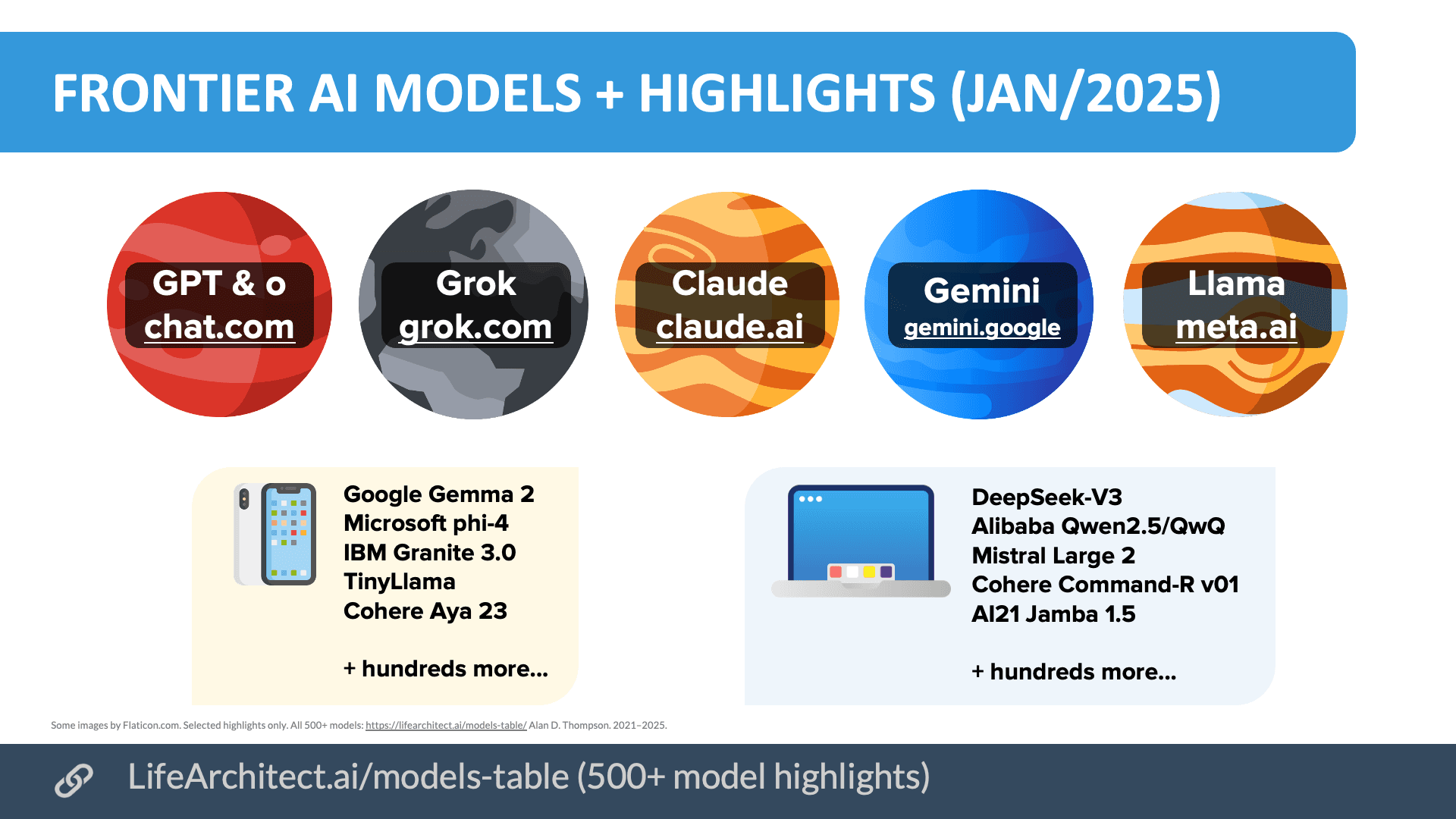

The core idea is to move beyond the standard, stateless interaction where an agent like GitHub Copilot, Cursor, or a custom GPT operates with only the context provided in the current chat window or open files. Instead, this system creates a persistent, living repository of a project's structure, architectural decisions, coding patterns, and documentation that the agent can continuously reference and update.

What It Does

The developer fed the system their frontend codebase and relevant source materials. The agent then uses this information to build and maintain its own internal wiki. This process creates a feedback loop: the agent consults the wiki for context before generating code, and then updates the wiki with new decisions or patterns it implements or learns from the developer.

The reported outcome is "much smarter and consistent outputs every session," as the agent no longer "forgets" project-specific conventions or previously made decisions from one coding session to the next. The developer states the tool is agent-agnostic and "works with any AI coding agent you want."

The Technical Problem It Solves

Current LLM-based coding agents suffer from a context window limitation. Even with large 128K or 200K token windows, an entire complex codebase and its history cannot fit into a single prompt. This leads to:

- Inconsistency: The agent might suggest a solution that contradicts an architectural pattern established earlier in the project.

- Re-learning: In each new session, the agent lacks the full project history and must be re-prompted with key context.

- Fragmented Knowledge: Project knowledge remains siloed in developer heads, READMEs, or scattered comments, not integrated into the agent's operational workflow.

This "LLM Wiki" acts as a retrieval system, dynamically fetching the most relevant pieces of project knowledge (code snippets, decision logs, component relationships) to inject into the agent's context window for each task, effectively giving it a form of long-term, associative memory.

How It Likely Works (Inference)

While the source tweet does not provide implementation details, a system like this would typically involve:

- Ingestion & Chunking: Codebase files, documentation, and possibly commit messages are parsed and broken into semantically meaningful chunks.

- Embedding & Indexing: These chunks are converted into vector embeddings and stored in a vector database (like Pinecone, Weaviate, or Chroma).

- Retrieval-Augmented Generation (RAG): When the coding agent receives a query (e.g., "add a new API endpoint"), the system queries the vector index for the most relevant chunks related to existing API patterns, authentication middleware, and data models.

- Context Augmentation: These retrieved chunks are formatted and prepended to the user's prompt, giving the agent immediate, project-specific context.

- Knowledge Maintenance: The system likely includes a way to update the index, either manually, through automated parsing of new commits, or via the agent's own summaries of its actions.

gentic.news Analysis

This project is a hands-on implementation of a trend we've been tracking: the shift from using LLMs in isolation to connecting them to external, updatable data sources. It directly applies the Retrieval-Augmented Generation (RAG) pattern, which has become dominant for knowledge-intensive tasks, to the specific domain of software development. This is a natural evolution beyond the basic code completion that launched tools like GitHub Copilot.

The developer's approach aligns with a broader industry movement towards agentic workflows and persistent memory. As we covered in our analysis of Devon and other autonomous coding agents, a major bottleneck is maintaining context across long-running tasks. Projects like this "LLM Wiki" are grassroots solutions to that problem, attempting to move agents from single-session tools to persistent project collaborators.

However, the key challenge this project highlights—and doesn't yet solve at scale—is knowledge curation and hygiene. A living wiki that an AI agent can update autonomously risks accumulating contradictions, outdated patterns, or low-quality entries. The next step for systems like this will be implementing validation layers, perhaps through human-in-the-loop review or self-correcting mechanisms, to ensure the "second brain" remains a reliable source of truth and doesn't degenerate into a garbled memory. Its success hinges on the quality of the retrieval and the update protocol more than the concept itself.

Frequently Asked Questions

Can I use this LLM Wiki tool right now?

The tool appears to be a personal project shared by a developer on X (formerly Twitter). As of now, there is no indication it is a publicly released product or open-source repository. The post serves as a proof-of-concept demonstration of the architecture.

How is this different from just giving the AI my whole codebase?

Most AI coding agents can only process a limited number of open files or context tokens at once (e.g., 10-20 files). A large codebase can contain thousands of files. This system uses semantic search to find and supply only the most relevant pieces of information from the entire code history to the agent, making efficient use of the context window.

Does this work with GitHub Copilot or Cursor?

The developer states it "works with any AI coding agent you want." In practice, this would likely require the system to act as a middleware layer that sits between the developer and the agent (like Copilot), augmenting prompts before they are sent. Deeper integration might be needed for full autonomy.

What's the biggest technical hurdle for a system like this?

The main challenge is creating accurate and semantically meaningful chunks from code. Code has dense, cross-referential structure. Simply splitting files by lines or tokens can destroy context. Effective systems need to understand code syntax to chunk by functions, classes, or modules and then build a knowledge graph of how those pieces relate.