Recent research highlighted by AI researcher Omar Sar challenges a common practice in AI-assisted development. The study suggests that packing AGENTS.md files with extensive context, long assumed to improve AI performance, may actually be counterproductive.

The AGENTS.md Conundrum

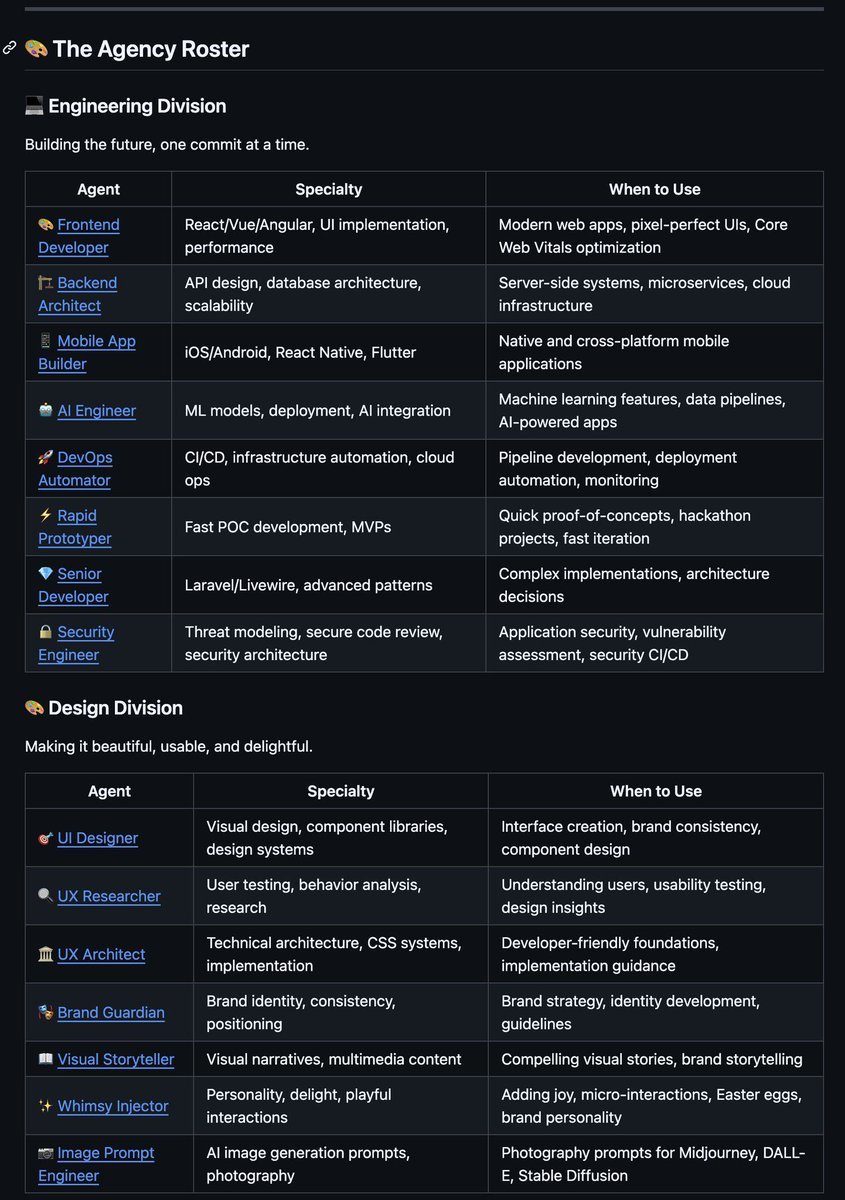

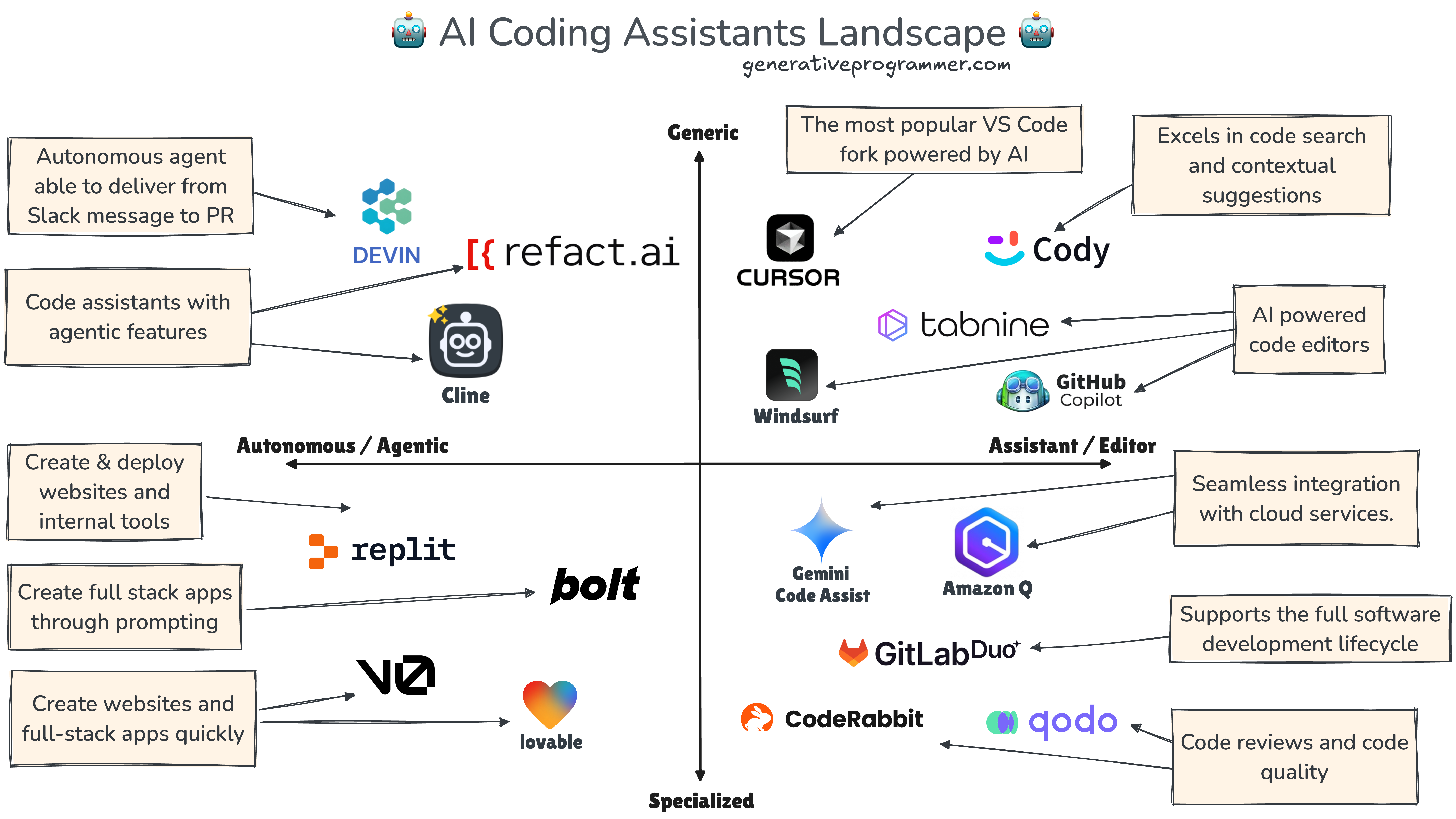

AGENTS.md files have become a standard fixture in software repositories, serving as instruction manuals specifically designed for AI coding agents like GitHub Copilot, Cursor, and other AI-powered development tools. These files typically contain project-specific guidelines, coding standards, architectural patterns, and other contextual information intended to help AI assistants understand the project's unique requirements and constraints.

Developers have generally operated under the assumption that more detailed AGENTS.md files would naturally lead to better AI performance. The logic seemed sound: provide more context, get more accurate and relevant code suggestions. However, the new research suggests this intuitive approach may be flawed.

The Research Findings

The study evaluated how different amounts and types of context in AGENTS.md files affect AI coding agent performance across various tasks. Researchers tested multiple AI models with AGENTS.md files of varying lengths and complexity, measuring metrics including code correctness, relevance to project requirements, and overall efficiency.

Surprisingly, the results showed a non-linear relationship between context volume and performance. While some context improved results compared to no context at all, excessive information led to diminishing returns and, in some cases, significant performance degradation. The AI agents appeared to struggle with information overload, sometimes focusing on less relevant details or becoming confused by contradictory or overly complex instructions.

Why More Isn't Always Better

Several factors contribute to this counterintuitive finding. First, modern AI models have limited context windows, meaning they can only process a certain amount of information at once. A lengthy AGENTS.md file competes for that space with the task description and the code itself, and the AI may prioritize less important details or fail to recognize critical patterns.
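To get a feel for how much of a context window an AGENTS.md file might occupy, the following minimal sketch estimates its token count. The four-characters-per-token ratio is a rough rule of thumb for English text, not a property of any particular model, and the function name and the 128,000-token window are hypothetical values chosen only for illustration.

```python
def estimate_context_share(text: str, window_tokens: int = 128_000,
                           chars_per_token: float = 4.0) -> tuple[int, float]:
    """Return (estimated tokens, fraction of the assumed window used).

    Assumption: roughly 4 characters per token. Real tokenizers differ
    by model, so treat the result as an order-of-magnitude estimate.
    """
    est_tokens = round(len(text) / chars_per_token)
    return est_tokens, est_tokens / window_tokens


# Stand-in for the contents of a long AGENTS.md file.
sample = "Use pytest for all tests.\n" * 200
tokens, share = estimate_context_share(sample)
print(f"~{tokens} tokens, {share:.2%} of the assumed window")
```

For exact counts, the heuristic would need to be replaced with the tokenizer of the model actually in use.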

Second, developers often leave redundant, contradictory, or outdated information in these files as projects evolve. Unlike human readers, who can filter and prioritize information, AI agents may treat all content as equally important, leading to suboptimal decision-making.

Third, the structure and organization of information matters significantly. Well-organized, concise AGENTS.md files with clear priorities outperformed longer, disorganized files even when both contained similar information.

Practical Implications for Developers

This research has immediate practical implications for development teams using AI coding assistants:

- Prioritize quality over quantity: Focus on including only the most essential, up-to-date information in AGENTS.md files

- Structure information strategically: Use clear headings, bullet points, and prioritization to help AI agents understand what matters most

- Regular maintenance: Treat AGENTS.md files as living documents that require regular review and pruning

- Test and iterate: Experiment with different levels of detail to find the optimal balance for your specific project and AI tools
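As one possible way to support the maintenance and pruning steps above, the sketch below reports two simple signals for an AGENTS.md file: its total word count against a budget, and any repeated lines. The function name `audit_agents_md` and the 500-word default budget are hypothetical and not taken from the study; the right threshold would need to be found by experiment.

```python
import re
from collections import Counter


def audit_agents_md(text: str, max_words: int = 500) -> dict:
    """Report simple size and redundancy signals for an AGENTS.md file.

    `max_words` is an arbitrary illustrative budget, not a recommended value.
    """
    lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
    # Normalize case and whitespace so near-identical lines count as repeats.
    normalized = [re.sub(r"\s+", " ", ln.lower()) for ln in lines]
    counts = Counter(normalized)
    duplicates = sorted(ln for ln, n in counts.items() if n > 1)
    word_count = sum(len(ln.split()) for ln in lines)
    return {
        "word_count": word_count,
        "over_budget": word_count > max_words,
        "duplicate_lines": duplicates,
    }


# Hypothetical file contents with one instruction repeated.
sample = """# Testing
- Run tests with pytest
- Run tests with  pytest
# Style
- Use type hints
"""
print(audit_agents_md(sample, max_words=10))
```

A check like this only catches verbatim repetition. Contradictory or outdated instructions still require a human review.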

The Broader Context

This discovery comes at a time when AI coding assistants are becoming increasingly integrated into development workflows. According to recent surveys, over 50% of professional developers now use AI coding tools regularly, with that number expected to grow significantly in the coming years. The efficiency of these tools directly impacts development velocity, code quality, and ultimately, business outcomes.

The research also highlights a broader trend in AI-human interaction: the need for optimized communication protocols. Just as humans benefit from clear, concise instructions, AI systems appear to perform best with carefully curated information rather than information dumps.

Future Research Directions

The study opens several important avenues for future research:

- Optimal context length: Determining the ideal amount of context for different types of projects and AI models

- Information prioritization algorithms: Developing methods to automatically prioritize and structure information for AI consumption

- Adaptive context systems: Creating systems that dynamically adjust the information provided based on the specific coding task

- Cross-project patterns: Identifying common patterns in effective AGENTS.md files across different types of software projects
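To make the adaptive context idea above more concrete, here is a minimal sketch that splits an AGENTS.md file into sections and keeps only those sharing words with the current task. This is an illustration under stated assumptions, not a method from the study: the function names are hypothetical, the file is assumed to use `#`-style markdown headings, and plain word overlap stands in for whatever relevance measure a real system would use.

```python
import re


def split_sections(text: str) -> dict[str, str]:
    """Split markdown text into {heading: body} using '#'-style headings."""
    sections: dict[str, str] = {}
    current = "preamble"  # holds any text that appears before the first heading
    for line in text.splitlines():
        match = re.match(r"^#{1,6}\s+(.*)", line)
        if match:
            current = match.group(1).strip()
            sections.setdefault(current, "")
        else:
            sections[current] = sections.get(current, "") + line + "\n"
    return sections


def select_sections(text: str, task: str, top_k: int = 1) -> list[str]:
    """Rank sections by how many words they share with the task description."""
    task_words = set(re.findall(r"[a-z]+", task.lower()))
    scored = []
    for heading, body in split_sections(text).items():
        section_words = set(re.findall(r"[a-z]+", (heading + " " + body).lower()))
        scored.append((len(task_words & section_words), heading))
    # Highest overlap first; drop sections with no shared words at all.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [heading for score, heading in scored[:top_k] if score > 0]


# Hypothetical AGENTS.md contents and task description.
doc = "# Testing\nRun pytest before every commit.\n# Database\nUse migrations for schema changes.\n"
print(select_sections(doc, "fix the failing pytest run"))
```

Word overlap is crude, since common words such as "for" can produce spurious matches. It is used here only to keep the example self-contained.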

Conclusion

The revelation that more context doesn't always mean better performance represents a significant shift in how developers should approach AI-assisted coding. Rather than treating AGENTS.md files as comprehensive documentation dumps, developers should view them as precision tools that require careful crafting and maintenance.

As AI coding assistants continue to evolve, understanding how to communicate effectively with these systems will become an increasingly important skill for developers. This research offers early evidence-based guidance for optimizing these interactions, potentially leading to improvements in development efficiency and code quality.

The findings also serve as a reminder that our assumptions about AI-human interaction need continuous testing and refinement. What seems intuitive to humans may not align with how AI systems actually process information, highlighting the importance of empirical research in this rapidly developing field.

Source: Research highlighted by Omar Sar (@omarsar0) evaluating AGENTS.md files for coding agents