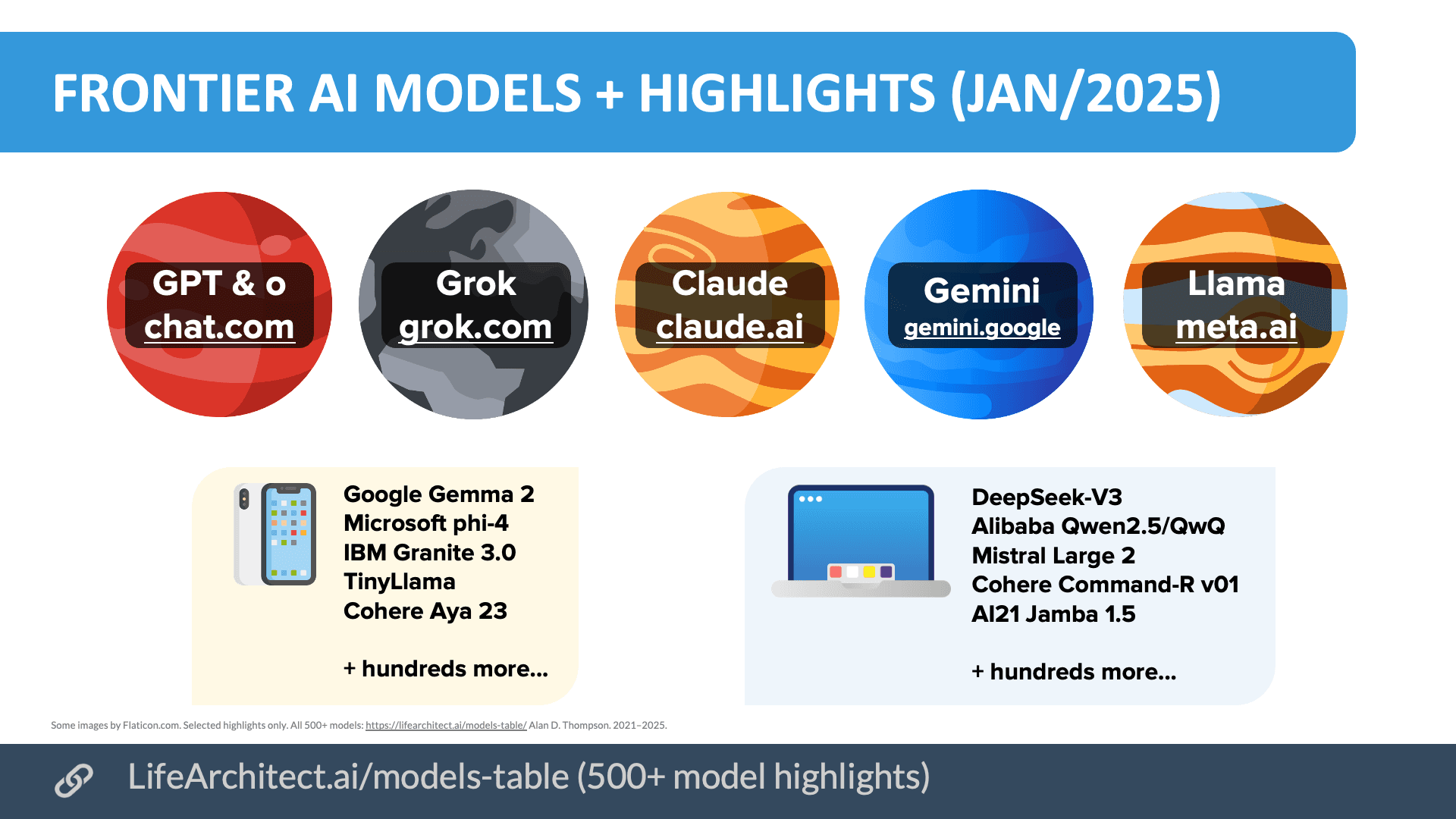

An analysis of the current frontier AI landscape reveals a clear and persistent hierarchy: US-based, closed-source models from Google, OpenAI, and Anthropic continue to set the pace, while the best Chinese contenders remain an estimated 7-9+ months behind. The most significant shift is Meta's re-entry into the high-stakes race with a new closed-source model, signaling a potential strategic pivot.

The Current Frontier Hierarchy

According to the analysis, the top tier remains firmly occupied by Google, OpenAI, and Anthropic. These US companies are described as "well ahead of the pack" and may even be showing early signs of recursive self-improvement—a theoretical milestone where AI systems can significantly enhance their own capabilities, potentially accelerating progress.

Meta has made a notable move by releasing a new closed-source model. While the model itself is assessed as "not-quite-frontier," its mere existence and the company's approach suggest Meta is seriously attempting to rejoin the elite competition. This follows a period where Meta's public focus was heavily on its open-weight Llama family. All other US players are described as "far behind" this leading group.

xAI, Elon Musk's company, has reportedly "fallen from frontier status for now," though with promises of a return. Mistral, once a European hopeful challenging the frontier, also appears to have fallen from that status in this assessment.

The Chinese Contender Landscape

On the Chinese front, several major players are identified as still being "very much in the race":

- Alibaba (Qwen series)

- Moonshot (Kimi)

- MiniMax

- Xiaomi (MiMo)

- DeepSeek

- Zhipu AI (GLM series)

However, a critical gap persists: the best Chinese models are estimated to be 7-9+ months behind the currently released US closed-source models. This lag refers to publicly available models, not internal prototypes. The analysis also notes that commitment to "open weights" (releasing model weights publicly) appears to be slipping for some Chinese players, specifically Xiaomi and Alibaba. This could indicate a strategic shift toward the closed-source approach embraced by the US leaders.

What This Means for the AI Race

The analysis paints a picture of a bifurcated frontier. The US maintains its lead through a closed-source approach that concentrates resources and potentially accelerates proprietary advancements. China fields a larger number of credible competitors, but its best released models trail by an estimated 7-9+ months. Meta's re-entry as a closed-source player underscores the perceived competitive necessity of this approach for staying at the cutting edge. The retreat from open-weight commitments by some players suggests the intense competition is making openness a luxury fewer can afford.

gentic.news Analysis

This snapshot aligns with the competitive dynamics we've tracked throughout 2025 and into 2026. The sustained lead of the US closed-source trio (Google/DeepMind, OpenAI, Anthropic) is not new, but the mention of potential "recursive self-improvement" signs is a significant and rare claim from an observer. If even partially true, it would explain the widening gap and validate the massive compute investments these companies have made, as we covered in our analysis of the "Compute Drought" in late 2025.

Meta's pivot is the most tactically interesting development. For years, Meta's strategy, led by the Llama series, has been to leverage open-weight models to build ecosystem influence and catch up in research. This new closed-source model suggests internal benchmarking may have shown that approach hitting a ceiling against the frontier. It's a direct competitive response to the leaders and contradicts the earlier 2025 trend of increasing openness. We predicted this potential reversal in our article "The Closing of the Open AI Model Era," noting that commercial and safety pressures would push frontier players toward closure.

The 7-9+ month lag for Chinese models is a consistent estimate, but it masks a crucial detail: this gap is against released US models. The real, internal gap at the research frontier may be different. Chinese firms like DeepSeek have shown remarkable efficiency, and Alibaba's Qwen 2.5 series was highly competitive on many benchmarks. Their challenge is converting research prowess into a stable, leading-edge product pipeline. The slipping commitment to open weights, especially from a hardware-first company like Xiaomi, indicates they are prioritizing competitive differentiation over ecosystem building—a sobering sign for the global open-source community.

The fall of xAI and Mistral from frontier status highlights the extreme capital and talent intensity of this race. It is no longer a field for well-funded startups; it is a contest among corporate and national giants with near-unlimited resources.

Frequently Asked Questions

Who are the current leading AI model companies?

The current frontier leaders are three US-based companies with closed-source models: Google (Gemini and other models), OpenAI (GPT series), and Anthropic (Claude series). The analysis describes them as "well ahead of the pack."

How far behind are Chinese AI models?

The analysis estimates that the best publicly available Chinese AI models from companies like Alibaba, DeepSeek, and Zhipu AI are 7 to 9 months or more behind the currently released models from the leading US companies.

Why is Meta releasing a closed-source AI model now?

Meta, which previously focused on open-weight models like Llama, has released a new closed-source model in an attempt to re-enter the frontier AI race, although the model itself is assessed as "not-quite-frontier." This strategic pivot suggests the company believes a closed-source approach is necessary to compete directly with Google, OpenAI, and Anthropic.

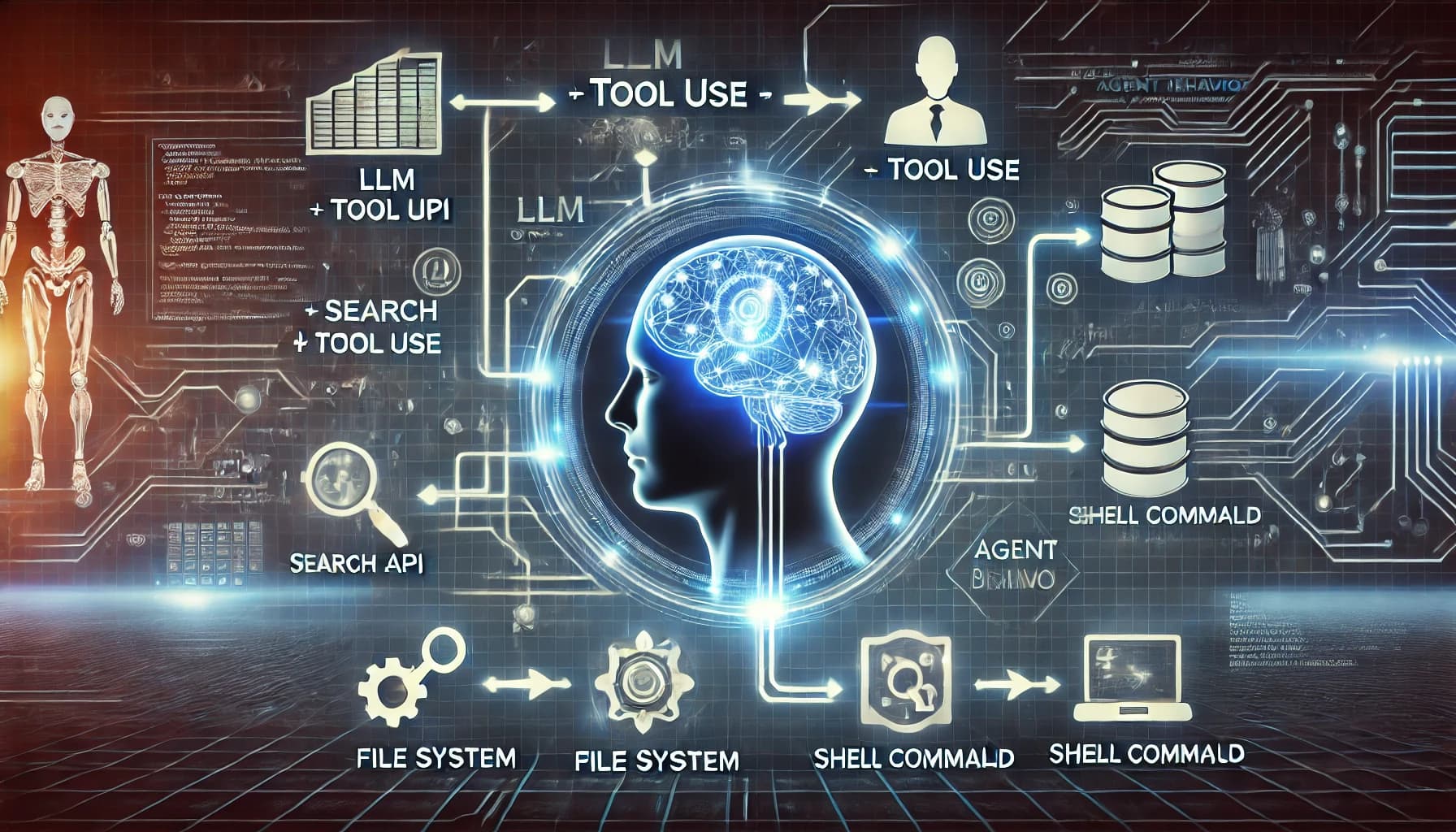

What does "recursive self-improvement" mean for AI?

Recursive self-improvement refers to the theoretical ability of an AI system to significantly enhance its own capabilities, design better versions of itself, or improve its own training processes. Signs of this in frontier models would indicate a potential acceleration in AI advancement, moving beyond human-paced research cycles.