What Happened

A new, unannounced AI model named Hunter Alpha has appeared on the model aggregation platform OpenRouter. A Reuters report first noted the model, and AI researcher Rohan Paul subsequently highlighted that report on X (formerly Twitter).

The model's listing provides no information about its developer, architecture, capabilities, or training data. Its sudden appearance on a public platform used by developers to compare and access various AI models has generated immediate speculation within the technical community.

Context & Speculation

OpenRouter provides a unified API and price comparison across numerous large language models (LLMs), including offerings from OpenAI, Anthropic, and Google, as well as open-weight models from developers such as Meta. Models are typically listed with clear attribution to the organization that developed them.

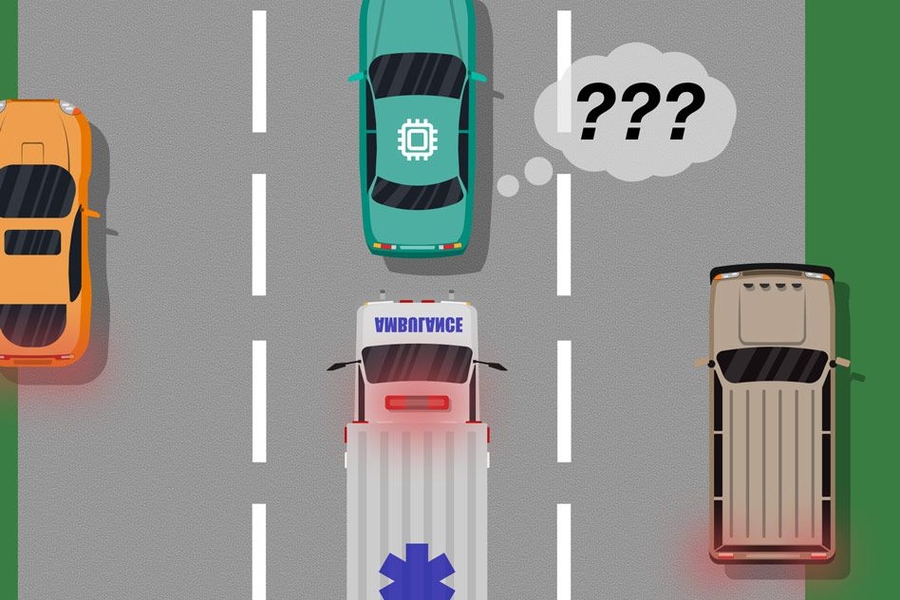

The complete lack of attribution for Hunter Alpha is highly unusual. According to the Reuters report cited by Paul, rumors suggest the model could be a secret test deployment by a major AI research lab. This practice, sometimes called a "silent launch" or "canary test," involves releasing a model under a codename to gather real-world performance and reliability data without the pressure of a formal public announcement.

No benchmarks, pricing, or detailed API specifications for Hunter Alpha are publicly available at this time. The model's presence in the listing suggests it can be called through the OpenRouter API, but its performance characteristics remain unknown.
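
For readers unfamiliar with how a listed model is reached, here is a minimal sketch of a request to OpenRouter's OpenAI-compatible chat completions endpoint. The model identifier `example/hunter-alpha` is a hypothetical placeholder (the source does not give the real one), and the `OPENROUTER_API_KEY` environment variable name is likewise an assumption. The request is only constructed, not sent.

```python
import json
import os
import urllib.request

# OpenRouter's OpenAI-compatible chat completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Hypothetical placeholder: the article does not state the model's actual identifier.
HYPOTHETICAL_MODEL_ID = "example/hunter-alpha"


def build_chat_request(prompt: str, model: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for the given model."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Assumed environment variable name; adjust to wherever the key is stored.
    key = os.environ.get("OPENROUTER_API_KEY", "")
    req = build_chat_request("Hello", HYPOTHETICAL_MODEL_ID, key)
    # Sending is deliberately left out; urllib.request.urlopen(req) would perform the call.
    print(req.get_method(), req.full_url)
```

Because the request shape is the same for every model on the platform, trying an unlabeled model would in principle require changing only the `model` field.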

The OpenRouter Platform

OpenRouter's role as a neutral aggregator makes it a plausible channel for such an anonymous test. Developers use the platform to route requests to the most cost-effective or capable model for their needs. A new, unlabeled model appearing in the list would be visible to any developer browsing the platform, allowing its creator to observe usage patterns and performance under real load without direct attribution.
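
The routing idea described above can be illustrated with a small client-side sketch: pick the cheapest model that clears a capability threshold. This is not OpenRouter's own routing logic. The catalog entries, prices, and `quality_score` values are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class ModelOption:
    model_id: str                     # hypothetical identifier
    price_per_million_tokens: float   # USD, illustrative only
    quality_score: float              # hypothetical capability rating in [0, 1]


def pick_cheapest_capable(
    options: Sequence[ModelOption], min_quality: float
) -> Optional[ModelOption]:
    """Return the lowest-priced option meeting min_quality, or None if none qualifies."""
    capable = [m for m in options if m.quality_score >= min_quality]
    if not capable:
        return None
    return min(capable, key=lambda m: m.price_per_million_tokens)


# Invented catalog; a real one would come from the platform's model listing.
CATALOG = [
    ModelOption("lab-a/large", 15.0, 0.92),
    ModelOption("lab-b/medium", 3.0, 0.85),
    ModelOption("lab-c/small", 0.5, 0.70),
]

if __name__ == "__main__":
    choice = pick_cheapest_capable(CATALOG, min_quality=0.8)
    print(choice.model_id if choice else "no model meets the threshold")
```

An unattributed model with no published benchmarks or pricing has no entries for fields like these, so developers can only place it in such a comparison after trying it themselves.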

This incident underscores the increasingly opaque and competitive nature of advanced AI development, where labs may seek to evaluate their models in the wild before committing to a full launch.