What Happened

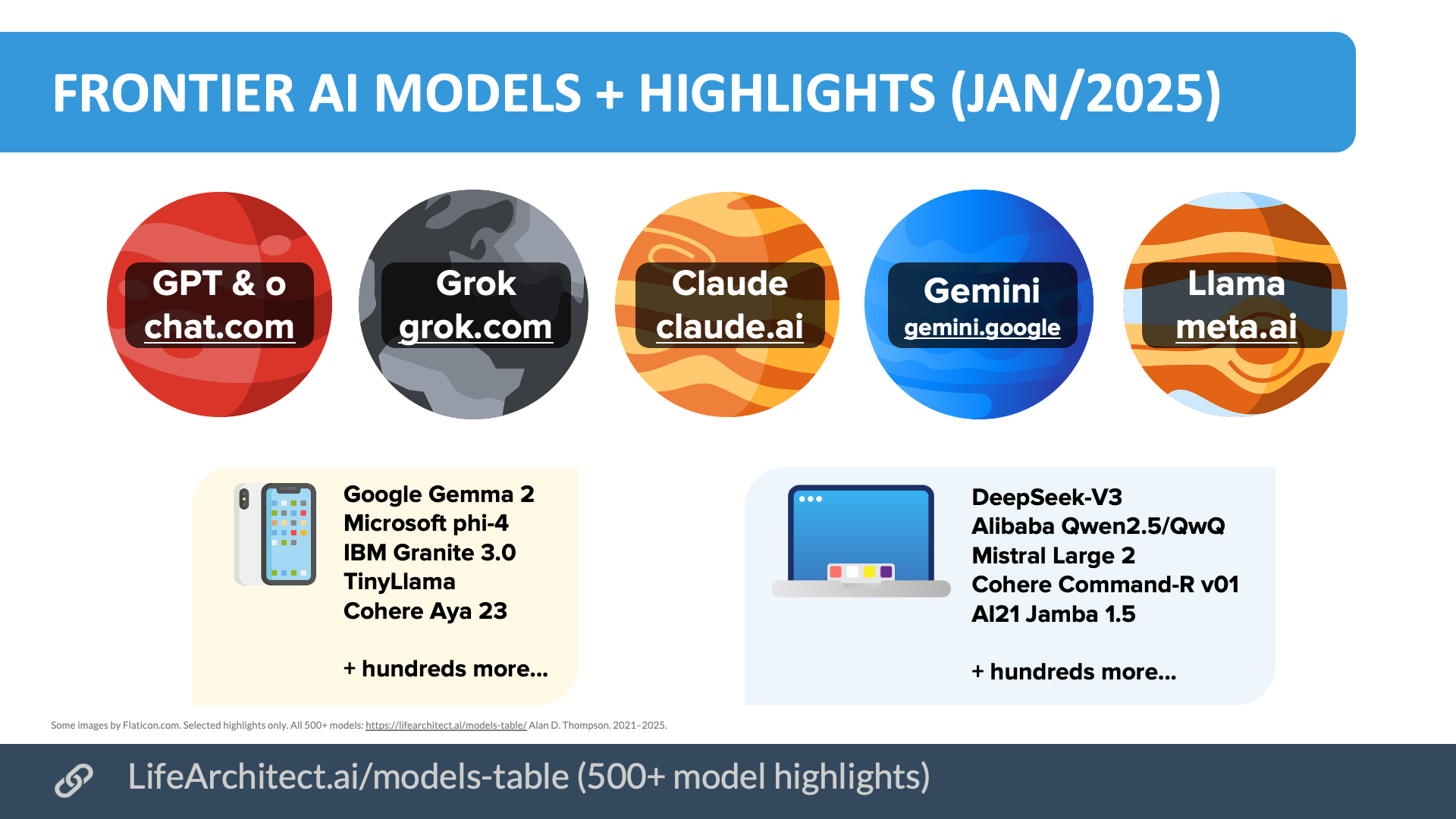

In a recent post on X, Ethan Mollick, a professor at the Wharton School who studies AI's impact on work and education, made a pointed observation about the current competitive landscape in advanced AI development. He stated that both Meta and xAI have "failed" to maintain technical parity with the leading frontier AI labs. Furthermore, he noted that the leading open-weight models coming from China continue to lag behind the state-of-the-art by several months.

Mollick's central conclusion is that if the field achieves recursive AI self-improvement, a milestone at which an AI system can iteratively improve its own architecture, training processes, or code, the breakthrough is most likely to come from one of three companies: Google, OpenAI, or Anthropic.

Context

Mollick's statement is a commentary on the perceived stratification in AI capability. "Frontier labs" typically refers to organizations pushing the absolute limits of model scale, reasoning ability, and multimodal performance, often measured by benchmarks like MMLU, GPQA, or MATH. OpenAI's GPT-4 series, Anthropic's Claude 3 models, and Google's Gemini Ultra are considered the established leaders in this category.

Meta, while a major contributor through its open-weight Llama models, has not released a model that consistently outperforms these frontier models across a broad suite of benchmarks. Similarly, xAI's Grok-1, while competitive, has not been positioned as surpassing the top-tier models.

The lag of Chinese open-weight models, such as Alibaba's Qwen or DeepSeek's models, is widely acknowledged in the technical community. While impressive, these releases typically follow and respond to architectural advances and benchmark scores set by the U.S.-based frontier labs.

Recursive self-improvement is a theoretical concept in AI safety and capabilities research. It describes a scenario where an AI system becomes capable of designing a successor system that is more intelligent than itself, potentially leading to a rapid, feedback-driven intelligence explosion. Mollick's post suggests that the prerequisite for this, being at the absolute frontier, is currently concentrated in a very small set of organizations.