What Happened

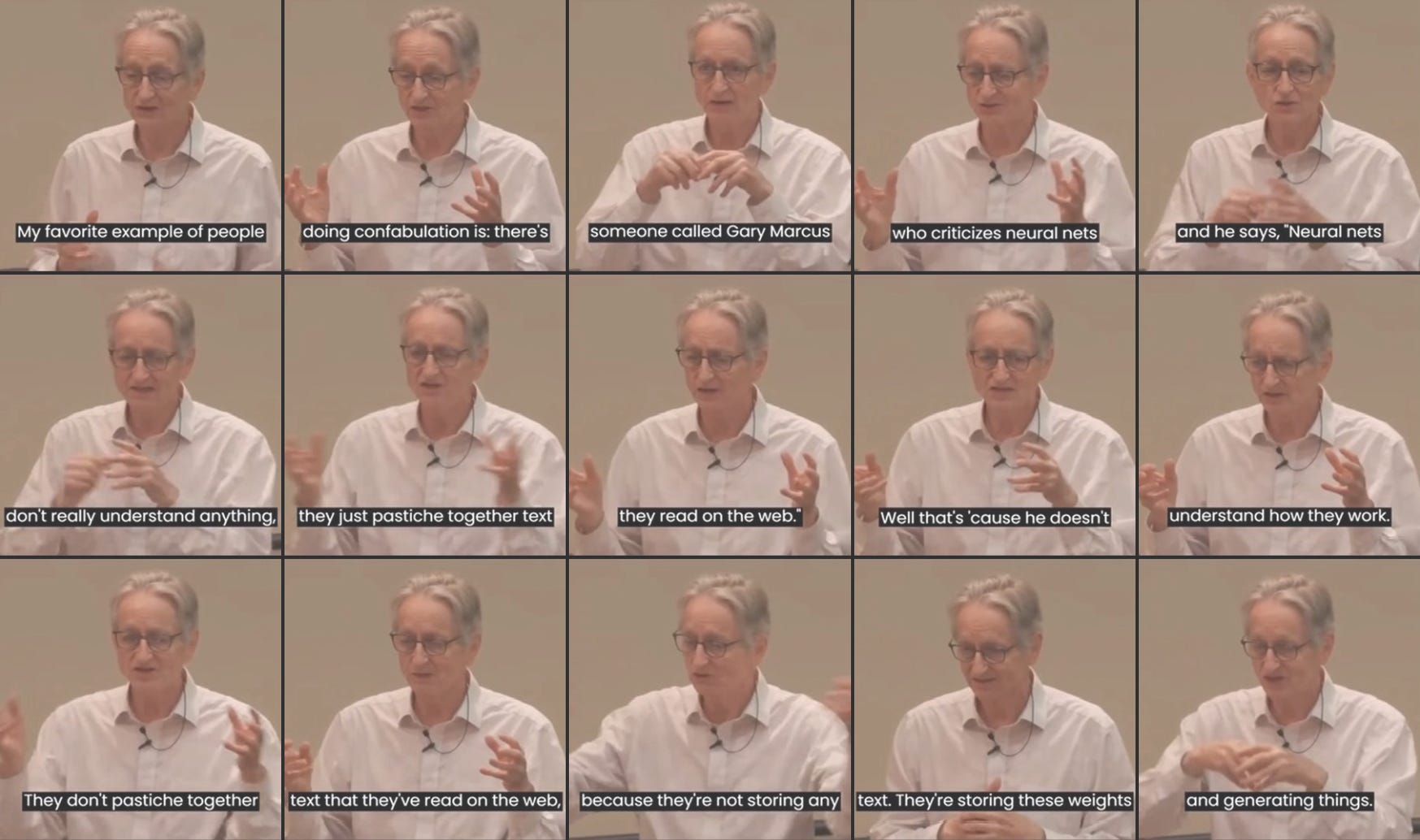

Geoffrey Hinton, often called the 'Godfather of AI,' has proposed that what AI researchers call 'hallucinations' should instead be called 'confabulations.' In a recent post on X (formerly Twitter), Hinton explained that intelligence, both biological and artificial, reconstructs reality into plausible stories rather than storing facts like a database. The same engine that produces creative synthesis also produces confident, incorrect details.

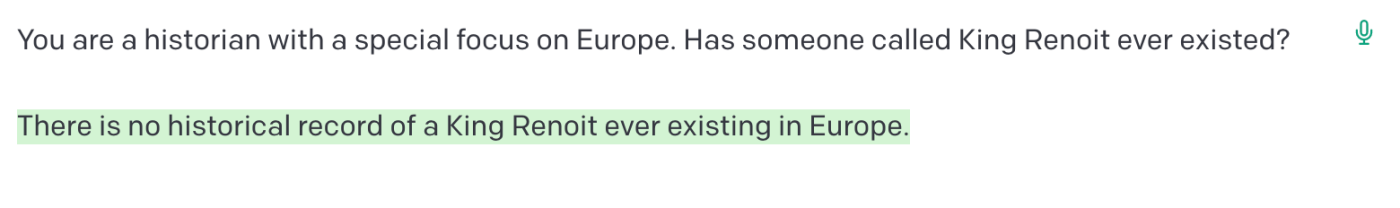

This reframing treats the behavior as a feature rather than a bug. It suggests that the tendency of large language models to generate false but plausible information is an inherent property of intelligence itself, not a flaw that can be fully engineered away.

Context

Hinton's statement comes amid ongoing debates about the reliability of AI systems. The term 'hallucination' has been widely used in the AI community to describe cases where models generate factually incorrect or nonsensical outputs. By calling it 'confabulation' instead, Hinton draws a parallel to a psychological phenomenon in which people fill gaps in memory with fabricated but often believable details, with no intent to deceive.

This aligns with Hinton's long-standing view that neural networks learn to compress and reconstruct reality, not to store exact copies of data. In his earlier work on Boltzmann machines and deep learning, he emphasized that intelligence is about finding structure in data, not memorizing it.

What This Means in Practice

For AI practitioners, this reframing has practical implications. If confabulations are an inherent feature of intelligence, then efforts to eliminate them completely may be misguided. The focus should shift instead to building systems that detect and flag confabulations, or that verify facts against external knowledge bases. This is already happening with techniques like retrieval-augmented generation (RAG), which retrieves relevant external documents at query time and grounds the model's output in them.
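To make the "detect and flag" idea concrete, here is a minimal sketch in Python. It is not RAG itself and not anything Hinton proposed. It shows only the verification half, a post-hoc check of model claims against a trusted store. The `KNOWLEDGE_BASE` dictionary, the `Claim` type, and the `verify` and `flag_claims` helpers are all hypothetical names introduced for illustration. The sketch also assumes that an upstream step has already extracted claims from the model's text as subject/attribute/value triples, which is a hard problem in its own right.

```python
from dataclasses import dataclass

# Hypothetical knowledge base: (subject, attribute) -> trusted value.
# A real system would query a database, search index, or vector store instead.
KNOWLEDGE_BASE = {
    ("eiffel tower", "city"): "paris",
    ("python", "first_released"): "1991",
}


@dataclass(frozen=True)
class Claim:
    """One factual claim, assumed to be already extracted from model output."""
    subject: str
    attribute: str
    value: str


def verify(claim: Claim, kb: dict) -> str:
    """Return 'supported', 'contradicted', or 'unverified' for a single claim."""
    known = kb.get((claim.subject.lower(), claim.attribute.lower()))
    if known is None:
        return "unverified"  # the knowledge base has nothing to say
    return "supported" if known == claim.value.lower() else "contradicted"


def flag_claims(claims, kb):
    """Pair each claim that is not supported with its verdict, preserving order."""
    verdicts = ((claim, verify(claim, kb)) for claim in claims)
    return [(claim, verdict) for claim, verdict in verdicts if verdict != "supported"]


if __name__ == "__main__":
    model_claims = [
        Claim("Eiffel Tower", "city", "Paris"),
        Claim("Eiffel Tower", "city", "Rome"),
        Claim("Python", "creator", "Guido van Rossum"),
    ]
    for claim, verdict in flag_claims(model_claims, KNOWLEDGE_BASE):
        print(f"{verdict}: {claim.subject} / {claim.attribute} = {claim.value}")
```

The three-way verdict is the design point. A claim the knowledge base cannot confirm is reported as "unverified" rather than treated as false, which matches the idea of accounting for confabulations rather than assuming they can be eliminated.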

gentic.news Analysis

Hinton's rebranding is more than semantic. It reflects a deeper philosophical stance on the nature of intelligence that has shaped his entire career. In our previous coverage of Hinton's departure from Google to speak freely about AI risks, we noted his growing concern about the pace of AI development. This latest statement can be seen as a corollary: if confabulations are intrinsic, then we must design systems that account for them rather than expecting perfect recall.

This also connects to the ongoing trend of 'interpretability' research we've covered. Companies like Anthropic and OpenAI are investing heavily in mechanistic interpretability — understanding the internal representations of neural networks. If Hinton is right, then interpretability is not just about debugging but about understanding the fundamental nature of machine intelligence.

The choice of the word 'confabulation' is also notable. In neuroscience, confabulation is associated with damage to the prefrontal cortex, but also with normal memory reconstruction. By using this term, Hinton may be implicitly arguing that AI systems are not broken — they are operating as designed, and the design is a reflection of how intelligence works.

Frequently Asked Questions

What did Geoffrey Hinton say about AI hallucinations?

Geoffrey Hinton rebranded AI hallucinations as 'confabulations,' arguing that intelligence reconstructs reality into plausible stories rather than storing facts like a database. He stated that the engine producing creative synthesis also produces confident, incorrect details.

Why does Hinton call them confabulations instead of hallucinations?

Hinton uses 'confabulation' to emphasize that this behavior is an inherent feature of intelligence, not a bug. In psychology, confabulation refers to the production of fabricated, distorted, or misinterpreted memories without the intent to deceive, which mirrors how AI models generate plausible but incorrect outputs.

Does this mean AI hallucinations cannot be fixed?

Not entirely, but they can be managed. Hinton's reframing suggests that eliminating confabulations altogether may be impossible if they are an intrinsic property of intelligence. Practitioners can still reduce their impact with techniques like retrieval-augmented generation (RAG), fact-checking systems, and better training data curation.

How does this relate to human memory?

Hinton draws a direct parallel: human memory is reconstructive, not reproductive. We don't store exact copies of experiences; we reconstruct them each time we recall, often introducing errors. AI models, he argues, operate similarly — they learn to compress and reconstruct patterns, which inevitably produces confabulations.