Key Takeaways

- An article from Towards AI emphasizes that the reliability and safety of an AI agent depend more on its controlling 'harness'—the system of protocols, tools, and observability layers—than on the underlying model.

- The article claims the harness represents a $2 billion market opportunity, yet most developers still struggle to articulate what it is.

What Happened

A new article published on Towards AI, a prominent technical publication, makes a provocative claim: the ultimate performance and safety of an AI agent are determined not by the sophistication of its core large language model, but by the quality of its "harness."

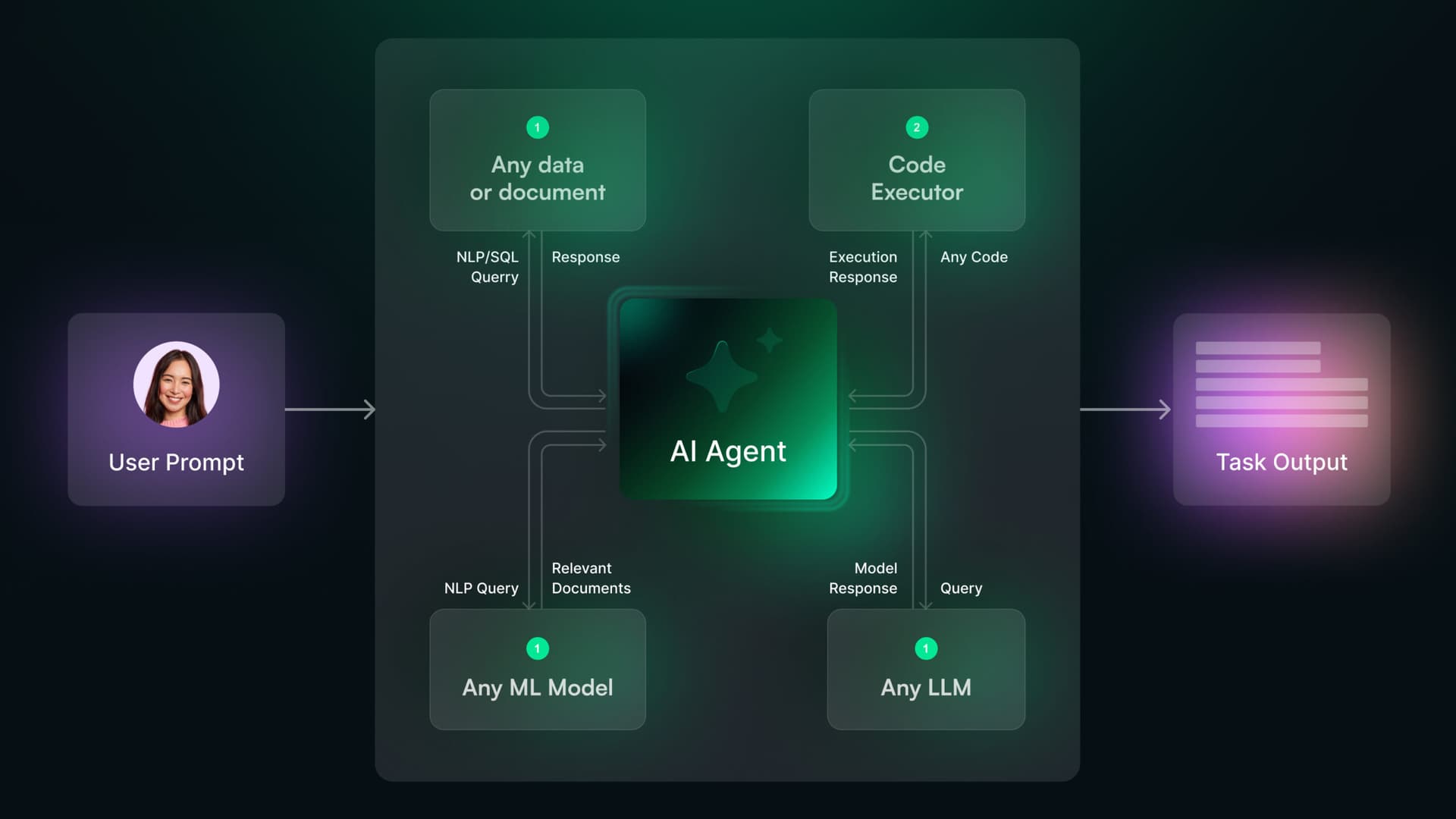

The author defines this harness as the complete control system built around an agent. It encompasses the protocols for tool use, the orchestration of multi-step workflows, the layers of observability for monitoring behavior, and the guardrails that prevent errors or unsafe actions. The central thesis is that a powerful but poorly harnessed agent is unreliable and potentially dangerous, while a well-harnessed agent of moderate capability can be robust and trustworthy. The article positions this concept as a critical, yet often overlooked, pillar of production AI systems—one it claims represents a $2 billion market opportunity that most developers still struggle to articulate.

Technical Details: Deconstructing the Harness

While the full article is behind a paywall, its premise aligns with a growing technical discourse. Based on the publicly visible excerpt and related industry developments, we can infer the key components of a production-grade agent harness:

- Orchestration & Protocol Layers: This is the logic that sequences an agent's actions, especially when coordinating multiple tools or sub-agents. As noted in our Knowledge Graph, recent research (April 4, 2026) identified multi-tool coordination as the primary failure point for AI agents. A robust harness must manage this complexity, potentially using architectures like the two-layer MCP/UCP protocol we covered previously.

- Observability & Evaluation: A harness must provide deep visibility into the agent's decision-making process. This is not just logging outputs, but tracing reasoning, tool calls, and context shifts. Towards AI itself published a guide on four critical observability layers for production AI agents just weeks ago (April 3, 2026), underscoring this trend.

- Safety & Control Mechanisms: This includes "kill switches," context isolation (as seen in Gemini CLI's subagents), and behavioral guardrails to constrain actions within safe and brand-appropriate parameters.

- The Tooling Ecosystem: The harness integrates the agent with its available tools—from code executors and APIs to search functions and custom retail systems. The reliability of these connections is paramount.
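To make the four components above concrete, here is a minimal sketch of how they might fit together in code. It is not taken from the Towards AI article. The `Harness` class, the `HarnessStop` exception, and the `check_stock` and `issue_refund` tools are all hypothetical names chosen for illustration. The sketch assumes the agent's plan arrives as a list of (tool name, arguments) pairs and that blocked calls still count against the step budget.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Set, Tuple


class HarnessStop(Exception):
    """Raised when the harness halts the agent (kill switch or step budget)."""


@dataclass
class Harness:
    tools: Dict[str, Callable[..., Any]]  # tooling layer: tool name -> callable
    allowed: Set[str]                     # safety layer: allowlist of tool names
    max_steps: int = 5                    # control layer: budget of attempted calls
    trace: List[dict] = field(default_factory=list)  # observability layer
    killed: bool = False

    def kill(self) -> None:
        """Engage the kill switch; every later call is refused."""
        self.killed = True

    def call(self, tool: str, **kwargs: Any) -> Any:
        if self.killed:
            raise HarnessStop("kill switch engaged")
        # Every attempted call (including blocked ones) is in the trace,
        # so the trace length doubles as the step counter.
        if len(self.trace) >= self.max_steps:
            raise HarnessStop("step budget exhausted")
        if tool not in self.allowed or tool not in self.tools:
            self.trace.append({"tool": tool, "args": kwargs, "status": "blocked"})
            return None
        result = self.tools[tool](**kwargs)
        self.trace.append(
            {"tool": tool, "args": kwargs, "status": "ok", "result": result}
        )
        return result

    def run(self, plan: List[Tuple[str, dict]]) -> List[Any]:
        """Orchestration layer: execute planned tool calls in order."""
        return [self.call(tool, **args) for tool, args in plan]


# Hypothetical retail tools; only the read-only one is allowlisted.
harness = Harness(
    tools={
        "check_stock": lambda sku: {"sku": sku, "units": 3},
        "issue_refund": lambda order_id: f"refunded {order_id}",
    },
    allowed={"check_stock"},
)
results = harness.run(
    [("check_stock", {"sku": "BAG-001"}), ("issue_refund", {"order_id": "A1"})]
)
```

In this sketch the refund call is recorded in the trace as blocked rather than executed, which is the kind of audit record the observability layer is meant to provide. A production harness would likely add persistence, richer policies, and per-tool timeouts.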

This emphasis on harness design follows a pattern of increasing focus on Agentic AI and Agentic Commerce as research topics, with companies like Shopify already exploring agent integrations.

Retail & Luxury Implications

The implications for retail and luxury are profound, moving the conversation from "Can an AI agent do this?" to "How do we ensure it does this correctly, safely, and consistently 10,000 times a day?"

- Customer-Facing Agents: A luxury concierge agent powered by GPT-4 or Claude needs a harness that prevents it from hallucinating product details, making unauthorized promises, or losing the thread of a multi-day, multi-channel conversation with a VIP client. The harness ensures brand voice consistency and data privacy.

- Operational & Supply Chain Agents: An agent tasked with optimizing inventory allocation or predicting supplier delays must be harnessed with strict access controls to ERP systems, validated decision frameworks, and clear audit trails. A mistake here is a direct financial loss.

- Creative & Design Assistants: An agent helping with trend forecasting or mood board generation requires a harness that curates its source materials (e.g., only from approved archives or trend services) and encapsulates brand DNA guidelines into its creative process.
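As one illustration of the customer-facing case above, the sketch below shows a simple output guardrail that reviews a draft reply before it reaches a client. Everything in it is an assumption made for illustration: the `CATALOG` contents, the SKU format, the `FORBIDDEN` phrase list, and the `review_reply` function are hypothetical, and a real deployment would check far more than string matches.

```python
import re
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical approved catalog: SKU -> list price.
CATALOG: Dict[str, float] = {"BAG-001": 2400.0, "SCARF-014": 390.0}

# Hypothetical phrases the agent is not authorized to use.
FORBIDDEN = ("guarantee", "free of charge", "lifetime warranty")

# Assumed SKU format: uppercase letters, a hyphen, then three digits.
SKU_PATTERN = re.compile(r"\b[A-Z]+-\d{3}\b")


@dataclass
class Verdict:
    allowed: bool
    reasons: List[str]


def review_reply(draft: str) -> Verdict:
    """Check a draft reply for unknown products and unauthorized promises."""
    reasons: List[str] = []
    # Any SKU mentioned must exist in the approved catalog.
    for sku in SKU_PATTERN.findall(draft):
        if sku not in CATALOG:
            reasons.append(f"unknown product: {sku}")
    # Case-insensitive scan for phrases that commit the brand to something.
    lowered = draft.lower()
    for phrase in FORBIDDEN:
        if phrase in lowered:
            reasons.append(f"unauthorized promise: {phrase}")
    return Verdict(allowed=not reasons, reasons=reasons)


ok = review_reply("The BAG-001 is available in black.")
bad = review_reply("We guarantee delivery of BAG-999 tomorrow.")
```

Here `ok.allowed` is true, while `bad` is rejected with two reasons: an unknown product and an unauthorized promise. A rejected draft could be regenerated or escalated to a human advisor, depending on the brand's policy.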

The core retail takeaway is that competitive advantage will not come from using the same base LLM as everyone else, but from building superior, domain-specific harnesses. A luxury group's harness for a clienteling agent would be infused with decades of savoir-faire, complex relationship rules, and an unparalleled standard for discretion—elements no off-the-shelf solution can provide.

gentic.news Analysis

This article taps into the central challenge of the current AI agent wave: moving from compelling demos to production-ready systems. As our Knowledge Graph shows, AI Agents have been mentioned in 228 prior articles and appeared in 15 this week alone, indicating explosive interest. The timeline reveals a maturation of the discussion: from predictions of a "breakthrough year" (Dec 2026) to grappling with "flawed human evaluation" (April 2026) and now focusing on the orchestration and control infrastructure—the harness.

The call for better harnesses directly addresses the "100th Tool Call Problem" that Towards AI diagnosed earlier this month (April 9), where agents degrade in reliability over extended operations. It also connects to our coverage of the Autogenesis Protocol and Avoko's 'Behavioral Lab', which are both efforts to create frameworks for testing and evolving agents systematically—key functions of a harness.

For retail AI leaders, this is a crucial strategic lens. Investment must shift from merely fine-tuning models to architecting these control systems. Partnering with firms that specialize in agent observability (like Avoko) or adopting open-source agent frameworks with strong harness concepts (like the "Startup OS" from Cabinet) may become more critical than choosing between model providers. The entity relationships show that leading agents already use tools from Anthropic, Google, and others; the differentiator will be how those tools are used. The harness is where proprietary retail logic, brand safety, and operational excellence get encoded, making it a core IP asset in the age of agentic commerce.