What Happened: A Guide to Fine-Tuning AI Agents on Azure

The source material is a technical article on the practical engineering of AI agents. It details strategies for fine-tuning these autonomous systems (software that uses large language models, or LLMs, to perceive environments, make decisions, and execute actions) within the Microsoft Azure cloud ecosystem. The core thesis is that deploying effective agents is not merely a matter of selecting a model. It requires a deliberate balance among three variables: the accuracy of the agent's outputs, the computational and financial cost of running it, and the performance (chiefly latency) of the overall system.

While the full article is behind a paywall, the provided context and knowledge graph indicate that the piece is a practitioner's guide. It would logically cover the Azure tools and services relevant to the fine-tuning lifecycle, such as Azure OpenAI Service, Azure Machine Learning, and Azure AI Foundry. That lifecycle runs from data preparation and base model selection (e.g., GPT-4, Llama), through the choice between full fine-tuning and parameter-efficient methods such as LoRA (Low-Rank Adaptation), to deployment and monitoring.
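To make the difference between full fine-tuning and LoRA concrete, the following minimal sketch counts trainable parameters for a single dense weight matrix. It is not taken from the article. It assumes the standard LoRA formulation, in which the update to a `d_out x d_in` matrix is factored into two low-rank matrices, and the layer size and rank are hypothetical values chosen only for illustration.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when a dense weight matrix (d_out x d_in) is updated directly."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for a LoRA update B @ A, with B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


# Hypothetical layer size and rank, for illustration only.
d_out, d_in, rank = 4096, 4096, 8

full = full_finetune_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)

print(f"full fine-tuning: {full:,} trainable parameters")
print(f"LoRA (rank {rank}): {lora:,} trainable parameters")
print(f"LoRA trains {lora / full:.2%} of the parameters in this layer")
```

Under these assumptions the low-rank update trains well under one percent of the layer's parameters, which is why parameter-efficient methods tend to reduce GPU memory and training cost. Whether accuracy holds up at a given rank is task-dependent and has to be measured.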

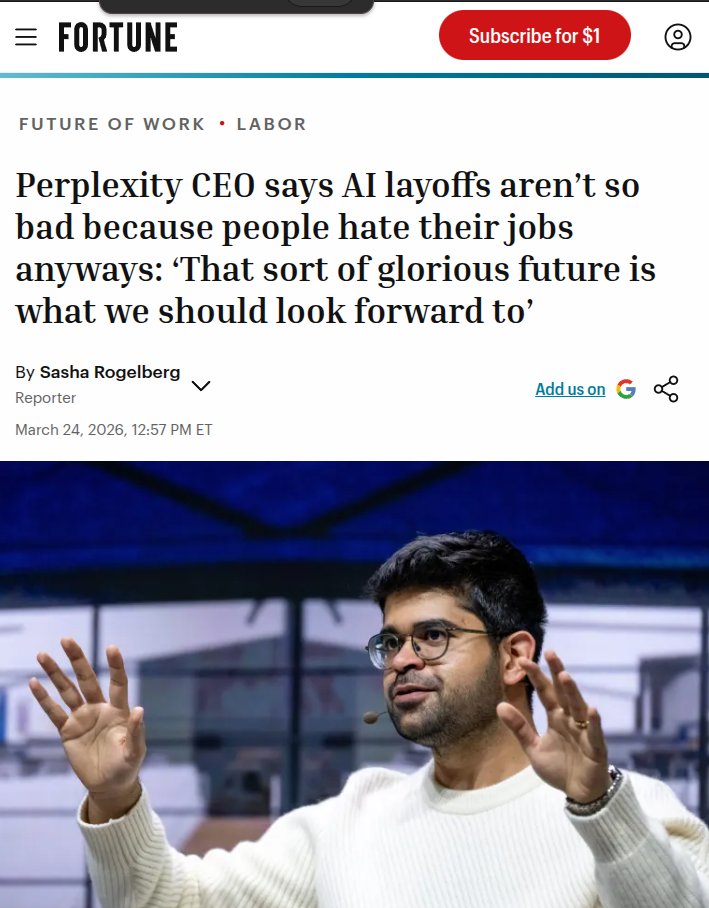

The broader knowledge graph context underscores the timeliness of this focus. Industry forecasts point to 2026 as a potential breakthrough year for AI agents, on the grounds that agents have crossed a "critical reliability threshold." A parallel insight warns, however, that compute scarcity keeps AI expensive, which forces teams to prioritize high-value tasks. The article's emphasis on balancing cost and performance speaks directly to that emerging economic reality of agent deployment.

Technical Details: The Core Trade-Offs in Agent Fine-Tuning

Fine-tuning is the process of further training a pre-trained foundation model on a specialized dataset to excel at a specific task or operate within a defined domain. For AI agents, this is not a one-size-fits-all process. The article likely delves into the following strategic considerations:

- Accuracy vs. Data & Compute Cost: Achieving the highest possible accuracy often requires extensive, high-quality domain-specific datasets and full fine-tuning, which is computationally intensive and expensive. The guide would discuss ways to maximize accuracy gains while limiting data collection costs and GPU hours, possibly through synthetic data generation, carefully designed prompts within the fine-tuning examples, or iterative training cycles.

- Model Performance vs. Inference Cost/Latency: A larger, heavily fine-tuned model may be very accurate yet too slow and costly to invoke for every agent decision at inference time. The article would address this trade-off, potentially by using smaller, distilled models for certain agent sub-tasks, caching repeated results, or relying on Azure's scalable inference endpoints to manage load and cost.

- Agent Architecture & Tool Use: An agent's performance does not depend solely on its core LLM. The fine-tuning strategy must align with the agent's architecture, meaning its ability to call APIs, use retrieval-augmented generation (RAG), or employ specialized tools. The fine-tuning data must teach the model when and how to use these tools effectively within an Azure environment.
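As an illustration of the last point, the sketch below builds one training example that shows a model when to call a tool and how to use its result. It assumes the chat-style JSONL layout (a `messages` list with `tool_calls` entries) commonly used for fine-tuning chat models. The exact schema a given Azure service accepts should be checked against its current documentation. The tool name `check_inventory`, its arguments, and the sample dialogue are all hypothetical.

```python
import json


def build_tool_use_example(user_text, tool_name, tool_args, tool_result, final_answer):
    """Build one chat-format training record that demonstrates a single tool call."""
    call_id = "call_1"  # arbitrary identifier linking the call to its result
    return {
        "messages": [
            {"role": "system", "content": "You are a retail assistant. Use tools when stock data is needed."},
            {"role": "user", "content": user_text},
            {
                "role": "assistant",
                "tool_calls": [
                    {
                        "id": call_id,
                        "type": "function",
                        "function": {"name": tool_name, "arguments": json.dumps(tool_args)},
                    }
                ],
            },
            {"role": "tool", "tool_call_id": call_id, "content": json.dumps(tool_result)},
            {"role": "assistant", "content": final_answer},
        ]
    }


# Hypothetical tool and data, for illustration only.
record = build_tool_use_example(
    user_text="Is the black tote available in Paris?",
    tool_name="check_inventory",
    tool_args={"sku": "TOTE-BLK", "city": "Paris"},
    tool_result={"in_stock": True, "units": 3},
    final_answer="Yes, the black tote is in stock in Paris (3 units).",
)

# One JSON object per line is the usual JSONL convention for training files.
jsonl_line = json.dumps(record)
print(jsonl_line)
```

A realistic dataset would also include examples in which the correct behavior is to answer without calling any tool, so the model learns when a call is warranted as well as how to make one.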

The guide positions Azure not just as infrastructure but as an integrated platform providing managed services for each step of this complex workflow, from data pipelines and training clusters to scalable deployment and monitoring dashboards.
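The inference-side trade-off discussed in this section (smaller models for routine sub-tasks, plus caching of repeated requests) can be sketched as follows. This is a minimal illustration and not the article's method. The model names, the task categories, and the `call_model` stub are hypothetical stand-ins for real deployed endpoints.

```python
from functools import lru_cache

# Hypothetical deployment names; a real system would point at actual endpoints.
SMALL_MODEL = "small-distilled-model"
LARGE_MODEL = "large-finetuned-model"

# Assumed set of sub-tasks simple enough for the smaller, cheaper model.
ROUTINE_TASKS = {"classify_intent", "extract_order_id"}

call_log = []  # records every model invocation that actually happens


def choose_model(task_type: str) -> str:
    """Route routine sub-tasks to the small model and everything else to the large one."""
    return SMALL_MODEL if task_type in ROUTINE_TASKS else LARGE_MODEL


def call_model(model: str, prompt: str) -> str:
    """Stub standing in for a network call to a deployed model."""
    call_log.append((model, prompt))
    return f"[{model}] response to: {prompt}"


@lru_cache(maxsize=1024)
def cached_answer(task_type: str, prompt: str) -> str:
    """Return a cached response for identical (task_type, prompt) pairs."""
    return call_model(choose_model(task_type), prompt)


first = cached_answer("classify_intent", "Where is my order?")
second = cached_answer("classify_intent", "Where is my order?")  # served from cache
third = cached_answer("plan_reroute", "Shipment 12 is delayed; propose options.")

print(f"requests: 3, model calls: {len(call_log)}")
```

Exact-match caching only helps when identical requests recur, and routing by task type is the simplest possible policy. Both are design choices to validate against real traffic before relying on them.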

Retail & Luxury Implications: Strategic Deployment of Autonomous Systems

For retail and luxury AI leaders, this article is a crucial resource for moving from experimental chatbots to robust, autonomous AI agents. The principles of balancing accuracy, cost, and performance are directly transferable to high-stakes retail applications.

Potential High-Value Agent Applications:

- Personal Shopping & Clienteling Agents: An agent fine-tuned on a brand's historical client notes, product catalogs, and CRM data could autonomously draft highly personalized outreach emails, suggest products based on real-time inventory and client preferences, and even schedule appointments. The accuracy (personalization quality) must be balanced against the cost of running such an agent for thousands of VIP clients.

- Supply Chain & Inventory Agents: Autonomous agents could monitor global logistics feeds, predict delays, and proactively re-route shipments or adjust production schedules. Here, performance (speed of decision-making) is critical, which may favor a leaner, faster fine-tuned model over a larger, slower one.

- Dynamic Pricing & Promotion Agents: Agents could analyze competitor pricing, inventory levels, and demand signals to adjust prices in near-real-time. The financial impact of a pricing error is high, demanding extreme accuracy, which justifies a significant investment in fine-tuning with pristine historical pricing and sales data.
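To make the cost side of these applications concrete, the following back-of-the-envelope sketch estimates monthly inference spend for an agent that runs a fixed number of times per client. Every number in it is a placeholder assumption rather than a real Azure price or a figure from the article. Only the arithmetic is the point.

```python
def monthly_inference_cost(clients: int, runs_per_client: int,
                           tokens_per_run: int, price_per_1k_tokens: float) -> float:
    """Estimate monthly cost as total tokens processed times a per-1,000-token price."""
    total_tokens = clients * runs_per_client * tokens_per_run
    return total_tokens / 1000 * price_per_1k_tokens


# Placeholder workload: all values are assumptions, not measured or quoted figures.
clients = 5000          # VIP clients served by the agent
runs_per_client = 4     # agent runs per client per month
tokens_per_run = 3000   # prompt plus completion tokens per run

# Placeholder prices for a larger and a smaller model (per 1,000 tokens).
large_cost = monthly_inference_cost(clients, runs_per_client, tokens_per_run, 0.01)
small_cost = monthly_inference_cost(clients, runs_per_client, tokens_per_run, 0.002)

print(f"larger model:  {large_cost:,.2f} per month")
print(f"smaller model: {small_cost:,.2f} per month")
```

Plugging in actual token counts and current pricing for the chosen deployments turns this into a first-pass budget. It deliberately ignores fine-tuning, hosting, and retrieval costs, which would need separate line items.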

The Azure Advantage for Retail: For enterprises like LVMH or Kering, which likely already run extensive Microsoft and Azure estates, this guide is particularly relevant. Fine-tuning and deploying agents within Azure can simplify integration with existing data sources (e.g., Dynamics 365, SAP on Azure) and help ensure compliance with enterprise security and governance standards. Azure's cost-management tools are vital for forecasting and controlling the operational expense of running a fleet of AI agents.

The key takeaway for retail technologists is that the era of generic chatbots is ending. The next competitive edge lies in specialized, fine-tuned agents, and this article provides a framework for building them in a controlled, economical, and scalable way on a major cloud platform. The question is no longer whether to build agents but how to build them strategically, and the guide offers a blueprint for that "how."