A significant shift is occurring in how AI systems are developed: AI agents are now being used to train other AI models, creating what developers are calling "state-of-the-art agentic systems." This trend traces back to Andrej Karpathy's autoresearch repository, which has inspired a wave of experimentation in automated AI research workflows.

Key Takeaways

- AI agents are now being used to train other AI models, creating advanced agentic systems.

- This development stems from Andrej Karpathy's autoresearch repository and represents early-stage automation of AI research.

What Happened

Andrej Karpathy, former Director of AI at Tesla and a former OpenAI researcher, created an autoresearch repository that demonstrates how AI systems can autonomously carry out research tasks. The repository has sparked what developer Omar Sar describes as "an impressive trend," in which AI agents train other AI models to build sophisticated agentic systems.

The core insight is that while large language models (LLMs) still struggle with formulating novel research questions or hypotheses, they can effectively execute training pipelines, experiment with architectures, and optimize model parameters when given clear objectives.

How It Works

The autoresearch approach typically involves:

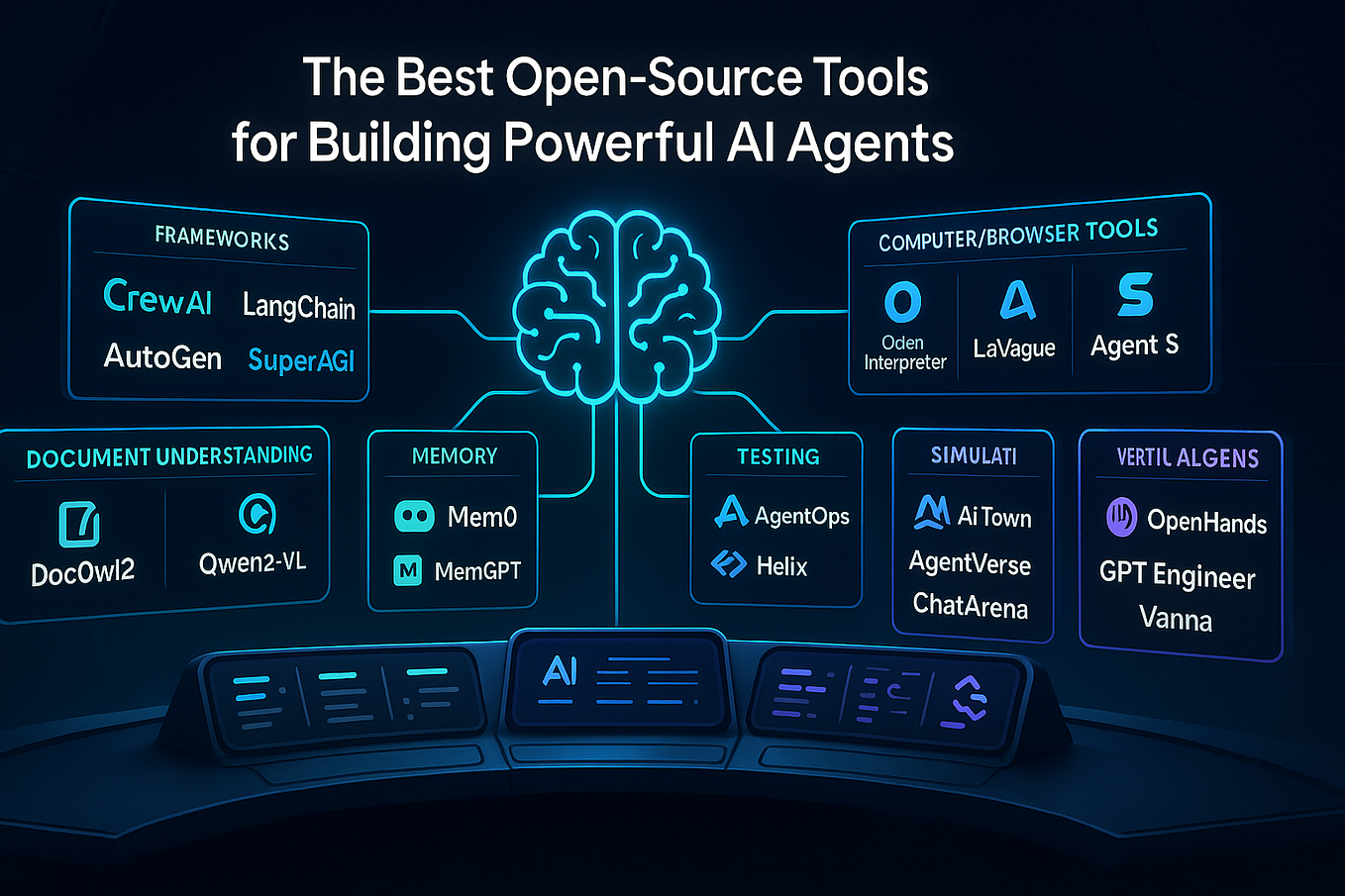

- Agentic Orchestration: An AI agent (often an LLM with tool access) receives a research objective

- Automated Pipeline Creation: The agent designs and implements training pipelines

- Iterative Experimentation: The system runs multiple training experiments with varying hyperparameters

- Evaluation and Selection: The agent evaluates results and selects the best-performing models

This creates a feedback loop in which AI systems drive the improvement of other AI systems, with each round of results informing the next experiment. Developers note, however, that this is "just scratching the surface" of what's possible.
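
The propose, train, evaluate, and select loop described above can be sketched in a few lines of Python. This is a minimal illustration under loud assumptions, not the autoresearch repository's code: the `Objective` structure, the toy `train_and_evaluate` function (gradient descent on a one-parameter quadratic), and `propose_config` are all hypothetical. In particular, `propose_config` uses simple random search where a real system would call an LLM to propose the next experiment.

```python
import random
from dataclasses import dataclass


@dataclass
class Objective:
    """Hypothetical human-specified objective: how much to spend, when to stop."""
    max_runs: int        # compute budget, in training runs
    target_loss: float   # stop early once a run reaches this loss


def train_and_evaluate(lr: float, steps: int = 50) -> float:
    """Toy 'training pipeline': gradient descent on f(w) = (w - 3)^2.

    Returns the final loss; a stand-in for a real training run.
    """
    w = 0.0
    for _ in range(steps):
        w -= lr * 2.0 * (w - 3.0)  # gradient step on (w - 3)^2
    return (w - 3.0) ** 2


def propose_config(history: list, rng: random.Random) -> dict:
    """Stand-in for the agent's proposal step (an LLM call in a real system).

    First run: sample a log-uniform learning rate in [1e-4, 1).
    Later runs: perturb the best learning rate found so far.
    """
    if not history:
        return {"lr": 10 ** rng.uniform(-4, 0)}
    best = min(history, key=lambda h: h["loss"])
    return {"lr": best["config"]["lr"] * 10 ** rng.uniform(-0.5, 0.5)}


def research_loop(objective: Objective, seed: int = 0) -> tuple:
    """Propose -> train -> evaluate -> select, until the budget or target is hit."""
    rng = random.Random(seed)
    history = []
    for run in range(objective.max_runs):
        config = propose_config(history, rng)   # agent proposes an experiment
        loss = train_and_evaluate(**config)      # pipeline executes it
        history.append({"run": run, "config": config, "loss": loss})  # record result
        if loss <= objective.target_loss:
            break                                # objective met early
    best = min(history, key=lambda h: h["loss"])  # select the best run
    return best, history


best, history = research_loop(Objective(max_runs=30, target_loss=1e-6))
print(f"best lr={best['config']['lr']:.4f} loss={best['loss']:.2e} after {len(history)} runs")
```

The human supplies only the `Objective` (the "what"), and the loop handles every run (the "how"). Swapping `propose_config` for an LLM-backed proposer would not change the surrounding loop.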

Current Limitations

The most significant limitation identified in this trend is that LLMs "are not great at this (yet)" when it comes to formulating original research questions or hypotheses. The current systems excel at execution rather than ideation—they can optimize known approaches but struggle to propose genuinely novel directions.

This suggests a division of labor in AI research: humans define the research questions and high-level objectives, while AI agents handle the implementation, training, and optimization of models to address those questions.

Practical Implications

For AI engineers and researchers, this trend means:

- Reduced Iteration Time: Automated training pipelines can run 24/7 without human intervention

- Hyperparameter Optimization at Scale: Agents can explore parameter spaces more exhaustively than manual approaches

- Reproducible Research: Automated systems create detailed logs of every experiment

- Specialized Model Creation: Agents can lower the cost of training task-specific models, making them a more practical alternative to general-purpose approaches
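
The reproducibility point depends on two things: every run's randomness comes from a recorded seed, and every configuration and result is logged. The sketch below illustrates this under stated assumptions. `run_experiment` is a hypothetical toy metric rather than a real training job, and the JSON Lines log format is an illustrative choice, not something the source specifies. `replay` re-runs each logged configuration and checks that it reproduces the logged result exactly.

```python
import json
import random
import tempfile
from pathlib import Path


def run_experiment(config: dict) -> float:
    """Hypothetical seeded experiment returning a toy validation metric.

    All randomness comes from config["seed"], so the same config always
    produces the same metric.
    """
    rng = random.Random(config["seed"])
    # Toy metric: distance of lr from 0.1 plus seeded noise
    return abs(config["lr"] - 0.1) + rng.gauss(0.0, 0.01)


def log_run(log_path: Path, config: dict, metric: float) -> None:
    """Append one experiment record (config + result) as a JSON line."""
    record = {"config": config, "metric": metric}
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")


def replay(log_path: Path) -> bool:
    """Re-run every logged config and check it reproduces the logged metric."""
    with log_path.open(encoding="utf-8") as f:
        for line in f:
            record = json.loads(line)
            if run_experiment(record["config"]) != record["metric"]:
                return False
    return True


def demo() -> tuple:
    """Log three runs to a temporary file, then replay them."""
    with tempfile.TemporaryDirectory() as tmp:
        log_path = Path(tmp) / "runs.jsonl"
        for seed, lr in [(0, 0.01), (1, 0.1), (2, 0.3)]:
            config = {"lr": lr, "seed": seed}
            log_run(log_path, config, run_experiment(config))
        n_records = len(log_path.read_text(encoding="utf-8").splitlines())
        return n_records, replay(log_path)


print(demo())  # prints (3, True)
```

Exact equality works here because Python's `json` module writes floats with their shortest round-tripping representation. Real GPU training is often not bit-for-bit deterministic, so a practical replay check would usually compare results within a tolerance.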

gentic.news Analysis

This development represents a natural evolution in the AI research automation trend we've been tracking since 2024. Karpathy's influence here is particularly notable given his history at both OpenAI and Tesla—two organizations that have pushed the boundaries of AI system scaling. His autoresearch repository follows his earlier work on nanoGPT and llm.c, which similarly democratized access to advanced AI techniques.

The trend aligns with broader industry movements toward AI-assisted development. In February 2026, we covered how GitHub's Copilot Workspace was enabling more automated coding workflows, and this agentic training trend represents the next logical step: not just assisting with code, but autonomously executing entire training pipelines.

What's particularly interesting is the recursive nature of this development—AI systems training other AI systems. This creates potential for rapid iteration cycles that could accelerate progress in specialized domains. However, the limitation around hypothesis generation is crucial: we're seeing automation of execution rather than creativity. This suggests that the most effective near-term approach will be human-AI collaboration where researchers provide the "what" and agents handle the "how."

Looking at the competitive landscape, this trend could impact how AI labs allocate researcher time. If routine training and optimization can be automated, human researchers might focus more on foundational questions and novel architectures. This could create a new specialization within AI teams: researchers who design the agents that train the models.

Frequently Asked Questions

What is Karpathy's autoresearch repository?

Andrej Karpathy's autoresearch repository is an open-source project that demonstrates how AI systems can autonomously conduct research tasks. It provides frameworks and examples for creating AI agents that can design experiments, run training pipelines, and evaluate results with minimal human intervention.

How are AI agents training other AI models?

AI agents use automated pipelines to train other models by: 1) receiving research objectives from humans, 2) choosing appropriate model architectures and hyperparameters, 3) executing training runs across available compute resources, 4) evaluating model performance, and 5) iterating based on the results. These agents typically combine LLMs with tools for code execution, experiment tracking, and resource management.
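
Step 3, running experiments across available compute, amounts to dispatching many configurations concurrently and then ranking the results. Here is a minimal sketch using Python's standard `concurrent.futures`. The `toy_train` loss function and the small grid are hypothetical stand-ins. Threads keep the example simple, whereas real training runs would more likely be sent to separate processes, GPUs, or machines.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from itertools import product


def toy_train(config: dict) -> dict:
    """Stand-in training run: validation loss is minimized at lr=0.1, width=64."""
    loss = (config["lr"] - 0.1) ** 2 + ((config["width"] - 64) / 64) ** 2
    return {"config": config, "val_loss": loss}


def dispatch(configs: list, max_workers: int = 4) -> list:
    """Run configs concurrently and return results sorted best-first."""
    results = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(toy_train, c) for c in configs]
        for future in as_completed(futures):  # collect in completion order
            results.append(future.result())
    return sorted(results, key=lambda r: r["val_loss"])


grid = [{"lr": lr, "width": w} for lr, w in product([0.01, 0.1, 1.0], [32, 64, 128])]
ranked = dispatch(grid)
print(ranked[0]["config"])  # {'lr': 0.1, 'width': 64}
```

Because results arrive in completion order, the final sort is what makes step 4 (evaluation) deterministic. The top-ranked configurations would then seed the next round of proposals in step 5.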

What are the limitations of current AI research agents?

The primary limitation is that current AI agents struggle with formulating novel research questions or hypotheses. They excel at optimizing known approaches and executing predefined tasks but lack the creativity to propose genuinely new research directions. This means human researchers still need to provide the high-level objectives and conceptual frameworks.

How does this trend affect AI research jobs?

This trend is likely to change rather than eliminate AI research roles. Routine tasks like hyperparameter tuning, model training, and experiment execution may become increasingly automated, allowing human researchers to focus on higher-level tasks like problem formulation, novel architecture design, and interpreting results. The most successful researchers will likely be those who can effectively collaborate with and direct AI research agents.