A community-maintained wiki for large language model (LLM) engineering, started by AI researcher Andrej Karpathy, gained more than 5,000 GitHub stars within 48 hours of its creation. The rapid adoption highlights intense developer demand for consolidated, practical LLM knowledge. In response, an independent developer has already extended the original repository with a new section on the memory lifecycle, a topic that Karpathy's initial outline listed but left as a TODO.

Key Takeaways

- Andrej Karpathy's LLM Wiki repository gained 5,000 GitHub stars in two days.

- A developer has since extended it with a section on the memory lifecycle, filling a gap left as a TODO in the original outline.

What Happened

On April 4, 2026, Andrej Karpathy, former Director of AI at Tesla and a founding member of OpenAI, created and made public a GitHub repository titled "LLM Wiki." The project is described as a "community-maintained wiki for all things LLM engineering." Within two days, the repository was starred by more than 5,000 developers, signaling immediate and significant interest from the technical community.

The original repository structure, as outlined by Karpathy, was intended to cover fundamental topics like prompting, retrieval-augmented generation (RAG), fine-tuning, evaluation, deployment, and security. A section labeled "Memory / State" was included in the initial table of contents but was marked with a "TODO" placeholder, indicating planned but not yet written content.

The Community Extension

Following the repository's viral growth, a developer (username not specified in the source) has forked or extended the project to specifically address the missing memory component. The extension adds content on the "Memory lifecycle," which is a critical architectural concept for building persistent, stateful applications with LLMs (often called agents). This lifecycle typically involves processes for creating, storing, retrieving, updating, and decaying memory in conversational AI systems.
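To make these stages concrete, here is a minimal sketch of the lifecycle in Python. It is not taken from the wiki or the community extension: the `MemoryStore` class, the keyword-overlap retrieval, and the exponential half-life decay are hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    strength: float = 1.0  # 1.0 when created or updated; shrinks as time passes


class MemoryStore:
    """Toy store walking through create/store, retrieve, update, and decay.

    Hypothetical illustration: production systems usually retrieve with
    embeddings and a vector database rather than keyword overlap.
    """

    def __init__(self, half_life_s: float = 3600.0, prune_below: float = 0.1):
        self.half_life_s = half_life_s  # seconds for strength to halve
        self.prune_below = prune_below  # memories weaker than this are forgotten
        self.memories: dict[str, Memory] = {}

    def create(self, key: str, text: str) -> None:
        # Create and store a new memory at full strength.
        self.memories[key] = Memory(text=text)

    def update(self, key: str, text: str) -> None:
        # Replace the content of an existing memory and refresh its strength.
        mem = self.memories[key]
        mem.text = text
        mem.strength = 1.0

    def decay(self, elapsed_s: float) -> None:
        # Exponential decay: strength halves every half_life_s seconds.
        factor = 0.5 ** (elapsed_s / self.half_life_s)
        for mem in self.memories.values():
            mem.strength *= factor
        # Forget memories whose strength fell below the pruning threshold.
        self.memories = {
            key: mem
            for key, mem in self.memories.items()
            if mem.strength >= self.prune_below
        }

    def retrieve(self, query: str, k: int = 3) -> list[str]:
        # Score each memory by word overlap with the query, weighted by strength.
        query_words = set(query.lower().split())
        scored = []
        for mem in self.memories.values():
            overlap = len(query_words & set(mem.text.lower().split()))
            if overlap:
                scored.append((overlap * mem.strength, mem.text))
        scored.sort(reverse=True)  # highest score first
        return [text for _, text in scored[:k]]


store = MemoryStore(half_life_s=3600.0)
store.create("lang", "user prefers python for examples")
store.create("tz", "user timezone is utc")
store.update("tz", "user timezone is cet")
store.decay(elapsed_s=3600.0)  # one half-life: both strengths drop to 0.5
print(store.retrieve("which timezone does the user use"))
# → ['user timezone is cet', 'user prefers python for examples']
```

Keying each memory by a stable identifier is what makes the update stage possible in this sketch: a newer fact about the same subject overwrites the old one instead of accumulating alongside it as a contradiction.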

This is a classic open-source pattern: a high-profile project flags a gap, and the community moves quickly to fill it. The extension addresses one of the more complex and rapidly evolving areas in LLM application design.

Context: The LLM Engineering Knowledge Gap

Karpathy's initiative taps into a clear market need. As LLMs transition from chat interfaces to core components of complex software systems, engineers require deep, practical, and standardized knowledge. Best practices are still being codified, and information is scattered across research papers, blog posts, and framework documentation. A centralized, community-driven wiki aims to reduce this friction and accelerate development.

The explosive growth of the repo mirrors the trajectory of other seminal developer resources in the AI space, suggesting it may become a foundational reference.

gentic.news Analysis

This event is a textbook example of Karpathy's unique influence at the intersection of deep learning research and practical engineering. Since his departure from OpenAI in February 2024 to focus on independent projects and education, his technical tutorials and projects (like the llm.c minimal training code) have consistently garnered massive developer attention. The 5,000-star milestone in 48 hours for a wiki underscores that his credibility translates directly into community mobilization. It's a content play with the impact of a major code release.

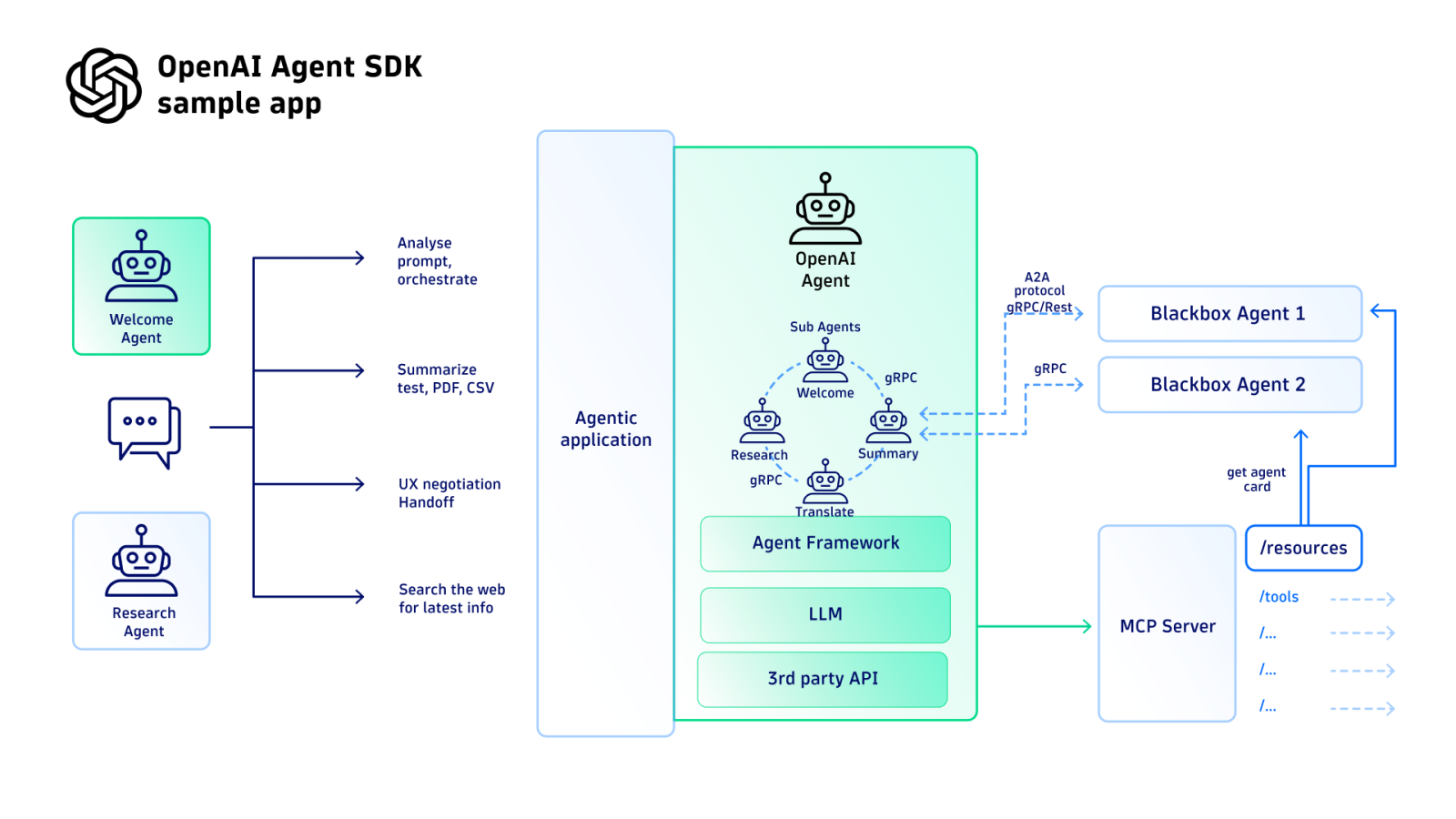

The community's immediate extension around memory lifecycle is particularly telling. As we covered in our analysis of the OpenAI "Starlight" agent framework launch last month, managing state and memory is the central technical hurdle preventing LLMs from becoming reliable, automated workflows. The fact that this was the first gap the community rushed to fill validates our previous reporting that agentic memory is the current frontier in applied AI. This aligns with increased activity from other players: LangChain and LlamaIndex have both recently shipped major memory management upgrades, and startups like Modelfarm are building entire platforms around stateful agent orchestration.

Practically, this wiki—if it maintains quality and activity—could become the MDN Web Docs for LLM engineers. Its success will depend on sustained community contributions and moderation to avoid fragmentation or staleness. For technical leaders, it's a resource to monitor and potentially contribute to, as the emerging standards documented here will shape implementation patterns across the industry.

Frequently Asked Questions

What is Andrej Karpathy's LLM Wiki?

It is a GitHub repository created by AI researcher Andrej Karpathy intended to serve as a community-maintained wiki covering practical engineering topics for large language models. It aims to be a centralized resource for concepts like prompting, fine-tuning, RAG, evaluation, and deployment.

Why did it get 5,000 stars so quickly?

Karpathy possesses significant credibility from his foundational roles at OpenAI and Tesla. Developers trust his technical judgment, creating immediate interest in any educational resource he curates. The rapid star count reflects a massive, unmet demand for consolidated, high-quality LLM engineering knowledge in a fast-moving field.

What is the "memory lifecycle" in LLMs?

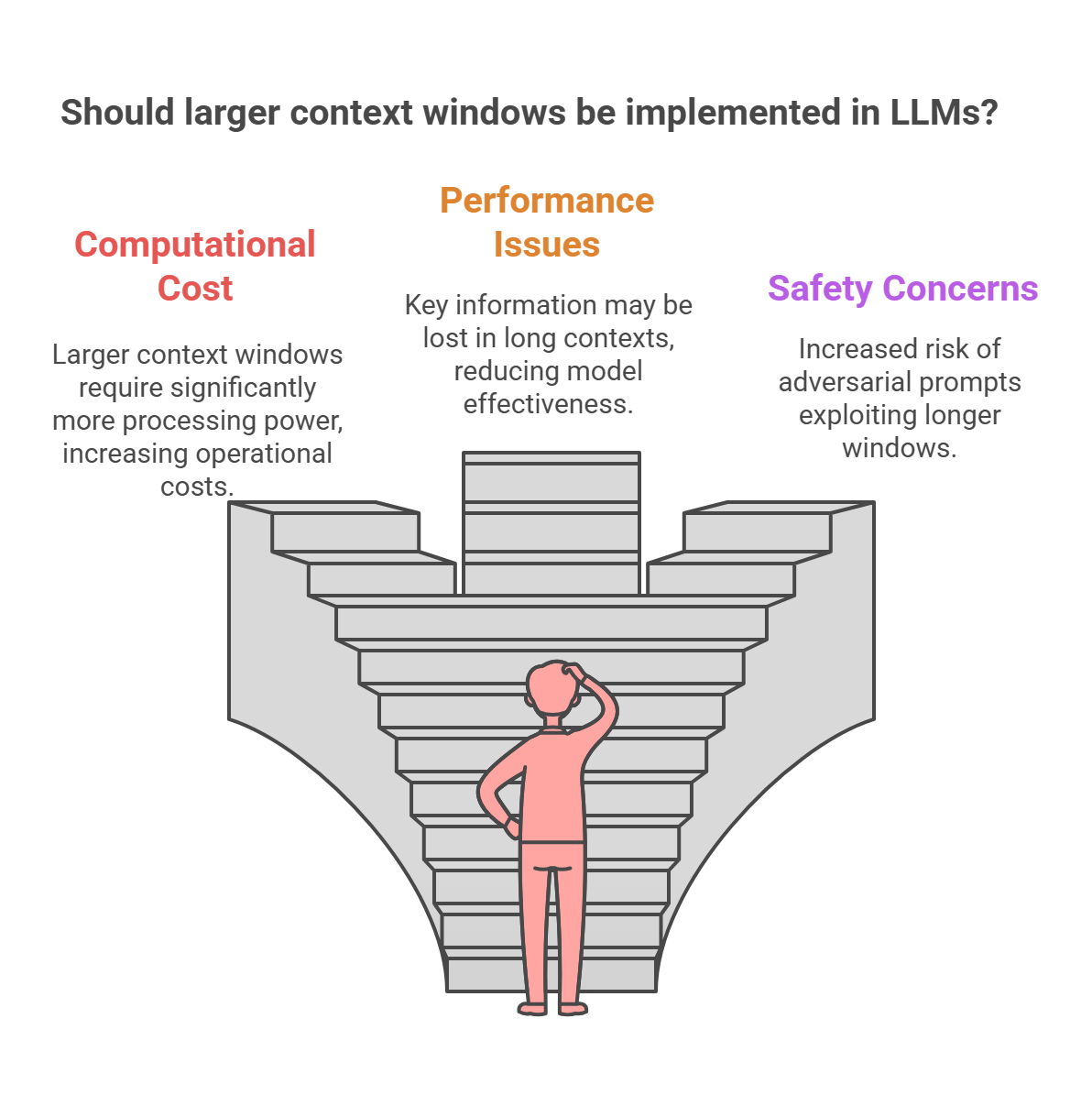

In the context of LLM applications (especially agents), the memory lifecycle refers to the end-to-end process of handling conversational or task state. This includes how memories (past interactions, facts, user preferences) are created, formatted, stored in a vector database or other storage, retrieved contextually for a given query, updated with new information, and potentially summarized or decayed over time to manage context window limits.
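The retrieval and context-budget steps can be sketched as follows. This is a minimal sketch, assuming each memory already has an embedding vector from some embedding model; the toy three-dimensional vectors, the hypothetical `cosine` and `build_context` helpers, and the word-count approximation of tokens are illustrative assumptions, not an API from the wiki or from any vector database.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    # Cosine similarity between two equal-length vectors.
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def build_context(
    query_vec: list[float],
    memories: list[tuple[str, list[float]]],
    token_budget: int,
) -> tuple[list[str], list[str]]:
    """Rank memories by similarity to the query and pack them into a budget.

    Returns (selected, overflow). Tokens are approximated by whitespace-separated
    words; a real system would count with the model's own tokenizer.
    """
    ranked = sorted(memories, key=lambda m: cosine(query_vec, m[1]), reverse=True)
    selected: list[str] = []
    overflow: list[str] = []
    used = 0
    for text, _vec in ranked:
        cost = len(text.split())
        if used + cost <= token_budget:
            selected.append(text)
            used += cost
        else:
            # Did not fit: a candidate for summarization or eviction.
            overflow.append(text)
    return selected, overflow


# Toy 3-dimensional "embeddings" (hypothetical; real ones come from an embedding model).
memories = [
    ("user is allergic to peanuts", [0.9, 0.1, 0.0]),
    ("user lives in berlin and works remotely", [0.1, 0.9, 0.1]),
    ("user asked for vegan recipes last week", [0.8, 0.2, 0.1]),
]
query_vec = [1.0, 0.0, 0.0]  # stands in for the embedding of a food-related query
selected, overflow = build_context(query_vec, memories, token_budget=12)
print(selected)  # → ['user is allergic to peanuts', 'user asked for vegan recipes last week']
print(overflow)  # → ['user lives in berlin and works remotely']
```

The loop keeps scanning after an item fails to fit, so a shorter, lower-ranked memory can still use leftover budget. The overflow list is where a summarization or decay step would take over in a fuller system.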

Is the extended wiki with memory features the official version?

Based on the source, the extension with memory lifecycle content appears to be a community-led fork or addition. The canonical repository remains Karpathy's original "LLM Wiki." Whether this extension will be merged into the main project or remain a separate community branch is not yet clear.