In a brief but pointed statement shared via social media, former OpenAI researcher and Tesla AI director Andrej Karpathy highlighted a fundamental shift he sees coming for the artificial intelligence industry. According to Karpathy, the industry "just has to reconfigure in so many ways, like the customer is not the human anymore, it's agents who are acting on behalf of humans. And this refactoring will be probably substantial in the space."

Key Takeaways

- Andrej Karpathy states the AI industry must reconfigure as AI agents become the primary customers, not humans.

- This shift will require substantial architectural and business model changes.

What Happened

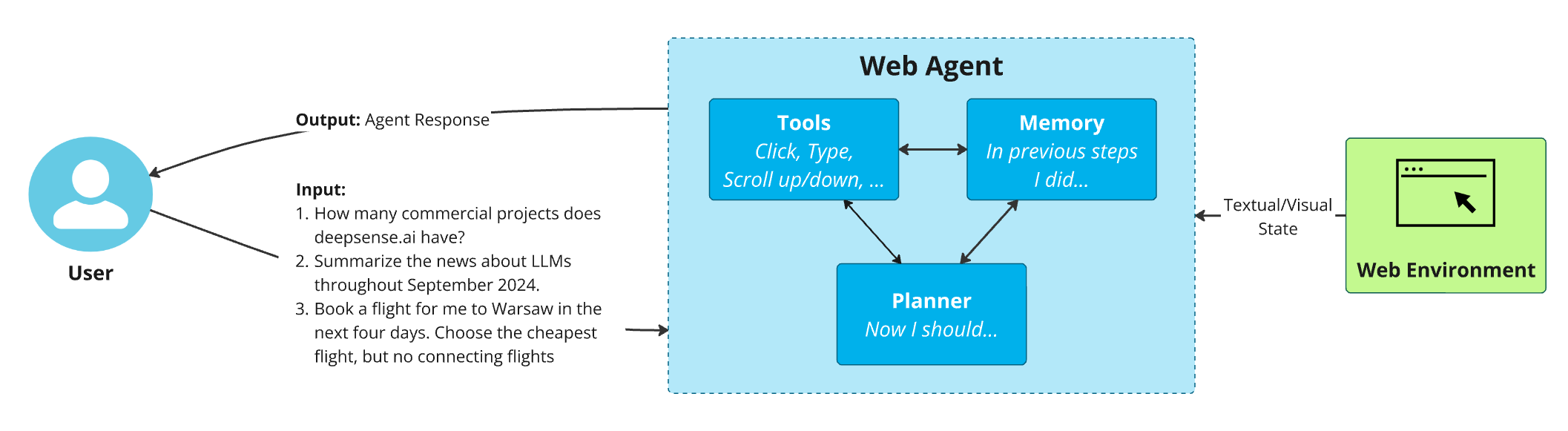

Karpathy's comment, shared by AI researcher Rohan Paul, is a concise but significant prediction about the evolution of AI infrastructure and business models. While he does not detail specific technical implementations, Karpathy points to a paradigm shift in which AI systems ("agents") become the primary consumers of other AI services, APIs, and tools, rather than human end-users directly interacting with interfaces.

Context

This perspective aligns with growing industry focus on autonomous AI agents—systems that can plan, execute multi-step tasks, and interact with digital environments with minimal human intervention. The shift implies that APIs, model architectures, evaluation metrics, and even pricing models designed for human-in-the-loop interactions may become obsolete or require significant redesign.

Current AI infrastructure—from cloud GPU provisioning to inference optimization—is largely optimized for serving human requests through chat interfaces or applications. An agent-centric future would prioritize different characteristics: reliability for long-running tasks, cost efficiency at massive scale, inter-agent communication protocols, and robustness against failure cascades.
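To illustrate why reliability matters more for long-running agent tasks than for single chat requests, here is a minimal Python sketch. It rests on a simplifying assumption that is ours, not Karpathy's: each step of a task succeeds independently with the same probability. The function name and the 99% figure are hypothetical and chosen only for illustration.

```python
def task_success_probability(per_step_success: float, steps: int) -> float:
    """Chance that every step of a task succeeds.

    Assumes steps fail independently and share one success rate,
    which real agent workloads will only approximate.
    """
    if not 0.0 <= per_step_success <= 1.0:
        raise ValueError("per_step_success must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_success ** steps


if __name__ == "__main__":
    # Hypothetical 99% per-step reliability across tasks of growing length.
    for steps in (1, 10, 50, 100):
        probability = task_success_probability(0.99, steps)
        print(f"{steps:>3} steps: {probability:.3f}")
```

Under these assumptions, a step that works 99% of the time yields an end-to-end success rate of roughly 37% over 100 steps, which is one way to see why an agent-centric stack would weight reliability so heavily.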

What This Means in Practice

If Karpathy's prediction holds, several industry segments would face transformation:

- API Design: Current RESTful APIs designed for human-paced interactions would need to evolve toward event-driven, streaming, or persistent-connection models better suited to agent consumption.

- Evaluation: Benchmarking would shift from human preference ratings (like Chatbot Arena) to objective task completion metrics measured across thousands of autonomous runs.

- Infrastructure: Cloud providers would need to optimize for sustained, predictable agent workloads rather than bursty human traffic patterns.

- Business Models: Pricing might move from per-token consumption toward subscription or compute-time models that better align with continuous agent operation.
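As a rough sketch of the evaluation point above, the following Python shows one way a task completion rate over many autonomous runs could be reported with an uncertainty range. The choice of a Wilson score interval is our assumption, not something Karpathy specified, and the function names and run counts are hypothetical.

```python
import math


def completion_rate(outcomes: list[bool]) -> float:
    """Fraction of autonomous runs that completed the task."""
    if not outcomes:
        raise ValueError("need at least one run")
    return sum(outcomes) / len(outcomes)


def wilson_interval(successes: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a completion rate (z=1.96 is about 95%)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    if not 0 <= successes <= runs:
        raise ValueError("successes must be between 0 and runs")
    p = successes / runs
    denom = 1 + z * z / runs
    center = (p + z * z / (2 * runs)) / denom
    half_width = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    # Clamp to [0, 1] to guard against floating-point overshoot.
    return max(0.0, center - half_width), min(1.0, center + half_width)


if __name__ == "__main__":
    # Hypothetical data: 1,000 runs, of which 870 completed the task.
    outcomes = [True] * 870 + [False] * 130
    rate = completion_rate(outcomes)
    low, high = wilson_interval(sum(outcomes), len(outcomes))
    print(f"completion rate: {rate:.3f} (interval {low:.3f} to {high:.3f})")
```

Unlike a human preference rating, a metric of this kind depends only on whether each run finished its task, so it can be recomputed automatically as the number of runs grows.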

gentic.news Analysis

Karpathy's observation isn't occurring in a vacuum. This follows his increased public commentary on AI infrastructure since departing OpenAI in early 2024 and aligns with his ongoing work on LLM operating systems and educational content about AI engineering. His perspective carries weight given his foundational role in developing Tesla's Autopilot and early contributions to deep learning education.

This agent-centric view connects directly to several trends we've covered. In February 2026, we reported on Google's Astra and OpenAI's o1 models, both explicitly designed for reasoning and agentic capabilities. The industry is already building infrastructure for this shift: Cognition Labs' Devin (an AI software engineer) and computer use capabilities built on OpenAI's GPT-4o are early examples of agents acting on behalf of humans. Microsoft's AutoGen framework and research into multi-agent systems further demonstrate the architectural groundwork being laid.

However, substantial challenges remain. Agentic systems today are fragile, expensive to run, and difficult to evaluate. The "substantial refactoring" Karpathy mentions will require breakthroughs in reliability, cost reduction, and safety—particularly as agents gain access to more powerful tools and real-world interfaces. This transition also raises significant questions about accountability, security, and economic impact that the industry has only begun to address.

Frequently Asked Questions

What does "agents as customers" mean?

It means AI systems (agents) will become the primary consumers of other AI services, rather than humans directly using those services. For example, instead of a human using a coding assistant, an AI project manager might coordinate multiple specialized coding agents that call various APIs to complete a software development task.

How soon will this shift happen?

Early forms are already here with AI coding assistants and research agents, but widespread adoption of complex multi-agent systems acting autonomously will likely take several years. The infrastructure, reliability, and economic models need to mature before this becomes the dominant paradigm.

What industries will be most affected?

Software development, customer service, content creation, and data analysis will likely see early transformation, as these domains have clear tasks that can be delegated to agents. Industries requiring physical interaction or high-stakes decision-making will likely adopt agentic AI more slowly.

Does this mean humans will be replaced?

Not replaced, but re-positioned. Humans will likely shift from performing individual tasks to supervising, directing, and providing high-level goals for agent systems. The most valuable skills may become agent orchestration, prompt engineering, and oversight rather than task execution.