Sam Altman, CEO of OpenAI, has outlined a vision in which the Codex Desktop ecosystem evolves into a unified AI agent capable of controlling computers and working across multiple surfaces. In a recent discussion, Altman described a future where users interact with "your single AI that's working for you on a unified backend" with access to "all your data and ideas, and the ability to work across a lot of surfaces."

What Happened

Altman's comments, shared via social media, point toward a significant evolution in how AI assistants might function. Rather than multiple specialized AI tools for different tasks, he envisions a single, integrated AI system that can:

- Operate as a unified backend service
- Access comprehensive user data and ideas
- Control computer systems directly
- Work across multiple interfaces and platforms

This represents a shift from AI coding assistants like GitHub Copilot (originally powered by OpenAI's Codex model) toward more general-purpose AI agents that can take action on behalf of users.

Context

The original Codex model, which powered GitHub Copilot at its launch, was designed as a code generation tool that suggests completions based on context. Altman's vision suggests expanding this capability beyond code generation to broader computer control and task automation.

This aligns with broader industry trends toward AI agents—systems that can perceive their environment, make decisions, and take actions to achieve goals. Recent developments from companies like Google (with its Project Astra), Microsoft (with its Copilot+ PC initiative), and Anthropic (with Claude Desktop) all point toward more integrated, proactive AI assistants.

What This Means in Practice

If realized, this vision would transform AI from a tool you query into an agent that works autonomously. Instead of asking "write code to sort this list," users might say "organize my project files" or "prepare my quarterly report"—with the AI understanding context, accessing relevant data, and executing across applications.

Frequently Asked Questions

What is Codex Desktop?

Codex Desktop refers to the ecosystem built around OpenAI's Codex technology, which originally powered GitHub Copilot and other coding assistance tools. It is designed to help developers write code more efficiently by suggesting completions and generating code snippets from natural language prompts.

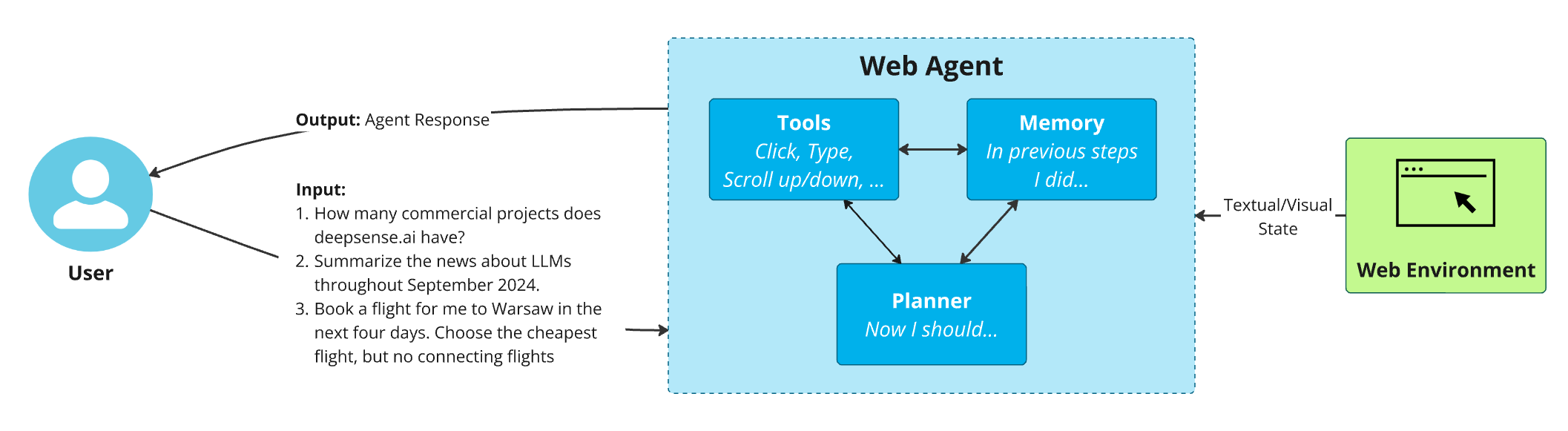

How would an AI agent control computers?

An AI agent controlling computers would likely use APIs, automation frameworks, and potentially direct system calls to interact with operating systems and applications. This could range from simple file operations to complex workflows involving multiple applications, similar to how human users interact with computers but automated and accelerated.
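
As a rough illustration of the "simple file operations" end of that range, the sketch below shows one way an agent could be limited to a small registry of tools and asked to "organize my project files." Everything here is hypothetical: `TOOLS`, `run_plan`, and `plan_organize` are names invented for this example, and `plan_organize` is a hard-coded stand-in for whatever planning a real model would do. It does not reflect any OpenAI API.

```python
import shutil
import tempfile
from pathlib import Path


def list_files(root: Path) -> list[str]:
    """Return the names of regular files directly under root, sorted."""
    return sorted(p.name for p in root.iterdir() if p.is_file())


def move_file(root: Path, name: str, folder: str) -> str:
    """Move root/name into root/folder, creating the folder if needed."""
    target_dir = root / folder
    target_dir.mkdir(exist_ok=True)
    shutil.move(str(root / name), str(target_dir / name))
    return f"{folder}/{name}"


# Hypothetical tool registry: the only actions this agent may take.
TOOLS = {"list_files": list_files, "move_file": move_file}


def run_plan(root: Path, plan: list[dict]) -> list:
    """Execute each planned step, rejecting any tool not in the registry."""
    results = []
    for step in plan:
        tool = TOOLS.get(step["tool"])
        if tool is None:
            raise ValueError(f"unknown tool: {step['tool']}")
        results.append(tool(root, **step.get("args", {})))
    return results


def plan_organize(root: Path) -> list[dict]:
    """Stand-in for a model's planner: group files by extension."""
    return [
        {
            "tool": "move_file",
            "args": {"name": name, "folder": Path(name).suffix.lstrip(".") or "other"},
        }
        for name in list_files(root)
    ]


def demo() -> list:
    """Run the plan against a throwaway directory and return the results."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "a.py").write_text("print('hi')\n")
        (root / "b.txt").write_text("notes\n")
        return run_plan(root, plan_organize(root))
```

Calling `demo()` moves `a.py` into a `py` folder and `b.txt` into a `txt` folder inside a temporary directory. The point of the registry is that the planner can only request named actions, so anything outside the list fails loudly instead of running.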

Is this different from current AI assistants like Siri or Alexa?

Yes, significantly. Current voice assistants primarily respond to specific commands and queries within limited domains. Altman's vision describes a unified AI with deep access to user data and the ability to work proactively across surfaces—more like a personal digital employee than a reactive tool.

When might we see this technology?

Altman did not provide a timeline, but the direction suggests a medium-term goal rather than an immediate release. Given OpenAI's development pace and the complexity of creating safe, reliable agents with broad system access, initial implementations might appear within one to two years, with more sophisticated versions following over time.

gentic.news Analysis

Altman's comments represent a logical evolution of OpenAI's strategy, building on their existing Codex technology while addressing the fragmentation problem in today's AI landscape. Currently, users juggle multiple AI tools—one for coding, another for writing, another for research—each with separate interfaces and limited context sharing.

This vision aligns with several trends we've been tracking: the move toward multimodal AI (systems that understand text, images, audio, and potentially actions), the development of AI agents with memory and persistence, and increasing integration between AI systems and operating environments. Microsoft's recent announcements about integrating AI directly into Windows 11 show similar thinking from a platform perspective.

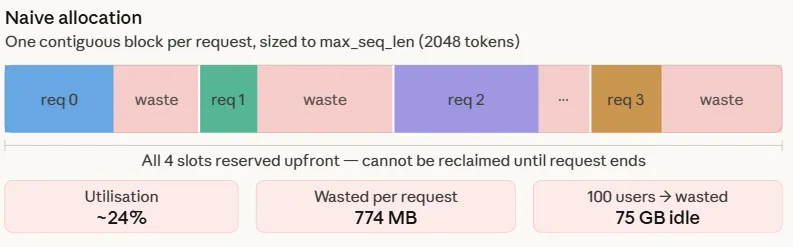

However, significant technical and safety challenges remain. Creating a unified backend that can securely access "all your data and ideas" requires solving difficult problems around privacy, security, and user control. The "ability to work across a lot of surfaces" implies solving cross-platform compatibility and developing robust action-taking capabilities that don't break or make dangerous mistakes.
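
One way to picture the "user control" problem is as a policy check that runs before every action the agent takes. The sketch below is a minimal, hypothetical model of that idea: the `Action` and `Policy` types, the scope names, and the three outcomes (`allow`, `ask_user`, `deny`) are all assumptions made for illustration, not a description of how OpenAI or any other vendor handles permissions.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Action:
    surface: str  # e.g. "files", "email", "browser"
    verb: str     # e.g. "read", "write", "delete", "send"
    target: str   # what the action applies to


@dataclass
class Policy:
    # Scopes the user has granted, as (surface, verb) pairs.
    granted: set[tuple[str, str]] = field(default_factory=set)
    # Verbs that need explicit confirmation even when the scope is granted.
    confirm_verbs: frozenset[str] = frozenset({"delete", "send"})

    def decide(self, action: Action) -> str:
        """Return "deny", "ask_user", or "allow" for a proposed action."""
        if (action.surface, action.verb) not in self.granted:
            return "deny"
        if action.verb in self.confirm_verbs:
            return "ask_user"
        return "allow"
```

With `Policy(granted={("files", "read"), ("files", "delete")})`, reading a file is allowed, deleting one is routed back to the user for confirmation, and sending email is denied because that scope was never granted. Denying by default keeps the agent's reach tied to what the user has explicitly approved.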

From a competitive standpoint, this puts OpenAI in potential competition with operating system developers (Microsoft, Apple, Google) who are also working to integrate AI deeply into their platforms. The success of this vision may depend on whether OpenAI can establish its unified AI as a layer above individual platforms or needs to partner deeply with platform providers.

Practically, developers and AI engineers should watch for several developments: OpenAI's potential release of agent APIs, improvements in Codex's ability to understand and manipulate non-code systems, and any announcements about partnerships with software or platform companies. The transition from code generation to general computer control represents a significant technical leap that will require new approaches to training, safety, and user interaction.

Source: Social media post sharing Sam Altman's comments on Codex Desktop evolution