Wharton professor and AI researcher Ethan Mollick has called OpenAI's o1 model launch "the second most important release of the LLM era (after GPT-3.5)," describing it as a pivotal moment in AI development. In a recent tweet, Mollick expressed surprise that OpenAI chose to publicly disclose what he believes is "the biggest advance in AI technology since the LLM" rather than keeping it proprietary for competitive advantage.

Key Takeaways

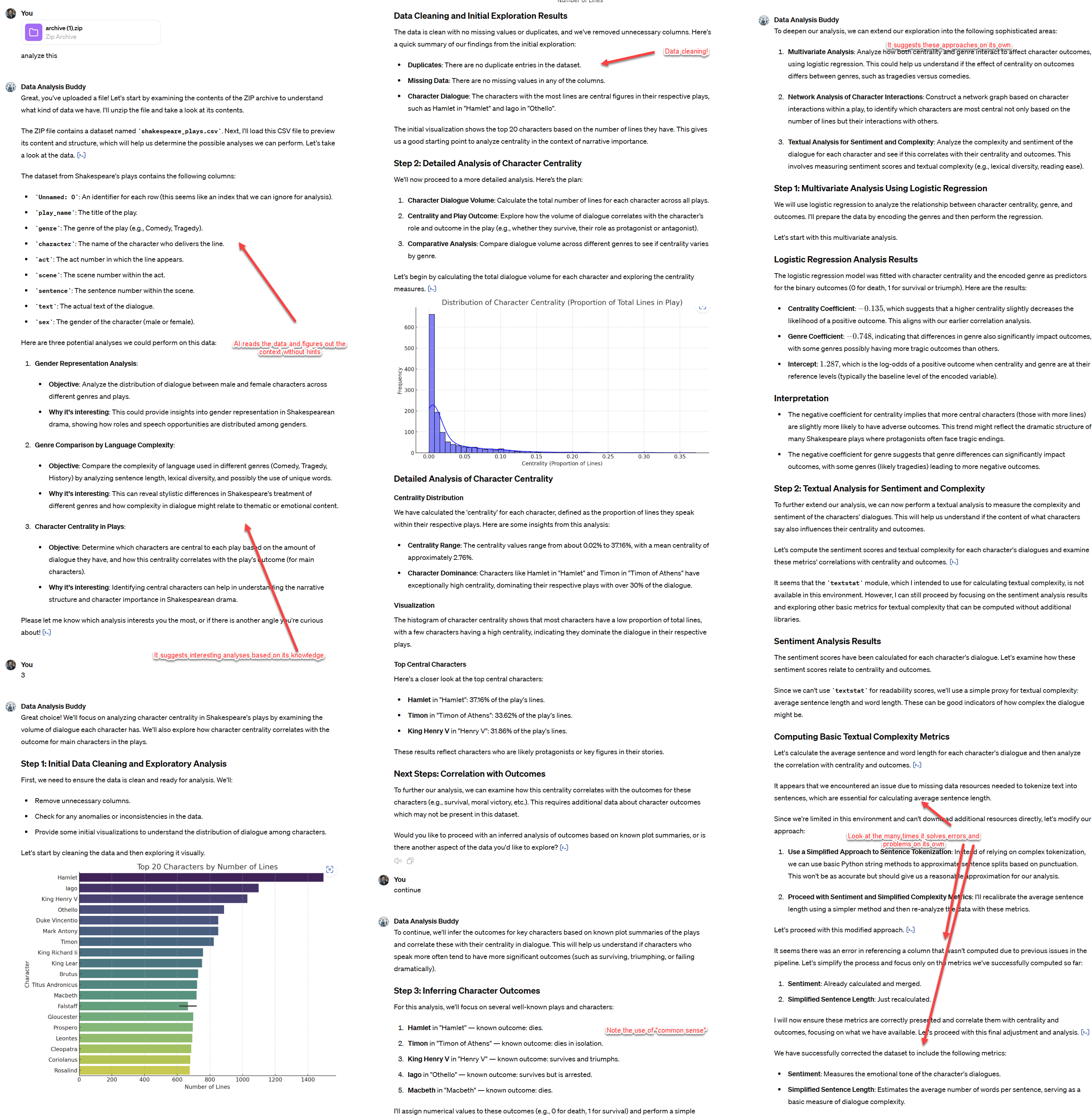

- Ethan Mollick tweeted that OpenAI's o1 launch was the second most important release of the LLM era after GPT-3.5, and pointed to a chart from the launch that he considers pivotal.

- He expressed surprise that OpenAI disclosed its biggest AI advance rather than keeping it proprietary.

What Happened

Mollick's tweet references OpenAI's o1 model family launch, which introduced a new approach to reasoning and planning in large language models. His tweet doesn't specify which chart he considers "the most important." Likely candidates are OpenAI's published benchmarks showing o1's large gains in mathematical reasoning, coding, and complex problem-solving over previous models, or the plots in the same announcement showing accuracy rising as more train-time and test-time compute is spent.

The o1 models are still language models, but they are trained with reinforcement learning to produce an extended chain of thought before answering, which enables step-by-step problem-solving. OpenAI has not published architectural details, but the approach has produced measurable improvements on benchmarks requiring multi-step reasoning, particularly in mathematics and scientific domains.

Context: The o1 Launch

OpenAI announced the o1 models in September 2024, positioning them as "reasoning models" designed to spend more time thinking before they respond. The initial release comprised two versions, o1-preview and o1-mini, and the full o1 model followed in December 2024.

Key technical aspects of the o1 approach, as publicly described, include:

- Extended reasoning time before response generation

- Improved planning capabilities for complex problems

- Enhanced mathematical reasoning surpassing previous state-of-the-art models

- Better handling of multi-step logical problems
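The first point in the list above, spending more compute at inference time, can be illustrated with a toy simulation. This is a minimal sketch and not OpenAI's method: it swaps in plain majority voting over repeated samples (often called self-consistency), and `noisy_solver`, `answer_with_budget`, `accuracy`, and the 40% error rate are all hypothetical names and numbers chosen for illustration.

```python
import random
from collections import Counter


def noisy_solver(a, b, rng, error_rate=0.4):
    """Hypothetical stand-in for a model: returns a + b, but is wrong some of the time."""
    correct = a + b
    if rng.random() < error_rate:
        # Simulate a reasoning slip by returning a nearby wrong answer.
        return correct + rng.choice([-2, -1, 1, 2])
    return correct


def answer_with_budget(a, b, rng, samples):
    """Spend more inference-time compute: sample several answers, return the most common."""
    votes = Counter(noisy_solver(a, b, rng) for _ in range(samples))
    return votes.most_common(1)[0][0]


def accuracy(samples, trials=2000, seed=0):
    """Fraction of random addition problems answered correctly at a given sample budget."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        a, b = rng.randint(0, 99), rng.randint(0, 99)
        if answer_with_budget(a, b, rng, samples) == a + b:
            hits += 1
    return hits / trials


if __name__ == "__main__":
    for budget in (1, 5, 15):
        print(f"samples={budget:2d}  accuracy={accuracy(budget):.3f}")
```

In this toy setup a single sample is right only about as often as the solver's base rate, while larger budgets push accuracy up because the correct answer tends to win the vote. It shows the general trade of extra inference-time compute for accuracy, not how o1 itself reasons.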

Why Mollick's Perspective Matters

Mollick's characterization of the o1 launch as the "second most important" after GPT-3.5 places it above other significant releases like GPT-4, Claude 3.5, and various open-source breakthroughs. This ranking suggests he views the shift toward explicit reasoning capabilities as more fundamentally transformative than incremental parameter scaling or multimodal additions.

His surprise at OpenAI's transparency raises questions about competitive strategy in the AI industry. By openly publishing its results and a high-level description of the approach, OpenAI may be attempting to:

- Establish a new benchmark for reasoning capabilities

- Accelerate overall AI safety research by sharing approaches

- Position themselves as leaders in responsible AI development

- Force competitors to play catch-up in reasoning research

The Competitive Landscape

The o1 launch occurred against a backdrop of intensifying competition in the reasoning AI space. Anthropic's Claude 3.5 Sonnet had previously set new standards for coding and reasoning, while Google's Gemini models continued to advance mathematical capabilities. OpenAI's decision to publicly share how its reasoning approach performs reflects either confidence in its lead or a strategic move to shape the direction of AI safety research.

Frequently Asked Questions

What is OpenAI's o1 model?

OpenAI's o1 is a family of "reasoning models," first released in September 2024, that spend extended time planning and deliberating before generating a response. Unlike earlier LLMs that begin answering immediately, o1 models first work through a multi-step chain of thought, and they particularly excel at mathematical problems, coding challenges, and complex logical tasks.

Why does Ethan Mollick consider o1 the second most important LLM release?

Mollick ranks o1 second only to GPT-3.5 because he believes it represents "the biggest advance in AI technology since the LLM." The shift from answering immediately to deliberate, planned reasoning changes how AI systems approach complex problems, potentially enabling new capabilities in science, mathematics, and strategic thinking.

What chart is Mollick referring to in his tweet?

While Mollick doesn't specify, he's likely referring to one of the charts OpenAI published with the launch. The candidates are the benchmark comparisons showing o1 outperforming previous state-of-the-art models by significant margins on mathematical, coding, and reasoning tasks, and the plots showing that o1's accuracy keeps improving as more compute is spent during training and at inference time.

Why would OpenAI reveal such an important advance?

OpenAI's transparency about its reasoning approach could serve several strategic purposes: establishing a new benchmark that competitors must match, advancing AI safety research by encouraging scrutiny of reasoning systems, positioning the company as a responsible leader in AI development, or accelerating overall progress in reasoning capabilities that benefits its ecosystem and partners.

gentic.news Analysis

Mollick's assessment aligns with our previous coverage of the reasoning AI trend. In our December 2025 analysis "The Reasoning Race Heats Up," we noted that 2025 marked a strategic pivot from pure scale to architectural innovation for complex cognition. The o1 launch represents the culmination of research threads we've tracked since OpenAI's "Let's Verify Step by Step" work in 2023 and the gradual integration of search and planning into language models.

This transparency strategy contrasts with OpenAI's earlier approach with GPT-4, where it published minimal technical details. The shift suggests either increased confidence in its moat or recognition that reasoning safety requires broader research community engagement. Notably, this comes as Anthropic continues advancing Constitutional AI and Google DeepMind pushes forward with AlphaGeometry-style approaches, creating a multi-front competition where architectural innovation matters as much as scale.

Practically, developers should expect reasoning capabilities to become a new battleground in enterprise AI adoption. While pure language fluency has largely plateaued for practical applications, reasoning improvements translate directly into better performance on business logic, data analysis, and complex workflow automation. The o1 approach, if successfully productized, could reshape expectations for AI assistants in technical domains.

Looking forward, we expect reasoning-focused models to dominate, so the key question is whether OpenAI's specific implementation will maintain its lead. Mollick's surprise at the company's transparency suggests he expects a rapid competitive response, potentially accelerating the next wave of AI capabilities beyond what any single company could achieve alone.