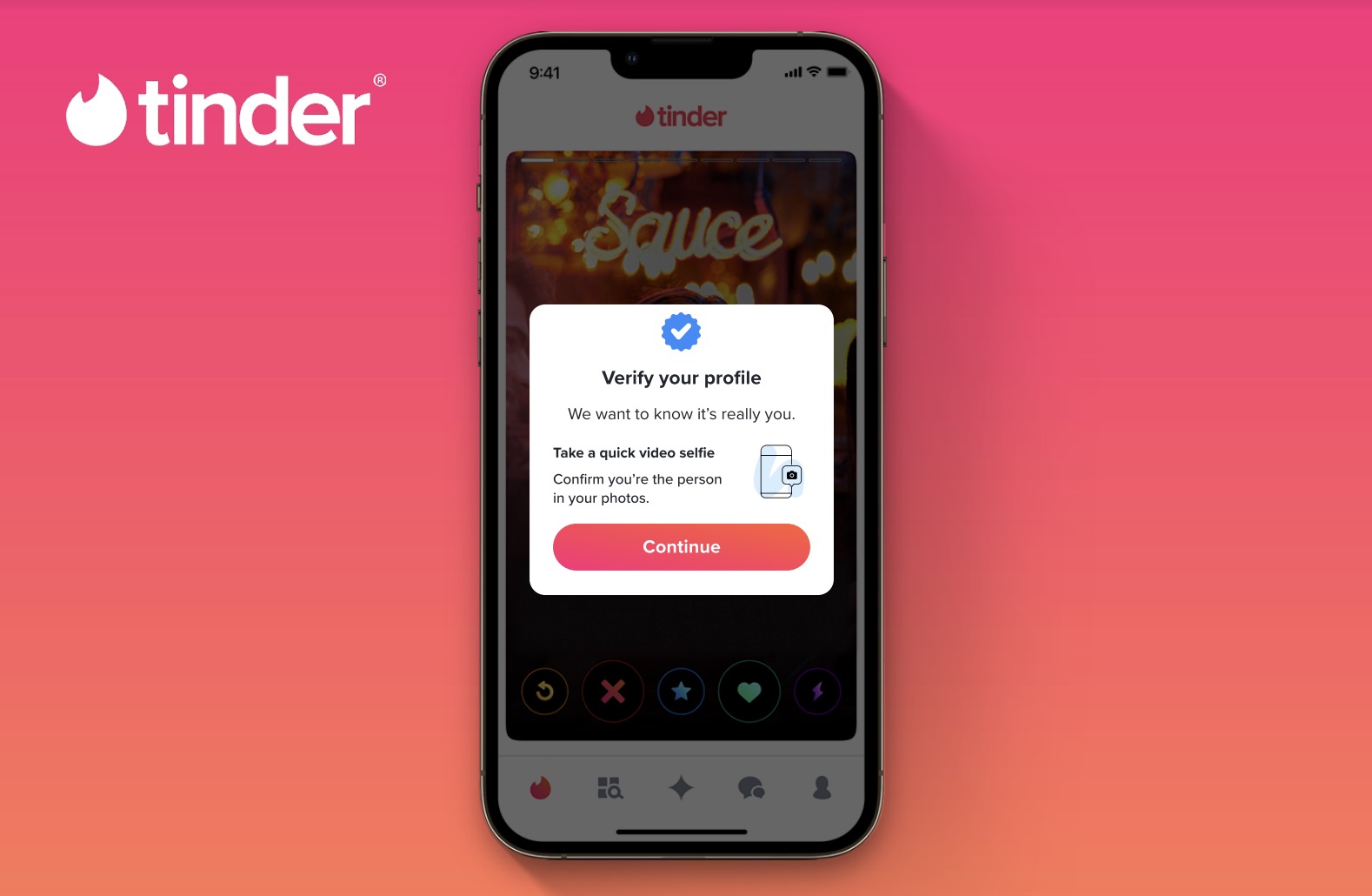

A BBC report, highlighted by AI researcher Rohan Paul, indicates that major consumer applications are moving to adopt "proof of humanity" as a standard security layer. The report names dating app Tinder and communications platform Zoom as backers of the Proof of Humanity protocol, a system that uses video selfies and biometric verification to cryptographically confirm a user is a unique human.

The push is a direct response to the escalating problem of AI-generated fake accounts, deepfakes, and automated bots, which have eroded trust and safety on digital platforms. By integrating such a protocol, platforms aim to create a foundational layer of verified human identity upon which other services can be built.

What Happened

The BBC report, as cited in the social media post, states that Tinder and Zoom have publicly backed the Proof of Humanity system. Proof of Humanity is a decentralized identity verification protocol built on the Ethereum blockchain. Users submit a video of themselves along with a small deposit, and the submission is then reviewed by already-registered users in a crowdsourced process. Successful verification adds the user to a Sybil-resistant registry, which serves as their unique digital identity, and makes them eligible to receive the protocol's "UBI" token.

This backing suggests these companies are exploring or planning to integrate this form of biometric verification, not as an optional feature, but potentially as a mandatory step for account creation or access to certain features. The goal is to create a reliable barrier against the mass creation of fake profiles by AI.

The Technical Layer: Proof of Humanity

Proof of Humanity operates on a combination of social verification, economic stake, and biometric analysis.

- Submission: A user uploads a short video stating a phrase and pays a small security deposit.

- Verification: Existing, verified users ("vouchers") can vouch for the submission's authenticity. The submission then passes through a challenge period, during which it can be disputed if it is suspected of being a duplicate or fake.

- Registry & Token: Once verified, the user's profile is added to a public, on-chain registry and they begin receiving a Universal Basic Income (UBI) token stream, incentivizing honest participation.
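
The three steps above can be read as a small state machine. The sketch below is an in-memory toy, not the protocol's actual contract code: the class and method names (`ToyRegistry`, `submit`, `vouch`, `challenge`, `finalize`), the single-vouch rule, and the deposit units are all assumptions made for illustration, and it simplifies the ordering by assuming a vouch opens the challenge window.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Status(Enum):
    SUBMITTED = auto()   # video and deposit received, awaiting a vouch
    PENDING = auto()     # vouched for; challenge window is open
    CHALLENGED = auto()  # disputed as a suspected duplicate or fake
    REGISTERED = auto()  # accepted into the registry


@dataclass
class Submission:
    applicant: str
    deposit: int  # hypothetical units, not a real token amount
    status: Status = Status.SUBMITTED
    vouchers: set = field(default_factory=set)


class ToyRegistry:
    """In-memory stand-in for an on-chain registry (illustrative only)."""

    def __init__(self, seed_humans, min_deposit=1):
        self.min_deposit = min_deposit
        # Seed users are treated as already verified so someone can vouch.
        self.submissions = {
            h: Submission(h, 0, Status.REGISTERED) for h in seed_humans
        }

    def is_registered(self, who):
        sub = self.submissions.get(who)
        return sub is not None and sub.status is Status.REGISTERED

    def submit(self, applicant, deposit):
        if applicant in self.submissions:
            raise ValueError("applicant already has a submission")
        if deposit < self.min_deposit:
            raise ValueError("deposit below minimum")
        self.submissions[applicant] = Submission(applicant, deposit)

    def vouch(self, voucher, applicant):
        if not self.is_registered(voucher):
            raise PermissionError("only registered users may vouch")
        sub = self.submissions[applicant]
        if sub.status is not Status.SUBMITTED:
            raise ValueError("submission is not awaiting a vouch")
        sub.vouchers.add(voucher)
        sub.status = Status.PENDING

    def challenge(self, applicant):
        sub = self.submissions[applicant]
        if sub.status is not Status.PENDING:
            raise ValueError("no open challenge window")
        sub.status = Status.CHALLENGED

    def finalize(self, applicant):
        # Called once the challenge window has passed without a dispute.
        sub = self.submissions[applicant]
        if sub.status is not Status.PENDING:
            raise ValueError("submission cannot be finalized")
        sub.status = Status.REGISTERED


registry = ToyRegistry(seed_humans={"alice"})
registry.submit("bob", deposit=1)
registry.vouch("alice", "bob")
registry.finalize("bob")
```

In this toy version a challenged submission simply stops progressing; how disputes are actually resolved, and what happens to the deposit, is outside what the sketch models.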

The system is designed to be Sybil-resistant, meaning it is economically and socially costly to create multiple fake identities. It is also described as privacy-preserving, since the on-chain registry stores only a hash of the user's information, not the raw video data.
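
To make the "hash, not raw data" idea concrete, here is a minimal sketch of fingerprinting a profile record. The field names and the `profile_fingerprint` helper are hypothetical, and SHA-256 is used only as a readily available stand-in; Ethereum contracts conventionally use Keccak-256, and the protocol's real record format is not described in the report.

```python
import hashlib
import json


def profile_fingerprint(profile: dict) -> str:
    """Return a hex digest of a profile record (illustrative helper)."""
    # Serialize with sorted keys so the same data always hashes the same way.
    canonical = json.dumps(profile, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical profile; only the digest would be written to a registry.
profile = {"name": "Example User", "video_ref": "example-video-reference"}
fingerprint = profile_fingerprint(profile)
```

A digest like this lets anyone holding the original record confirm it matches the registry entry, but it does not hide data that is published elsewhere, so the privacy benefit depends on where the underlying video is stored.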

The Driving Force: AI-Generated Fakery

The adoption drive is fueled by the rapidly decreasing cost and increasing quality of AI-generated synthetic media. In recent years, AI tools have made it trivial to:

- Generate photorealistic profile pictures for fake dating or social media accounts.

- Create deepfake videos for verification bypass or impersonation.

- Deploy sophisticated chatbots that mimic human interaction for scams or spam.

Traditional verification methods (SMS, email, CAPTCHAs) are increasingly inadequate against these AI-powered threats. Platforms are therefore seeking more robust, biometric-based "proof of personhood" solutions.

What This Means in Practice

If implemented as a standard login layer, users on platforms like Tinder or Zoom may soon be required to complete a one-time Proof of Humanity verification to create an account. This would:

- Drastically reduce fake bots and scam accounts on dating platforms.

- Add a layer of accountability to video communications, potentially mitigating harassment and deepfake misuse.

- Create a portable, reusable digital identity that users could employ across multiple services that support the protocol.
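
The portable-identity point in the list above can be sketched from a platform's side. This is a rough illustration under stated assumptions: `HumanityRegistry`, `InMemoryRegistry`, and `SignupGate` are hypothetical names, the registry lookup is mocked in memory rather than read from a blockchain, and the sketch skips the step of proving that the person signing up actually controls the address.

```python
from typing import Protocol


class HumanityRegistry(Protocol):
    """Anything that can answer 'is this address a verified human?'."""

    def is_registered(self, address: str) -> bool: ...


class InMemoryRegistry:
    """Mock registry; a real integration would query the shared registry."""

    def __init__(self, registered):
        self._registered = {a.lower() for a in registered}

    def is_registered(self, address: str) -> bool:
        return address.lower() in self._registered


class SignupGate:
    """Allows one account per verified address on a single service."""

    def __init__(self, registry: HumanityRegistry):
        self._registry = registry
        self._accounts = {}

    def sign_up(self, address: str, username: str) -> bool:
        key = address.lower()
        if not self._registry.is_registered(key):
            return False  # not a verified human
        if key in self._accounts:
            return False  # this human already has an account here
        self._accounts[key] = username
        return True


# Two independent services share one registry of verified humans.
shared = InMemoryRegistry({"0xAbC"})
dating_app = SignupGate(shared)
video_app = SignupGate(shared)
```

Because both gates consult the same registry, one verification could unlock accounts on each service, while each service still limits that person to a single account.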

The trade-off is between enhanced security/safety and the friction of a more invasive sign-up process, raising questions about accessibility and privacy.

gentic.news Analysis

This development is a significant inflection point in the ongoing arms race between platform security and generative AI capabilities. It validates a trend we've been tracking since the rise of deepfakes in 2023: that biometric verification will move from a high-security niche (e.g., banking) to a mainstream consumer requirement.

The backing by Tinder and Zoom is particularly telling. Tinder's parent company, Match Group, has been under intense regulatory and user pressure to rid its platforms of scams and fake profiles. Its exploration of Proof of Humanity aligns with its February 2025 announcement of a "Trust & Safety Initiative," which promised investment in advanced verification tools. Zoom, on the other hand, faces unique challenges with AI avatars and deepfakes in real-time communication, making verified human presence a core feature for enterprise trust.

This move also represents a major endorsement for decentralized identity protocols over proprietary, corporate-owned systems. By backing an open standard like Proof of Humanity, these companies are implicitly acknowledging that a universal, user-controlled identity layer may be more effective and interoperable than building isolated walls. It directly relates to our October 2025 coverage of Worldcoin's struggles with privacy concerns, suggesting the market is seeking less invasive alternatives for proof of personhood.

For AI engineers, the implication is clear: the low-cost, large-scale automation of fake identity creation is forcing a structural change in internet architecture. The next generation of consumer apps will likely be built with a verified human layer as a default assumption, changing the threat models for both attackers and defenders.

Frequently Asked Questions

What is Proof of Humanity?

Proof of Humanity is a decentralized verification system built on Ethereum. It uses submitted video selfies, social vouching from existing users, and a small economic stake to create a Sybil-resistant registry of verified human identities. It issues a Universal Basic Income (UBI) token to verified users as an incentive.

Why are Tinder and Zoom doing this?

They are responding to the massive proliferation of AI-generated fake accounts, deepfakes, and bots on their platforms. Traditional verification is no longer sufficient. Tinder needs to combat romance scams and fake profiles, while Zoom needs to ensure participants in meetings are real people and not AI deepfakes, especially in sensitive corporate or legal contexts.

Will I have to do this to use Tinder or Zoom?

The BBC report indicates these companies are "backing" the protocol, which suggests they are seriously exploring integration. It is not yet clear if verification will be mandatory for all users or only for certain high-trust features. If implemented, new users would likely need to complete a one-time verification.

Is Proof of Humanity safe? What happens to my video?

The protocol is designed to be privacy-preserving. Your raw video is not stored on the public blockchain. Instead, a cryptographic hash (a unique digital fingerprint) of your profile information is stored. The system's security relies on decentralized social consensus and economic stakes to prevent fraud.