A study from Stanford University examines one of the most significant yet under-discussed privacy issues in artificial intelligence today. Published in September 2025 under the title "User Privacy and Large Language Models," the research systematically analyzed the privacy policies and data practices of six leading AI companies: OpenAI, Google, Meta, Anthropic, Microsoft, and Amazon. The findings paint a troubling picture of default data collection, opaque consent mechanisms, and a stark privacy divide between enterprise customers and individual consumers.

The Universal Default: Opt-Out as the Exception

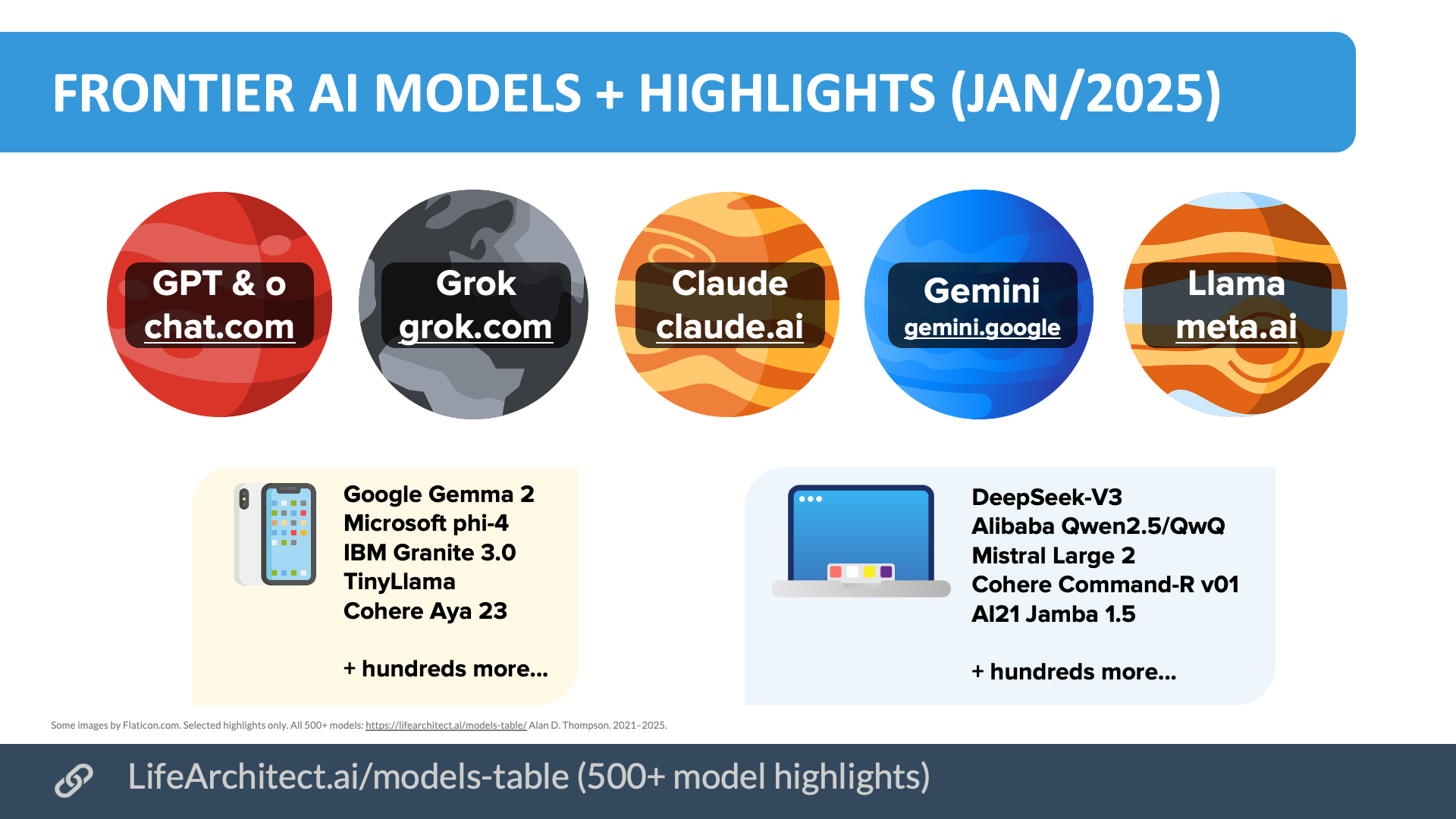

The core finding of the Stanford paper is that all six companies train their AI models on user chat data by default. Every conversation, query, or interaction a user has with assistants like ChatGPT, Gemini, Claude, or Copilot may therefore be used to improve the underlying models unless the user takes explicit steps to prevent it. The researchers noted a near-total absence of meaningful, informed consent. Users are not presented with clear, upfront choices about whether their data is used for training; instead, they are automatically enrolled in what the study describes as a pervasive data collection regime.

The Illusion of Choice and the Guiltshaming Tactic

Where opt-out mechanisms exist, the Stanford team found them to be obscure, cumbersome, or incomplete. For instance, Amazon's privacy policy reportedly makes no mention of AI training at all; the only notice appears within the chat interface itself. Meta and Google were cited as offering "zero clear opt-out routes," leaving users with no straightforward way to prevent their data from being used for model improvement.

Perhaps more psychologically manipulative is the practice the researchers termed "guiltshaming." Companies like OpenAI frame data collection as a communal benefit, using language that suggests opting out hinders the model's improvement "for everyone." This creates social pressure, making users feel responsible for the AI's progress and potentially discouraging them from exercising their privacy rights.

A Two-Tiered Privacy System

One of the most striking disparities the study uncovered is the different treatment of enterprise customers and individual consumers. Business and enterprise clients are typically opted out of training by default, and their data is protected under stricter contractual agreements. Individual consumers, in contrast, are opted in by default. The result is a privacy caste system in which corporations receive automatic protections that are denied to the public.

Indefinite Retention and Human Review Risks

The concerns extend beyond training. The paper highlights that OpenAI, Amazon, and Meta retain some user chat data indefinitely, so private conversations could persist in corporate systems with no set deletion date, creating long-term security and privacy risks. Human review of chat data poses a further danger. The researchers noted that contract workers reviewing chat transcripts for Meta could sometimes identify specific users by name from the content, undermining any expectation of anonymity.

The Special Vulnerability of Children

In a particularly alarming finding, the analysis suggests that four of the six companies appear to train their models on data from chats with children. Given the heightened legal and ethical protections required for minors' data globally (such as COPPA in the U.S. and the UK's Age-Appropriate Design Code), this practice raises serious questions about compliance and corporate responsibility.

The Fall of the Last Holdout

The trend toward opt-out-by-default solidified in late 2025. Anthropic, long praised for its principled stance of requiring opt-in consent for training data, reversed its policy in September 2025, switching to an opt-out model. With this shift, none of the six companies studied offers a privacy-preserving default to consumers, marking a consolidation of industry practice that prioritizes data acquisition over user choice.

The Path Forward: Demanding Transparency and Control

The Stanford study serves as a crucial wake-up call for regulators, users, and the tech industry itself. It underscores the urgent need for:

- Clear, upfront consent: Moving away from buried settings and toward explicit, informed choice.

- Universal opt-out ease: Making privacy controls as accessible for individuals as they are for enterprises.

- Stricter data retention policies: Implementing meaningful data minimization and deletion schedules.

- Enhanced regulatory scrutiny: Particularly concerning the handling of data from vulnerable groups like children.

As large language models become further embedded in daily life, the ethics of their data diet can no longer be an afterthought. The Stanford paper reveals that the current paradigm is built on a foundation of extracted, often non-consensual, human interaction. Building trustworthy AI requires not just more data, but more respect for the people behind it.

Source: "User Privacy and Large Language Models," Stanford University, September 2025, as highlighted in analysis by @hasantoxr.