Qwen released Qwen-Scope, an interpretability toolkit adding Sparse Autoencoders to Qwen3.5-27B. The toolkit exposes 81k features across 64 layers for steerable inference and mechanistic analysis.

Key facts

- Qwen-Scope adds SAEs to Qwen3.5-27B

- 81k features across 64 layers exposed

- Hosted on Hugging Face with open weights

- Enables steerable inference and mechanistic analysis

- No benchmark results or feature quality metrics disclosed

Qwen-Scope applies Sparse Autoencoders (SAEs) to Qwen3.5-27B, a 27-billion-parameter model from the Qwen family. The toolkit identifies 81,000 interpretable features distributed across all 64 transformer layers, enabling researchers to trace which internal activations drive specific outputs.

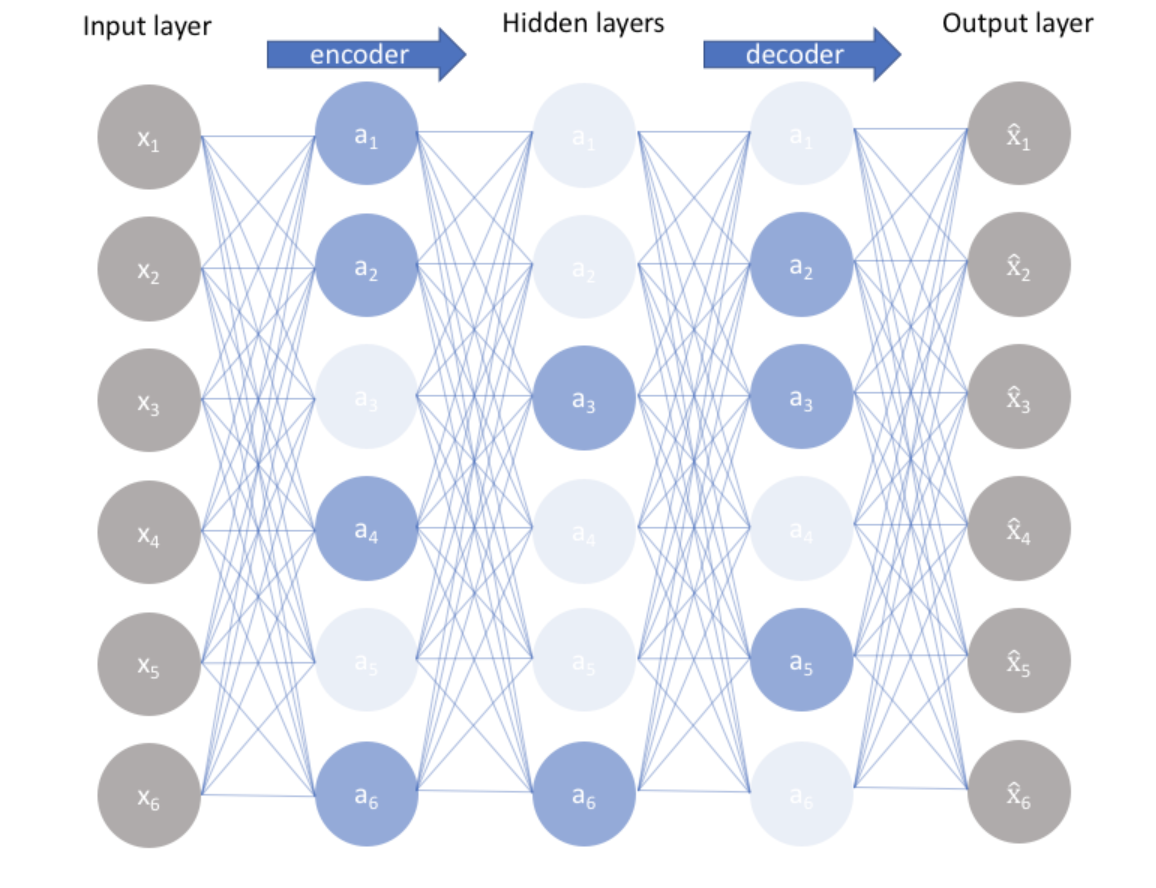

SAEs decompose model activations into sparse, human-interpretable components. Unlike prior work focused on smaller models (e.g., GPT-2 Small), Qwen-Scope scales feature discovery to a 27B-parameter architecture. The release includes pre-trained SAE weights, inference scripts, and a steering interface for modifying model behavior via feature manipulation.

The toolkit is hosted on Hugging Face [According to @HuggingPapers]. No benchmark results or feature quality metrics were disclosed, though the feature count—81k—is comparable to recent Anthropic work on Claude 3 Sonnet (millions of features) but at a smaller model scale. The key differentiator is openness: Qwen provides weights and code, whereas Anthropic's SAE research on Claude remains proprietary.

Why This Matters

This release makes mechanistic interpretability practical for a frontier open-weight model. Previously, SAE-based steering was limited to sub-10B models or required significant compute to train from scratch. Qwen-Scope lowers the barrier for researchers to experiment with feature-level control on a model competitive with Llama 3.1-70B on several benchmarks.

What's Missing

Qwen did not release training details, feature quality evaluations, or ablation studies. The 81k feature count is modest compared to Anthropic's reported millions, but Qwen may have prioritized coverage over density. No steering examples or output quality metrics were provided, making it difficult to assess real-world utility.

What to watch

Watch for community benchmarks on steering effectiveness—whether Qwen-Scope enables reliable output control (e.g., jailbreak prevention, style modulation) without degrading model quality. Also monitor if Anthropic or Google release comparable open SAE toolkits for their models.