What Happened

A new research paper, "Towards Transfer-Efficient Multi-modal Sequential Recommendation with State Space Duality," introduces MMM4Rec (Multi-Modal Mamba for Sequential Recommendation). This framework is designed to solve a core problem in modern recommendation systems: efficiently leveraging multi-modal data (like images and text) to predict a user's next action based on their historical sequence of interactions.

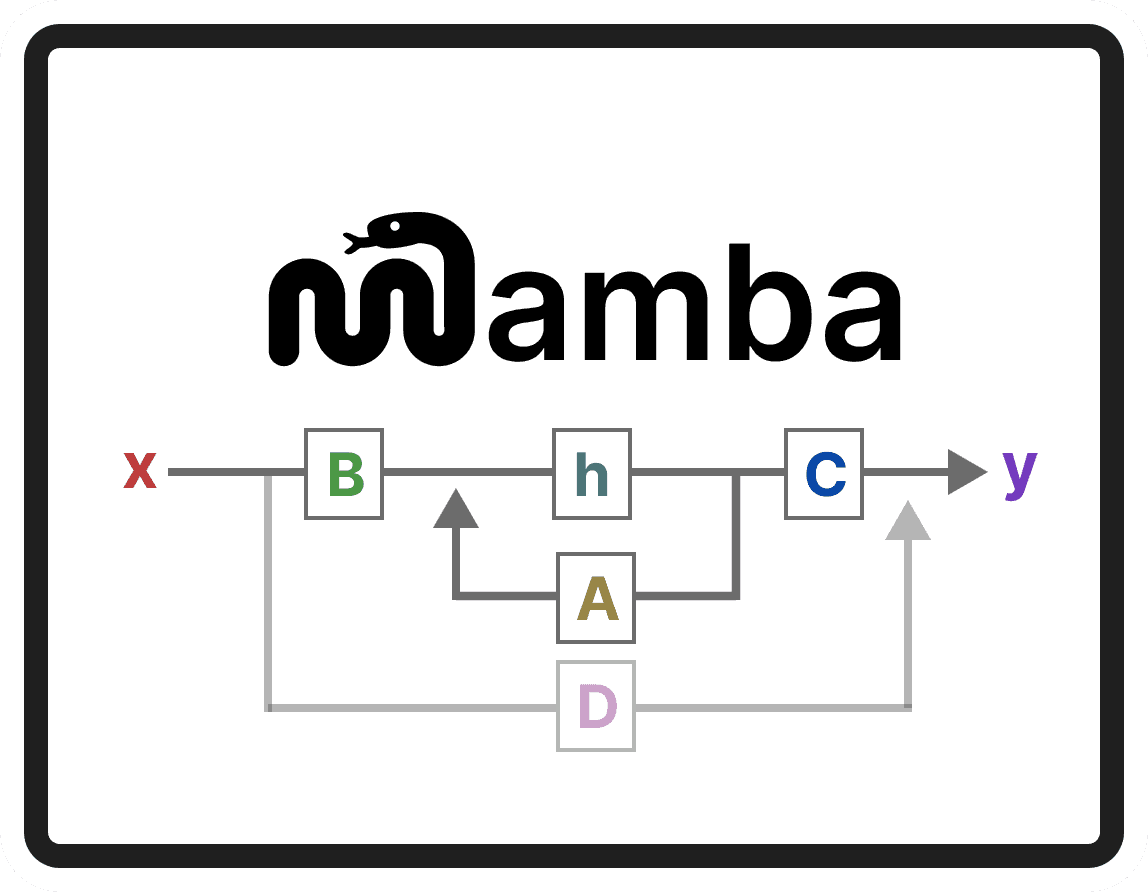

The core innovation replaces the standard Transformer backbone with a State Space Model (SSM) built on State Space Duality (SSD). The authors argue that existing multi-modal sequential recommendation models, while powerful, suffer from "slow fine-tuning convergence" and "negative transfer effects" when a pre-trained model is adapted to a new, specific dataset or task. This makes them expensive and slow to deploy.

MMM4Rec's architecture is a constrained two-stage process:

- Sequence-Level Cross-Modal Alignment: Uses shared projection matrices to create a unified representation from different data types (e.g., product image embeddings and text descriptions).

- Temporal Fusion: Employs a novel Cross-SSD module combined with dual-channel Fourier adaptive filtering. This design is key: it lets the model dynamically prioritize the most relevant modality information at each step in a user's sequence while suppressing noise. The SSD component provides inherent temporal decay properties, helping the model focus on recent, relevant interactions.
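To make the alignment stage more concrete, here is a minimal sketch of what sharing one projection across modalities can look like. It is not the paper's implementation: the matrix `W_SHARED`, the toy embeddings, and the helper names are all hypothetical, and it assumes both encoders emit vectors of the same width.

```python
import math

def matvec(W, x):
    """Multiply a (d_out x d_in) matrix, stored as a list of rows, by a vector."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]

def project_sequence(W_shared, seq):
    """Apply the same projection to every item embedding in a sequence."""
    return [matvec(W_shared, x) for x in seq]

def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm

# Hypothetical 2x3 projection shared by both modalities (illustrative values).
W_SHARED = [[1.0, 0.0, 0.5],
            [0.0, 1.0, 0.5]]

# Toy embeddings for a 2-item history; real ones would come from pretrained
# image and text encoders (assumed here to have the same output width).
image_seq = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
text_seq = [[0.9, 0.1, 0.0], [0.1, 0.8, 0.2]]

image_proj = project_sequence(W_SHARED, image_seq)
text_proj = project_sequence(W_SHARED, text_seq)

# Per-step agreement between the two modalities in the shared space.
alignment = [cosine(u, v) for u, v in zip(image_proj, text_proj)]
```

Because both modalities pass through the same matrix, their outputs live in one space and can be compared or fused step by step, which is the property the temporal fusion stage relies on.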

The reported results are notable. According to the authors, the model achieves state-of-the-art recommendation accuracy on benchmark datasets while converging on average 10x faster when transferred to large-scale downstream tasks. Crucially, it achieves this rapid fine-tuning with a simple cross-entropy loss, sidestepping the complex optimization schemes often required by Transformer-based counterparts. The code is publicly available on GitHub.
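For readers unfamiliar with the objective, a plain cross-entropy loss for next-item prediction can be sketched as follows. This is a generic illustration, not code from the MMM4Rec repository; the function name and the toy scores are assumptions.

```python
import math

def next_item_cross_entropy(scores, target_index):
    """Softmax cross-entropy for one next-item prediction.

    scores: one unnormalized score per candidate item.
    target_index: position of the item the user actually interacted with next.
    """
    m = max(scores)  # subtract the max for numerical stability
    log_norm = m + math.log(sum(math.exp(s - m) for s in scores))
    return log_norm - scores[target_index]

# With four equally scored candidates the loss is log(4).
uniform_loss = next_item_cross_entropy([0.0, 0.0, 0.0, 0.0], 2)

# Scoring the true item well above the others drives the loss toward zero.
confident_loss = next_item_cross_entropy([0.0, 0.0, 5.0, 0.0], 2)
```

The appeal of this objective is that it needs no auxiliary terms or staged schedules: the only inputs are the model's scores and the observed next item.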

Technical Details

At its heart, this research is about architectural efficiency for a specific class of problem: Sequential Recommendation (SR). Traditional SR models often rely on IDs, but multi-modal SR incorporates visual and textual features from items, which is richer but computationally harder to unify over time.

- The Problem with Transformers: Transformers underpin most modern sequence models, but the cost of their self-attention mechanism grows quadratically with sequence length. For modeling long user histories with rich multi-modal data, this can be computationally prohibitive, especially during the fine-tuning phase for new applications.

- The Mamba/SSM Alternative: State Space Models (SSMs), like Mamba, offer linear-time complexity for sequence modeling. MMM4Rec builds on this by adopting State Space Duality (SSD), the formulation underlying Mamba-2, which the authors use to create a "globally-aware temporal modeling design." The "Cross-SSD" module is their novel contribution for fusing information across modalities within this efficient SSM framework.

- The Transfer Learning Bottleneck: The paper directly addresses the practical hurdle of transfer learning. A model pre-trained on a massive, generic dataset (e.g., all Amazon products) must be fine-tuned for a specific, smaller domain (e.g., a luxury fashion retailer's catalog). This process is where existing models slow down. MMM4Rec's design, with its algebraic constraints and SSD-based fusion, is engineered to make this adaptation step dramatically faster and more stable, mitigating "negative transfer" where pre-trained knowledge harms performance on the new task.
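The contrast between quadratic attention and a linear-time SSM, and the "duality" that links them, can be illustrated with a toy example. The sketch below assumes a scalar state and per-step scalars `a`, `b`, `c`, which is a deliberate simplification; it does not reproduce the paper's Cross-SSD module. It computes the same output two ways: as a linear-time recurrence and as a multiplication by a lower-triangular, attention-like matrix.

```python
def ssm_recurrent(a, b, c, x):
    """Linear-time scan: h_t = a_t * h_{t-1} + b_t * x_t,  y_t = c_t * h_t."""
    h, ys = 0.0, []
    for a_t, b_t, c_t, x_t in zip(a, b, c, x):
        h = a_t * h + b_t * x_t
        ys.append(c_t * h)
    return ys

def ssm_matrix_form(a, b, c, x):
    """Quadratic-time dual: y = M x with a lower-triangular M.

    M[t][s] = c_t * (a_{s+1} * ... * a_t) * b_s for s <= t, and 0 otherwise.
    """
    ys = []
    for t in range(len(x)):
        total, decay = 0.0, 1.0
        for s in range(t, -1, -1):       # walk from the newest input to the oldest
            total += c[t] * decay * b[s] * x[s]
            decay *= a[s]                # extend the product for the next, older s
        ys.append(total)
    return ys

# A single interaction at step 0 fades geometrically when every a_t is 0.5.
ones = [1.0] * 4
impulse = ssm_recurrent([0.5] * 4, ones, ones, [1.0, 0.0, 0.0, 0.0])
# impulse == [1.0, 0.5, 0.25, 0.125]

# Both forms agree on arbitrary inputs (up to floating-point rounding).
a = [0.9, 0.8, 0.7, 0.6]
b = [1.0, 0.5, 2.0, 1.5]
c = [0.3, 1.0, 0.7, 2.0]
x = [1.0, -2.0, 0.5, 3.0]
y_scan = ssm_recurrent(a, b, c, x)
y_matrix = ssm_matrix_form(a, b, c, x)
```

The impulse response shows the temporal decay property in miniature: with decay factors below 1, older interactions contribute less to the current output. The recurrence needs one pass over the sequence, while the matrix form touches every past step for every output, which is where the quadratic cost comes from.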

Retail & Luxury Implications

The potential applications for a high-performance, transfer-efficient multi-modal sequential recommender in retail are direct and substantial. However, it's critical to assess the gap between a promising arXiv paper and a production-ready system.

How This Could Apply:

- Hyper-Personalized Discovery: For a luxury client with a long purchase and browsing history, MMM4Rec could theoretically model the evolution of their taste with exceptional nuance. By jointly analyzing the sequence of items they've viewed (IDs), the aesthetics of those items (visual embeddings from product shots), and the language used to describe them (text embeddings), the model could predict the next item they'd desire with high accuracy, powering "For You" feeds and personalized email campaigns.

- Efficient Catalog Onboarding: A brand launching a new sub-line or acquiring another brand faces the challenge of integrating a new catalog into its existing recommendation ecosystem. MMM4Rec's claimed 10x faster fine-tuning convergence suggests it could be adapted to this new data domain much more quickly than current models, reducing time-to-value.

- Cross-Modal Search & Retrieval: The framework's strong "multi-modal retrieval capability" could enhance search systems. A user searching with an image ("find items like this") or an abstract text query ("elegant evening wear for gala") could be matched more precisely to items in the catalog based on a unified understanding of visual and textual semantics, learned from sequential user behavior.

The Reality Check:

This is a pre-print on arXiv, not a peer-reviewed publication or a deployed product. The 10x speedup claim, while compelling, needs validation on real-world, proprietary retail datasets, which often have unique noise, sparsity, and business rule constraints not present in academic benchmarks. Implementing MMM4Rec would require a team with deep expertise in SSMs/Mamba and multi-modal learning, a skillset that is still specialized. The computational savings are primarily during fine-tuning; the initial pre-training on a large multi-modal dataset would remain a significant investment.

For a luxury retailer, the ultimate value would hinge on the model's ability to capture the subtle, non-linear evolution of high-value customer taste and on its seamless integration with existing CRM and e-commerce infrastructure. The paper points in a promising direction, but the journey to production is non-trivial.