March 2026: According to a post on X, three new problems from the extensive collection attributed to Paul Erdős have been solved "by an internal model at OpenAI." If verified, the claim would mark a significant milestone in applying artificial intelligence to frontier problems in pure mathematics.

What Happened

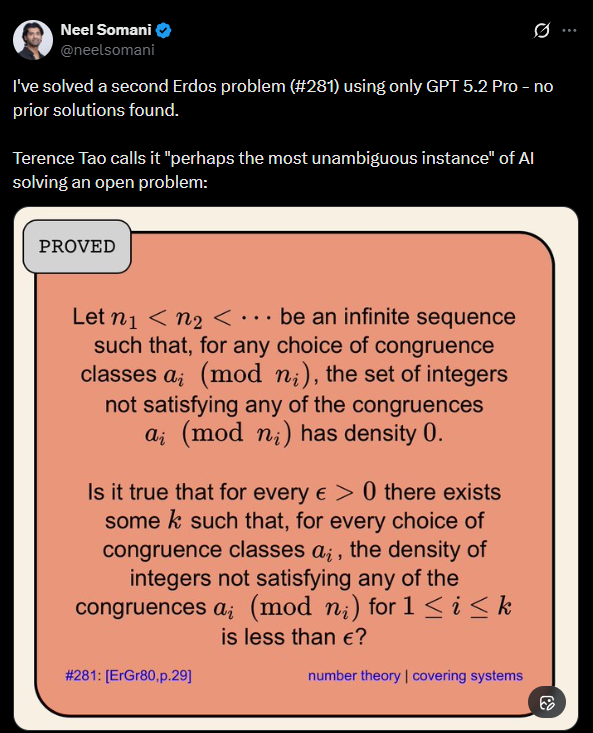

On March 28, 2026, X user @kimmonismus posted: "Three new Erdos problems were solved 'by an internal model at OpenAI'." The post gives no technical details, benchmarks, or verification. It implies that an AI system developed internally at OpenAI produced proofs or other resolutions for three problems from the famous collection of conjectures and open questions associated with the prolific mathematician Paul Erdős.

Context: The Erdős Problems

Paul Erdős (1913–1996) was one of the most prolific mathematicians of the 20th century, known for his collaborative work across number theory, combinatorics, graph theory, and more. He famously offered monetary prizes for solutions to hundreds of problems, ranging from small sums to larger rewards for particularly difficult conjectures. The collection, often referred to as "Erdős problems," represents some of the most intriguing and challenging open questions in discrete mathematics. Solving any of them is considered a notable achievement within the mathematical community.

AI-assisted theorem proving has been a growing research area. OpenAI's own GPT-f (2020) showed early potential by generating proofs of existing theorems in the Metamath formal system, and its follow-up, lean-gptf, brought the approach to the Lean theorem prover. DeepMind's FunSearch (2023) used LLMs to discover new algorithms and mathematical constructions. DeepMind's AlphaGeometry (2024) solved Olympiad-level geometry problems. Solving previously open Erdős problems, however, would represent a qualitative jump in complexity and abstraction.

The Current State of AI Theorem Proving

Prior to this claim, the public state-of-the-art in AI for mathematics involved:

- Formalizing existing proofs in interactive theorem provers (ITPs) like Lean, Isabelle, or Coq.

- Solving curated competition problems (e.g., IMO, AMC) within known domains.

- Assisting human mathematicians in exploring conjecture spaces and finding counterexamples.
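The counterexample-finding role in the last item can be illustrated with a deliberately classic case that is unrelated to the OpenAI claim. Euler's polynomial n^2 + n + 41 yields primes for many small n, which might suggest it always does. A short exhaustive search finds the first failure. The Python below is a minimal sketch: it uses simple trial division, and the function names are illustrative.

```python
from itertools import count


def is_prime(k: int) -> bool:
    """Trial-division primality test; adequate for the small values searched here."""
    if k < 2:
        return False
    d = 2
    while d * d <= k:
        if k % d == 0:
            return False
        d += 1
    return True


def first_counterexample(poly) -> int:
    """Return the smallest n >= 0 for which poly(n) is not prime.

    Loops forever if no counterexample exists, so only use it on
    conjectures expected to fail.
    """
    return next(n for n in count() if not is_prime(poly(n)))


if __name__ == "__main__":
    n = first_counterexample(lambda n: n * n + n + 41)
    print(n, n * n + n + 41)  # prints: 40 1681  (1681 = 41 * 41)
```

Real research searches are vastly larger and usually exploit the structure of the problem, but they follow the same generate-and-check pattern.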

A verified solution to an open Erdős problem by an AI would shift the field from "assistance" and "formalization" to genuine discovery at the research frontier.

What We Don't Know (Yet)

The source is a single social media post, and it does not say whom it is quoting. Critical details are missing:

- Which specific Erdős problems were solved? The difficulty and field (number theory, combinatorics, etc.) matter greatly.

- What is the "internal model"? Is it a fine-tuned version of o1, a new architecture, or a specialized theorem-proving agent?

- What is the nature of the "solution"? Is it a formal, machine-verified proof? A sketch convincing to human mathematicians? A counterexample to a conjecture?

- Has the work been peer-reviewed or formally verified? Mathematical claims require rigorous scrutiny.

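OpenAI has made no official announcement. Its earlier public work on mathematics includes GPT-f and the 2023 study "Let's Verify Step by Step." That study compared process supervision, which rewards each reasoning step, with outcome supervision, which rewards only the final answer. The company's recent focus has been the o1 family of reasoning models. These are trained with large-scale reinforcement learning to work through long chains of thought, a capability highly relevant to mathematical proof.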

Potential Implications

If true, this development would have immediate ramifications:

- For AI Research: It would demonstrate that advanced reasoning models can navigate the complex, abstract search space of pure mathematics, where reproducing surface patterns from training data is unlikely to be enough.

- For Mathematics: It could accelerate the pace of discovery in certain fields, acting as a collaborative partner that can exhaustively check paths or propose novel constructions.

- For OpenAI: It would validate the company's investment in reasoning-focused architectures and could preview a new research tool or product.

Next Steps for Verification

The mathematical community will expect:

- A preprint or publication detailing the problems, methods, and proofs.

- Formal verification of the proofs in an ITP, or thorough peer review by domain experts.

- Clarification from OpenAI on the model and methodology used.
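Formal verification in an ITP works as follows. In a system such as Lean 4, both the theorem statement and its proof are code, and Lean accepts the proof only if its kernel type-checks it. The toy example below illustrates this. It is not an Erdős problem and not OpenAI's work, and it assumes only Lean 4 core with its built-in `omega` tactic for linear arithmetic.

```lean
-- Toy statement (not an Erdős problem): the sum of two even natural numbers is even.
-- Lean accepts the theorem only if the proof after `by` type-checks.
theorem even_add_even (m n : Nat) (hm : m % 2 = 0) (hn : n % 2 = 0) :
    (m + n) % 2 = 0 := by
  omega  -- decision procedure for linear Nat/Int arithmetic, including `%` by a literal

-- Lists the axioms the accepted proof ultimately depends on.
#print axioms even_add_even
```

A formal proof of an Erdős problem would follow the same pattern. Its statement would be longer and typically built on a library such as Mathlib, and its proof would be far longer.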

Until then, the claim remains a notable but unverified signal of private progress.

gentic.news Analysis

This report, while thin on details, fits directly into two major, converging trends we've been tracking since 2024. First, it represents the logical endpoint of the reasoning-model trajectory OpenAI began with its o1 series. When o1 launched in late 2024, its key differentiator was large-scale reinforcement learning on long chains of thought. That training approach was explicitly aimed at complex, multi-step problems like those in mathematics and coding. If an internal successor to o1 has cracked Erdős problems, it validates that architectural bet in the most demanding domain possible.

Second, this connects to the ongoing, quiet competition between AI labs in scientific discovery. DeepMind's AlphaFold (2021) set the standard in biology. In 2025, both Google DeepMind and Anthropic published work on AI for materials science and partial differential equations. OpenAI has been less visible in this "AI for Science" publishing arena. This leak suggests it has been pursuing frontier mathematics as a high-prestige, pure-science target. The likely aim is a strategic benchmark that demonstrates a leap in reasoning capability over rivals. It echoes the dynamic of the LLM benchmark wars, shifted to a domain with clearer, verifiable intellectual milestones.

Furthermore, the mention of an "internal model" is key. It implies this capability is not yet in a public-facing product like ChatGPT but is being developed in a research division. This pattern mirrors how GPT-4 was evaluated internally on the bar exam and AP tests before its launch. Solving Erdős problems could be a similar internal milestone for a future "o2" or another reasoning-focused system. The leak itself may be a strategic move to shape perceptions of OpenAI's technical lead amid increasing competition from well-funded open-source efforts and other giants.

Frequently Asked Questions

What are Erdős problems?

Erdős problems are the many conjectures and questions posed by the prolific mathematician Paul Erdős, primarily in number theory, combinatorics, and graph theory. Many of them remain open. He often attached monetary prizes, usually small, to their solution. They range in difficulty but are generally considered non-trivial research-level problems. Solving one is a recognized achievement in the mathematical community.

Has AI solved open math problems before?

Yes, but at a different scale. In 2023, DeepMind's FunSearch discovered new constructions of large cap sets, improving on the best previously known results for the cap set problem, a long-standing open question in combinatorics. In 2024, DeepMind's AlphaGeometry solved Olympiad-level geometry problems. However, those were competition problems with known solutions rather than open questions. A verified solution to a classic Erdős problem would be among the most prestigious pure-mathematics achievements by an AI system to date, given the problems' fame and abstract nature.
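FunSearch paired LLM-generated programs with an automatic evaluator that scored each candidate construction. Below is a minimal sketch of the validity check such an evaluator needs for the cap set problem. It is written in Python purely as an illustration, not DeepMind's code, and the names `is_cap_set` and `Vector` are hypothetical. A cap set in Z_3^n is a set of vectors with no three distinct points on a line. Three distinct points x, y, z are collinear exactly when x + y + z ≡ 0 (mod 3) in every coordinate.

```python
from itertools import combinations

Vector = tuple[int, ...]  # a point of Z_3^n, coordinates in {0, 1, 2}


def is_cap_set(vectors: list[Vector]) -> bool:
    """True if the vectors are distinct and no three of them lie on a line in Z_3^n."""
    points = set(vectors)
    if len(points) != len(vectors):
        return False  # repeated points are not allowed
    for x, y in combinations(vectors, 2):
        # For distinct x and y, the third point on their line is z = -(x + y) mod 3,
        # and it always differs from both x and y.
        z = tuple((-(a + b)) % 3 for a, b in zip(x, y))
        if z in points:
            return False
    return True


if __name__ == "__main__":
    square = [(0, 0), (0, 1), (1, 0), (1, 1)]  # a cap set of size 4 in Z_3^2
    print(is_cap_set(square))             # True
    print(is_cap_set(square + [(2, 2)]))  # False: (0,0), (1,1), (2,2) are collinear
```

The check costs one set lookup per pair of points. A search would score each valid candidate by its size; in two dimensions, 4 is the maximum possible.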

What model did OpenAI likely use?

Based on OpenAI's public releases, the most likely candidate is an advanced, unreleased version of its o1 model family. The o1 models were trained with large-scale reinforcement learning to reason through long chains of thought before answering. They have shown strong performance on mathematics benchmarks such as MATH and AIME. An internal version, potentially scaled up and trained with a theorem-proving objective, could have the necessary multi-step deduction and abstraction capabilities. It is unlikely to be a standard conversational GPT variant.

When will we get official confirmation or details?

There is no timeline. OpenAI may choose to publish a paper, integrate the capability into a future product announcement (like a potential o2), or remain silent. The mathematical community will require peer-reviewed publication or formal verification before accepting the claims. If the solutions are correct, pressure for publication will be high, suggesting details may emerge within the next 3-12 months.